Managing 8 Rome CPUs in 1U: Cray’s Shasta Direct Liquid Cooling

by Ian Cutress on November 19, 2018 5:00 PM EST - Posted in

- CPUs

- AMD

- Trade Shows

- Enterprise CPUs

- Cray

- Rome

- Supercomputing 18

- Shasta

- CoolIT

*After posting this news, we received additional information from Cray stating that the top plate is not being used for CPUs, but for the Slingshot interconnect. As a result, the system supports only 8 CPUs in 1U. The text below has been changed to reflect this new information.

The Supercomputing show was a hive of activity, with lots of whispers surrounding the next generation of x86 CPUs, such as AMD’s Rome platform and Intel’s Cascade Lake platform. One of the big announcements at the start of the show was that Cray has developed an AMD Rome-based version of its Shasta supercomputer deployments.

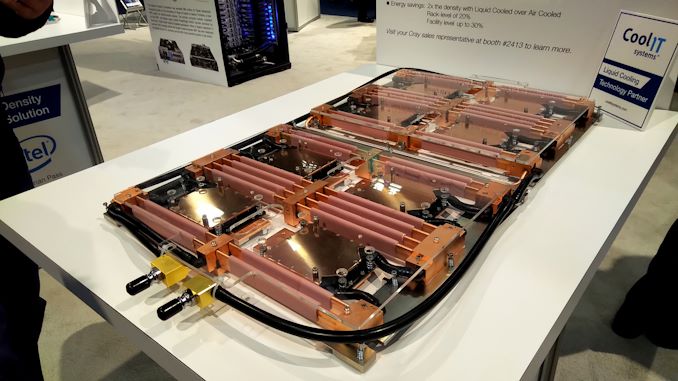

Cray had a sizeable presence at the show, given its position as one of the most prominent supercomputer designers in the industry. At its booth, we saw one of the compute blades for the Shasta design:

It’s a bit difficult to see what is going on here, but there appear to be eight processors in a 1U system. The processors are not oriented in the traditional way for a server, in line with airflow, because the system is not air cooled.

The cooling plate and pipes connect to separate reservoirs that also supply additional cooling to the memory. Of course in this situation we’re not in a dense memory configuration, given the cooling required.

This display was being shown off in the CoolIT booth. CoolIT is a well-known specialist in liquid cooling for both the consumer and enterprise worlds, and they had a few interesting points on their implementation for Shasta.

Firstly, the eight CPU design with split memory cooling was obvious, but CoolIT stated that these processor cooling plates were designed to cool a CPU below and the custom Cray Slingshot interconnect above. We were told that with this design, the full cooling apparatus could go up to eight CPUs, several interconnect nodes, and up to 4kW.

The cooling loops are split between the front and the rear, recombining near the front. This means that each loop pumps coolant through four cooling plates and their associated memory. There is a small question about how the memory all fits in, physically, but this is certainly impressive.
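As a rough sanity check on those figures, the short Python sketch below divides the quoted 4kW across the two loops and eight cooling plates. The even split, and the choice to ignore the share of heat taken up by memory cooling, are assumptions made purely for illustration; neither Cray nor CoolIT gave a per-plate breakdown, and the function names are hypothetical.

```python
# Figures quoted in the article.
TOTAL_COOLING_W = 4000   # "up to 4kW" for the full cooling apparatus
NUM_LOOPS = 2            # front loop and rear loop
PLATES_PER_LOOP = 4      # four cooling plates per loop


def per_loop_budget_w(total_w: float, loops: int) -> float:
    """Heat load per loop, ASSUMING the total is split evenly between loops."""
    return total_w / loops


def per_plate_budget_w(total_w: float, loops: int, plates_per_loop: int) -> float:
    """Heat load per plate, ASSUMING an even split and ignoring memory cooling."""
    return total_w / (loops * plates_per_loop)


if __name__ == "__main__":
    loop_w = per_loop_budget_w(TOTAL_COOLING_W, NUM_LOOPS)
    plate_w = per_plate_budget_w(TOTAL_COOLING_W, NUM_LOOPS, PLATES_PER_LOOP)
    print(f"Per loop:  {loop_w:.0f} W")
    print(f"Per plate: {plate_w:.0f} W")
```

Under these assumptions each plate has to handle around 500 W, and that budget covers both the CPU below it and the Slingshot hardware above it, so it should not be read as a per-CPU TDP.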

CoolIT is claiming that its custom solution for Cray’s Shasta platform helps double the compute density over an air-cooled system, offers 20% better rack-level efficiency, and up to 30% better facility-wide energy efficiency.

Shasta is set to be deployed at NERSC as part of its upcoming ‘Perlmutter’ supercomputer, slated for delivery in late 2020.

*This article was updated on Nov-20 with new information.

26 Comments


iwod - Tuesday, November 20, 2018 - link

The title "Managing 16 Rome CPUs in 1U", then "eight processors in a 1U system", then "Firstly, the eight CPU design with split memory cooling was obvious, but CoolIT stated that these processor cooling plates were designed to cool CPUs above and below, for a total cooling power of 500W apiece. This means that in this design, the full cooling apparatus could go up to sixteen CPUs and 4kW." So is it 8 CPU? or 16 CPU per 1U? I am confused.

SaturnusDK - Tuesday, November 20, 2018 - link

16 CPUs in 1U. 8 on the top side. 8 on the bottom side. But still in the 1U size.

Dug - Tuesday, November 20, 2018 - link

Thanks, because that was clear as mud.

iwod - Tuesday, November 20, 2018 - link

And yet Servethehome suggest only 8 CPU and 1024 Thread. So who is right who is wrong?

rahvin - Tuesday, November 20, 2018 - link

Look at photo #2, they show half the water blocks with the Rome card on the "top" side.

Valantar - Tuesday, November 20, 2018 - link

I get that you can (theoretically) fit 8 more CPUs on top of the waterblocks here, but where does the RAM for the additional CPUs go?

tomatotree - Tuesday, November 20, 2018 - link

I imagine you're limited to 1 die per channel with a 16 processor configuration, so you'd remove half the ram that's in the current picture, and turn it upside down.

tomatotree - Tuesday, November 20, 2018 - link

DIMM, not die. There really needs to be an edit button.

Valantar - Wednesday, November 21, 2018 - link

Indeed there does. The edit to the article seems to have cleared this up, but just to be clear, there is already only 1DPC in the picture - EPYC has 8C memory, and there are quite clearly 8 dimms per CPU here :) Hence my question!

iwod - Tuesday, November 20, 2018 - link

Article updated to "Correct" 8 CPU now. Which makes more sense, if it was 16 CPU the density improvement on 1U would have been 4x rather than the 2x as stated. Which leads to next question, 4KW per 8 CPU? that is roughly ~420W per CPU excluding DIMM. That is a lot for a single CPU. Last time Intel tried a 500W push overclock it requires liquid nitrogen. So is that 4KW number correct as well?