The Intel Core i9-9980XE CPU Review: Refresh Until it Hertz

by Ian Cutress on November 13, 2018 9:00 AM EST

Core i9-9980XE Conclusion: A Generational Upgrade

Users (and investors) always want to see a year-on-year increase in performance in the products being offered. For those on the leading edge, where every little bit counts, dropping $2k on a new processor is nothing if it can be used to its fullest. For those on a longer upgrade cycle, a constant 10% annual improvement means that over 3 years they can expect a 33% increase in performance. Broadly, there are four ways to increase performance: increase core count, increase frequency/power, increase efficiency, or increase IPC. That list is ordered from easiest to hardest: adding cores is usually trivial (until memory access becomes a bottleneck), while increasing efficiency and instructions per clock (IPC) is difficult but delivers the best generational upgrade for everyone concerned.
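The 33% figure holds if the annual gains compound rather than simply add up. A quick check, assuming a constant 10% uplift in each of the three years:

$$
(1 + 0.10)^3 = 1.10 \times 1.10 \times 1.10 = 1.331
$$

Simply adding three 10% gains would give 30%; compounding accounts for the extra three points.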

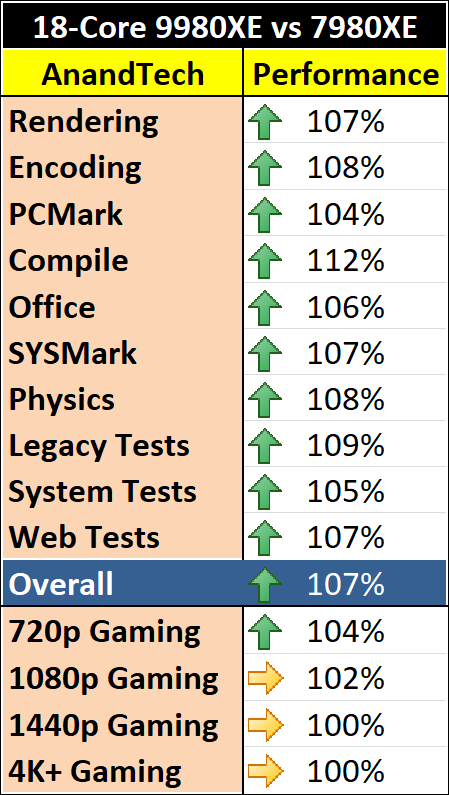

With the Core i9-9980XE, Intel’s flagship high-end desktop processor, we get a mix of improved frequency and improved efficiency. The updated process has raised core clocks over the previous generation, with a 15% increase in the base clock, although the chip also draws around 5-6% more power at full load. In real-world terms, the Core i9-9980XE gives anywhere from a 0-10% performance increase in our benchmarks.

However, had Intel followed its previous cadence, this processor launch should have brought a substantial change in design. Normally Intel follows a new microarchitecture and socket with a process node update on the same socket, offering similar features but much improved efficiency. We didn’t get that. We got a refresh.

An Iteration When Intel Needs Evolution

When Intel announced that its latest set of high-end desktop processors was little more than a refresh, there was a subtle but mostly inaudible groan from the tech press at the event. We don’t particularly like it when a new graphics generation is just a higher-clocked refresh, so we are certainly not going to enjoy it with our processors. These new parts are yet another product line based on Intel’s 14nm Skylake family, and we’re wondering where Intel’s innovation has gone.

These new parts use larger silicon across the board, which enables more cache and PCIe lanes at the low end, and the updates to the manufacturing process afford some extra frequency. The new parts also use soldered thermal interface material, which Intel used in the past and which enthusiasts have been continually requesting. None of this is innovation on the scale that Intel’s customer base is used to.

It all boils down to ‘more of the same, but slightly better’.

While Intel is having another crack at Skylake, its competition is trying to innovate, not only by trying new designs that may or may not work, but also by showcasing its next generation several months in advance, with both process node and microarchitectural changes. As much as Intel prides itself on its technological prowess, and it has done well this decade, there’s something stuck in the pipe. At a time when Intel needs evolution, it is stuck doing refresh iterations.

Does It Matter?

The latest line out of Intel is that demand for its latest generation enterprise processors is booming. Intel physically cannot make enough, and other product lines (publicly, the lower power ones) are suffering because Intel can use those wafers to sell higher margin parts. The situation is dire enough that Intel is shifting fab space toward producing more 14nm products in the hope of matching demand, should it continue. Intel has explicitly stated that while demand is high, it wants to focus on its high performance Xeon and Core product lines.

You can read our news item on Intel's investment announcement here.

While demand is high, the desire to innovate runs into this odd miasma of ‘should we focus on squeezing every cent out of this high demand’ versus ‘preparing for tomorrow’. With so much demand on the enterprise side, consumers may not get the rapid update cycles they would like: while consumers who buy one chip want 10-15% performance gains every year, the enterprise customers who need chips in high volumes are just happy to be able to purchase them. There’s no need for Intel to dip its toes into a new process node or design that offers +15% performance but reduces yield by even more, and takes up fab space.

Intel Promised Me

In one meeting with Intel’s engineers a couple of years back, just after the launch of Skylake, I was told that two years of 10% IPC growth was not an issue. These individuals know the details of Intel’s new platform designs, and I have no reason to doubt them. Back then, it was clear that Intel had the next one or two generations of Core microarchitecture updates mapped out; however, the delays to 10nm seem to have put a pin in those +10% IPC designs. Combine Intel’s 10nm woes with the demand for 14nm Skylake-SP parts, and it makes for one confusing mess. Intel is making plenty of money, and it seems to have designs in its back pocket ready to go, but while it is making super high margins, I worry we won’t see them. All the while, Intel’s competitors are trying to do something new to break the incumbent’s hold on the market.

Back to the Core i9-9980XE

Discussion of Intel’s roadmap and demand aside, our analysis of the Core i9-9980XE shows that it provides a reasonable uplift over the Core i9-7980XE for around the same price, albeit at the cost of a few more watts. For users looking at peak Intel multi-core performance on a consumer platform, it’s a reasonable generation-on-generation uplift, and it makes sense for those on a longer update cycle.

A side note on gaming: for users looking to push those high-frame-rate monitors, the i9-9980XE gave a 2-4% uplift across our games at our 720p settings. Individual results varied from a 0-1% gain, such as in Ashes or Final Fantasy, up to a 5-7% gain in World of Tanks, Far Cry 5, and Strange Brigade. Beyond 1080p, we didn’t see much change.

When comparing against the AMD competition, it all depends on the workload. Intel has the better processor in most aspects of general workflow, such as lightly threaded workloads on the web, memory limited tests, compression, video transcoding, or AVX512 accelerated code, but AMD wins on dedicated processing, such as rendering with Blender, Corona, POV-Ray, and Cinema4D. Compiling is an interesting one, because for both Intel and AMD, the mid-range core count parts with higher turbo frequencies seem to do better.

143 Comments

MisterAnon - Wednesday, November 14, 2018

PNC is not right at all, he's completely wrong. Unless your job requires you to walk around and type at the same time, using a laptop is a net loss of productivity for zero gain. At a professional workplace, anyone who thinks that way would definitely be fired. If you're going to be in the same room for 8 hours a day doing real work, it makes sense to have a desktop with dual monitors. You will be faster, more efficient, more productive, and more comfortable. Powerful desktops are more useful today than ever before due to the complexity of modern demands.

TheinsanegamerN - Wednesday, November 14, 2018

What is your source for gamers being the primary consumers of HEDT?

imaheadcase - Tuesday, November 13, 2018

Well of course for programming it's OK. That is like saying you moved from a desktop to a phone for typing. Typing hardly requires any power. lol That has pretty much always been the case.

bji - Tuesday, November 13, 2018

I think you are implying programming is not a CPU-intensive task? Certainly it can be low intensity for small projects, but trust me, it can also use as much CPU as you can possibly throw at it. When you have a project that requires compiling thousands or tens of thousands of files to build it ... the workload scales fairly linearly with the number of cores, up to some fuzzy limit mostly set by memory bandwidth.

twtech - Thursday, November 15, 2018

I also work in software development (games), and my experience has been completely the opposite. I've actually only known one programmer who preferred to work on a laptop - he bought a really high-end Clevo DTR and brought it in to work.

I do have a laptop at my desk - I brought in a Surface Book 2 - but I mostly just use it for taking notes. I don't code on it.

Unless you're going to be moving around all the time, I don't know why you'd prefer to look at one small screen and type on a sub-par laptop keyboard if there's the choice of something better readily available. And two 27" screens is pretty much the minimum baseline - I have 3x 30" here at home.

And then of course there's the CPU - if you're working on a really small codebase, it might not matter. But if it's a big codebase, with C++, you want to have a lot of cores to be able to distribute the compiling load. That's why I'm really interested in the forthcoming W-3175X - high clocks plus 28 cores on a monolithic chip sounds like a winning combination for code compiling. High end for a laptop is what, 6 cores now?

Laibalion - Saturday, November 17, 2018

What utter nonsense. I've been working on large and complex C++ codebases (2M+ LOC for a single product) for over a decade, and compute power is an absolute necessity to work efficiently. Compile times for such a beast scale linearly (if done properly), so no one wants a shit mobile CPU for their workstation.

HStewart - Tuesday, November 13, 2018

Mobile has been this way for a decade. I got a new job working at home and everyone is on laptops; today's laptops are as powerful as most desktops. Work has a quad-core notebook, and this is my 2nd notebook; the first one was from nine years ago. Desktops were not used in my previous job. A notebook means you can be mobile. For me that is when I go to the home office, which is not often, but I also bring the notebook to meetings and such. I develop primarily in C++ and .NET.

Desktops are literally dinosaurs now, becoming part of history.

bji - Tuesday, November 13, 2018

You are not working on big enough projects. For your projects, a laptop may be sufficient, but for larger projects there is certainly a wide chasm between the capabilities of a laptop and those of a workstation-class developer system.

MisterAnon - Wednesday, November 14, 2018

Today's laptops are not as powerful as desktops. They use slow mobile processors and overheat easily due to thermal limits. If you're working from home you're still sitting in a chair all day, meaning you don't need a laptop. If your company fired you and hired someone who uses a desktop with dual monitors, they would get significantly more work done per dollar.

Atari2600 - Tuesday, November 13, 2018

I wouldn't call them very "professional" when they are sacrificing 50+% productivity for mobility.

Anyone serious about work in a serious work environment* has a workstation/desktop and at least two UHD/4K monitors. Anything else is just kidding yourself that you are productive.