The Intel Core i9-9980XE CPU Review: Refresh Until it Hertz

by Ian Cutress on November 13, 2018 9:00 AM EST

Core i9-9980XE Conclusion: A Generational Upgrade

Users (and investors) always want to see a year-on-year increase in performance in the products being offered. For those on the leading edge, where every little counts, dropping $2k on a new processor is nothing if it can be used to its fullest. For those on a longer upgrade cycle, a constant 10% annual improvement means that over 3 years they can expect a 33% increase in performance. As of late, there are several ways to increase performance: increase core count, increase frequency/power, increase efficiency, or increase IPC. That list is rated from easy to difficult: adding cores is usually trivial (until memory access becomes a bottleneck), while increasing efficiency and instructions per clock (IPC) is difficult but the best generational upgrade for everyone concerned.
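The 33% figure comes from compounding, not from adding three 10% steps. A minimal sketch of that arithmetic follows; the function name `compound_gain` is purely illustrative, and the 10% rate is the hypothetical annual improvement used in the paragraph above, not a measured value.

```python
def compound_gain(annual_rate: float, years: int) -> float:
    """Total fractional gain after compounding `annual_rate` for `years` years."""
    return (1.0 + annual_rate) ** years - 1.0


# Hypothetical 10% annual improvement, as assumed in the text.
for years in (1, 2, 3):
    print(f"{years} year(s): {compound_gain(0.10, years):.1%}")
```

Three years at 10% works out to roughly 33%, slightly more than the 30% that simple addition would suggest.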

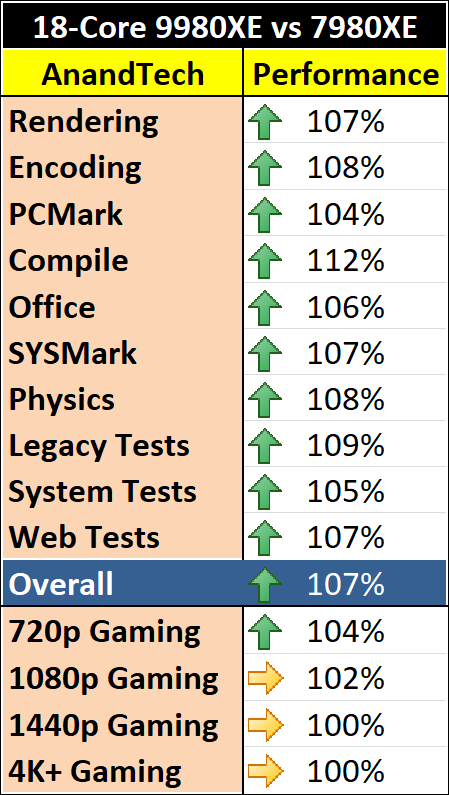

For Intel’s latest Core i9-9980XE, its flagship high-end desktop processor, we have a mix of improved frequency and improved efficiency. Using an updated process has helped increase the core clocks compared to the previous generation, a 15% increase in the base clock, but we are also using around 5-6% more power at full load. In real-world terms, the Core i9-9980XE seems to give anywhere from a 0-10% performance increase in our benchmarks.

However, if we follow Intel’s previous cadence, this processor launch should have seen a substantial change in design. Normally Intel follows a new microarchitecture and socket with a process node update on the same socket with similar features but much improved efficiency. We didn’t get that. We got a refresh.

An Iteration When Intel Needs Evolution

When Intel announced that its latest set of high-end desktop processors was little more than a refresh, there was a subtle but mostly inaudible groan from the tech press at the event. We don’t particularly like generations using higher clocked refreshes with our graphics, so we certainly are not going to enjoy it with our processors. These new parts are yet another product line based on Intel’s 14nm Skylake family, and we’re wondering where Intel’s innovation has gone.

These new parts involve using larger silicon across the board, which enables more cache and PCIe lanes at the low end, and the updates to the manufacturing process afford some extra frequency. The new parts use soldered thermal interface material, which is what Intel used to use, and what enthusiasts have been continually requesting. None of this is innovation on the scale that Intel’s customer base is used to.

It all boils down to ‘more of the same, but slightly better’.

While Intel is having another crack at Skylake, its competition is trying to innovate, not only by trying new designs that may or may not work, but also by showcasing the next generation several months in advance with both process node and microarchitectural changes. As much as Intel prides itself on its technological prowess, and has done well this decade, there’s something stuck in the pipe. At a time when Intel needs evolution, it is stuck doing refresh iterations.

Does It Matter?

The latest line out of Intel is that demand for its latest generation enterprise processors is booming. Intel physically cannot make enough, and other product lines (publicly, the lower power ones) are suffering because Intel can use those wafers to sell higher margin parts. The situation is dire enough that Intel is moving fab space to create more 14nm products in the hope of matching demand should it continue. Intel has explicitly stated that while demand is high, it wants to focus on its high performance Xeon and Core product lines.

You can read our news item on Intel's investment announcement here.

While demand is high, the desire to innovate hits this odd miasma of ‘should we focus on squeezing every cent out of this high demand’ compared to ‘preparing for tomorrow’. With all the demand on the enterprise side, it means that the rapid update cycles required from the consumer side might not be to their liking – while consumers who buy one chip want 10-15% performance gains every year, the enterprise customers who need chips in high volumes are just happy to be able to purchase them. There’s no need for Intel to dip its toes into a new process node or design that offers +15% performance but reduces yield by more, and takes up the fab space.
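The trade-off in that last sentence can be put in rough numbers. The sketch below assumes a deliberately simple model in which useful output per wafer scales as per-chip performance multiplied by the fraction of good dies. The function names and the 20% yield loss are hypothetical values chosen for illustration, not Intel data.

```python
def relative_output(perf_gain: float, yield_loss: float) -> float:
    """Output per wafer relative to the current design (1.0 means unchanged).

    Simplified model: output = per-chip performance * fraction of good dies.
    """
    return (1.0 + perf_gain) * (1.0 - yield_loss)


def break_even_yield_loss(perf_gain: float) -> float:
    """Yield loss at which the performance gain is exactly cancelled out."""
    return 1.0 - 1.0 / (1.0 + perf_gain)


# Hypothetical example: +15% performance, 20% fewer good dies.
print(f"relative output: {relative_output(0.15, 0.20):.2f}")
print(f"break-even yield loss: {break_even_yield_loss(0.15):.1%}")
```

Under this model, a +15% design stops paying off once yield drops by more than about 13%, which is the sense in which a new node that "reduces yield by more" is a net loss while every wafer is already sold.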

Intel Promised Me

In one meeting with Intel’s engineers a couple of years back, just after the launch of Skylake, I was told that two years of 10% IPC growth is not an issue. These individuals know the details of Intel’s new platform designs, and I have no reason to doubt them. Back then, it was clear that Intel had the next one or two generations of Core microarchitecture updates mapped out; however, the delays to 10nm seem to have put a pin in those +10% IPC designs. Combine Intel’s 10nm woes with the demand on 14nm Skylake-SP parts, and it makes for one confusing mess. Intel is making plenty of money, and it seems to have designs in its back pocket ready to go, but while it is making super high margins, I worry we won’t see them. All the while, Intel’s competitors are trying to do something new to break the incumbent’s hold on the market.

Back to the Core i9-9980XE

Discussions on Intel’s roadmap and demand aside, our analysis of the Core i9-9980XE shows that it provides a reasonable uplift over the Core i9-7980XE for around the same price, albeit for a few more watts in power. For users looking at peak Intel multi-core performance on a consumer platform, it’s a reasonable generation-on-generation uplift, and it makes sense for those on a longer update cycle.

A side note on gaming – for users looking to push those high-frame rate monitors, the i9-9980XE gave a 2-4% uplift across our games at our 720p settings. Individual results varied from a 0-1% gain, such as in Ashes or Final Fantasy, up to a 5-7% gain in World of Tanks, Far Cry 5, and Strange Brigade. Beyond 1080p, we didn’t see much change.

When comparing against the AMD competition, it all depends on the workload. Intel has the better processor in most aspects of general workflow, such as lightly threaded workloads on the web, memory limited tests, compression, video transcoding, or AVX512 accelerated code, but AMD wins on dedicated processing, such as rendering with Blender, Corona, POV-Ray, and Cinema4D. Compiling is an interesting one, because for both Intel and AMD, the more mid-range core count parts with higher turbo frequencies seem to do better.

143 Comments


zeromus - Wednesday, November 14, 2018

@linuxgeek, logged in for the first time in 10 years or more just to laugh with you for having cracked the case with your explanation there at the end!

HStewart - Tuesday, November 13, 2018

I have IBM Thinkpad 530 with NVidia Quadro - in software development unless into graphics you don't even need more than integrated - even more for average business person - unless you are serious into gaming or high end graphics you don't need highend GPU. Even gaming as long as you are not into latest games - lower end graphics will do.

pandemonium - Wednesday, November 14, 2018

"Even gaming as long as you are not into latest games - lower end graphics will do."

This HEAVILY depends on your output resolution, as every single review for the last decade has clearly made evident.

Samus - Wednesday, November 14, 2018

Don't call it an IBM Thinkpad. It's disgraceful to associate IBM with the bastardization Lenovo has done to their nameplate.

imaheadcase - Tuesday, November 13, 2018

Uhh yah but no one WILL do it on mobility. Makes no sense.

TEAMSWITCHER - Tuesday, November 13, 2018

You see .. there you are TOTALLY WRONG. Supporting the iPad is a MAJOR REQUIREMENT as specified by our customers. Augmented reality has HUGE IMPLICATIONS for our industry. Try as you may ... you can't hold up that 18 core desktop behemoth (RGB lighting does not defy gravity) to see how that new Pottery Barn sofa will look in your family room. I think what you are suffering from is a historical perspective on computing which the ACTUAL WORLD has moved away from.

scienceomatica - Tuesday, November 13, 2018

@TEAMSWITCHER - I think your comments are an unbalanced result between fantasy and ideals. I think you're pretty superficially, even childishly looking at the use of technology and communicating with the objective world. Of course, a certain aspect of things can be done on a mobile device, but by its very essence it is just a mobile device, therefore, as a casual, temporary solution. It will never be able to match the raw power of "static" desktop computers. Working in a laboratory for physical analysis, numerous simulations of supersymmetric breakdowns of material identities, or transposition of spatial-temporal continuum, it would be ridiculous to imagine doing on a mobile device. There are many things I would not even mention.

HStewart - Tuesday, November 13, 2018

For videos - as long as you have AVX2 (256-bit) you are ok.

SanX - Wednesday, November 14, 2018

AMD needs to beat Intel with AVX to be considered seriously for scientific apps (3D particle movement test)

PeachNCream - Tuesday, November 13, 2018

All seven of our local development teams have long since switched from desktops to laptops. That conversion was a done deal back in the days of Windows Vista and Dell Latitude D630s and 830s. Now we live in a BYOD (bring your own device) world where the company will pay up to a certain amount (varies between $1,100 and $1,400 depending on funding from upper echelons of the corporation) and employees are free to purchase the computer hardware they want for their work. There are no desktop PCs currently and in the past four years, only one person purchased a desktop in the form of a NUC. The reality is that desktop computers are for the most part a thing of the past with a few instances remaining on the desks of home users that play video games on custom-built boxes as the primary remaining market segment. Why else would Intel swing overclocking as a feature of a HEDT chip if there was a valid business market for these things?