The Intel Core i9-9980XE CPU Review: Refresh Until it Hertz

by Ian Cutress on November 13, 2018 9:00 AM EST

HEDT Performance: SYSMark 2018

You either love it or hate it. BAPCo’s SYSMark suite of tests is an interesting combination of benchmarks, but it is also clouded in a substantial amount of ire. The altruistic original goal was to develop an industry-standard suite of real-world tests. AMD (and NVIDIA) left BAPCo several years ago, citing that workloads were being chosen on purpose to favour Intel processors and that the suite didn’t include enough emerging computing paradigms for the industry. Intel disagrees with that statement, and here we are today.

We run this disclaimer on our SYSMark testing primarily to emphasise that while some consider this benchmark suite more than relevant, encompassing a lot of modern professional software, others feel it is engineered with specific goals in mind.

We haven’t run SYSMark on every processor: unlike our automated suite, it requires a fresh OS image, and that image has to be refreshed every seven days. As a result, we are trying to test sets of processors at a time, where it makes sense and when time is available.

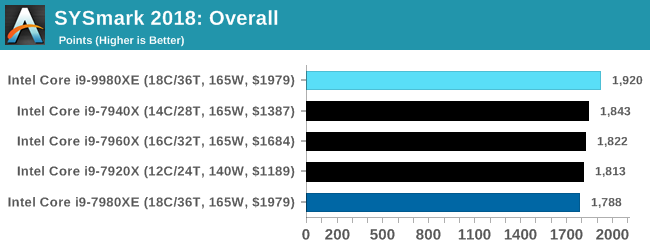

It’s clear from the Intel comparisons that the i9-9980XE takes a lead here, with a nice bump over the 7980XE of around 7% in the overall test. Given that the 7900X is the best of the Skylake-X processors, it will be interesting to see what the 9900X scores here.

143 Comments

TheJian - Friday, November 16, 2018

I stopped reading when I saw 8k with a 1080. Most tests are just pointless, as it would be more interesting with a 1080ti at least or better 2080ti. That would give the chips more room to run when they can to separate the men from the boys so to speak. Vid tests with handbrake stupid too. Does anyone look at the vid after those tests? It would look like crap. Try SLOWER as a setting and lets find out how the chips fare, and bitrates of ~4500-5000 for 1080p. Something I'd actually watch on a 60in+ tv without going blind.

Release groups for AMZN for example release 5000 bitrate L4.1, 5-9 ref frames, SLOWER. etc. Nfo files reveal stuff like this:

cabac=1 / ref=9 / deblock=1:-3:-3 / analyse=0x3:0x133 / me=umh / subme=11 / psy=1 / psy_rd=1.00:0.00 / mixed_ref=1 / me_range=32 / chroma_me=1 / trellis=2 / 8x8dct=1 / cqm=0 / deadzone=21,11 / fast_pskip=0 / chroma_qp_offset=-2 / threads=6 / lookahead_threads=1 / sliced_threads=0 / nr=0 / decimate=0 / interlaced=0 / bluray_compat=0 / constrained_intra=0 / bframes=8 / b_pyramid=2 / b_adapt=2 / b_bias=0 / direct=3 / weightb=1 / open_gop=0 / weightp=2 / keyint=250 / keyint_min=23 / scenecut=40 / intra_refresh=0 / rc=crf / mbtree=0 / crf=17.0 / qcomp=0.60 / qpmin=0 / qpmax=69 / qpstep=4 / ip_ratio=1.40 / pb_ratio=1.30 / aq=3:0.85

More than I'd do, but the point is, SLOWER will give you far better quality (something I could actually stomach watching), without all the black blocks in dark scenes etc. Current 720p releases from nf or amzn have went to crap (700mb files for h264? ROFL). We are talking untouched direct from NF or AMZN. Meaning that is the quality you are watching as a subscriber that is, which is just one of the reasons we cancelled NF (agenda TV was the largest reason to dump them).

If you're going to test at crap settings nobody would watch, might as well kick in quicksync with quality maxed and get better results as MOST people would do if quality wasn't an issue anyway.

option1=value1:option2=value2:tu=1:ref=9:trellis=3 and L4.1 with encoder preset set to QUALITY.

That's a pretty good string for decent quality with QSV. Seems to me you're choosing to turn off AVX/Quicksync so AMD looks better or something. Why would any USER turn off stuff that speeds things up unless quality (guys like me) is an issue? Same with turning off gpu in blender etc. What is the point of a test that NOBODY would do in real life? Who turns off AVX512 in handbrake if you bought a chip to get it? LOL. That tech is a feature you BUY intel for. IF you turn off all the good stuff, the chip becomes a ripoff. But users don't do that :) Same for NV, if you have the ability to use RTX stuff, why would you NOT when a game supports it? To make AMD cards look better? Pffft. To wait for AMD to catch up? Pffft.

I say this as an AMD stock holder :) Most useless review I've seen in a while. Not wasting my time reading much of it. Moving on to better reviews that actually test how we PLAY/WATCH/WORK in the real world. 8K...ROFLMAO. Ryan has been claiming 1440p was norm since 660ti. Then it was 4k not long after for the last 5yrs when nobody was using that, now it's 8k tests with a GTX 1080...ROFLMAO. No wonder I come here once a month or less pretty much and when I do, I'm usually turned off by the tests. Constantly changing what people do (REAL TESTS) to turning stuff off, down, (vid cards at ref speeds instead of OC OOTB settings etc), etc etc...Let's see if we can set up this test in a way nobody would do at home to strike down advantages of anyone competing with AMD. Blah. I'd rather see where both sides REALLY win in ways we USE these products. Turn everything on if it's in the chip, gpu, test, etc and spend MORE time testing resolutions etc we actually USE in practice. 8k...hahaha. Whatever. 13fps?

"Ashes has dropdown options for MSAA, Light Quality, Object Quality, Shading Samples, Shadow Quality, Textures, and separate options for the terrain."

Yeah, I'm out. Dropdown quality is against my religion and useless to me. I'm sure the other tests have issues I'd hate also, no time to waste on junk review tests. Too many other places that don't do this crap. I bought a 1070ti to run MAX settings at 1200p (dell 24in) in everything or throw it to my lower res 22in. If I can't do that, I'll wait for my next card to play game X. Not knocking AMD here, just Anandtech. I'll likely buy a 7nm AMD cpu when they hit, and they have a shot at a 7nm gpu for me too. You guys and tomshardware (heh, you joined) have really went downhill with irrational testing setups. If you're going to do 4k at ultra, why not do them all there? I digress...

spikespiegal - Saturday, November 24, 2018

Just curious, but how many of you AMD fanbois have ever been in a data center or been responsible for adjusting performance on a couple dozen VMware hosts running mixed applications? Oh wait...none. In the mythical world according to AMDs BS dept a Hypervisor / Operating system takes the number of tasks running and divides them by the number of cores running, and you clowns believe it. In the *real world* where we have to deal with really expensive hosts that don't have LED fans in them and run applications adults use we know that's not the truth. Hypervisors and Operating systems schedulers all favor cores that process mixed threads faster, and if you want to argue that please consult with a VMware or Hyper-V engineer the next time you see them in your drive thru. Oh wait...I am a VMware engineer. An i3 8530 costs $200 and literally beats any AMD chip made running stock in dual threaded applications. Seriously....look up the single threaded performance. More cores don't make an application more multithreaded and they don't contribute to a better desktop experience. I have servers with 30-40% of my CPU resources not being used, and just assigning more cores won't make applications faster. It just ties up my scheduler doing nothing and wastes performance. The only way to get better application efficiency is vertical, and that's higher core performance, and that's nothing I'm seeing AMD bringing to the table.

Michael011 - Wednesday, December 12, 2018

The pricing shows just how greedy Intel has become. It is better to spend your money on a top end AMD Threadripper and motherboard. https://mobdro.io/