896 Xeon Cores in One PC: Microsoft’s New x86 DataCenter Class Machines Running Windows

by Ian Cutress on October 26, 2018 11:00 AM EST

Posted in: CPUs, Windows, Microsoft, Enterprise CPUs, Azure

This week Microsoft launched a new blog dedicated to Windows kernel internals. The blog aims to dig into the kernel across a variety of architectures, covering elements such as its evolution, its components, and its organization; this post focuses on the scheduler. The goal is to develop the blog over the next few months with insights into what goes on behind the scenes, and the reasons why the kernel does what it does. However, we also got a sneak peek at a big system that Microsoft looks to be working on.

For those that want to read the blog, it’s really good. Take a look here:

https://techcommunity.microsoft.com/t5/Windows-Kernel-Internals/One-Windows-Kernel/ba-p/267142

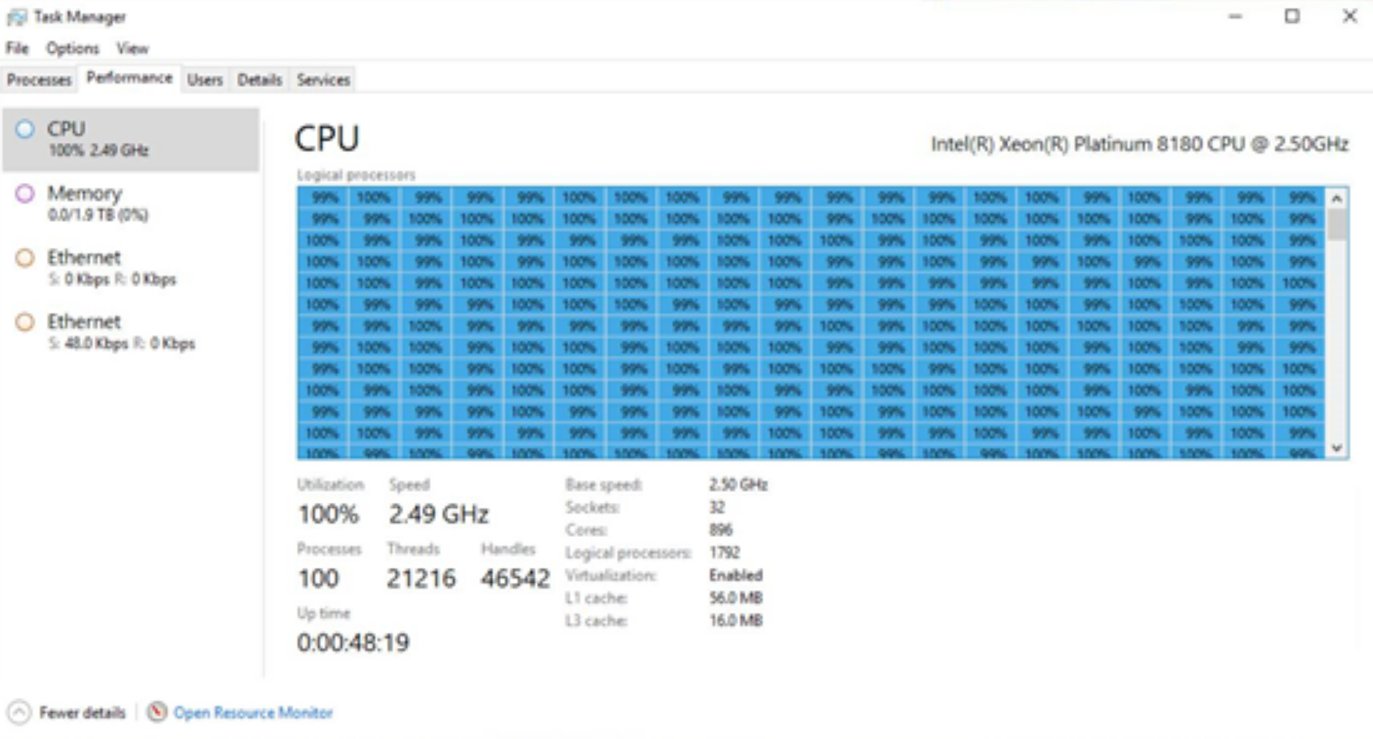

When discussing the scalability of Windows, the author Hari Pulapaka, Lead Program Manager in the Windows Core Kernel Platform, showcases a screenshot of Task Manager from what he describes as a ‘pre-release Windows DataCenter class machine’ running Windows. Here’s the image:

Unfortunately, the original image is low resolution.

If you weren’t amazed by the number of threads in Task Manager, you might notice that on the side there’s a scroll bar. That’s right: 896 cores means 1792 threads when hyperthreading is enabled, which is too many for Task Manager to show at once, and this new type of ‘DataCenter class machine’ looks like it has access to them all. But what are we really seeing here, aside from every single thread loaded at 100%?

To start, the CPU listed is a Xeon Platinum 8180, Intel’s highest core count, highest performing Xeon Scalable ‘Skylake-SP’ processor. It has 28 cores and 56 threads, so dividing 896 cores by 28 gives us a 32-socket system. In fact, in the bumf below the threads all running at 100%, it literally says ‘Sockets: 32’. So this is 32 full 28-core processors all acting together under one instance of Windows. Again, the question is how?
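The socket count can be checked with a few lines of arithmetic. This is only a sketch using the figures quoted above (896 cores, 28 cores per Xeon Platinum 8180, two threads per core with hyperthreading); the function and variable names are made up for illustration.

```python
# Figures quoted in the article; the names are illustrative only.
TOTAL_CORES = 896        # physical cores reported by Task Manager
CORES_PER_SOCKET = 28    # Xeon Platinum 8180
THREADS_PER_CORE = 2     # hyperthreading enabled


def socket_count(total_cores: int, cores_per_socket: int) -> int:
    """Return how many fully populated sockets account for total_cores."""
    sockets, remainder = divmod(total_cores, cores_per_socket)
    if remainder:
        raise ValueError("core count is not a whole number of sockets")
    return sockets


sockets = socket_count(TOTAL_CORES, CORES_PER_SOCKET)
logical_processors = TOTAL_CORES * THREADS_PER_CORE

print(f"sockets: {sockets}, logical processors: {logical_processors}")
```

The result lines up with the ‘Sockets: 32’ field in the screenshot and with the 1792 threads that Task Manager has to scroll through.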

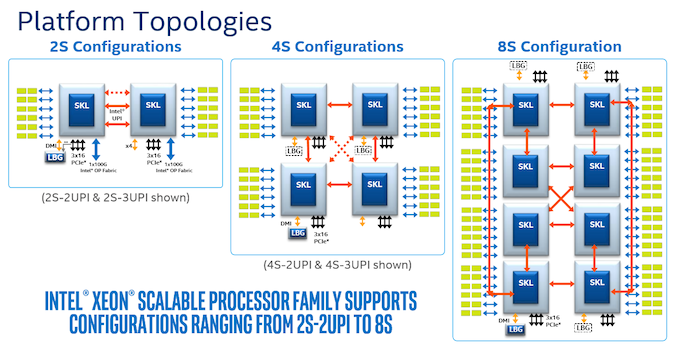

Normally, Intel only rates Xeon Platinum processors for up to 8 sockets. It does this by using three UPI links per processor to form a dual-box configuration. The Xeon Gold 6100 range does up to four sockets with three UPI links, ensuring each processor is linked to each other processor, and then the rest of the range does single socket or dual socket.

What Intel doesn’t mention is that with an appropriate fabric connecting them, system builders and OEMs can chain together several 4-socket or 8-socket systems into a single many-socket system. Aside from the fabric to be used and the messaging, there are other factors in play here, such as latency and memory architecture, which are already present in 2-8 socket platforms but get substantially worse going beyond eight sockets. If one processor needs memory that is two fabric hops and a processor hop away, then to a certain extent having that data in a local SSD might be quicker.

As for the fabric, I’m going to use an analogy here. AMD’s EPYC platform goes up to two sockets, but for the interconnect between sockets, it uses 64 PCIe lanes from each processor to host AMD’s Infinity Fabric protocol to act as links, and has the benefit of the combined bandwidth of 128 PCIe lanes. If EPYC had 256 PCIe lanes, for example, or cut the number of PCIe lanes down to 32 per link, then we could end up with EPYC servers with more than two sockets built on Infinity Fabric. With Intel CPUs, we’re still using the PCIe lanes, but we’re doing it in one of three ways: control over Omni-Path using PCIe, control over InfiniBand using PCIe, or control using custom FPGAs, again over PCIe. This is essentially how modern supercomputers are run, albeit not as one unified system.
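To make the EPYC analogy concrete, here is a rough lane budget. It assumes 128 PCIe lanes per EPYC processor and, purely for illustration, that every socket links directly to every other socket (a full mesh). The function name and the two hypothetical configurations are mine and do not describe any shipping product.

```python
def lanes_left_for_io(lanes_per_cpu: int, lanes_per_link: int, sockets: int) -> int:
    """Lanes per CPU left for I/O after linking each socket to every other.

    Assumes a full mesh, which is an illustrative simplification.
    """
    links_per_cpu = sockets - 1
    used = links_per_cpu * lanes_per_link
    if used > lanes_per_cpu:
        raise ValueError("not enough lanes for a full mesh")
    return lanes_per_cpu - used


# Two sockets as described: 64 of 128 lanes per CPU form the socket link.
two_socket = lanes_left_for_io(lanes_per_cpu=128, lanes_per_link=64, sockets=2)

# Hypothetical: narrow each link to 32 lanes and go to four sockets.
four_socket_narrow = lanes_left_for_io(lanes_per_cpu=128, lanes_per_link=32, sockets=4)

# Hypothetical: 256 lanes per CPU with 64-lane links, four sockets.
four_socket_wide = lanes_left_for_io(lanes_per_cpu=256, lanes_per_link=64, sockets=4)

print(two_socket * 2, four_socket_narrow * 4, four_socket_wide * 4)
```

Under these assumptions, either change would leave lanes free for I/O in a four-socket layout, which is the point of the analogy: socket count is bounded by how many lanes can be spared for links.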

Unfortunately, this is where I get out of my depth. When I spoke to a large server OEM last year, they said quad socket and eight socket systems are becoming rarer and rarer: as each CPU by itself has more cores, the need for systems that big just doesn't exist anymore. Back in the days pre-Nehalem, the big eight socket 32-core servers were all the rage, but today not so much, and unless a company is willing to spend $250k+ (before support contracts or DRAM/NAND) on a single 8-socket system, it’s reserved for the big players in town. Today, those are the cloud providers.

In order to get 32 sockets, we’re likely seeing eight quad-socket systems connected in this way in one big blade infrastructure. It likely takes up half a rack, if not a whole one, and your guess is as good as mine on the price or power consumption. In our screenshot above it does say ‘Virtualization: Enabled’, and given that this is Microsoft we’re talking about, this might be one of their internal planned Azure systems that is either rented to defence-like contractors or partitioned off in instances to others.

I’ve tried reaching out to Hari to get more information on what this system is, and will report back if we get anything. Microsoft may make an official announcement if these large 32-socket systems are going to be 'widespread' (meant in the leanest sense) offerings on Azure.

Note: DataCenter is stylized with a capital C as quoted through Microsoft's blog post.

56 Comments


speculatrix - Sunday, October 28, 2018

I think another factor, licensing conditions, also reduces the benefit of paying huge premiums for servers with massive core counts. If you're buying VMware licenses you currently pay per socket, and there's a sweet spot in combined server price with high core count CPUs to get the best overall cost per core.

With Oracle, I think licensing is by core, not socket or server, so there's no benefit in stuffing a machine with more sockets and higher core counts to optimise the Oracle licensing cost.

so it's worth optimising the overall price of the entire server and license t

SarahKerrigan - Sunday, October 28, 2018

As others have noted, the Superdome Flex supports Windows and is 32s. There have also been previous 32s and 64s IPF machines running Windows, although obviously the fact that this is x86 is a distinction from those. :-)

peevee - Monday, October 29, 2018

"When I spoke to a large server OEM last year, they said quad socket and eight socket systems are becoming rarer and rarer as each CPU by itself has more cores the need for systems that big just doesn't exist anymore"

The real reason is rise of distributed computing (Hadoop, Spark and alike), which can achieve similar performance using much cheaper hardware (2-cpu servers). Those monster multi-socket systems allow you to write applications like for SMP, but in practice if you write for NUMA like for SMP it does not scale, you need to write for NUMA like it is a distributed thing anyway.

spikespiegal - Wednesday, October 31, 2018

"The real reason is rise of distributed computing (Hadoop, Spark and alike), which can achieve similar performance using much cheaper hardware (2-cpu servers)."??

The decline in > 2 socket servers is because those companies still running on-prem data services don't see the value, and the far bigger customer base, which is cloud providers, would rather scale horizontally than vertically. Aside from XenApp / RDS or the rare database instance, provisioning more than 4 CPUs to a single guest VM is the exception when looking at all virtual servers as an aggregate. Any time you start slinging more virtual CPUs to a single guest, the odds are increasingly in favor of a cluster of those OSes hosting the application pool doing it more efficiently than a single OS. So, in all likelihood this is a product that's designed to better facilitate spinning up large commercial virtual server environments and not solving a problem for a singular application.

DigiWise - Sunday, November 4, 2018

I have a stupid question, the single 8180 CPU has 38.5MB L3 cache, I see from the screenshot it's only 16MB for this massive count? how is it possible?

alpha754293 - Friday, January 11, 2019

Dr. Cutress: I don't have the specifics, but there are ways to present multiple hardware nodes to an OS as a single image.

There is a cluster-specific Linux distro called "Rocks Cluster" where it would be able to find all of the nodes on the cluster and then if you give it work to do, it will automatically "move" the processes over from the head node over to slave nodes and manage it entirely.

I would not be surprised if it is managing the system as a single-image OS if this is going through Intel's Omni-Path interconnect so that they have some form of RDMA (whether it'd be RoCE or not).

If they're quad socket blades, and you can fit 14 blades in 7U, then this will fit within that physical space. (Actually, with room to spare: only 8 of the 14 quad socket blades would be populated.) This can be powered by up to eight 2200 W redundant power supplies (using Supermicro's offerings as a surrogate source of data), which would take up to 17.6 kW total peak power consumption.

The other possibility would be dual socket per node, with four half-width nodes per 2U. That would require four servers to get 32 sockets, and that will take 8U total physical space. If it just uses four servers like this, and each server uses 1600 W redundant power supplies, that would put the total power consumption at 6.4 kW, so it's entirely possible.

Unfortunately, this is just my speculation based on some of the available hardware offerings (based on Supermicro's offerings).