Intel's 10nm Cannon Lake and Core i3-8121U Deep Dive Review

by Ian Cutress on January 25, 2019 10:30 AM EST

Conclusion: I Actually Used the Cannon Lake Laptop as a Daily System

When we ordered the Lenovo laptop, not only was I destined to test it to see how well Intel’s 10nm platform performs, but I also wanted to see what the device was like to actually use. Once I’d removed the terrible drives it came with and put in a 1TB Crucial MX200 SSD, I started to put it to good use.

The problem with this story is that because this is a really bad configuration of laptop, it gives the hardware very little chance to show its best side. We covered this in our overview of Carrizo several years ago, after OEM partners kept putting chips with reasonable performance into the worst penny pinching designs. The same thing goes with this laptop – it is an education focused 15.6-inch laptop whose screen is only 1366x768, and the TN panel’s best angle to view is as it is tilted away from you. It is bulky and heavy but only has a 34 Wh battery, whereas the ideal laptop is thin and light and lasts all day on a single charge. From the outset, using this device was destined to be a struggle.

I first used the device when I attended Intel’s Data Summit in mid-August. On the plane I didn’t have any space issues because I had reserved a bulkhead economy seat, but after only 4 hours or so of light word processing at low screen brightness, I was already out of battery. Thankfully I could work on other things on my second laptop (always take two laptops to events, maybe not day-to-day at a show, but always fly with two). At the event, I planned to live blog the day of presentations. This means being connected online, uploading text, and keeping the screen bright enough to read. After 90 minutes, I had 24% battery left. This device has terrible battery life, a terrible screen, is bulky, and weighs a lot.
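To put those two battery figures on the same footing, here is a rough back-of-the-envelope sketch in Python. It assumes the battery started each session fully charged and that the full 34 Wh rated capacity was usable, neither of which was measured; `average_draw_watts` is just an illustrative helper name.

```python
def average_draw_watts(capacity_wh, fraction_used, hours):
    """Average power draw implied by using a fraction of the battery over a period."""
    return capacity_wh * fraction_used / hours


CAPACITY_WH = 34.0  # rated battery capacity quoted in the review

# Flight: assumed full discharge in about 4 hours of light word processing.
flight_w = average_draw_watts(CAPACITY_WH, 1.0, 4.0)

# Live blog: 24% left after 90 minutes, so 76% used in 1.5 hours.
liveblog_w = average_draw_watts(CAPACITY_WH, 0.76, 1.5)

# Projected runtime on a full charge at the live blog draw rate.
liveblog_runtime_h = CAPACITY_WH / liveblog_w

print(f"flight: {flight_w:.1f} W")
print(f"live blog: {liveblog_w:.1f} W")
print(f"projected live blog runtime: {liveblog_runtime_h:.2f} h")
```

Under those assumptions the live blog works out to roughly double the average draw of the flight, which would put a full charge at about two hours of live blogging.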

I will say this though, it does have several positives. Perhaps this is because the RX540 is in the system, but the Windows UI was very responsive. Now of course this is a subjective measure, however I have used laptops with Core i7 and MX150 hardware that were slower to respond than this. It did get bogged down when I went into my full workflow with many programs, many tabs, and many messaging software tools, but I find that any system with only 8GB of memory will hit my workflow limits very quickly. On the natural responsiveness front, I can’t fault it.

Ultimately I haven’t continued to use the laptop much more – the screen angle required to get a good image, the battery life, and the weight are all critical issues that individually would cause me to ditch the unit. At this price, there are plenty of Celeron or Atom notebooks that would fit the bill and feel nicer to use. I couldn’t use this Ideapad unit with any confidence that I would make it through an event, either a live blog or a note taking session, without it dying. As journalists, we can never guarantee there will be a power outlet (or an available power outlet) at the events we go to, so I always had to carry a second laptop in my bag regardless. The issue is that the second laptop I use often lasts all day at an event on its own.

Taking Stock of Intel’s 10nm Cannon Lake Design

When we lived in a world with Intel’s Tick Tock, Cannon Lake would have been a natural tick: a known microarchitecture with minor tweaks but on a new process node. The microarchitecture is a tried and tested design, as we have now had four generations of it from Skylake to Coffee Lake Refresh. However, the chip just isn’t suitable for prime time.

Looking at how Intel has presented its improvements on 10nm, with features like using Cobalt, Dummy Gates, Contact Over Active Gates, and new power design rules, if we assume that every advancement works perfectly then 10nm should have been a hit out of the gate. The problem is, semiconductor design is like having 300 different dials to play with, and tuning one of those dials causes three to ten others to get worse. This is the problem Intel has had with 10nm, and it is clear that some potential features work and others do not – but the company is not saying which ones for competitive and obvious reasons.

At Intel’s Architecture Day in December, the Chief Engineering Officer Dr. Murthy Renduchintala was asked if the 10nm design had changed. His response was contradictory and cryptic: ‘It is changing, but it hasn’t changed’. At that event the company was firmly in the driving seat of committing to 10nm by the end of 2019, in a quad core Ice Lake mobile processor, in a new 3D packaging design called Lakefield, in an Ice Lake server CPU for 2020, and in a 5G/AI focused processor called Snow Ridge. Whatever 10nm variant of the process they’re planning to use, we will have to wait and see.

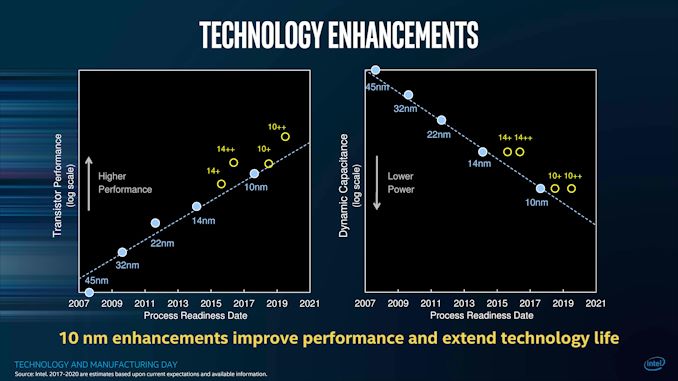

I’ll go back to this slide that Intel presented back at the Technology and Manufacturing Day:

The right side of this slide shows that 10nm (and its variants) have lower power through lower dynamic capacitance. However, on the left, Intel shows both 10nm (Cannon Lake) and 10nm+ (Ice Lake) as having lower transistor performance than 14nm++, the current generation of Coffee Lake processors.

This means we might not see a truly high-performance processor on 10nm until the third generation of the process is put into place. Right now, based on our numbers on Cannon Lake, it’s clear that the first generation of 10nm was not ready for prime time.

Cannon Lake: The Blip That Almost Didn’t Happen

We managed to snap up a Cannon Lake chip by calling in a few favors to buy it from a Chinese reseller who I’m pretty sure should not have been selling them to the public. They were educational laptops that may not have sold well, and the reseller just needed to get rid of them. Given Intel’s reluctance to talk about anything 10nm at CES 2018, and given that the chips ‘shipped for revenue’ ended up in a backwater design like this, it would look like Intel was trying to hide them. That was our thought for a good while, until Intel announced the Cannon Lake NUC. Even then, from launch announcement to being at general retail took four months, and by that time most people had lost interest.

At some point Intel had to make good on its promises to investors by shipping something 10nm to somewhere. Exactly how many chips were sold (and to whom) is not discussed by Intel, but I have heard some numbers flying around. Based on our performance numbers, it’s obvious why Intel didn’t want to promote it. On the other hand, at least being told about it beyond a simple sentence would have been nice.

After testing the chip, the only reason I’d recommend one of these things is for the AVX512 performance. It blows everything else in that market out of the water, but AVX512 enabled programs are few and far between. Plus, given what Intel has said about the Sunny Cove core, that part will have it as well. If you really need AVX512 in a small form factor, Intel will sell you a NUC.

Cannon Lake, and the system we have with it inside, is ultimately now nothing more than a curio on the timeline of processor development. Which is where it belongs.

129 Comments

eva02langley - Sunday, January 27, 2019 - link

Even better...https://youtu.be/osSMJRyxG0k?t=1220

AntonErtl - Sunday, January 27, 2019 - link

Great Article! The title is a bit misleading given that it is much more than just a review. I found the historical perspective of the Intel processes most interesting: Other reporting often just reports on whatever comes out of the PR department of some company, and leaves the readers to compare for themselves with other reports; better reporting highlights some of the contradictions; but rarely do we see such a pervasive overview.

The 8121U would be interesting to me to allow playing with AVX512, but the NUC is too expensive for me for that purpose, and I can wait until AMD or Intel provide it in a package with better value for money.

RamIt - Sunday, January 27, 2019 - link

Need gaming benches. This would make a great cs:s laptop for my daughter to game with me on.

Byte - Monday, January 28, 2019 - link

Cannonlake, 2019's Broadwell.

f4tali - Monday, January 28, 2019 - link

I can't believe I read this whole review from start to finish...

And all the comments...

And let it sink in for over 24hrs...

But somehow my main takeaway is that 10nm is Intel's biggest graphics snafu yet.

(Well THAT and the fact you guys only have one Steam account!)

;)

NikosD - Monday, January 28, 2019 - link

@Ian Cutress

Great article, it's going to become all-time classic and kudos for mentioning semiaccurate and Charlie for his work and inside information (and guts)

But really, how many days, weeks or even months did it take to finish it ?

bfonnes - Monday, January 28, 2019 - link

RIP Intel

CharonPDX - Monday, January 28, 2019 - link

Insane to think that there have been as many 14nm "generations" as there were "Core architecture" generations before 14nm.

ngazi - Tuesday, January 29, 2019 - link

Windows is snappy because there is no graphics switching. Any machine with the integrated graphics completely off is snappier.

Catalina588 - Wednesday, January 30, 2019 - link

@Ian, This was a valuable article and it is clipped to Evernote. Thanks!

Without becoming Seeking Alpha, you could add another dimension or two to the history and future of 10nm: cost per transistor and amortizing R&D costs. At Intel's November 2013 investor meeting, William Holt strongly argued that Intel would deliver the lowest cost per transistor (slide 13). Then-CFO Stacey Smith and other execs also touted this line for many quarters. But as your article points out, poor yields and added processing steps make 10nm a more expensive product than the 14nm++ we see today. How will that get sold and can Intel improve the margins over the life of 10nm?

Then there's amortizing the R&D costs. Intel has two independent design teams in Oregon and Israel. Each team in the good-old tick-tock days used to own a two-year process node and new microarchitecture. The costs for two teams over five-plus years without 10nm mainstream products yet is huge--likely hundreds of millions of dollars. My understanding is that Intel, under general accounting rules, has to write off the R&D expense over the useful life of the 10nm node, basically on a per chip basis. Did Intel start amortizing 10nm R&D with the "revenue" for Cannon Lake starting in 2017, or is all of the accrued R&D yet to hit the income statement? Wish I knew.

Anyway, it sure looks to me like we'll be looking back at 10nm in the mid-2020s as a ten-year lifecycle. A big comedown from a two-year TickTock cycle.