The Intel 9th Gen Review: Core i9-9900K, Core i7-9700K and Core i5-9600K Tested

by Ian Cutress on October 19, 2018 9:00 AM EST

Posted in: CPUs, Intel, Coffee Lake, 14++, Core 9th Gen, Core-S, i9-9900K, i7-9700K, i5-9600K

CPU Performance: Rendering Tests

Rendering is often a key target for processor workloads, and it lends itself to professional environments. It comes in different forms as well, from 3D rendering through rasterization, as in games, to ray tracing, and it tests the ability of the software to manage meshes, textures, collisions, aliasing, and physics (in animations), and to discard unnecessary work. Most renderers offer CPU code paths, while a few use GPUs, and select environments use FPGAs or dedicated ASICs. For big studios, however, CPUs are still the hardware of choice.

All of our benchmark results can also be found in our benchmark engine, Bench.

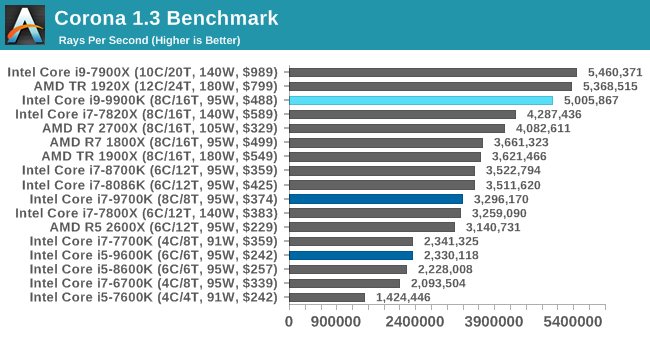

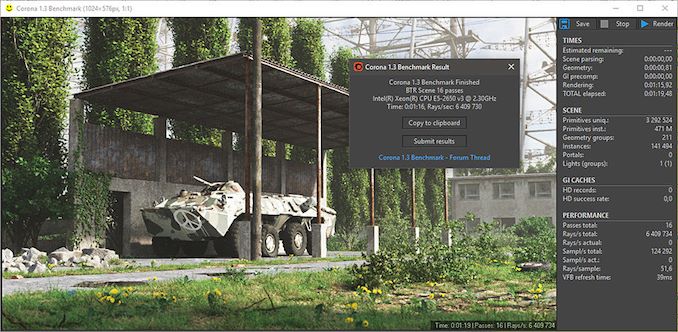

Corona 1.3: Performance Render

An advanced performance-based renderer for software such as 3ds Max and Cinema 4D, Corona provides a benchmark that renders a generated scene as a standard under version 1.3 of the software. Normally the GUI implementation of the benchmark shows the scene being built and allows the user to upload the result as a ‘time to complete’.

We got in contact with the developer, who gave us a command line version of the benchmark that outputs results directly. Rather than reporting time, we report the average number of rays per second across six runs, as results expressed per unit time typically scale in a way that is visually easier to understand.
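
As a rough illustration of the averaging step described above, the following Python sketch takes the rays-per-second figure from each run and reports the arithmetic mean. The function name and the six sample values are hypothetical and are not taken from the review's data; the article also does not say which kind of mean is used, so the arithmetic mean here is an assumption.

```python
from statistics import mean


def average_rays_per_second(runs):
    """Return the arithmetic mean of per-run rays-per-second results.

    Assumption: each entry is the rays/s figure reported by one benchmark run.
    """
    if not runs:
        raise ValueError("need at least one run")
    return mean(runs)


# Hypothetical results for six runs (illustrative values only).
example_runs = [4.10e6, 4.05e6, 4.12e6, 4.08e6, 4.11e6, 4.09e6]

average = average_rays_per_second(example_runs)
print(f"{average:,.0f} rays/s averaged over {len(example_runs)} runs")
```

Note that averaging the per-run rates is not the same as dividing the total work by the average completion time, so the choice of where to average can shift the reported figure slightly.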

The Corona benchmark website can be found at https://corona-renderer.com/benchmark

Corona is a fully multithreaded test, so the non-HT parts fall a little behind here. The Core i9-9900K blasts through the AMD 8-core parts with a 25% margin, and taps on the door of the 12-core Threadripper.

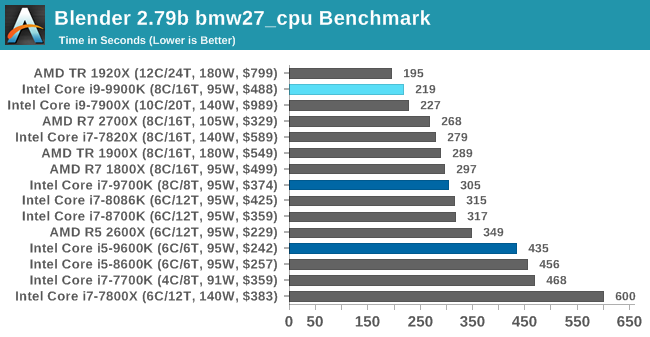

Blender 2.79b: 3D Creation Suite

A high-profile rendering tool, Blender is open source, which allows for massive amounts of configurability, and it is used by a number of high-profile animation studios worldwide. The organization recently released a Blender benchmark package, a couple of weeks after we had narrowed down our Blender test for our new suite; however, their test can take over an hour. For our results, we run one of the sub-tests in that suite through the command line, a standard ‘bmw27’ scene in CPU-only mode, and measure the time to complete the render.
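
A command line render of this kind can be timed with a small wrapper. The Python sketch below is only an illustration under stated assumptions: the review does not give its exact invocation, the scene file name is hypothetical, and the only Blender options used are `-b` (run in the background without the GUI) and `-f` (render a given frame). The demonstration at the bottom times a trivial stand-in command so that the sketch runs without Blender installed.

```python
import subprocess
import sys
import time


def time_command(cmd):
    """Run cmd to completion and return the elapsed wall-clock time in seconds.

    Raises subprocess.CalledProcessError if the command exits with an error.
    """
    start = time.perf_counter()
    subprocess.run(
        cmd,
        check=True,
        stdout=subprocess.DEVNULL,
        stderr=subprocess.DEVNULL,
    )
    return time.perf_counter() - start


# Assumed invocation, not the review's actual command line.
# "bmw27_cpu.blend" is a hypothetical file name for the scene.
BLENDER_CMD = ["blender", "-b", "bmw27_cpu.blend", "-f", "1"]

if __name__ == "__main__":
    # Stand-in command so the sketch is runnable anywhere;
    # swap in BLENDER_CMD on a machine with Blender and the scene file.
    elapsed = time_command([sys.executable, "-c", "pass"])
    print(f"elapsed: {elapsed:.2f} s")
```

Wall-clock time measured this way includes process start-up and scene loading, not just the render itself, which matters more for short scenes than for long ones.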

Blender can be downloaded at https://www.blender.org/download/

Blender has an eclectic mix of requirements, from memory bandwidth to raw performance, but as with Corona, the processors without HT fall a bit behind here. The high frequency of the 9900K pushes it above the 10C Skylake-X part and AMD's 2700X, but it remains behind the 1920X.

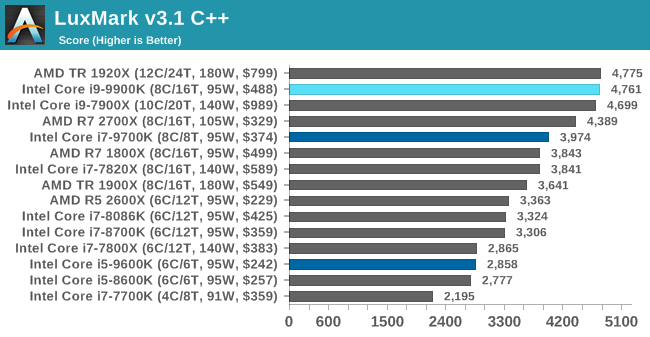

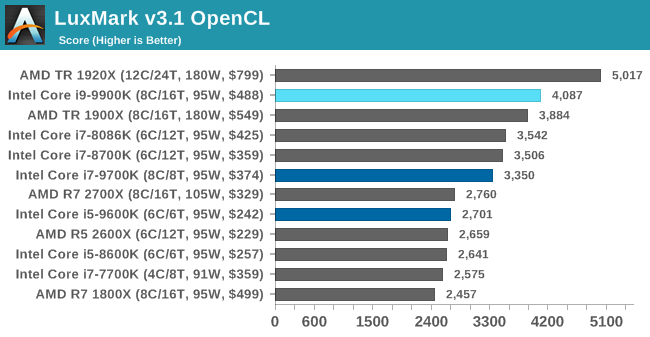

LuxMark v3.1: LuxRender via Different Code Paths

As stated at the top, there are many different ways to process rendering data: CPU, GPU, Accelerator, and others. On top of that, there are many frameworks and APIs in which to program, depending on how the software will be used. LuxMark, a benchmark developed using the LuxRender engine, offers several different scenes and APIs.

Taken from the Linux Version of LuxMark

In our test, we run the simple ‘Ball’ scene on both the C++ and OpenCL code paths, but in CPU mode. This scene starts with a rough render and slowly improves the quality over two minutes, giving a final result in what is essentially an average ‘kilorays per second’.

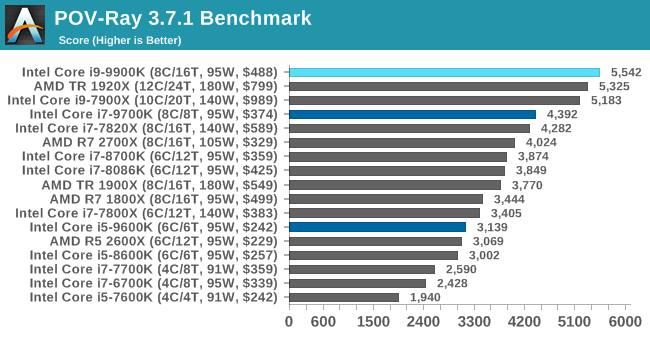

POV-Ray 3.7.1: Ray Tracing

The Persistence of Vision ray tracing engine is another well-known benchmarking tool, which was in a state of relative hibernation until AMD released its Zen processors, at which point both Intel and AMD suddenly began submitting code to the main branch of the open source project. For our test, we use the built-in benchmark for all cores, called from the command line.

POV-Ray can be downloaded from http://www.povray.org/

274 Comments

Total Meltdowner - Sunday, October 21, 2018

Those typoes..

"Good, F U foreigners who want our superior tech."

muziqaz - Monday, October 22, 2018

Same to you, who still thinks that Intel CPUs are made purely in USA :D

Hifihedgehog - Friday, October 19, 2018

What do I think? That it is a deliberate act of desperation. It looks like it may draw more power than a 32-Core ThreadRipper per your own charts.

https://i.redd.it/iq1mz5bfi5t11.jpg

AutomaticTaco - Saturday, October 20, 2018

Revised

https://www.anandtech.com/show/13400/intel-9th-gen...

The motherboard in question was using an insane 1.47v

https://twitter.com/IanCutress/status/105342741705...

https://twitter.com/IanCutress/status/105339755111...

edzieba - Friday, October 19, 2018

For the last decade, you've had the choice between "I want really fast cores!" and "I want lots of cores!". This is the 'now you can have both' CPU, and it's surprisingly not in the HEDT realm.

evernessince - Saturday, October 20, 2018

It's priced like HEDT though. It's priced well into HEDT. FYI, you could have had both of those when the 1800X dropped.

mapesdhs - Sunday, October 21, 2018

I noticed initially in the UK the pricing of the 9900K was very close to the 7820X, but now pricing for the latter has often been replaced on retail sites with CALL. Coincidence? It's almost as if Intel is trying to hide that even Intel has better options at this price level.

iwod - Friday, October 19, 2018

Nothing unexpected really. 5Ghz with "better" node that is tuned for higher Frequency. The TDP was the real surprise though, I knew the TDP were fake, but 95 > 220W? I am pretty sure in some countries ( um... EU ) people can start suing Intel for misleading customers.

For the AVX test, did the program really use AMD's AVX unit? or was it not optimised for AMD's AVX, given AMD has a slightly different ( I say saner ) implementation. And if they did, the difference shouldn't be that big.

I continue to believe there is a huge market for iGPU, and I think AMD has the biggest chance to capture it, just looking at those totally playable 1080P frame-rate, if they could double the iGPU die size budget with 7nm Ryzen it would be all good.

Now we are just waiting for Zen 2.

GreenReaper - Friday, October 19, 2018

It's using it. You can see points increased in both cases. But AMD implemented AVX on the cheap. It takes twice the cycles to execute AVX operations involving 256-bit data, because (AFAIK) it's implemented using 128-bit registers, with pairs of units that can only do multiplies or adds, not both.

That may be the smart choice; it probably saves significant space and power. It might also work faster with SSE[2/3/4] code, still heavily used (in part because Intel has disabled AVX support on its lower-end chips). But some workloads just won't perform as well vs. Intel's flexible, wider units. The same is true for AVX-512, where the workstation chips run away with it.

It's like the difference between using a short bus, a full-sized school bus, and a double decker - or a train. If you can actually fill the train on a regular basis, are going to go a long way on it, and are willing to pay for the track, it works best. Oh, and if developers are optimizing AVX code for *any* CPU, it's almost certainly Intel, at least first. This might change in the future, but don't count on it.

emn13 - Saturday, October 20, 2018

Those AVX numbers look like they're measuring something else; not just AVX512. You'd expect performance to increase (compared to AVX256) by around 50%, give or take quite a margin of error. It should *never* be more than a factor 2 faster. So ignore AMD; their AVX implementation is wonky, sure - but those intel numbers almost have to be wrong. I think the baseline isn't vectorized at all, or something like that - that would explain the huge jump.

Of course, AVX512 is fairly complicated, and it's more than just wider - but these results seem extraordinary; and there's just not enough evidence the effect is real, not just some quirk of how the variations were compiled.