The NVIDIA GeForce RTX 2080 Ti & RTX 2080 Founders Edition Review: Foundations For A Ray Traced Future

by Nate Oh on September 19, 2018 5:15 PM EST - Posted in

- GPUs

- Raytrace

- GeForce

- NVIDIA

- DirectX Raytracing

- Turing

- GeForce RTX

Power, Temperature, and Noise

With a large chip, more transistors, and more frames, questions always turn to the efficiency of the card: its overall power consumption, how well the default coolers cope with the thermal load, and how loud the fans get under load. Users buying these cards can be expected to push a lot of pixels, which will have knock-on effects inside a case. For our testing, we use a case to get the most representative real-world results for these metrics.

Power

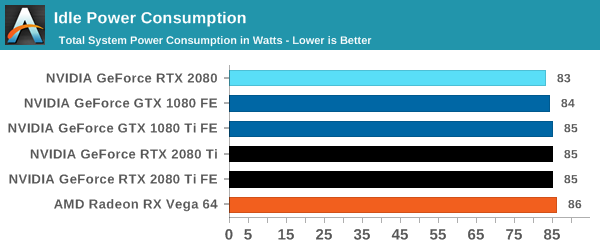

All of our graphics cards sit around the 83-86W level at idle, though they noticeably fall into groups: the RTX 2080 is below the GTX 1080, the RTX 2080 Ti sits above the GTX 1080 Ti, and the RX Vega 64 consumes the most.

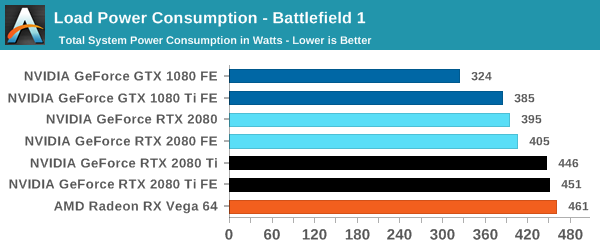

When we crank up a real-world title, all the RTX 20-series cards draw more power. The RTX 2080 consumes 10W more than the previous-generation flagship, the GTX 1080 Ti, and the new RTX 2080 Ti flagship draws another 50W of system power beyond that. That is still not as much as the RX Vega 64, however.

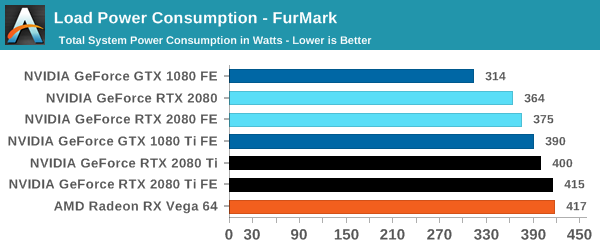

In a synthetic test like Furmark, the RTX 2080 consumes less than the GTX 1080 Ti, although the GTX 1080 comes in some 50W lower. The margin between the RTX 2080 FE and RTX 2080 Ti FE is some 40W, in line with the difference in their official TDPs. At the top end, the RTX 2080 Ti FE and RX Vega 64 consume equal power, but the RTX 2080 Ti FE is pushing through more work.

For power, the overall picture is clear: the RTX 2080 Ti is a step up from the RTX 2080, while the RTX 2080 is similar to the previous-generation GTX 1080/1080 Ti.

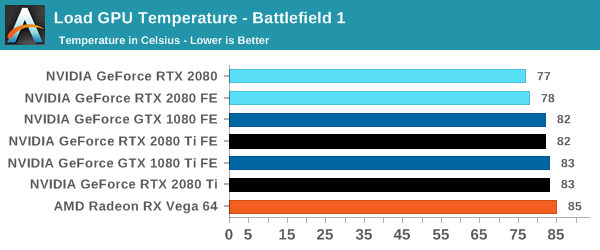

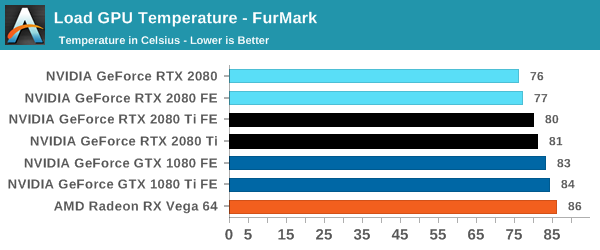

Temperature

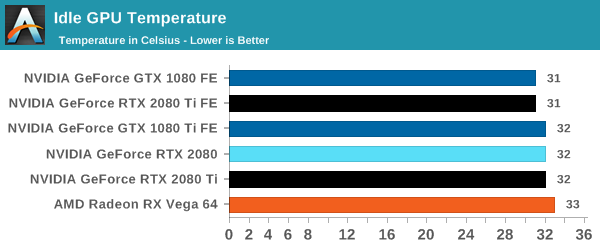

Straight off the bat, with the move from blower coolers to dual-fan coolers, the RTX 2080 holds its temperature a lot better than the previous-generation GTX 1080 and GTX 1080 Ti.

In every load scenario, the RTX 2080 runs several degrees cooler than both the previous generation and the RTX 2080 Ti. The RTX 2080 Ti fares well in Furmark, coming in at a lower temperature than the 10-series cards, but trades blows with them in Battlefield. This is a win for the dual-fan cooler over the blower.

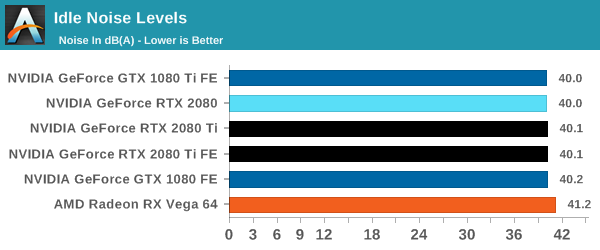

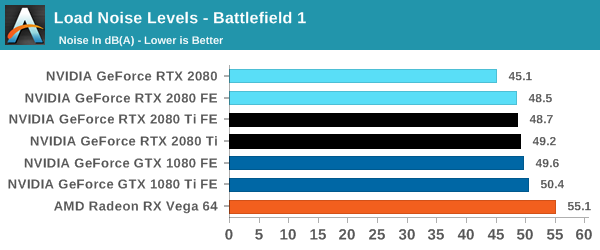

Noise

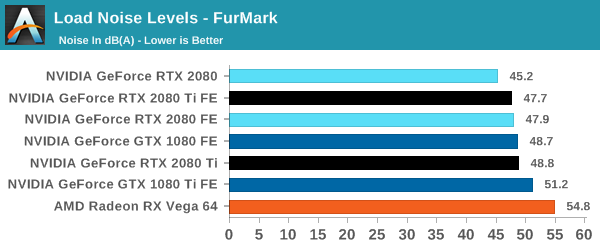

As with temperature, using two larger fans rather than a single blower means the new RTX cards can be quieter than the previous generation. The RTX 2080 wins here, running 3-5 dB(A) quieter than the 10-series cards while performing similarly. The extra power drawn by the RTX 2080 Ti means it is still only on par with the GTX 1080, but it always beats the GTX 1080 Ti.

337 Comments


imaheadcase - Wednesday, September 19, 2018

Because bluray players played movies from the start and delivered what they promised from the start, even if they cost a lot? Duh.

PopinFRESH007 - Thursday, September 20, 2018

They played DVDs from the start. Your statement is false.

imaheadcase - Thursday, September 20, 2018

Umm, nope, it's true.

Spunjji - Friday, September 21, 2018

Yeah, there was media available at launch. Also Blu-Ray provided a noticeable jump in both quality AND resolution over DVD. RTX provides maybe the first and definitely not the second.

V900 - Wednesday, September 19, 2018

And it’s clear that you didn’t read the article, or skimmed it at best, if you’re claiming that “the two technologies have not even seen the real light of day”.

The tools are out there, developers are working with them, and not only are there many games on the way that support them, there are games out now that use RTX.

Let me quote from the review:

“not only was the feat achieved but implemented, and not with proofs-of-concept but with full-fledged AA and AAA games. Today is a milestone from a purely academic view of computer graphics.”

tamalero - Wednesday, September 19, 2018

Development means nothing unless the games are released. Plans get cancelled, budgets get cut, and technology is replaced or converted/merged into a different standard.

imaheadcase - Wednesday, September 19, 2018

You just proved yourself wrong with your own quote. lol

Guess what? The Python language is out there, let's all develop games with it! All the tools are available! It's so easy! /sarcasm

Ranger1065 - Thursday, September 20, 2018

V900 shillage stench.

PopinFRESH007 - Wednesday, September 19, 2018

Just like those HD-DVD adopters, Laser Disc adopters, BetaMax adopters. V900 is pointing out that early adopters accept a level of risk in adopting new technology to enjoy cutting-edge stuff. This is no different than Bluray or DVDs when they came out. People who buy RTX cards have "WORKING TECH" and will have few options to use it, just like the 2nd wave of Bluray players. The first Bluray player actually never had a movie released for it, and it cost $3800.

"The first consumer device arrived in stores on April 10, 2003: the Sony BDZ-S77, a $3,800 (US) BD-RE recorder that was made available only in Japan.[20] But there was no standard for prerecorded video, and no movies were released for this player."

Even 3 years after that, when there was finally a standard that studios would produce movies for, the players that were out cost over $1000 and there were a whopping 7 titles available. Similar to RTX being the fastest cards available for current technology, those Bluray players also played DVDs (gasp).

imaheadcase - Wednesday, September 19, 2018

Again, the point is bluray WORKED out of the box, even if it was expensive. This doesn't even have any way to test the other stuff. You are literally buying something for an FPS boost over previous gens, and not a big one at that. It would be a different tune if lots of games already had the tech in hand from NVIDIA and had it in the game, just not enabled... but it's not even available to test, which is silly.