The NVIDIA GeForce RTX 2080 Ti & RTX 2080 Founders Edition Review: Foundations For A Ray Traced Future

by Nate Oh on September 19, 2018 5:15 PM EST

Posted in: GPUs, Raytrace, GeForce, NVIDIA, DirectX Raytracing, Turing, GeForce RTX

Power, Temperature, and Noise

With a large chip, more transistors, and more frames, questions always pivot to the efficiency of the card: how it fares on overall power consumption, the thermal limits of the default coolers, and the noise of the fans under load. Users buying these cards are expected to push some pixels, which will have knock-on effects inside a case. We therefore test inside a case to get the most realistic results for these metrics.

Power

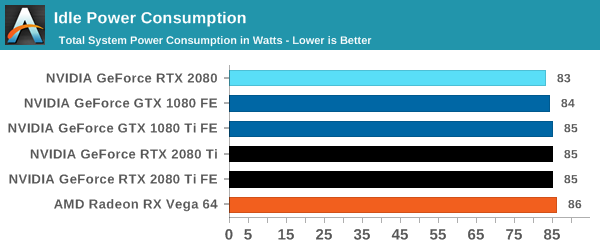

All of our graphics cards hover around the 83-86W level when idle, though they noticeably fall into sets: the 2080 is below the 1080, the 2080 Ti sits above the 1080 Ti, and the Vega 64 consumes the most.

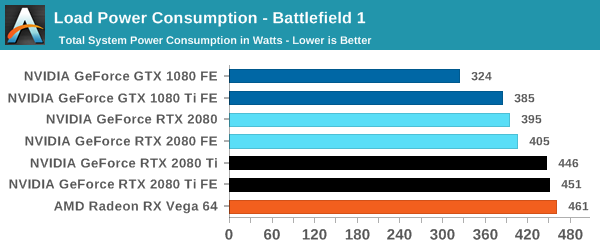

When we crank up a real-world title, all the RTX 20-series cards draw more power. The 2080 consumes 10W more than the previous generation flagship, the 1080 Ti, and the new 2080 Ti flagship draws another 50W of system power beyond that. That is still not as much as the Vega 64, however.

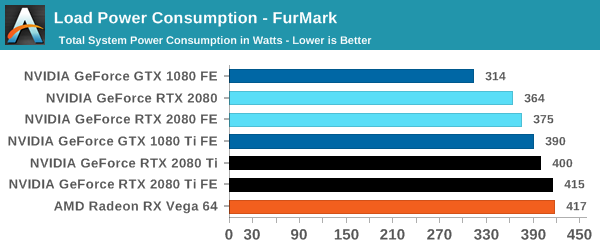

For a synthetic like Furmark, the RTX 2080 consumes less than the GTX 1080 Ti, although the GTX 1080 draws some 50W less again. The margin between the RTX 2080 FE and RTX 2080 Ti FE is some 40W, which is indicative of the official TDP differences. At the top end, the RTX 2080 Ti FE and RX Vega 64 consume equal power; however, the RTX 2080 Ti FE is pushing through more work.
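As a rough sanity check on that 40W margin, the sketch below compares it with the gap in NVIDIA's published Founders Edition board power figures (225W for the RTX 2080 FE and 260W for the RTX 2080 Ti FE, which are not taken from our charts). Since we measure power at the wall, the sketch also scales the board-level gap by a power supply efficiency of 90%. That efficiency is an assumed round number rather than a measured property of our testbed, and the function and variable names are purely illustrative.

```python
# Published Founders Edition board power (TDP) in watts.
# These come from NVIDIA's specifications, not from the review's measurements.
TDP_W = {
    "RTX 2080 FE": 225,
    "RTX 2080 Ti FE": 260,
}

# ASSUMPTION: a round-number PSU efficiency, used only to translate a
# board-level difference into an approximate at-the-wall difference.
ASSUMED_PSU_EFFICIENCY = 0.90


def wall_delta(lower_card: str, higher_card: str,
               efficiency: float = ASSUMED_PSU_EFFICIENCY) -> float:
    """Estimate the at-the-wall power gap implied by the TDP gap."""
    board_delta = TDP_W[higher_card] - TDP_W[lower_card]
    return board_delta / efficiency


estimate = wall_delta("RTX 2080 FE", "RTX 2080 Ti FE")
print(f"Estimated wall-power gap: {estimate:.1f} W")
```

Under those assumptions, the 35W board-level gap works out to roughly 39W at the wall, which is in the same ballpark as the margin we measured.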

For power, the overall differences are quite clear: the RTX 2080 Ti is a step above the RTX 2080, while the RTX 2080 is similar to the previous generation GTX 1080 and GTX 1080 Ti.

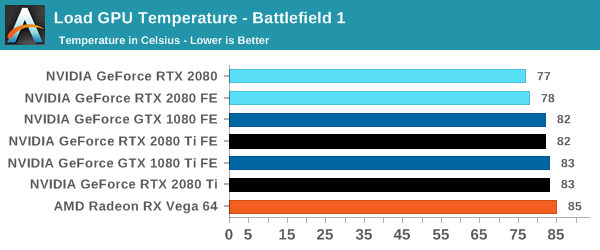

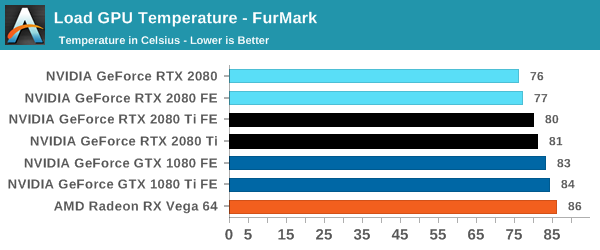

Temperature

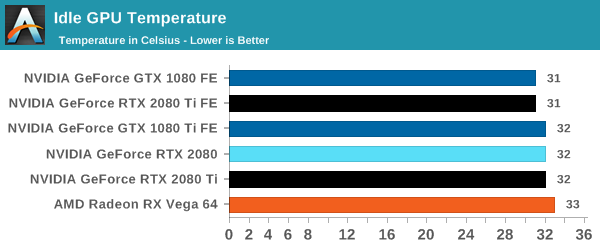

Straight off the bat, moving from the blower cooler to the dual fan coolers, we see that the RTX 2080 holds its temperature a lot better than the previous generation GTX 1080 and GTX 1080 Ti.

In every load scenario, the RTX 2080 is several degrees cooler than both the previous generation and the RTX 2080 Ti. The 2080 Ti fares well in Furmark, coming in at a lower temperature than the 10-series, but trades blows in Battlefield. This is a win for the dual-fan cooler over the blower.

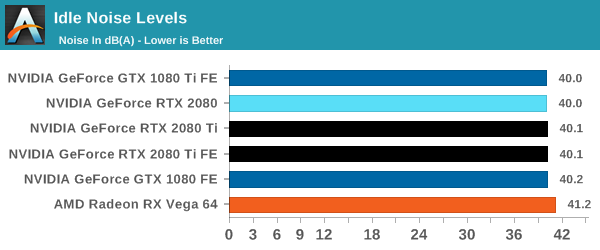

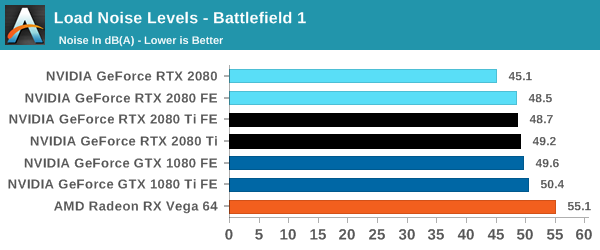

Noise

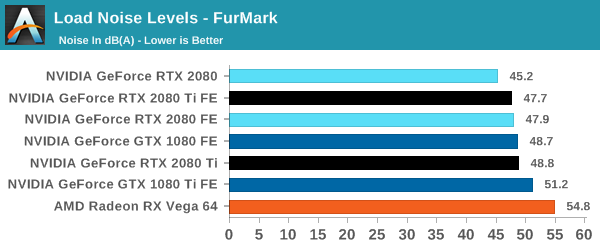

As with temperature, using two larger fans rather than a single blower means that the new RTX cards can be quieter than the previous generation: the RTX 2080 wins here, coming in 3-5 dB(A) lower than the 10-series while performing similarly. The added power needed for the RTX 2080 Ti means that it is still competing against the GTX 1080, but it always beats the GTX 1080 Ti.
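For a sense of what a 3-5 dB(A) gap means: decibels are logarithmic, so a level difference of d dB corresponds to a sound power ratio of 10^(d/10). The short sketch below applies that standard conversion to the range quoted above. The helper name is hypothetical, and perceived loudness does not scale linearly with sound power, so treat the ratios as a physical comparison rather than a measure of how loud each card seems.

```python
def sound_power_ratio(delta_db: float) -> float:
    """Sound power ratio for a level difference of delta_db decibels."""
    return 10 ** (delta_db / 10)


# The 3-5 dB(A) advantage quoted for the RTX 2080, expressed as negative
# level differences relative to the louder card.
for delta in (-3.0, -5.0):
    ratio = sound_power_ratio(delta)
    print(f"{delta:+.0f} dB -> {ratio:.2f}x the sound power")
```

In other words, a card that is 3 dB quieter emits about half the sound power, and one that is 5 dB quieter emits about a third.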

337 Comments


Santoval - Wednesday, September 19, 2018

The problem is that it does not bring those things to the current table but is going to bring them to a future table. Essentially they expect you to buy a graphics card whose advanced features no current game can support, merely on faith that it both will deliver them in the future *and* that they will be worth the very high premium.

If there is one ultimate unwritten rule when buying computers, computer parts or anything really, it must be this one: Never buy anything based on promises of *future* capabilities - always make your purchasing decisions based on what the products you buy can deliver *now*. All experienced computer and console consumers, in particular, must have that maxim engraved on their brain after having been burnt by so many broken promises.

Writer's Block - Monday, October 1, 2018

That is certainly true; 'we promise', politicians and companies selling their shit use it a lot... And break it about as often.

Inteli - Wednesday, September 19, 2018

It's not that the price increase wasn't warranted, at least from the transistor count perspective, it's that there's not a lot to show for it.

Many more transistors...concentrated in Tensor cores and RTX cores, which aren't being touched in current games. The increased price is for a load of baggage that will take at least a year to really get used (and before you say it, 3 games is not "really getting used"). We're used to new GPUs performing better in current games for the same price, not performing the same in current games for the same price (and I'm absolutely discounting everything before 2008 because that was 10 years ago and the expectations of what a new μArch should bring have changed).

I get the whole "future of gaming" angle you're pushing, and it's a perfectly valid reason to buy these new GPUs, but don't act like an apples-to-apples comparison of performance *right now* is the "wrong way of looking at it". How the card performs right now is an important metric for a lot of people, and will influence their decision. Especially when we're talking a potential price difference of $100+ (with sales on 1080 Ti's, and FE 2080 prices). Obviously there isn't a valid comparison for the 2080 Ti, but anyone who can drop $1300 on a GPU probably doesn't care too much about the price tag.

Flunk - Thursday, September 20, 2018

Nvidia is charging what they are because they have no competition at the top end. That's it, nothing else. They're taking in the cash today in preparation for having to price more competitively later.

just4U - Thursday, September 20, 2018

Flunk, we are talking Nvidia here.. typically speaking they don't lower prices to compete.. Sometimes they bump too high and too few bite.. but that's about it. The last time they lowered prices to compete was the 400 series but they'd just come off getting zonked by amd for basically 2 generations.. and when they went to the 500s series it was fairly competitive with amd.. (initially they were better but Amd continued to improve their 5000/6000 series.. til it was consistently beating Nvidia.. did they lower prices? NO.. not one bit..)

TNT cards were competitive and cheap.. but once Nvidia knocked off all other contenders (aside from AMD) and started in with their geforce line they have always carried premiums regardless competition or not.

eddman - Thursday, September 20, 2018

GTX 280, launched at $650 because they thought AMD couldn't do much. AMD came up with 4870. What happened? Nvidia cut the card's price to $500 a mere month after launch. So yes, they do cut prices to compete.

Dragonstongue - Thursday, September 20, 2018

13.6 and 18.6 (bln transistor estimated) die size of 454/754mm2 (2080/2080Ti) 12nm

7.2 and 12 (bln transistor estimated) die size of 314/471 (1070/1080-1080Ti/TitanX) 16nm

yes it is "expensive" no doubt about that, but, it is Nv we are talking about, there is a reason they are way over valued as they are, they produce as cheaply as possible and rack them up in price as much as they can even when their actual cards shipped are no where near the $$$ figure they report as it should.

also, if anything else, they always have and always will BS the numbers to make themselves ALWAYS appear "supreme" no matter if it is actual power used, TDP, API features, or transistor count etc etc etc.

as far as the ray tracing crap...if they used an open source style so that everyone can use the exact same ray tracing engine so they can be directly compared to see how good they are or not then it might be "worthy" but, it is Nv they are and continue to be "it has to be our way or you don't play" type approach...I remember way back when with PhysX (which Nv bought out Ageia to do it) when Radeons were able to use it (before Nv took the ability away) they ran circles around comparable Nv cards AND used less cpu and power to do it.

Nv does not want to get "caught" in their BS, so they find nefarious ways around everything, and when you have a massive amount of $$$$$$$$$$$$$$$$ floating everything you do, it is not hard for them to "buy silence" Intel has done so time and time again, Nv does so time and time again........blekk

DLSS or whatever the fk they want to call it, means jack shit when only specific cards will be able to use it instead of being a truly open source initiative where everyone/everything gets to show how good they are (or not) and also stand to gain benefit from others putting effort into making it as good as it possibly can be...there is a reason why Nv barely supports Vulkan, because they are not "in control" it is way too easy to "prove them wrong"..funny because Vulkan has ray tracing "built in"

IMO if they are as good as they claim they are, they would do everything in the light to show they are "the best" not find ways to "hide" what they are doing.....their days are numbered....hell their stock price just took a hit....good IMHO because they should not be over $200 anyways, $100, maybe, but they absolutely should not be valued above others whose financials and product shipments are magnitudes larger.

Spunjji - Friday, September 21, 2018

Remind me why consumers should give a rats-ass about die size, other than its visible effects of price and performance.

If you want to sell me a substantially larger, more expensive chip that performs a little better for a lot more money, a better reason is needed than "maybe it will make some games that aren't out yet really cool in a way that we refuse to give you any performance indications about".

Screw that.

Writer's Block - Monday, October 1, 2018

They look poor value; good performance, sure. But a 1080ti offers the same for much less.

They want me to buy promises! Seriously, promises are never worth the paper they are printed on - digital or the real stuff.

Writer's Block - Monday, October 1, 2018

Oh and, yeh agree.