The NVIDIA GeForce RTX 2080 Ti & RTX 2080 Founders Edition Review: Foundations For A Ray Traced Future

by Nate Oh on September 19, 2018 5:15 PM EST

Posted in: GPUs, Raytrace, GeForce, NVIDIA, DirectX Raytracing, Turing, GeForce RTX

Battlefield 1 (DX11)

Battlefield 1 returns from the 2017 benchmark suite with a bang, as DICE brought gamers the long-awaited AAA World War 1 shooter a little over a year ago. With detailed maps, environmental effects, and pacy combat, Battlefield 1 provides a generally well-optimized yet demanding graphics workload. The next Battlefield game from DICE, Battlefield V, completes the nostalgia circuit with a return to World War 2. More importantly for us, it is one of the flagship titles for GeForce RTX real time ray tracing, although that support isn't ready at this time.

The Ultra preset is used with no alterations. As these benchmarks are from single player mode, our rule of thumb with multiplayer performance still applies: multiplayer framerates generally dip to half our single player framerates. Battlefield 1 also supports HDR (HDR10, Dolby Vision).
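As a rough illustration of the rule of thumb above, the sketch below scales a single player framerate by one half to estimate multiplayer performance. The function name, the `factor` parameter, and the sample value are hypothetical and for illustration only; the sample is not a measured result from this review.

```python
def estimate_multiplayer_fps(single_player_fps, factor=0.5):
    """Estimate multiplayer FPS from a single player result.

    factor=0.5 encodes the rule of thumb that multiplayer framerates
    generally dip to about half of single player framerates.
    """
    if single_player_fps < 0:
        raise ValueError("framerate must be non-negative")
    return single_player_fps * factor


# Hypothetical input, not a number taken from the charts.
print(estimate_multiplayer_fps(120.0))
```

This is only a coarse estimate; actual multiplayer performance varies with map, player count, and CPU load.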

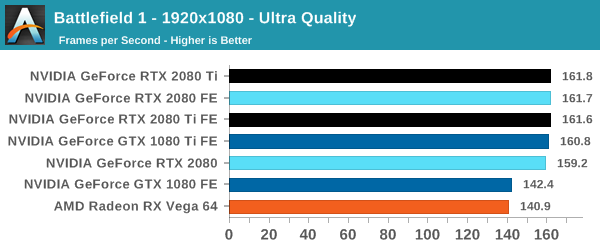

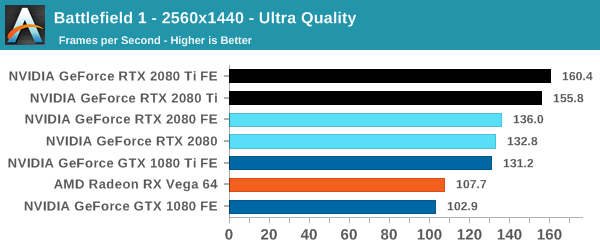

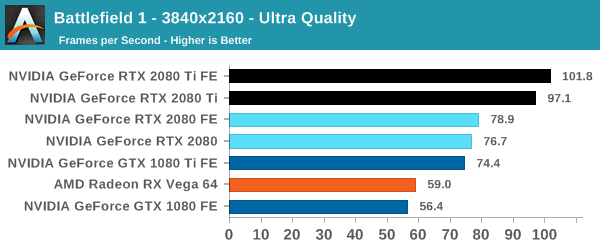

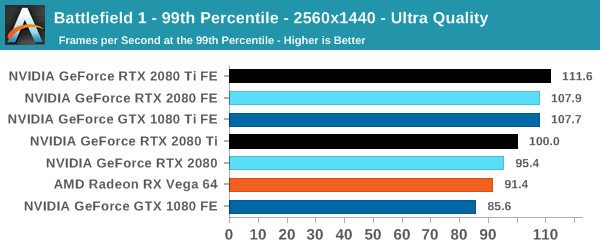

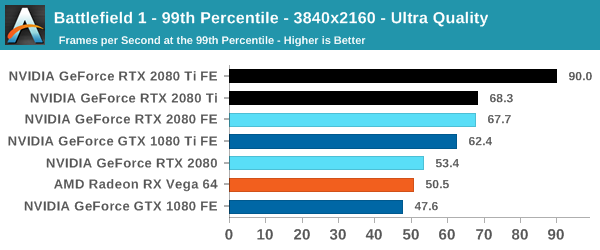

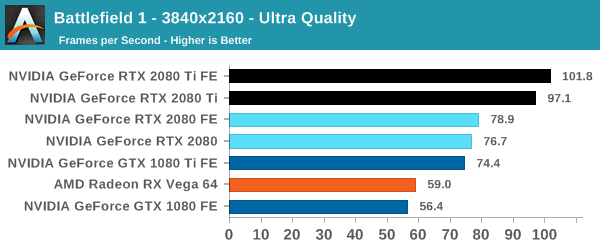

| Battlefield 1 | 1920x1080 | 2560x1440 | 3840x2160 |

| Average FPS |  |

|

|

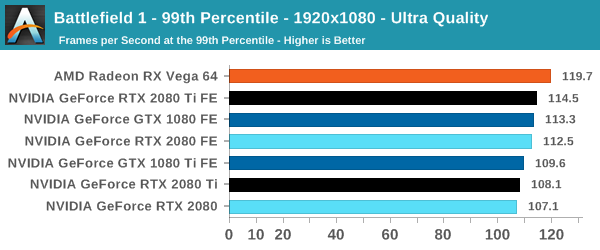

| 99th Percentile |  |

|

|

At this point, the RTX 2080 Ti is fast enough to touch the CPU bottleneck at 1080p, but it keeps its substantial lead at 4K. Nowadays, Battlefield 1 runs rather well on a gamut of cards and settings, and in optimized high-profile games like this one, the 2080 in particular needs to make sure that the veteran 1080 Ti doesn't edge too close. Here, the Founders Edition specs are enough to place the 2080 Founders Edition firmly ahead of the 1080 Ti Founders Edition.

The outlying low 99th percentile reading for the 2080 Ti persisted across repeated testing, and we're looking into it further.
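For readers unfamiliar with the two metrics charted here, the following is a minimal sketch of one common way to derive them from a log of per-frame render times. It is an assumption about the general approach, not a description of the exact tooling or method used for this review; the function names and the sample data are hypothetical.

```python
def average_fps(frame_times_ms):
    """Average FPS over a run: total frames divided by total seconds."""
    if not frame_times_ms:
        raise ValueError("need at least one frame time")
    total_seconds = sum(frame_times_ms) / 1000.0
    return len(frame_times_ms) / total_seconds


def percentile_99_fps(frame_times_ms):
    """FPS equivalent of the 99th-percentile frame time (nearest-rank method).

    99% of frames rendered at least this fast, so a few slow frames
    pull this number down much more than they pull down the average.
    """
    if not frame_times_ms:
        raise ValueError("need at least one frame time")
    ordered = sorted(frame_times_ms)
    # Nearest-rank percentile: rank = ceil(0.99 * n), computed with integers.
    rank = -(-99 * len(ordered) // 100)
    return 1000.0 / ordered[rank - 1]


# Hypothetical frame-time log: mostly 10 ms frames with two 40 ms stutters.
sample = [10.0] * 98 + [40.0] * 2
print(round(average_fps(sample), 2), percentile_99_fps(sample))
```

In this made-up sample the two stutters barely move the average but dominate the 99th percentile figure, which is why a card can post a strong average alongside an outlying low 99th percentile result.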

337 Comments


Dribble - Thursday, September 20, 2018

The DLSS basically gives you a resolution jump for free (e.g. 4k for 1440p performance) and is really easy to implement. That's going to take off fast and probably means even the 2070 will be faster than the 1080 Ti in games that support it.

Lolimaster - Saturday, September 22, 2018

No, not free, everyone can see the blurry mess the renamed blur effect is.

Inteli - Saturday, September 22, 2018

TIL that when you stop isolating variables in a benchmark, a lower-end card can be faster than a higher-end card.

tamalero - Wednesday, September 19, 2018

Die size is irrelevant to consumers. They see price vs performance, not how big the silicon is.

AMD was toasted for having hot slow chips many times, and so was nvidia. Big and hot means nothing if it doesn't perform as expected for the insane prices they're asking for.

Yojimbo - Wednesday, September 19, 2018

Die size is not irrelevant to consumers because increased die size means increased cost to manufacture. Increased cost to manufacture means a pressure for higher prices. The question is what you get in return for those higher prices.

People like what they know... what they are used to. If some new AA technique comes along and increases performance significantly but introduces visual artifacts it will be rejected as a step backwards. But if a new technology comes along that has a significant performance cost yet increases visual quality much more significantly than the aforementioned artifacts decrease it, people will also have a tendency to reject it. That is, until they become familiar with the technology... That's where we are with RTX. No one can become familiar with the technology when there are no games that make use of it. So trying to judge the value of the larger die sizes is an abstract thing. In a few months the situation will be different.

Personally, I think the architecture will be remembered as one of the biggest and most important in the entire history of gaming. There is so much new technology in it that some of it barely anyone is saying much about (where have you heard about texture space shading, for example?). Several of these technologies will have their greatest benefits with VR, and if VR had taken off people would be marveling about this architecture immediately. But I think that VR will eventually take off, and I think several of these technologies will become the standard way of doing things for the next several years. They are new and complicated for developers, though. Only a few developers are prepared to take advantage of the stuff today. It's going to be some time before we really can put the architecture into its proper historical perspective.

From the point of view of a purchase today, though, it's a bit of an unknown. If you buy a card now and plan to keep it for 4 years, I think you'd be better off getting a 20 series than a 10 series. If you buy it and keep it for 2 years, then it's a bit less clear, but we'll have a better idea of the answer to that question in 6 months, I think.

I do think, though, that if an architecture with this much new stuff were introduced 20 years ago everybody, including games enthusiast sites like Anandtech, would be going gaga over it. The industry was moving faster then and people were more optimistic. Also the sites didn't try to be so demure. Hmm, gaga as the opposite of demure. Maybe that's why she's called Lady Gaga.

Santoval - Wednesday, September 19, 2018

I agree that this might be the most game-changing graphics tech of the last couple of decades, and that the future belongs to ray-tracing, but I also think that precisely due to the general uncertainty and the very high prices Nvidia might suffer one of their biggest sales slumps this generation, if not *the* biggest. They did not handle the launch well: it is absurd to release a new series with zero ray-traced, DLSS supporting or mesh shaded games at launch.

Their extensive NDAs, lack of information and ban on benchmarks between the Gamescom pre-launch and the actual launch, despite going live with (blind faith based) preorders during that window, was also controversial and highly suspicious. It appears that Nvidia gave graphics cards to game developers very late to avoid leaks, but that resulted in having no RTX supporting games at launch. They thought they could not have it both ways apparently, but choosing that over having RTX supporting games at launch was a very risky gamble.

Since their sales will almost certainly slump, they should release 7nm based graphics cards (either a true 30xx series or Turing at 7nm, I guess the latter) much sooner, probably in around 6 months. They might have expected a sales slump, which is why they released 2080Ti now. I suppose they will try to avoid it with aggressive marketing and somewhat lower prices lately, but it is not certain they'll succeed.

eddman - Thursday, September 20, 2018

Would you've still defended this if it was priced at $1500? How about $2000? Do you always ignore price when new tech is involved?

The cards, themselves, aren't bad. They are actually very good. It's their pricing.

These cards, specifically 2080 Ti, are overpriced compared to their direct predecessors. Ray tracing, DLSS, etc. etc. they still do not justify such prices for such FPS gains in regular rasterized games.

A 2080 Ti might be an ok purchase for $850-900, but certainly not $1200+. Even 8800 GTX with its new cuda cores and new generation of lighting tech launched at the same MSRP as 7800 GTX.

These cards are surely more expensive to make, but there is no doubt that the biggest factor for these price jumps is that nvidia is basically competing with themselves. Why price them lower when they can easily sell truckloads of pascal cards at their usual prices until the inventory is gone.

Andrew LB - Thursday, September 20, 2018

You, like so many others don't get it. nVidia has re-worked their product lines. Didn't you notice how the Ti came out at the same time as the 2080? You might also notice that Titan is now called Titan V (volta) and not GTX Titan. Titan is now in its own family of non-gaming cards and that is reflected in the driver section on their site. They now have titan specific drivers.

Here, watch this. Jay explains it fairly well.

https://youtu.be/5XRWATUDS7o?t=6m2s

eddman - Thursday, September 20, 2018

You took an opinion and decided it's a fact. It's not. That guy is not the authority on graphics cards.

There is no official word that titan is now 2080 Ti. Nvidia named that card 2080 Ti, it has a 102 named chip. Nvidia themselves constantly compare it to 1080 Ti, which also has a 102 named chip, therefore it's the successor to 1080 Ti and it's very normal to expect similar pricing.

Don't worry, there will be a Titan turing, considering that 2080 Ti does not even use the fully enabled chip.

It's really baffling to see people, paying customers, defending a $1200 price tag. It is as if you like to be charged more.

eddman - Thursday, September 20, 2018

$1000, but it's still too high, and you cannot find any card at that price anyway.