The NVIDIA GeForce RTX 2080 Ti & RTX 2080 Founders Edition Review: Foundations For A Ray Traced Future

by Nate Oh on September 19, 2018 5:15 PM EST

Posted in: GPUs, Raytrace, GeForce, NVIDIA, DirectX Raytracing, Turing, GeForce RTX

Power, Temperature, and Noise

With a large chip, more transistors, and more frames, questions always pivot to the efficiency of the card: how it fares on overall power consumption, the thermal limits of the default coolers, and the noise of the fans under load. Users buying these cards are expected to push some pixels, which will have knock-on effects inside a case. For our testing, we use a case for the best real-world results in these metrics.

Power

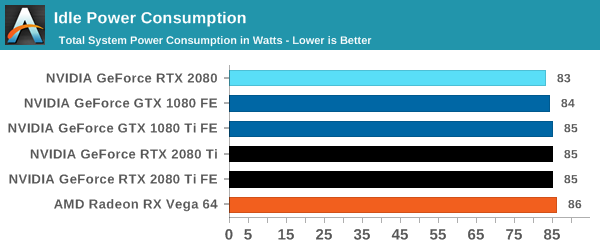

All of our graphics cards pivot around the 83-86W level when idle, though it is noticeable that they are in sets: the 2080 is below the 1080, the 2080 Ti sits above the 1080 Ti, and the Vega 64 consumes the most.

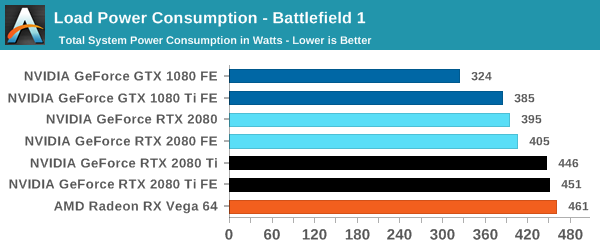

When we crank up a real-world title, all the RTX 20-series cards are pushing more power. The 2080 consumes 10W over the previous generation flagship, the 1080 Ti, and the new 2080 Ti flagship goes for another 50W system power beyond this. Still not as much as the Vega 64, however.

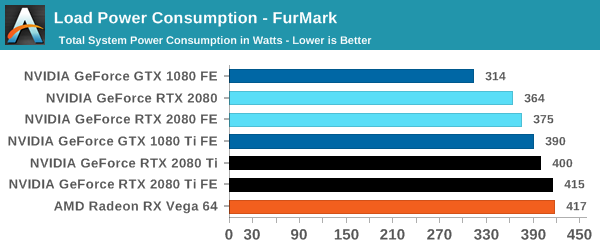

For a synthetic like Furmark, the RTX 2080 results show that it consumes less than the GTX 1080 Ti, although the GTX 1080 draws some 50W less. The margin between the RTX 2080 FE and RTX 2080 Ti FE is some 40W, which is indicative of the official TDP differences. At the top end, the RTX 2080 Ti FE and RX Vega 64 are consuming equal power, but the RTX 2080 Ti FE is pushing through more work.

For power, the overall differences are quite clear: the RTX 2080 Ti is a step up from the RTX 2080, while the RTX 2080 is similar to the previous generation 1080/1080 Ti.

Temperature

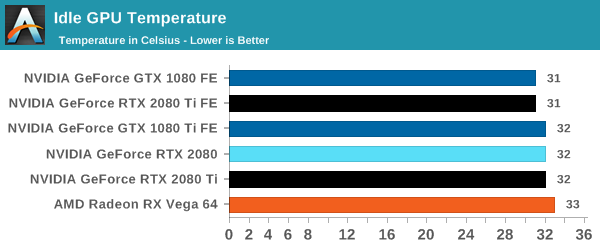

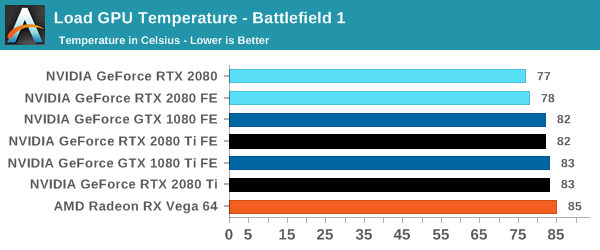

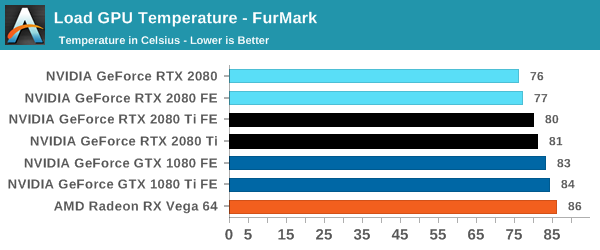

Straight off the bat, moving from the blower cooler to the dual fan coolers, we see that the RTX 2080 holds its temperature a lot better than the previous generation GTX 1080 and GTX 1080 Ti.

In every load scenario, the RTX 2080 is several degrees cooler than both the previous generation and the RTX 2080 Ti. The 2080 Ti fares well in Furmark, coming in at a lower temperature than the 10-series, but trades blows in Battlefield. This is a win for the dual fan cooler over the blower.

Noise

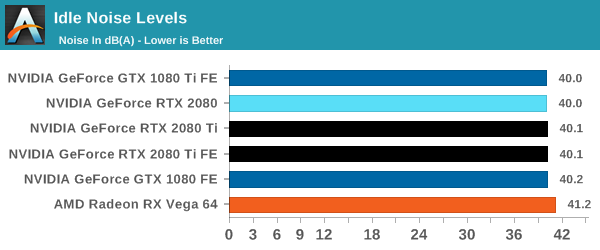

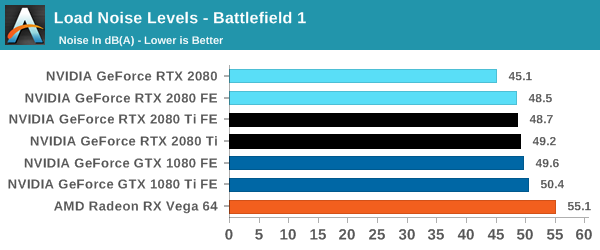

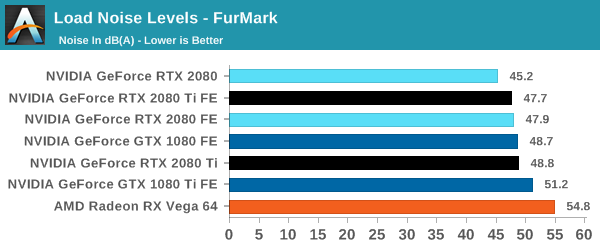

As with temperature, moving to two larger fans rather than a single blower means that the new RTX cards can be quieter than the previous generation: the RTX 2080 wins here, showing that it can be 3-5 dB(A) lower than the 10-series and perform similarly. The added power needed for the RTX 2080 Ti means that it is still competing against the GTX 1080, but it always beats the GTX 1080 Ti by comparison.
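To put a 3-5 dB(A) gap in perspective, recall that the decibel scale is logarithmic. The short Python sketch below converts a level difference into the corresponding sound intensity and sound pressure ratios using the standard decibel definitions. The function names are ours for illustration, and the 3 and 5 dB inputs are simply the endpoints of the range quoted above, not individual measurements from our charts.

```python
def intensity_ratio(delta_db: float) -> float:
    """Sound intensity ratio for a level difference given in decibels."""
    return 10 ** (delta_db / 10)


def pressure_ratio(delta_db: float) -> float:
    """Sound pressure ratio for a level difference given in decibels."""
    return 10 ** (delta_db / 20)


if __name__ == "__main__":
    # Endpoints of the 3-5 dB(A) range mentioned in the text (illustrative inputs).
    for delta in (3, 5):
        print(
            f"{delta} dB: intensity x{intensity_ratio(delta):.2f}, "
            f"pressure x{pressure_ratio(delta):.2f}"
        )
```

A 3 dB reduction corresponds to roughly half the sound intensity. Perceived loudness does not scale the same way, so this is a physical comparison rather than a statement about how much quieter a card will seem to the ear.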

337 Comments


AnnoyedGrunt - Friday, September 21, 2018

I think it was actually much less, judging by comments made in one of the reviews I linked. Maybe around $350 or so, which was very expensive at the time. It is true that it was a revolutionary card, but at the same time it was greeted with a lukewarm reception from the gaming community. Much like the 20XX series. I doubt that the 20XX will seem as revolutionary in hindsight as the GeForce256 did, but the initial reception does seem similar between the two. Will be interesting to see what the next year brings to the table.

-AG

eddman - Friday, September 21, 2018

Wow, that's just $525 now. I'm interested in old card prices because some people claim they have always been super expensive. It seems they have a selective memory. I'm yet to find a card more expensive than 2080 Ti from that time period.

I'm not surprised that people still didn't buy many 256 cards. The previous cards were cheaper and performed close enough for the time.

abufrejoval - Thursday, September 20, 2018

I am pretty sure I'll get a 2080ti, simply because nothing else will run INT4 or INT8 based inference with similar performance and ease of availability and tools support. Sure, when you are BAIDU or Facebook, you can buy even faster inference hardware or if you are Google you can build your own. But if you are not, I don't know where you'll get something that comes close.

As far as gaming is concerned, my 1080ti falls short on 4k with ARK, which is noticeable at 43". If the 2080ti can get me through the critical minimum of 30FPS, it will have been worth it.

As far as ray tracing is concerned, I am less concerned about its support in games: Photo realism isn't an absolute necessity for game immersion.

But I'd love to see hybrid render support in software like Blender: The ability to pimp up the quality for video content creation and replace CPU based render farms with something that is visually "awesome enough" points towards the real "game changing" capacity of this generation.

It pushes three distinct envelopes, raster, compute and render: If you only care about one, the value may not be there. In my case, I like the ability to explore all three, while getting a 2080ti for me allows me to push down a 1070 to one of my kids still running an R290X: Christmas for both of us!

mapesdhs - Thursday, September 27, 2018

In the end though that's kinda the point, these are not gaming cards anymore and haven't been for some time. These are side spins from compute, where the real money & growth lie. We don't *need* raytracing for gaming, that glosses over so many other far more relevant issues about what makes for a good game.

Pyrostemplar - Thursday, September 20, 2018

High performance and (more than) matching price. nVidia seemingly put the card classification down one notch (x80 => x70; Ti => x80; Titan => Ti) while keeping the prices and overclocked them from day one so it looks like solid progress if one disregards the price.

I think it will be a short lived (1 year or so) generation. A pricey stop gap with a few useless new features (because when devs catch up and actually deploy DXR enabled games, these cards will have been replaced by something faster).

ballsystemlord - Thursday, September 20, 2018

Spelling/grammar errors (Only 2!):

Wrong word:

"All-in-all, NVIDIA is keeping the Founders Edition premium, now increased to $100 to $200 over the baseline"

Should be:

"All-in-all, NVIDIA is keeping the Founders Edition premium, now increased from $100 to $200 over the baseline"

Missing "s":

"Of course, NVIDIA maintain that the cards will provide expected top-tier"

Should be:

"Of course, NVIDIA maintains that the cards will provide expected top-tier"

Ryan Smith - Thursday, September 20, 2018

Thanks!

ballsystemlord - Thursday, September 20, 2018

Nate! Can you add DP folding @ home benchmark numbers? There were none in the Vega review and only SP in this Nvidia review.

SanX - Thursday, September 20, 2018

Author thinks that all gamers buy only fastest cards? Maybe. But I doubt all of them buy the new generation card every year. In short, where are comparisons to 980/980Ti and even 780/780Ti? Owners of those cards are more interested to upgrade.

milkod2001 - Friday, September 21, 2018

See from top menu on right, there is a bench where you can see results. I presume they will add data to the huge database soon. And yes, people are talking about high end GPU but most are spending $400 max. for it.