The NVIDIA Turing GPU Architecture Deep Dive: Prelude to GeForce RTX

by Nate Oh on September 14, 2018 12:30 PM EST

Turing Tensor Cores: Leveraging Deep Learning Inference for Gaming

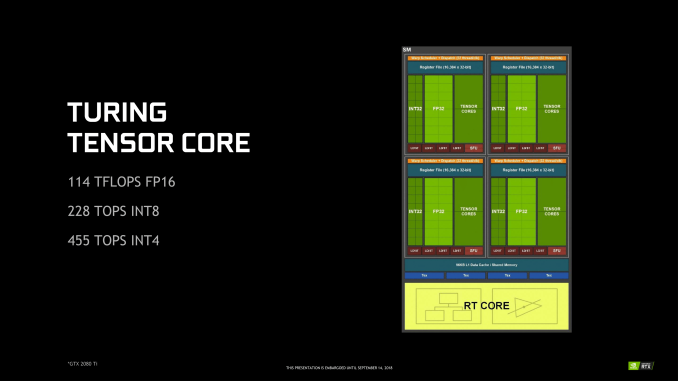

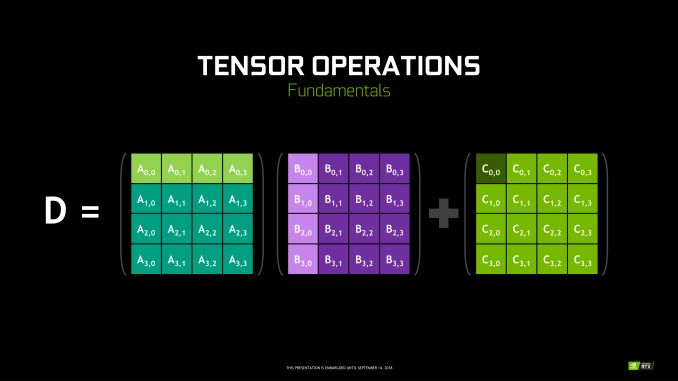

Though RT Cores are Turing’s poster child feature, the tensor cores were very much Volta’s. In Turing, they’ve been updated, reflecting their positioning as a gaming/consumer feature via inferencing. The main changes for the 2nd generation tensor cores are INT8 and INT4 precision modes for inferencing, enabled by new hardware data paths, which perform dot products and accumulate into an INT32 result. INT8 mode operates at double the FP16 rate, or 2048 integer operations per clock per SM. INT4 mode operates at quadruple the FP16 rate, or 4096 integer ops per clock per SM.
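To make the arithmetic concrete, here is a minimal Python sketch of what an INT8 dot product with INT32 accumulation computes, along with the per-clock rates quoted above. It is an emulation for illustration only and says nothing about how the hardware is wired. The function names are hypothetical, the FP16 baseline of 1024 ops per clock is inferred from the INT8 figure being double it, and the assumption that throughput scales with 16 divided by the operand width is just a restatement of the 2x and 4x figures.

```python
# Illustrative emulation only; names and structure are assumptions, not NVIDIA's API.

INT8_MIN, INT8_MAX = -128, 127
INT32_MIN, INT32_MAX = -2**31, 2**31 - 1

# Inferred from the article: INT8 at 2048 ops/clock is stated to be double the FP16 rate.
FP16_OPS_PER_CLOCK = 1024


def ops_per_clock(bits: int) -> int:
    """Ops per clock for an operand width, assuming the rate scales as 16 / bits."""
    return FP16_OPS_PER_CLOCK * 16 // bits


def int8_dot_accumulate(a, b, acc=0):
    """Dot product of two INT8 vectors, added to an INT32 accumulator."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    for x, y in zip(a, b):
        if not (INT8_MIN <= x <= INT8_MAX and INT8_MIN <= y <= INT8_MAX):
            raise ValueError("operands must fit in INT8")
        # A single product can reach 16384, far outside the INT8 range,
        # which is why the running sum is kept in a wider type.
        acc += x * y
    if not INT32_MIN <= acc <= INT32_MAX:
        raise OverflowError("accumulator does not fit in INT32")
    return acc


if __name__ == "__main__":
    print(ops_per_clock(8), ops_per_clock(4))
    print(int8_dot_accumulate([127, -128, 3], [127, -128, -2]))
```

The wide accumulator is the key design point: just two worst-case INT8 products already sum to more than a 16-bit signed integer can hold.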

Naturally, only some networks tolerate these lower precisions and the quantization they require, meaning the storage and calculation of data in a more compact format. INT4 is firmly in the research area, whereas INT8’s practical applicability is much more developed. Regardless, the 2nd generation tensor cores still support FP16, now including a pure FP16 mode without an FP32 accumulator. While CUDA 10 is not yet out, the enhanced WMMA operations should shed light on any other differences, such as additional accepted matrix sizes for operands.
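For readers unfamiliar with quantization, the following is a minimal sketch of one common scheme, symmetric per-tensor INT8 quantization, written in plain Python. It is not taken from NVIDIA's tooling; the function names and the choice of a single scale factor per tensor are assumptions made for illustration.

```python
# Illustrative sketch of symmetric INT8 quantization; not NVIDIA's method.

def quantize_int8(values):
    """Map floats to integers in [-127, 127] using one shared scale factor."""
    max_abs = max((abs(v) for v in values), default=0.0)
    # Fall back to a scale of 1.0 when every value is zero to avoid dividing by zero.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate floats from the quantized integers."""
    return [q * scale for q in quantized]


if __name__ == "__main__":
    weights = [1.0, -0.5, 0.25, 0.0]
    q, scale = quantize_int8(weights)
    restored = dequantize(q, scale)
    # The per-value rounding error is bounded by roughly half the scale factor.
    print(q, scale, restored)
```

Whether a network tolerates this depends on how much that rounding error, about half the scale factor per value, disturbs its outputs, which is why the text above notes that only some networks are suitable.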

Inasmuch as deep learning is involved, NVIDIA is pushing what was a purely compute/professional feature into consumer territory, and we will go over the full picture in a later section. For Turing, the tensor cores can accelerate the features under the NGX umbrella, which includes DLSS. They can also accelerate certain AI-based denoisers that clean up and correct real-time raytraced rendering, though most developers seem to be opting for non-tensor core accelerated denoisers at the moment.

111 Comments


StormyParis - Friday, September 14, 2018 - link

Fascinating subject and excellent treatment. I feel informed and intelligent, so thank you.

Gc - Friday, September 14, 2018 - link

Nice introductory article. I wonder if the ray tracing hardware might have other uses, such as path finding in space, or collision detection in explosions.

The copy editing was a let down.

Copy editor: please review the "amount vs. number" categorical distinction in English grammar. Parts of this article, that incorrectly use "amount", such as "amount of rays" instead of "number of rays", are comprehensible but jarring to read, in the way that a computer translation can be comprehensible but annoying to read.

(yes: "amount of noise". no: "amount of rays, usually 1 or 2 per pixel". yes: "number of rays, usually 1 or 2 per pixel".) (Recall that "number" is for countable items, that can be singular or plural, such as 1 ray or 2 rays. "Amount" is for an unspecified quantity such as liquid or money, "amount of water in the tank" or "amount of money in the bank". But if pluralizable units are specified, then those units are countable, so "number of liters in the tank", or "number of dollars in the bank". [In this article, "amount of noise" does not refer to an event as in 1 noise, 2 noises, but rather to an unspecified quantity or ratio.] A web search for "amount vs. number" will turn up other explanations.)

Gc - Friday, September 14, 2018 - link

(Hope you're all staying dry if you're in Florence's storm path.)

edzieba - Saturday, September 15, 2018 - link

"I wonder if the ray tracing hardware might have other uses, such as path finding in space, or collision detection in explosions."

Yes, these were called out (as well as gun hitscan and AI direct visibility checks) in their developer focused GDC presentation.

edzieba - Saturday, September 15, 2018 - link

One thing that might be worth highlighting (or exploring further) is that raytraced reflections and lighting/shadowing are necessary for VR, where screen-space reflections produce very obviously incorrect results.

Achaios - Saturday, September 15, 2018 - link

This is epic. It should be taught as a special lesson in Marketing classes. NVIDIA is selling fanboys technology for which there is presently no practical use, and the cards are already sold out. Might as well give NVIDIA license to print money.

iwod - Saturday, September 15, 2018 - link

Aren't we fast running into a Memory Bandwidth bottleneck?

Assuming we get 7nm next year at 8192 CUDA Cores, that will need at least 80% more bandwidth, or 1TB/s. Neither 512bit memory nor HBM2 could offer that.

HStewart - Saturday, September 15, 2018 - link

I'm wondering when professional rendering packages will support RTX - I personally have Lightwave 3D 2018 and because of Newtek's excellent upgrade process - I could see supporting it in future. I could see this technology do wonders for Movie and Game creations - reducing the dependency on CPU cores.

YaleZhang - Saturday, September 15, 2018 - link

Increased power use is disappointing. Is the 225W TDP for 2080 the power used or the heat dissipated? If it's power used, then that would include the 27W power used by VirtualLink. So then the real power usage would be 198 W.

willis936 - Sunday, September 16, 2018 - link

I've been in signal integrity for five years. I write automation scripts for half million dollar oscilloscopes. I love it. It's my jam. Why on god's green earth does nvidia think their audience cares about eye diagrams? They mean literally nothing to the target audience. They're not talking to system integrators or chip manufacturers. Even if they were, a single eye diagram with an eye width measurement means next to nothing beyond demonstrating that they have an image of what a signal at a given baud rate should look like (it's unclear if it's simulated or taken from one of their test monkeys). If they really wanted to blow us away they could say something like they've verified 97% confidence that their memory interface/channel BER <= 1E-15 when the spec commands BER <= 1E-12 or something. It's just a jargon image to show off how much they must really know their stuff. It just strikes me as tacky.