The NVIDIA Turing GPU Architecture Deep Dive: Prelude to GeForce RTX

by Nate Oh on September 14, 2018 12:30 PM EST

Turing Tensor Cores: Leveraging Deep Learning Inference for Gaming

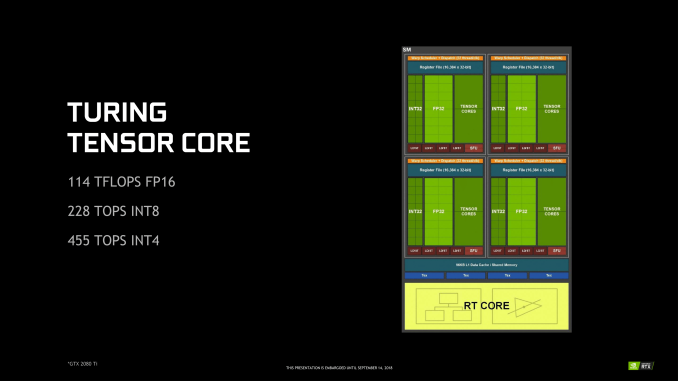

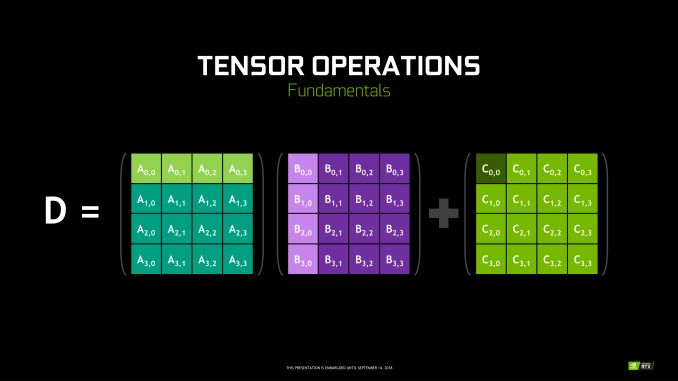

Though RT Cores are Turing’s poster child feature, the tensor cores were very much Volta’s. In Turing, they’ve been updated to reflect their positioning as a gaming/consumer feature via inferencing. The main changes for the 2nd generation tensor cores are INT8 and INT4 precision modes for inferencing. These modes are enabled by new hardware data paths and perform dot products that accumulate into an INT32 result. INT8 mode operates at double the FP16 rate, or 2048 integer operations per clock. INT4 mode operates at quadruple the FP16 rate, or 4096 integer ops per clock.
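To make the INT8 path concrete, here is a minimal sketch in plain Python of a dot product over INT8 operands that accumulates into an INT32 value, along with the rate arithmetic implied by the figures above. The function and constant names are illustrative assumptions, not NVIDIA APIs, and the code only emulates the numeric behavior rather than how the hardware is built.

```python
# Sketch only: emulates the numerics of an INT8 dot product with an INT32
# accumulator. All names here are hypothetical, chosen for illustration.
INT8_MIN, INT8_MAX = -128, 127
INT32_MIN, INT32_MAX = -2**31, 2**31 - 1


def int8_dot_int32(a, b, acc=0):
    """Multiply INT8 elements pairwise and add the products into an INT32 accumulator."""
    if len(a) != len(b):
        raise ValueError("operands must have equal length")
    for x, y in zip(a, b):
        if not (INT8_MIN <= x <= INT8_MAX and INT8_MIN <= y <= INT8_MAX):
            raise ValueError("operand outside INT8 range")
        acc += x * y
        if not (INT32_MIN <= acc <= INT32_MAX):
            raise OverflowError("accumulator outside INT32 range")
    return acc


def worst_case_terms():
    """Number of worst-case INT8 products (-128 * -128) that fit in INT32."""
    return INT32_MAX // (INT8_MIN * INT8_MIN)


# Rates quoted in the text, in integer operations per clock.
INT8_OPS_PER_CLOCK = 2048
INT4_OPS_PER_CLOCK = 4096
# INT8 is stated to run at double the FP16 rate, so the FP16 rate follows.
FP16_OPS_PER_CLOCK = INT8_OPS_PER_CLOCK // 2
```

A single INT8 product can be as large as 16384, which already exceeds the INT8 range, so a wider accumulator is needed to sum many products without overflow.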

Naturally, only some networks tolerate these lower precisions and any necessary quantization, that is, storing and computing on data in a more compact format. INT4 remains firmly in research territory, whereas INT8’s practical applicability is much more developed. Regardless, the 2nd generation tensor cores retain FP16 support, which now includes a pure FP16 mode without an FP32 accumulator. While CUDA 10 is not yet out, its enhanced WMMA operations should shed light on any other differences, such as additional accepted matrix sizes for operands.
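As a rough illustration of what quantization involves, the sketch below maps floating point values to INT8 using a single shared scale and then maps them back. It assumes a simple symmetric per-tensor scheme picked for clarity; the source does not say which scheme NVIDIA or any particular framework uses, and the function names are hypothetical.

```python
# Sketch only: symmetric per-tensor INT8 quantization, an assumed scheme
# chosen for illustration rather than any specific framework's method.
def quantize_int8(values):
    """Map floats to integers in [-127, 127] using one shared scale factor."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the INT8 representation."""
    return [q * scale for q in quantized]


weights = [1.27, -0.64, 0.0, 0.30]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
# Rounding error per element is bounded by half a quantization step.
max_error = max(abs(w - r) for w, r in zip(weights, restored))
```

Whether a network tolerates this depends on how much that rounding error affects its outputs, which is why only some networks are suited to INT8, and fewer still to INT4.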

Inasmuch as deep learning is involved, NVIDIA is pushing what was a purely compute/professional feature into consumer territory, and we will go over the full picture in a later section. For Turing, the tensor cores can accelerate the features under the NGX umbrella, which includes DLSS. They can also accelerate certain AI-based denoisers that clean up and correct real-time raytraced rendering, though most developers seem to be opting for non-tensor core accelerated denoisers at the moment.

111 Comments

bernstein - Friday, September 14, 2018 - link

a lot of this will also depend on what kind of silicon ends up in the next playstation & xbox generation...

Spunjji - Monday, September 17, 2018 - link

Isn't that already pretty much pinned to AMD? AFAIK Navi is pretty much the consumer interpretation of AMD's PS5 design. Microsoft really aren't likely to jump ship because of their history with Nvidia.

Yojimbo - Saturday, September 15, 2018 - link

I think Turing's price/perf ratio will be better than Pascal's. It's the increase in price/performance that is not spectacular. But since AMD isn't releasing anything at all, that doesn't reflect negatively on Turing in any way.

I don't know why people are throwing around this "50% of transistors" idea. Where is this information coming from?

Of course Turing will be crushed by a next generation of 7 nm GPUs that is architected equally as well, as such GPUs will have both additional time for architectural improvements and the advantage of a full node shrink. That will be true for both hybrid and raster-only rendering. And it would have been true for raster rendering no matter if RT cores were included or not.

It sounds like NVIDIA is providing the DLSS service to developers for free. I'd expect DLSS usage to be widespread for any developers interested in making games geared towards the 4K market.

I am guessing that Microsoft, at least, will want a raytracing-capable GPU in its next console. I doubt they would spend the effort to make the DXR API extension and then leave the technology out of their console, especially considering the convergence of console and PC gaming they seem to be pushing for.

jwcalla - Friday, September 14, 2018 - link

This is probably my first disinterested nvidia launch. Tensor cores and ray tracing don't really get me excited. I can't imagine half a die used for that stuff. Do the graphics really look that much better? Does hyper-realism even matter?

Dizoja86 - Friday, September 14, 2018 - link

It doesn't even have to be hyper-realism. Just the basic limitations you can see with rasterized reflections in the Battlefield V tech demo paints a strong case for the use of ray-tracing. Being able to see reflections of objects that aren't directly on the screen in front of you seems like an important thing to move towards.

HollyDOL - Saturday, September 15, 2018 - link

classic rasterized shading and reflection is basically one big cheat on human eye. Imagine something along mp3 128kbit being 'cd quality'. Trying to get that cheat closer and closer to 'reality' is more and more a challenge and resource eater. Ray-Tracing _should_ be able to quite simplify the issue on development front in future. And that's not considering possible visuals quality raise.

Tamz_msc - Saturday, September 15, 2018 - link

Lol, players are complaining that in BF V it is hard to distinguish between friendlies and enemies. Adding RTX reflections to the mix would just make it worse.

jwcalla - Saturday, September 15, 2018 - link

Watching the Battlefield tech demo (and the others), I didn't think it added a lot of value. When you analyze it side-by-side with a magnifying glass, yes, you can see some differences. I just don't think they're that dramatic and in the heat of game play you're not even going to recognize it. The improvements to global illumination look good though.

I just feel like the industry has lost a lot of focus.

RSAUser - Saturday, September 15, 2018 - link

In a game like BF V, you're not just going to stand there looking at reflections, and it's going to hammer your frame rate/force you to go to 1080p or lower.

I'd rather turn it off and have a high fps on 4k, tyvm, same as near everyone turned off hairworks for witcher 3, though with that it was at least single player so you'd sacrifice performance for visuals.

Dizoja86 - Friday, September 14, 2018 - link

Sometimes I get frustrated with Anandtech, but being able to have these fantastic articles when new technology is released is why I keep coming back.