The NVIDIA Turing GPU Architecture Deep Dive: Prelude to GeForce RTX

by Nate Oh on September 14, 2018 12:30 PM EST

Turing RT Cores: Hybrid Rendering and Real Time Raytracing

As it presents itself in Turing, real-time raytracing doesn’t completely replace traditional rasterization-based rendering; instead, it exists as part of Turing’s ‘hybrid rendering’ model. In other words, rasterization is used for most of the rendering, while raytracing techniques are used for select graphical effects. Meanwhile, ‘real-time’ performance is generally achieved with a very small number of rays per pixel (e.g. 1 or 2) and a very large amount of denoising.
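To make the ‘few rays, lots of denoising’ trade-off concrete, here is a minimal Python sketch. It assumes a hypothetical per-pixel effect (something like ambient-occlusion visibility) whose true value is 0.5 everywhere; each pixel takes a single random ray sample that is either occluded or not, and a plain box filter stands in for the denoiser. The function names are illustrative, and real denoisers (edge-aware, temporal, or AI-based) are far more sophisticated than a box filter.

```python
import random

def render_1spp(width, height, visibility=0.5, seed=0):
    """One random 'ray' per pixel: 1.0 if unoccluded, 0.0 if occluded (hypothetical effect)."""
    rng = random.Random(seed)
    return [[1.0 if rng.random() < visibility else 0.0 for _ in range(width)]
            for _ in range(height)]

def box_denoise(img, radius=2):
    """Average each pixel with its neighbours in a (2r+1)x(2r+1) window, clamped at the edges."""
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            total, count = 0.0, 0
            for dy in range(-radius, radius + 1):
                for dx in range(-radius, radius + 1):
                    yy, xx = y + dy, x + dx
                    if 0 <= yy < h and 0 <= xx < w:
                        total += img[yy][xx]
                        count += 1
            out[y][x] = total / count
    return out

def mse(img, target):
    """Mean squared error against a constant ground-truth value."""
    values = [v for row in img for v in row]
    return sum((v - target) ** 2 for v in values) / len(values)

noisy = render_1spp(64, 64)
clean = box_denoise(noisy)
print(f"1 spp MSE: {mse(noisy, 0.5):.3f}, denoised MSE: {mse(clean, 0.5):.3f}")
```

With one binary sample per pixel, every pixel is off by exactly 0.5, so the raw error is 0.25; averaging a 5x5 window cuts it by roughly the number of pixels averaged. On a real image, the same filter would also smear geometric edges, which is why practical denoisers have to be much smarter about what they average.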

The specific implementation is ultimately in the hands of developers, and NVIDIA naturally has its own raytracing development ecosystem, which we’ll go over in a later section. But because of the computational intensity, it simply isn’t possible to use real-time raytracing for the complete rendering workload, and higher resolutions, more complex scenes, and additional graphical effects only compound the difficulty. So for performance reasons, developers will be using raytracing in a deliberate and targeted manner for specific effects, such as global illumination, ambient occlusion, realistic shadows, reflections, and refractions. Likewise, raytracing may be limited to specific objects in a scene, and rasterization and z-buffering may replace primary ray casting, with only secondary rays being raytraced. Thus, the goal for developers is to use raytracing for the most noticeable and realistic effects that rasterization cannot accomplish.
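A minimal sketch of that hybrid split, assuming a hypothetical G-buffer that the rasterizer has already filled with one world-space position per pixel. Primary visibility therefore comes from rasterization and z-buffering; only the secondary shadow ray from each pixel toward a point light is traced. To keep it short, the shadow rays are tested against a brute-force list of sphere occluders rather than a BVH, and every name here (`Sphere`, `shade_hybrid`, and so on) is illustrative.

```python
import math
from dataclasses import dataclass

@dataclass
class Sphere:
    center: tuple
    radius: float

def sub(a, b):
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])

def dot(a, b):
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]

def ray_hits_sphere(origin, direction, sphere, t_max):
    """True if origin + t * direction hits the sphere for some t in (epsilon, t_max)."""
    oc = sub(origin, sphere.center)
    a = dot(direction, direction)
    b = 2.0 * dot(oc, direction)
    c = dot(oc, oc) - sphere.radius ** 2
    disc = b * b - 4.0 * a * c
    if disc < 0.0:
        return False
    root = math.sqrt(disc)
    return any(1e-4 < t < t_max for t in ((-b - root) / (2 * a), (-b + root) / (2 * a)))

def shade_hybrid(gbuffer_positions, light_pos, occluders):
    """gbuffer_positions: per-pixel surface points from rasterization (primary visibility).
    Only the secondary (shadow) ray from each point to the light is ray traced."""
    shadow_mask = []
    for pos in gbuffer_positions:
        to_light = sub(light_pos, pos)  # unnormalized, so t in (0, 1) spans point -> light
        lit = not any(ray_hits_sphere(pos, to_light, s, 1.0) for s in occluders)
        shadow_mask.append(1.0 if lit else 0.0)
    return shadow_mask

print(shade_hybrid([(0, 0, 0), (5, 0, 0)], (0, 10, 0), [Sphere((0, 5, 0), 1.0)]))
```

Here the first pixel sits directly under the sphere and comes back shadowed (0.0), while the second has a clear line to the light (1.0).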

Essentially, this style of ‘hybrid rendering’ involves a lot less raytracing than one might imagine from the marketing material. Perhaps a blunt way to generalize might be: real time raytracing in Turing typically means only certain objects are rendered with certain raytraced graphical effects, using a minimal number of rays per pixel and/or raytracing only secondary rays, and relying on a lot of denoising; anything more would affect performance too much. Interestingly, explaining all the caveats this way both undersells and oversells the technology, and therein lies the paradox. Even in this very circumscribed form, GPU performance is significantly affected, but image quality is enhanced with a realism that cannot be provided by a higher resolution or better anti-aliasing. Yet ‘real time’ interactivity in gaming essentially means a minimum of 30 to 45 fps, and lowering the render resolution to achieve those framerates hurts image quality. Complicating matters, real time raytracing is indeed considered the ‘holy grail’ of computer graphics, so managing the feat at all is a big deal, but there are equally valid professional and consumer perspectives on how that translates into a compelling product.
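Some back-of-the-envelope arithmetic shows why even 1 or 2 rays per pixel at 30 to 45 fps is demanding. The sketch below simply multiplies out resolution, rays per pixel, and frame rate, and converts frame rate into a per-frame time budget. It counts only the rays themselves; each ray also requires many BVH node and triangle tests, so it understates the real work.

```python
def rays_per_second(width, height, rays_per_pixel, fps):
    """Rays that must be traced each second for a given resolution, ray count and frame rate."""
    return width * height * rays_per_pixel * fps

def frame_budget_ms(fps):
    """Time available to render one entire frame, in milliseconds."""
    return 1000.0 / fps

for w, h in [(1920, 1080), (3840, 2160)]:
    for fps in (30, 45):
        rps = rays_per_second(w, h, 2, fps)
        print(f"{w}x{h} @ {fps} fps, 2 rays/pixel: {rps / 1e6:.0f} M rays/s, "
              f"{frame_budget_ms(fps):.1f} ms per frame")
```

At 1080p and 30 fps this already works out to about 124 million rays per second, and at 4K and 45 fps to roughly 746 million, all within a frame budget of 22 to 33 ms that also has to cover rasterization, shading, and denoising.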

On that note, then, NVIDIA accomplished what the industry was not expecting to be possible for at least a few more years, and certainly not at this scale or with this kind of development ecosystem. Real time raytracing is the culmination of a decade or so of work, and the Turing RT Cores are the linchpin. But in building up to it, NVIDIA summarizes the achievement as the result of:

- Hybrid rendering pipeline

- Efficient denoising algorithms

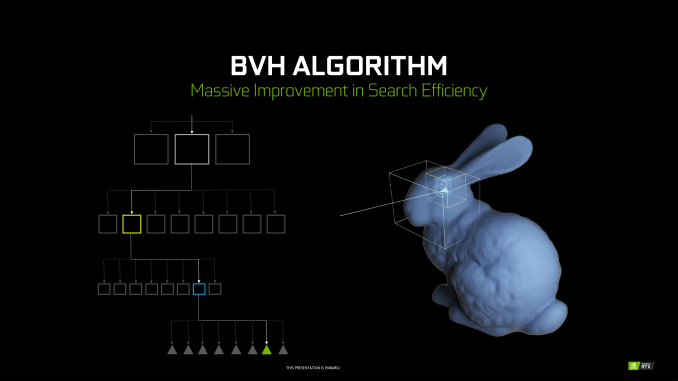

- Efficient BVH algorithms

By themselves, these developments could not improve raytracing efficiency enough for real time use, but they set the stage for RT Cores. Given raytracing’s importance in the world of computer graphics, NVIDIA Research has been looking into various BVH implementations for quite some time, as well as exploring architectural concerns for raytracing acceleration, as their patents and publications readily show. The same goes for denoising, though the latest trend there has veered towards using AI and, by extension, Tensor Cores. Once BVH became a standard of sorts, NVIDIA was able to design a corresponding fixed function hardware accelerator.

Because the RT Cores are so crucial to this achievement, NVIDIA is not disclosing many details about them or about its BVH implementation, and much of what has been disclosed is fairly generic. To reiterate, BVH (bounding volume hierarchy) is a rather general category, and modern raytracing acceleration structures are typically BVH- or kd-tree-based.
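For readers less familiar with BVHs, a minimal sketch of the general idea: every node stores an axis-aligned bounding box (AABB) enclosing everything beneath it, and leaves hold a handful of primitives. This top-down, median-split builder is a textbook approach chosen for brevity; the class and function names are illustrative, and NVIDIA’s own BVH algorithms are undisclosed and almost certainly use better split heuristics (such as the surface area heuristic).

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple

Vec3 = Tuple[float, float, float]

@dataclass
class AABB:
    lo: Vec3
    hi: Vec3

    def union(self, other):
        """Smallest box enclosing both boxes."""
        return AABB(tuple(min(a, b) for a, b in zip(self.lo, other.lo)),
                    tuple(max(a, b) for a, b in zip(self.hi, other.hi)))

    def centroid(self):
        return tuple((a + b) * 0.5 for a, b in zip(self.lo, self.hi))

@dataclass
class BVHNode:
    bounds: AABB
    left: Optional["BVHNode"] = None
    right: Optional["BVHNode"] = None
    prims: List[int] = field(default_factory=list)  # primitive indices, leaves only

def build_bvh(boxes, indices=None, max_leaf=2):
    """Bound all primitives, then split at the median centroid along the longest axis."""
    if indices is None:
        indices = list(range(len(boxes)))
    bounds = boxes[indices[0]]
    for i in indices[1:]:
        bounds = bounds.union(boxes[i])
    if len(indices) <= max_leaf:
        return BVHNode(bounds, prims=list(indices))
    extent = [h - l for l, h in zip(bounds.lo, bounds.hi)]
    axis = extent.index(max(extent))
    ordered = sorted(indices, key=lambda i: boxes[i].centroid()[axis])
    mid = len(ordered) // 2
    return BVHNode(bounds,
                   left=build_bvh(boxes, ordered[:mid], max_leaf),
                   right=build_bvh(boxes, ordered[mid:], max_leaf))

# Four unit cubes in a row along x
boxes = [AABB((i, 0, 0), (i + 1, 1, 1)) for i in range(4)]
root = build_bvh(boxes)
print(root.bounds, root.left.prims, root.right.prims)
```

The payoff is at traversal time: a ray that misses a node’s box can skip every primitive underneath it.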

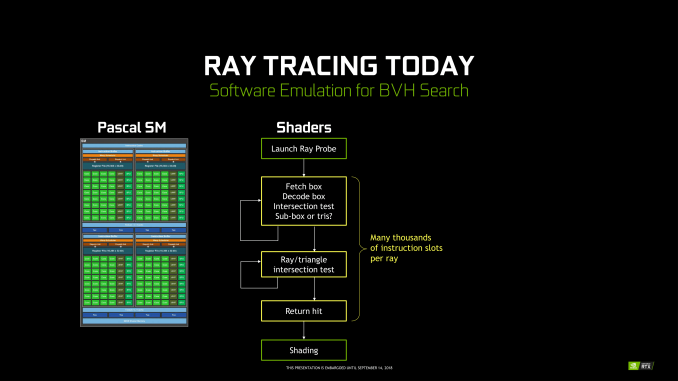

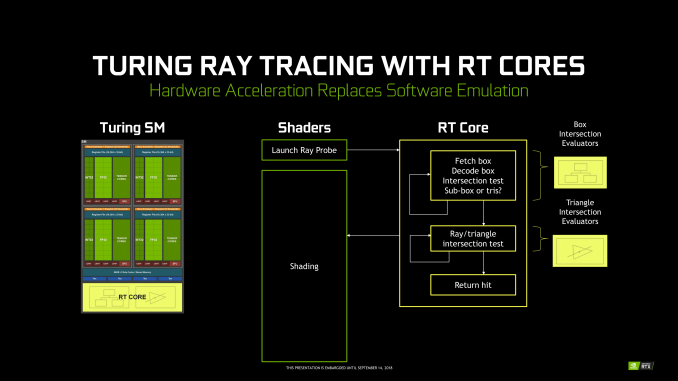

Unlike Tensor Cores, which are better seen as an FMA array alongside the FP and INT cores, the RT Cores are more like a classic offloading IP block. The sub-cores treat them much like texture units: instructions bound for the RT Cores are routed out of the sub-core, which is later notified on completion. Upon receiving a ray probe from the SM, the RT Core proceeds to autonomously traverse the BVH and perform ray-intersection tests. This type of ‘traversal and intersection’ fixed function raytracing accelerator is a well-known concept and has had quite a few implementations over the years, as traversal and intersection testing are two of the most computationally intensive tasks involved. In comparison, traversing the BVH in shaders would require thousands of instruction slots per ray cast, all for testing against bounding box intersections in the BVH.
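The ‘traversal and intersection’ loop that the RT Cores offload can be sketched in software. This is a generic textbook formulation, assumed here purely for illustration: an AABB slab test plus a stack-based walk over a small hand-built tree with a hypothetical `Node` type. It says nothing about how NVIDIA’s fixed function hardware actually implements the loop, but each iteration is the kind of work a shader would otherwise spend many instructions on, for every ray.

```python
from dataclasses import dataclass, field
from typing import List, Tuple

Vec3 = Tuple[float, float, float]

@dataclass
class Node:
    lo: Vec3                                               # box minimum corner
    hi: Vec3                                               # box maximum corner
    children: List["Node"] = field(default_factory=list)  # empty for leaves
    prims: List[int] = field(default_factory=list)         # primitive ids (leaves only)

def ray_aabb(origin, direction, lo, hi, t_max):
    """Slab test: clip the ray interval [0, t_max] against the box's slab on each axis."""
    t_near, t_far = 0.0, t_max
    for o, d, l, h in zip(origin, direction, lo, hi):
        if d == 0.0:
            # Ray is parallel to this pair of planes: it can only hit if it starts between them
            if o < l or o > h:
                return False
            continue
        t0, t1 = (l - o) / d, (h - o) / d
        if t0 > t1:
            t0, t1 = t1, t0
        t_near, t_far = max(t_near, t0), min(t_far, t1)
        if t_near > t_far:
            return False
    return True

def traverse(root, origin, direction, t_max=float("inf")):
    """Stack-based BVH walk returning the primitive ids of every leaf box the ray touches.
    A full tracer would then run exact ray/triangle tests on these candidates."""
    candidates, stack = [], [root]
    while stack:
        node = stack.pop()
        if not ray_aabb(origin, direction, node.lo, node.hi, t_max):
            continue  # one failed box test culls the whole subtree
        if node.children:
            stack.extend(node.children)
        else:
            candidates.extend(node.prims)
    return candidates

# Two leaves side by side along x under one root
left = Node((0, 0, 0), (1, 1, 1), prims=[0])
right = Node((2, 0, 0), (3, 1, 1), prims=[1])
root = Node((0, 0, 0), (3, 1, 1), children=[left, right])
print(traverse(root, (0.5, 0.5, -1.0), (0.0, 0.0, 1.0)))
```

The branchy, data-dependent shape of this loop (pop, test, push) is a poor fit for wide SIMD shader execution, which is one reason it is an attractive candidate for a dedicated unit.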

Returning to the RT Core, it then returns any hits and lets the shaders act on the result. The RT Core also handles some grouping and scheduling of memory operations to maximize memory throughput across multiple rays, and given the workload, there is presumably some amount of memory and/or ray buffering within the SIP block as well. As with many other workloads, memory bandwidth is a common bottleneck in raytracing, and it has been the focus of several NVIDIA Research papers. And in general, raytracing workloads result in very irregular and random memory accesses, mainly due to incoherent rays, which prove especially problematic for how GPUs typically utilize their memory.
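NVIDIA hasn’t said how the RT Core schedules its memory traffic, but a common software-side way to attack ray incoherence is to sort a batch of rays so that rays with similar directions and nearby origins are processed together and tend to touch the same BVH nodes. The sketch below assumes a simple sort key (direction octant first, then a 30-bit Morton code of the quantized origin); it is a generic technique from the raytracing literature, the function names are hypothetical, and it is not a description of the RT Core.

```python
BITS = 10                # bits per axis for the quantized origin
CELLS = (1 << BITS) - 1  # largest quantized coordinate

def direction_octant(d):
    """3-bit code from the signs of the ray direction (one of 8 octants)."""
    return int(d[0] < 0) | (int(d[1] < 0) << 1) | (int(d[2] < 0) << 2)

def part1by2(x):
    """Spread the low 10 bits of x so two zero bits separate each original bit."""
    x &= 0x3FF
    x = (x ^ (x << 16)) & 0xFF0000FF
    x = (x ^ (x << 8)) & 0x0300F00F
    x = (x ^ (x << 4)) & 0x030C30C3
    x = (x ^ (x << 2)) & 0x09249249
    return x

def morton3(ix, iy, iz):
    """Interleave three 10-bit integers into one 30-bit Morton (Z-order) code."""
    return part1by2(ix) | (part1by2(iy) << 1) | (part1by2(iz) << 2)

def coherence_key(origin, direction, scene_lo, scene_hi):
    """Sort key: direction octant in the top bits, then Morton order of the quantized origin."""
    q = []
    for o, lo, hi in zip(origin, scene_lo, scene_hi):
        v = int((o - lo) / (hi - lo) * CELLS)
        q.append(min(max(v, 0), CELLS))  # clamp origins that fall outside the scene bounds
    return (direction_octant(direction) << (3 * BITS)) | morton3(*q)

def sort_rays(rays, scene_lo, scene_hi):
    """rays: list of (origin, direction) pairs; returns them grouped for coherence."""
    return sorted(rays, key=lambda r: coherence_key(r[0], r[1], scene_lo, scene_hi))

rays = [((0, 0, 0), (1, 1, 1)), ((0, 0, 0), (-1, 1, 1)),
        ((1, 1, 1), (1, 1, 1)), ((1, 1, 1), (-1, 1, 1))]
for origin, direction in sort_rays(rays, (0, 0, 0), (1, 1, 1)):
    print(origin, direction)
```

After sorting, the two rays heading into the positive octant come first and the two heading into the negative-x octant follow, so consecutive rays are more likely to share node fetches.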

Otherwise, everything else is governed at a high level by the API (i.e. DXR) and the application; construction and updating of the BVH are done on CUDA cores, as determined by the particular IHV (in this case, NVIDIA) in its DXR implementation.
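The ‘update’ half of that work is often done as a refit: when geometry moves, node bounds are recomputed bottom-up while the tree’s topology is kept, which is much cheaper than a full rebuild but can degrade traversal quality as objects drift apart. A minimal sketch, assuming a simple hypothetical `Node` type in which every leaf holds at least one primitive; how NVIDIA’s DXR implementation chooses between refitting and rebuilding is not disclosed.

```python
from dataclasses import dataclass, field
from typing import List, Tuple

Vec3 = Tuple[float, float, float]

@dataclass
class Node:
    lo: Vec3 = (0.0, 0.0, 0.0)
    hi: Vec3 = (0.0, 0.0, 0.0)
    children: List["Node"] = field(default_factory=list)  # empty for leaves
    prims: List[int] = field(default_factory=list)         # primitive ids (leaves only, non-empty)

def refit(node, prim_lo, prim_hi):
    """Recompute bounds bottom-up after primitives move; the tree topology is left unchanged."""
    if node.children:
        for child in node.children:
            refit(child, prim_lo, prim_hi)
        los = [c.lo for c in node.children]
        his = [c.hi for c in node.children]
    else:
        los = [prim_lo[i] for i in node.prims]
        his = [prim_hi[i] for i in node.prims]
    node.lo = tuple(min(v[axis] for v in los) for axis in range(3))
    node.hi = tuple(max(v[axis] for v in his) for axis in range(3))
    return node

# Two primitives, each in its own leaf
prim_lo = [(0, 0, 0), (2, 0, 0)]
prim_hi = [(1, 1, 1), (3, 1, 1)]
root = refit(Node(children=[Node(prims=[0]), Node(prims=[1])]), prim_lo, prim_hi)
print(root.lo, root.hi)

# Primitive 1 moves; only the bounds change, not the tree structure
prim_lo[1], prim_hi[1] = (5, 0, 0), (6, 1, 1)
refit(root, prim_lo, prim_hi)
print(root.lo, root.hi)
```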

All-in-all, there’s clearly more involved, and we’ll be looking to run some microbenchmarks in the future. NVIDIA’s custom BVH algorithms are clearly in play, but right now we can’t say what the optimizations might be, such as compression, wide BVHs, or subdividing nodes into treelets. The way the RT Cores are integrated into the SM and into the broader architecture is likely crucial to how well they operate. Internally, the RT Core might just be a basic traversal and intersection unit, but it might also have other bits inside; one of NVIDIA’s recent patents provides a representation, albeit a dated one, of what else might be present. I, for one, would not be surprised to see it closely tied to the MIO blocks, perhaps doing more with coherence gathering by manipulating memory traffic for higher efficiency. It would need to coordinate well with the other workloads in the SMs without choking memory access with unmitigated incoherent rays.
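As one example of the compression idea mentioned above, BVH child bounds can be stored at low precision relative to their parent’s box. The sketch below assumes 8-bit quantization per coordinate with conservative rounding (minimums round down, maximums round up), which shrinks a child box from 24 bytes (six 32-bit floats) to 6 bytes. The function names are hypothetical, and whether Turing’s BVH does anything like this is unknown.

```python
import math

def quantize_child(parent_lo, parent_hi, child_lo, child_hi, bits=8):
    """Encode a child box as small integers relative to its parent's box.
    lo rounds down and hi rounds up, so the decoded box encloses the child
    (ignoring last-ulp floating-point rounding, which a production encoder must guard against)."""
    steps = (1 << bits) - 1
    q_lo, q_hi = [], []
    for pl, ph, cl, ch in zip(parent_lo, parent_hi, child_lo, child_hi):
        scale = (ph - pl) / steps if ph > pl else 1.0
        q_lo.append(max(0, min(steps, math.floor((cl - pl) / scale))))
        q_hi.append(max(0, min(steps, math.ceil((ch - pl) / scale))))
    return q_lo, q_hi

def dequantize_child(parent_lo, parent_hi, q_lo, q_hi, bits=8):
    """Decode the quantized child box back into world-space coordinates."""
    steps = (1 << bits) - 1
    lo, hi = [], []
    for pl, ph, ql, qh in zip(parent_lo, parent_hi, q_lo, q_hi):
        scale = (ph - pl) / steps if ph > pl else 1.0
        lo.append(pl + ql * scale)
        hi.append(pl + qh * scale)
    return lo, hi

parent_lo, parent_hi = (0, 0, 0), (255, 255, 255)
child_lo, child_hi = (10.3, 0.5, 100.9), (20.7, 1.5, 200.1)
q_lo, q_hi = quantize_child(parent_lo, parent_hi, child_lo, child_hi)
d_lo, d_hi = dequantize_child(parent_lo, parent_hi, q_lo, q_hi)
print(q_lo, q_hi, d_lo, d_hi)
```

The decoded box is slightly larger than the original, so a few extra rays may enter a child they would have missed, but none can ever miss a child they should have hit; trading a little traversal precision for memory bandwidth is the point.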

Nevertheless, details like performance impact are as yet unspecified.

111 Comments


Yojimbo - Saturday, September 15, 2018

The sites I look at put $649 2013 dollars at about $700 today. They use the CPI, the Consumer Price Index. That is the standard way to compare values over consumer goods and cost of living in general between different years where the CPI has been recorded. I have no idea where the numbers in your chart have come from. For instance, by the CPI, the 7800 GTX 512, which was released in 2005, is about $840 in 2018 dollars, and $825 in 2017 dollars, far more than the $783 listed in your chart. Note that if you have a steady 2% inflation per year over 13 years you get a 29% increase in prices.

Yojimbo - Saturday, September 15, 2018

And besides, your chart, even with the wrong numbers, doesn't show what you claim to show. The 780, the 280, the 8800 GTX, and the 7800 GTX all have launch prices around the RTX 2080, even though the RTX 2080 has a bigger die than any of them.

eddman - Saturday, September 15, 2018

Yes it does. 2080 Ti is the most expensive GeForce launch card. It's not my job, as a buyer, to dig up die sizes or even care about them. I only care about performance/price ratios, which these cards are terrible at.

eddman - Saturday, September 15, 2018

... they are terrible because the price/performance ratio hasn't improved in any substantial way. 1080 Ti offered ~70% performance increase over 980 Ti for just ~8% higher launch MSRP.

Yojimbo - Saturday, September 15, 2018

OK, so when GM produced the Hummer that's directly comparable to their Suburban because they were both SUVs. It's not your job to be an intelligent creature and realize that the Hummer opened up a new market segment. Instead, spend your energy resenting GM. You can do what you want but that doesn't make it rational.

eddman - Sunday, September 16, 2018

... except a Hummer is a Hummer and a Suburban is a Suburban. They are not part of the same lineup. Also, a car is not a video card.

2080 Ti directly follows 1080 Ti based on the naming. It's not my fault they did it. They could've named it 2090 Ti if they really meant it to be a different product category, but they didn't so based on common branding logic 2080 Ti is the successor to 1080 Ti and therefore it is not out-of-the-ordinary to expect close pricing.

Rational? What I wrote is completely rational. It is you who is trying to somehow make it look that a ~42% price jump for a 40%-45% performance increase in regular rasterized games is ok. It is not. This is probably the worst generational price/performance ratio vs. the old gen I've seen.

eddman - Sunday, September 16, 2018

... unless they plan on releasing GTX 2080 Ti/2080 at lower prices, but given the potential confusion that it might cause, probably not.

0ldman79 - Sunday, September 16, 2018

Oddly enough, going by that comparison the H2 is built on the same platform as the Suburban.

Killmorefor - Sunday, September 16, 2018

That chart is pretty accurate, but would benefit from a 3D showing price drops after the next gen introduction. The chart applies, but does not show the price jump before the chart started, nor the current jump for the next gen; the OP has a very good point.

markiz - Monday, September 17, 2018

Yeah, so what? I don't understand. If the price is too high, they will not sell much.