The NVIDIA Turing GPU Architecture Deep Dive: Prelude to GeForce RTX

by Nate Oh on September 14, 2018 12:30 PM EST

The Turing Architecture: Volta in Spirit

Diving straight into the microarchitecture, the new Turing SM looks very different from the Pascal SM, but those who’ve been keeping track of Volta will notice a lot of similarities to NVIDIA’s more recent microarchitecture. In fact, at a high level, the Turing SM is fundamentally the same as Volta’s, with the notable exception of a new IP block: the RT Core. Putting the RT Cores and Tensor Cores aside for now, the most drastic changes from Pascal are the same ones that differentiated Volta from Pascal. Turing’s advanced shading features are also in the same bucket in needing explicit developer support.

Like Volta, the Turing SM is partitioned into 4 sub-cores (or processing blocks), with each sub-core having a single warp scheduler and dispatch unit, as opposed to Pascal’s 2-partition setup with two dispatch ports per sub-core warp scheduler. There are some fairly major implications with this change, and broadly speaking it means that Volta/Turing loses the capability to issue a second, non-dependent instruction from a thread in a single clock cycle. Turing is presumably identical to Volta in executing instructions over two cycles but with schedulers that can issue an independent instruction every cycle, so ultimately Turing can maintain 2-way instruction level parallelism (ILP) this way, while still having twice the number of schedulers as Pascal.
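To make the issue model concrete, here is a toy sketch in Python. It is not NVIDIA's scheduler: the function name `cycles_to_drain`, the in-order issue rule, and the two-cycle pipe occupancy are assumptions taken from the "presumably" in the paragraph above. It only illustrates why one issue per cycle is enough to keep two pipes busy when each instruction occupies its pipe for two cycles.

```python
def cycles_to_drain(instrs, pipe_occupancy=2):
    """Toy model of one sub-core: a single scheduler issues at most one
    independent warp instruction per cycle, in order, and each pipe is
    busy for `pipe_occupancy` cycles per instruction (assumed value)."""
    free_at = {}      # pipe name -> first cycle at which it can accept work
    issue_cycle = 0   # earliest cycle the scheduler can issue the next instruction
    for pipe in instrs:
        start = max(issue_cycle, free_at.get(pipe, 0))
        free_at[pipe] = start + pipe_occupancy
        issue_cycle = start + 1
    return max(free_at.values(), default=0)

# Four FP instructions alone, then the same four interleaved with four INT ones.
fp_only = cycles_to_drain(["fp"] * 4)
mixed = cycles_to_drain(["fp", "int"] * 4)
print(fp_only, mixed)
```

In this model the mixed stream issues twice as many instructions while finishing only one cycle later, which is the sense in which single-issue schedulers can still sustain 2-way ILP.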

Like we saw in Volta, these changes go hand-in-hand with the new scheduling/execution model with independent thread scheduling that Turing also has, though differences were not disclosed at this time. Rather than per-warp like Pascal, Volta and Turing have per-thread scheduling resources, with a program counter and stack per-thread to track thread state, as well as a convergence optimizer to intelligently group active same-warp threads together into SIMT units. So all threads are equally concurrent, regardless of warp, and can yield and reconverge.

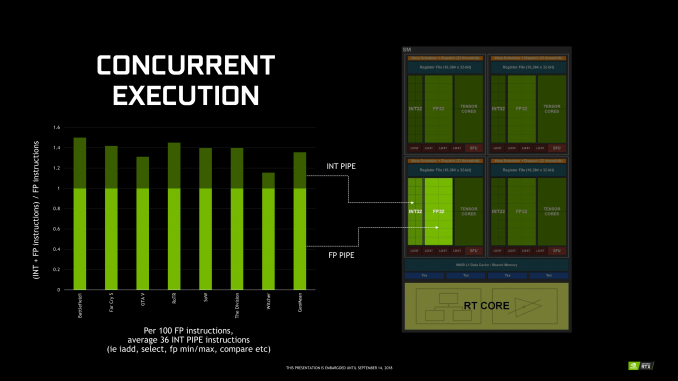

In terms of the CUDA cores and ALUs, the Turing sub-core has 16 INT32 cores, 16 FP32 cores, and 2 Tensor Cores, the same setup as the Volta sub-core. With the split INT/FP datapath model like Volta, Turing can also concurrently execute FP and INT instructions, which, as we will see, is much more relevant with the RT cores involved. Where Turing differs is in lacking Volta’s full complement of FP64 cores, instead having a token amount (2 per SM) for compatibility reasons, which results in FP64 throughput being 1/32 the TFLOP rate of FP32. Maimed FP64 is standard for NVIDIA’s consumer GPUs, but what has not been standard until now is Turing’s full 2x FP16 throughput, which was available in GP100 but was crippled in the other Pascal GPUs.
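The 1/32 figure follows directly from the unit counts quoted above. A short arithmetic sketch (the constant names are illustrative, not NVIDIA terminology):

```python
from fractions import Fraction

# Unit counts as quoted in the text above.
SUBCORES_PER_SM = 4
FP32_PER_SUBCORE = 16
INT32_PER_SUBCORE = 16
TENSOR_PER_SUBCORE = 2
FP64_PER_SM = 2

fp32_per_sm = SUBCORES_PER_SM * FP32_PER_SUBCORE
int32_per_sm = SUBCORES_PER_SM * INT32_PER_SUBCORE
tensor_per_sm = SUBCORES_PER_SM * TENSOR_PER_SUBCORE

# FP64 rate relative to FP32, assuming one operation per unit per clock for both.
fp64_ratio = Fraction(FP64_PER_SM, fp32_per_sm)

print(fp32_per_sm, int32_per_sm, tensor_per_sm, fp64_ratio)
```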

While these details may be more on the technical side of things, in Volta this design seemed inextricably linked to extracting the most performance from the tensor cores while minimizing disruption to parallelism or coordination with other compute workloads. The same is most likely true with Turing’s 2nd generation tensor cores and RT cores, where 4 independently scheduled sub-cores and granular thread manipulation would be very useful in extracting the most performance out of mixed gaming-oriented workloads, where rendering a single frame would be pulling in multiple blocks of the GPU to work in conjunction. This is actually a concept that underpins the RTX-OPS metric, and we will revisit that in depth later.

Memory-wise, every sub-core now has an L0 instruction cache like Volta, with an identically sized 64 KB register file. In Volta, this was important in reducing latency when the tensor cores were in play, and in Turing this likely benefits the RT cores similarly, which we will discuss in a later section. Otherwise, the Turing SM also has 4 load/store units per sub-core, down from 8 in Volta, but still maintains 4 texture units.

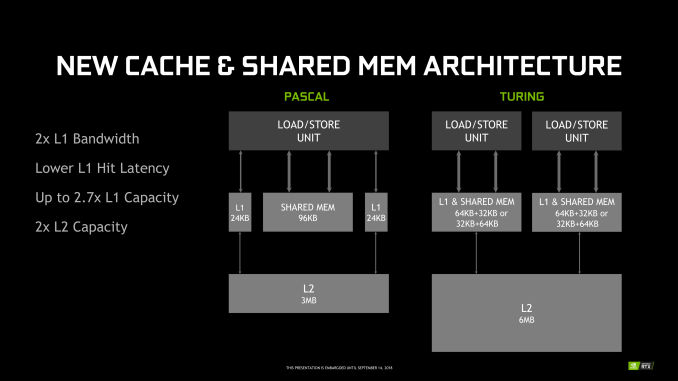

Further up the memory hierarchy is the new L1 data cache and Shared Memory (SMEM), which has been revamped and unified into a single partitionable memory block, another Volta innovation. For Turing, this is looking to be a combined 96 KB L1/SMEM, which traditional graphics workloads divide as 64 KB for dedicated graphics shader RAM and 32 KB for texture cache and register file spill area. Meanwhile, compute workloads can partition the L1/SMEM with up to 64 KB as L1 and the remaining 32 KB as SMEM, or vice versa. By comparison, Volta’s SMEM can be configured up to 96 KB.
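The partitioning described above can be written down as a small lookup. This is only a restatement of the numbers in the text. The mode names and the `l1_smem_split` helper are hypothetical and are not part of any NVIDIA API.

```python
TOTAL_L1_SMEM_KB = 96  # combined L1/SMEM per Turing SM, as described above

# Hypothetical mode names; each maps to (first region KB, second region KB).
SPLITS_KB = {
    "graphics": {"shader_ram": 64, "texture_cache_and_spill": 32},
    "compute_prefer_l1": {"l1": 64, "smem": 32},
    "compute_prefer_smem": {"l1": 32, "smem": 64},
}

def l1_smem_split(mode):
    """Return the region sizes in KB for a mode, checking they fill the block."""
    split = SPLITS_KB[mode]
    if sum(split.values()) != TOTAL_L1_SMEM_KB:
        raise ValueError(f"{mode} does not add up to {TOTAL_L1_SMEM_KB} KB")
    return split

print(l1_smem_split("compute_prefer_smem"))
```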

Though many of these details are only of value to developers, there are several important points to make here. One is simply how similar Turing and Volta are, as opposed to Pascal; after all, they are in the same generational compute family. Another is how compute-oriented Volta, and by extension Turing, is, and the fact that this is being brought to consumers as part of NVIDIA’s proclaimed ‘future of gaming.’ Part of that is, of course, permitting fast FP16 in potential gaming workloads, but Turing goes far beyond that. At the low level, Turing is less about maximizing traditional gaming, and more about maximizing gaming with special technologies such as real-time raytracing.

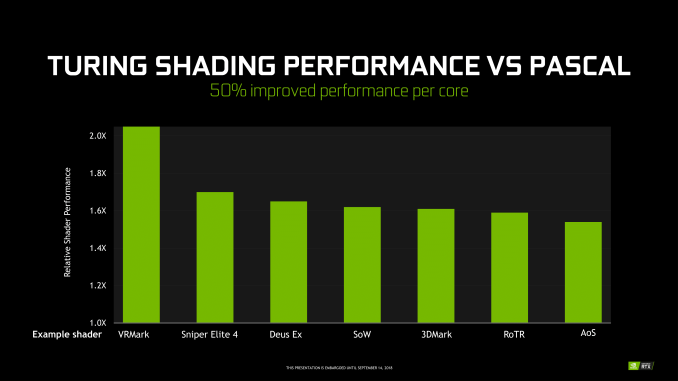

For their part, NVIDIA points to Turing’s leap in performance from Pascal, from memory hierarchy bandwidth uplifts to 50% more shader performance per core, but unfortunately for today we can’t connect this with any real-world data or performance. With concurrent FP/INT execution in gaming, the company is keen to point out that around 36 INT instructions per 100 FP instructions could be freed up by moving them to their own pipe, which nevertheless doesn’t describe Turing performance, only the applicability of its concurrent execution feature in games.
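As a rough sense of scale, the sketch below turns that instruction mix into an idealized upper bound. It assumes the figure means 36 INT instructions for every 100 FP instructions (as NVIDIA's Turing material phrases it), that every INT instruction can overlap perfectly with FP work, and that nothing else limits throughput. None of these hold in practice, so this is a ceiling on the benefit and not a performance estimate; `ideal_speedup` is an illustrative name.

```python
def ideal_speedup(fp_instrs=100, int_instrs=36):
    """Upper bound on the gain from giving INT its own pipe.

    Shared pipe: FP and INT instructions queue behind each other.
    Split pipes: the longer of the two streams sets the time (perfect overlap assumed).
    """
    shared_pipe_slots = fp_instrs + int_instrs
    split_pipe_slots = max(fp_instrs, int_instrs)
    return shared_pipe_slots / split_pipe_slots

print(f"{ideal_speedup():.2f}x")
```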

It becomes a bit of a complex scenario, as we know that Volta already improved on Pascal in these aspects with concurrent execution, a brand new ISA, and a reworked SM. And Turing doesn’t seem to involve architectural changes for significant clockspeed enhancements a la Pascal from Maxwell, though of course on the process side the 12nm FFN is a factor. So it comes down to special gaming workloads and real-world performance. The latter is not available today, but the former is so important to Turing that it merited dropping ‘GTX’ for ‘RTX’. And of those special workloads, real-time raytracing and RT cores take center stage.

111 Comments

Yojimbo - Saturday, September 15, 2018 - link

The sites I look at put $649 2013 dollars at about $700 today. They use the CPI, the Consumer Price Index. That is the standard way to compare values over consumer goods and cost of living in general between different years where the CPI has been recorded. I have no idea where the numbers in your chart have come from. For instance, by the CPI, the 7800 GTX 512, which was released in 2005, is about $840 in 2018 dollars, and $825 in 2017 dollars, far more than the $783 listed in your chart.

Note that if you have a steady 2% inflation per year over 13 years you get a 29% increase in prices.

Yojimbo - Saturday, September 15, 2018 - link

And besides, your chart, even with the wrong numbers, doesn't show what you claim to show. The 780, the 280, the 8800 GTX, and the 7800 GTX all have launch prices around the RTX 2080, even though the RTX 2080 has a bigger die than any of them.

eddman - Saturday, September 15, 2018 - link

Yes it does. 2080 Ti is the most expensive geforce launch card. It's not my job, as a buyer, to dig up die sizes or even care about them. I only care about performance/price ratios which these cards are terrible at.

eddman - Saturday, September 15, 2018 - link

... they are terrible because the price/performance ratio hasn't improved in any substantial way. 1080 Ti offered ~70% performance increase over 980 Ti for just ~8% higher launch MSRP.

Yojimbo - Saturday, September 15, 2018 - link

OK, so when GM produced the Hummer that's directly comparable to their Suburban because they were both SUVs. It's not your job to be an intelligent creature and realize that the Hummer opened up a new market segment. Instead, spend your energy resenting GM. You can do what you want but that doesn't make it rational.

eddman - Sunday, September 16, 2018 - link

... except a Hummer is a Hummer and a Suburban is a Suburban. They are not part of the same lineup. Also, a car is not a video card.

2080 Ti directly follows 1080 Ti based on the naming. It's not my fault they did it. They could've named it 2090 Ti if they really meant it to be a different product category, but they didn't so based on common branding logic 2080 Ti is the successor to 1080 Ti and therefore it is not out-of-the-ordinary to expect close pricing.

Rational? What I wrote is completely rational. It is you who is trying to somehow make it look that a ~42% price jump for a 40%-45% performance increase in regular rasterized games is ok. It is not. This is probably the worst generational price/performance ratio vs. the old gen I've seen.

eddman - Sunday, September 16, 2018 - link

... unless they plan on releasing GTX 2080 Ti/2080 at lower prices, but given the potential confusion that it might cause, probably not.

0ldman79 - Sunday, September 16, 2018 - link

Oddly enough, going by that comparison the H2 is built on the same platform as the Suburban.

Killmorefor - Sunday, September 16, 2018 - link

That chart is pretty accurate, but would benefit from a 3D showing price drops after the next gen introduction. The chart apples, and but does not show the price jump before the chart started, nor the current jump for the next gen the OP has very good point.

markiz - Monday, September 17, 2018 - link

Yeah, so what? I don't understand.. If the price is too high, they will not sell much.