The NVIDIA Turing GPU Architecture Deep Dive: Prelude to GeForce RTX

by Nate Oh on September 14, 2018 12:30 PM EST

Bounding Volume Hierarchy - How Computers Test the World

Perhaps the biggest aspect of NVIDIA’s gamble on ray tracing is that traditional GPUs just aren’t very good at the task. They’re fast at rasterization and they’re even fast at parallel computing; however, ray tracing does not map very well to either of those computing paradigms. Instead, NVIDIA has to add hardware dedicated to ray tracing, which means devoting die space and power to hardware that cannot help with traditional rasterization.

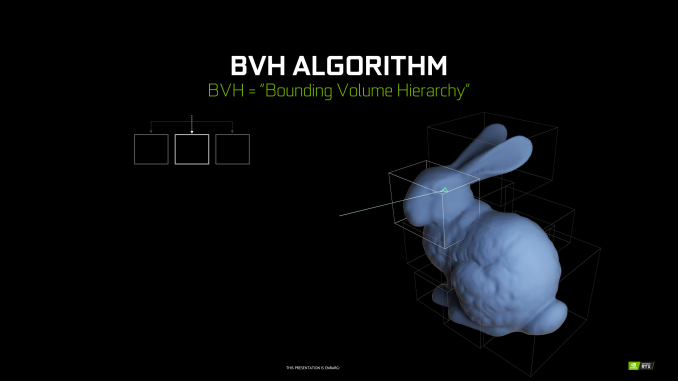

A big part of that hardware, in turn, will go into solving the most basic problem of ray tracing: how do you figure out what a ray is intersecting with? The most common solution to this problem is to store triangles in a data structure that is well suited to ray tracing: the Bounding Volume Hierarchy (BVH).

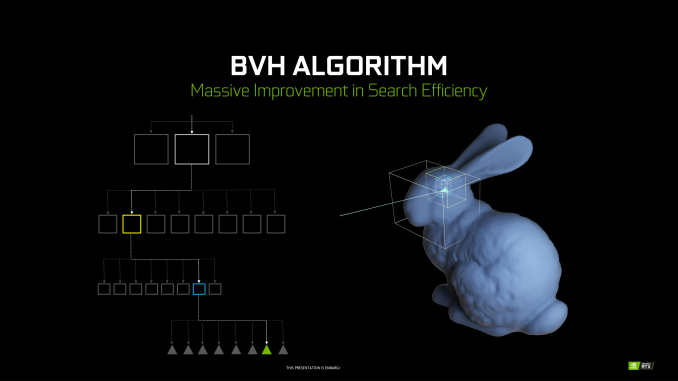

Conceptually, a BVH is relatively simple, at least for the purposes of this article. Rather than testing every polygon to see if a ray intersects it, the idea is to test a portion of the scene to see if the ray intersects it, and then keep drilling down. If the ray does intersect that portion of the scene, subdivide it into smaller portions and test again. And again. And again. All the way until you reach an individual polygon, at which point the ray test is resolved.

For the computer scientists in the crowd, this might sound a lot like an application of a binary search, and it is. Each test allows a significant number of options (in this case polygons) to be discarded as possible answers, so the search arrives at the right polygon in a fraction of the time that exhaustive testing would take. A BVH, in turn, is stored in what’s essentially a tree data structure, with each subdivision, called a bounding box, stored as a child of its parent bounding box.
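To make the drill-down concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not how Turing's RT hardware works: the `Node`, `ray_hits_box`, `build`, and `traverse` names are hypothetical, the "polygons" are stand-in boxes rather than real triangles, and the tree is built by simply halving a pre-sorted list.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple

Vec3 = Tuple[float, float, float]

@dataclass
class Node:
    lo: Vec3                       # minimum corner of the bounding box
    hi: Vec3                       # maximum corner of the bounding box
    children: List["Node"] = field(default_factory=list)
    prim: Optional[str] = None     # leaf payload: a stand-in for a polygon

def ray_hits_box(origin: Vec3, direction: Vec3, lo: Vec3, hi: Vec3) -> bool:
    """Slab test: does origin + t*direction (t >= 0) pass through the box?"""
    t_near, t_far = 0.0, float("inf")
    for o, d, a, b in zip(origin, direction, lo, hi):
        if d == 0.0:
            if o < a or o > b:     # parallel to this slab and outside it
                return False
            continue
        t0, t1 = (a - o) / d, (b - o) / d
        if t0 > t1:
            t0, t1 = t1, t0
        t_near, t_far = max(t_near, t0), min(t_far, t1)
        if t_near > t_far:
            return False
    return True

def build(leaves: List[Node]) -> Node:
    """Toy construction (assumption): halve an already-sorted list of leaves."""
    if len(leaves) == 1:
        return leaves[0]
    mid = len(leaves) // 2
    kids = [build(leaves[:mid]), build(leaves[mid:])]
    lo = tuple(min(k.lo[i] for k in kids) for i in range(3))
    hi = tuple(max(k.hi[i] for k in kids) for i in range(3))
    return Node(lo, hi, kids)

def traverse(root: Node, origin: Vec3, direction: Vec3):
    """Return (candidate primitives, number of box tests performed)."""
    hits, tests, stack = [], 0, [root]
    while stack:
        node = stack.pop()
        tests += 1
        if not ray_hits_box(origin, direction, node.lo, node.hi):
            continue               # the whole subtree is discarded in one test
        if node.prim is not None:
            hits.append(node.prim)
        else:
            stack.extend(node.children)
    return hits, tests

# 64 unit boxes spaced along the x axis stand in for 64 polygons.
leaves = [Node((2.0 * i, 0.0, 0.0), (2.0 * i + 1.0, 1.0, 1.0), prim=f"poly{i}")
          for i in range(64)]
root = build(leaves)
hits, tests = traverse(root, origin=(0.5, 0.5, -5.0), direction=(0.0, 0.0, 1.0))
print(hits, tests)
```

In this toy scene the ray reaches `poly0` after 13 box tests, where checking every box in turn would take 64. A real tracer would follow each leaf hit with an actual ray-triangle test.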

Now the catch with a BVH is that while it radically cuts down on the number of ray intersection tests needed compared to a naïve implementation, it’s still not super cheap. A number of tests are still required for each ray, with both successful and failed tests adding to the total. And all of this is for a single ray, when a significant number of rays are going to be needed for each pixel. This is why hardware acceleration of the process is so important (and not at all easy).

The other major computational cost here is that BVHs themselves aren’t free. One needs to be created for a scene from the polygons in it, so there is an additional step before ray casting can even begin. This is more of a developer concern (when can they modify and reuse a BVH versus building a new one?), but it’s another step in the process. Furthermore, it’s an example of why developer training and efficient engine implementations are so crucial to the process, as a poor implementation can make ray tracing much too slow to be viable.
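The reuse-versus-rebuild question can be sketched in Python as follows. This is an assumption-laden illustration rather than what any particular engine or API does: `build` is a simple median split along the widest axis, `refit` keeps the tree shape and only recomputes bounding boxes, and all of the names are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple

Box = Tuple[Tuple[float, ...], Tuple[float, ...]]   # (min corner, max corner)

def merge(a: Box, b: Box) -> Box:
    """Smallest box that encloses both a and b."""
    return (tuple(map(min, a[0], b[0])), tuple(map(max, a[1], b[1])))

@dataclass
class Node:
    box: Box
    left: Optional["Node"] = None
    right: Optional["Node"] = None
    prim: Optional[int] = None     # index into the primitive list (leaves only)

def build(boxes: List[Box], ids: List[int]) -> Node:
    """Full rebuild: split at the median centroid along the widest axis."""
    if len(ids) == 1:
        return Node(boxes[ids[0]], prim=ids[0])
    total = boxes[ids[0]]
    for i in ids[1:]:
        total = merge(total, boxes[i])
    axis = max(range(3), key=lambda k: total[1][k] - total[0][k])
    ids = sorted(ids, key=lambda i: boxes[i][0][axis] + boxes[i][1][axis])
    mid = len(ids) // 2
    return Node(total, build(boxes, ids[:mid]), build(boxes, ids[mid:]))

def refit(node: Node, boxes: List[Box]) -> Box:
    """Cheap update: keep the tree shape, recompute boxes bottom-up."""
    if node.prim is not None:
        node.box = boxes[node.prim]
    else:
        node.box = merge(refit(node.left, boxes), refit(node.right, boxes))
    return node.box

# Four unit boxes along x stand in for the scene's polygons.
boxes = [((float(x), 0.0, 0.0), (x + 1.0, 1.0, 1.0)) for x in (0, 2, 4, 6)]
root = build(boxes, list(range(4)))
before = root.box

boxes[3] = ((20.0, 0.0, 0.0), (21.0, 1.0, 1.0))     # one object moves
refit(root, boxes)                                   # no re-sorting, no new nodes
after = root.box
print(before, after)
```

Refitting touches each node once and skips the sorting that a rebuild needs, but the tree keeps its old grouping. If objects move far enough, the boxes become loose and overlapping, and a full `build` becomes the better choice.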

111 Comments

Alistair - Sunday, September 16, 2018 - link

Except for the GTX 780 was the worse nVidia release ever, at a terrible price. Nice try ignoring every other card in the last 10 years.

markiz - Monday, September 17, 2018 - link

How can it be the same segment of the market, if the prices are, as you claim, double+? I mean, that claim makes no sense. It's not same segment. it's higher tier.

I mean, who is to say what kind of an advancement in GPU and games have people supposed to be getting?

Buy a 500$ card and max settings as far as they go and call it a day.

If you are

Ej24 - Monday, September 17, 2018 - link

The R&D for smaller manufacturing nodes hasn't scaled linearly. It's been almost exponential in terms of $/Sq.mm to develop each new node. That's why we need die shrinks to cram more transistors per square mm, and why some nodes were skipped because the economics didn't work out, like 20/22nm gpu's never existed. You're assuming that manufacturers have fixed costs that have never changed. The cost of a semiconductor fab, and R&D for new nodes has ballooned much much faster than inflation. That's why we've seen the number of fabs plummet with every new node. There used to be dozens of fabs in the 90nm days and before. Now it's looking like only 3 or 4 will be producing 7nm and below. It's just gotten too expensive for anyone to compete.

milkod2001 - Tuesday, September 18, 2018 - link

All those ridiculous prices started when AMD have announced 7970 at $550 plus. NV had mid range card to compete with it: GTX 680 at the same price. And then NV Titan high end cards were introduced at $1000 plus. Since then we pay past high end prices for mid range cards.

futrtrubl - Wednesday, September 19, 2018 - link

Just a bit on your math. You say $1 accounting for inflation of 2.7% over 18 years is now just less than $1.50. Maybe you are doing it as $1 * 18 * 1.027 to get that which is incorrect for inflation. It compounds, so it should be $1 * ( 1.027^18) which comes to ~$1.62. Likewise at 5% over 18 years it becomes $2.41.

Da W - Sunday, September 16, 2018 - link

Since when does inflation work in the semiconductor industry?

Holliday75 - Monday, September 17, 2018 - link

I was wondering the same thing. Smaller, faster, cheaper. For some reason here its the opposite....for 2 out of 3.

Yojimbo - Saturday, September 15, 2018 - link

"You must literally live under a rock while also being absurdly naive. It's never been this way in the 20 years that i've been following GPUs. These new RTX GPUs are ridiculously expensive, way more than ever, and the prices will not be changing much at all when there's literally zero competition. The GPU space right now is worse than it's ever been before in history."

No, if you go back and look at historical GPU prices, adjusted for inflation, there have been other times that newly released graphics cards were either as expensive or more expensive. The 700 series is the most recent example of cards that were as expensive as the 20 series is.

eddman - Saturday, September 15, 2018 - link

No. https://i.imgur.com/ZZnTS5V.png

This chart was made last year based on 2017 dollar value, but it still applies. 20 series cards have the highest launch prices in the past 18 years by a large margin.

eddman - Saturday, September 15, 2018 - link

There is one card that surpasses that, 8800 Ultra. It was nothing more than a slightly OCed 8800 GTX. Nvidia simply released it to extract as much money as possible, and that was made possible because of lack of proper competition from ATI/AMD in that time period.