NVIDIA Announces the GeForce RTX 20 Series: RTX 2080 Ti & 2080 on Sept. 20th, RTX 2070 in October

by Ryan Smith on August 20, 2018 4:00 PM EST

Previewing GeForce RTX 2080 Ti

Turing and NVIDIA’s focus on hybrid rendering aside, let’s take a look at the individual GeForce RTX cards.

Before getting too far here, it’s important to point out that NVIDIA has offered little in the way of information on the cards’ performance besides their formal specifications. Essentially the entirety of the NVIDIA Gamescom presentation – and even most of the SIGGRAPH presentation – was focused on ray tracing/hybrid rendering and the Turing architecture’s unique hardware capabilities to support those features. As a result we don’t have a good frame of reference for how these specifications will translate into real-world performance. Which is also why we’re disappointed that NVIDIA has already started pre-orders, as it pushes consumers into blindly buying cards.

At any rate, with NVIDIA having changed the SM for Turing as much as they have versus Pascal, I don’t believe FLOPS alone is an accurate proxy for performance in current games. It’s almost certain that NVIDIA has been able to improve their SM efficiency, especially judging from what we’ve seen thus far with the Titan V. So in that respect this launch is similar to the Maxwell launch in that the raw specifications can be deceiving, and that it’s possible to lose FLOPS and still gain performance.

In any case, at the top of the GeForce RTX 20 series stack will be the GeForce RTX 2080 Ti. A major departure from the GeForce 700/900/10 series, NVIDIA is not retaining the Ti card as a mid-generation kicker. Instead they’re launching with it right away. This means that the high-end of the RTX family is now a 3 card stack from the start, instead of a 2 card stack as has previously been the case.

NVIDIA has not commented on this change in particular, and this is one of those things that I expect we’ll know more about once we reach the actual hardware launch. But there’s good reason to suspect that since NVIDIA is using the relatively mature TSMC 12nm “FFN” process – itself an optimized version of 16nm – that yields are in a better place than usual at this time. Normally NVIDIA would be using a more bleeding-edge process, where it would make sense to hold back the largest chip another year or so to let yields improve.

NVIDIA GeForce x80 Ti Specification Comparison

| | RTX 2080 Ti Founders Edition | RTX 2080 Ti | GTX 1080 Ti | GTX 980 Ti |
|---|---|---|---|---|
| CUDA Cores | 4352 | 4352 | 3584 | 2816 |
| ROPs | 88? | 88? | 88 | 96 |
| Core Clock | 1350MHz | 1350MHz | 1481MHz | 1000MHz |
| Boost Clock | 1635MHz | 1545MHz | 1582MHz | 1075MHz |
| Memory Clock | 14Gbps GDDR6 | 14Gbps GDDR6 | 11Gbps GDDR5X | 7Gbps GDDR5 |
| Memory Bus Width | 352-bit | 352-bit | 352-bit | 384-bit |
| VRAM | 11GB | 11GB | 11GB | 6GB |
| Single Precision Perf. | 14.2 TFLOPS | 13.4 TFLOPS | 11.3 TFLOPS | 6.1 TFLOPS |
| "RTX-OPS" | 78T | 78T | N/A | N/A |
| TDP | 260W | 250W | 250W | 250W |
| GPU | Big Turing | Big Turing | GP102 | GM200 |
| Architecture | Turing | Turing | Pascal | Maxwell |
| Manufacturing Process | TSMC 12nm "FFN" | TSMC 12nm "FFN" | TSMC 16nm | TSMC 28nm |
| Launch Date | 09/20/2018 | 09/20/2018 | 03/10/2017 | 06/01/2015 |
| Launch Price | $1199 | $999 | MSRP: $699, Founders: $699 | $649 |

The king of NVIDIA’s new product stack, the GeForce RTX 2080 Ti is without a doubt an interesting card. And if we’re being honest, it’s not a card I was expecting. Based on these specifications, it’s clearly built around a cut-down version of NVIDIA’s “Big Turing” GPU, which the company just unveiled last week at SIGGRAPH. And like the name suggests, Big Turing is big: 18.6B transistors, measuring 754mm² in die size. This is closer in size to GV100 (Volta/Titan V) than it is to the GPU in any past x80 Ti card, so I am surprised that, even as a cut-down chip, NVIDIA can economically offer it for sale. Nonetheless here we are, with Big Turing coming to consumer cards.

Even though it’s a cut-down part, RTX 2080 Ti is still a beast, with 4352 Turing CUDA cores and what I estimate to be 544 tensor cores. Like its Quadro counterpart, this card is rated for 10 GigaRays/second, and for traditional compute we’re looking at 13.4 TFLOPS based on these specifications. Note that this is only 19% higher than GTX 1080 Ti, which is all the more reason why I want to learn more about Turing’s architectural changes before predicting what this means for performance in current-generation rasterization games.
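As a rough illustration of where these figures come from, the sketch below recomputes peak single precision throughput from the core counts and boost clocks in the table above. It assumes the usual paper-math convention of 2 FLOPs per CUDA core per clock (one fused multiply-add) at the official boost clock; the function name is ours for illustration.

```python
def fp32_tflops(cuda_cores, boost_mhz):
    """Peak FP32 throughput in TFLOPS, assuming 2 FLOPs per core per clock (one FMA)."""
    return cuda_cores * 2 * boost_mhz * 1e6 / 1e12


# Core counts and official boost clocks from the specification table
rtx_2080_ti = fp32_tflops(4352, 1545)
gtx_1080_ti = fp32_tflops(3584, 1582)

# Relative on-paper advantage of the new card
uplift = rtx_2080_ti / gtx_1080_ti - 1

print(f"RTX 2080 Ti: {rtx_2080_ti:.1f} TFLOPS")
print(f"GTX 1080 Ti: {gtx_1080_ti:.1f} TFLOPS")
print(f"Uplift: {uplift:.0%}")
```

Since real cards typically boost past their official ratings, this is only a way to compare specifications, not a performance prediction.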

Clockspeeds have actually dropped from generation to generation here. Whereas the GTX 1080 Ti started at 1.48GHz and had an official boost clock rating of 1.58GHz (and in practice boosted higher still), RTX 2080 Ti starts at 1.35GHz and boosts to 1.55GHz, and we don’t yet know anything about the practical boost limits. So assuming NVIDIA is being as conservative as it was with the last generation, this means the average clockspeeds have dropped slightly. Which in turn means that whatever performance gains we see from RTX 2080 Ti are going to ride entirely on the increased CUDA core count and any architectural efficiency improvements.

Meanwhile the ROP count is unknown, but as it needs to match the memory bus width, we’re almost certainly looking at 88 ROPs. Even more so than the core compute architecture, I’m curious as to whether there are any architectural improvements here. Otherwise, because the ROP count is identical and the official boost clock is lower, the maximum pixel throughput (on paper) is actually ever so slightly lower than it was on GTX 1080 Ti.
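For a sense of scale, peak pixel fill rate can be approximated as ROP count times clockspeed. The sketch below assumes one pixel per ROP per clock at the official boost clock, and uses our 88 ROP estimate for RTX 2080 Ti, so treat it as paper math only.

```python
def pixel_fill_gpix(rops, boost_mhz):
    """Peak pixel fill rate in GPixels/s, assuming 1 pixel per ROP per clock."""
    return rops * boost_mhz / 1000


# 88 ROPs is an estimate for RTX 2080 Ti; it is confirmed for GTX 1080 Ti
rtx_2080_ti_fill = pixel_fill_gpix(88, 1545)
gtx_1080_ti_fill = pixel_fill_gpix(88, 1582)

# Negative value means the new card is lower on paper
fill_change = rtx_2080_ti_fill / gtx_1080_ti_fill - 1

print(f"RTX 2080 Ti: {rtx_2080_ti_fill:.1f} GPixels/s")
print(f"GTX 1080 Ti: {gtx_1080_ti_fill:.1f} GPixels/s")
print(f"Change: {fill_change:+.1%}")
```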

Speaking of the memory bus, this is another area that is seeing a significant improvement. NVIDIA has moved from GDDR5X to GDDR6, so memory clockspeeds have increased accordingly, from 11Gbps to 14Gbps, a 27% increase. And since the memory bus width itself remains identical at 352 bits wide, this means the final memory bandwidth increase is also 27%. Memory bandwidth has long been the Achilles heel of GPUs, so even if NVIDIA’s theoretical ROP throughput has not changed this generation, having more memory bandwidth is going to remove bottlenecks and improve performance throughout the rendering pipeline, from the texture units and CUDA cores straight out to the ROPs. Of course, the tensor cores and RT cores are going to be prolific bandwidth consumers as well, so in workloads where they’re in play, NVIDIA is once again going to have to do more with (relatively) less.
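The bandwidth math here is simple enough to sketch: total bandwidth is the bus width in bytes multiplied by the per-pin data rate. The helper name below is ours, and the figures are theoretical peaks rather than measured throughput.

```python
def memory_bandwidth_gbs(bus_width_bits, data_rate_gbps):
    """Theoretical memory bandwidth in GB/s: bus width in bytes times per-pin data rate."""
    return bus_width_bits / 8 * data_rate_gbps


rtx_2080_ti_bw = memory_bandwidth_gbs(352, 14)  # GDDR6
gtx_1080_ti_bw = memory_bandwidth_gbs(352, 11)  # GDDR5X

bw_increase = rtx_2080_ti_bw / gtx_1080_ti_bw - 1

print(f"RTX 2080 Ti: {rtx_2080_ti_bw:.0f} GB/s")
print(f"GTX 1080 Ti: {gtx_1080_ti_bw:.0f} GB/s")
print(f"Increase: {bw_increase:.0%}")
```

Because the bus width is unchanged, the bandwidth ratio reduces to the ratio of the data rates, which is why the two 27% figures match.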

Past this, things start diverging a bit. NVIDIA is once again offering their reference-grade Founders Edition cards, and unlike with the GeForce 10 series, the 20 series FE cards have slightly different specifications than their base specification compatriots. Specifically, NVIDIA has cranked up the clockspeed and the resulting TDP a bit, giving the 2080 Ti FE an on-paper 6% performance advantage, and also a 10W higher TDP. For the standard cards then, the TDP is the x80 Ti-traditional 250W, while the FE card moves to 260W.
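To put numbers on the Founders Edition difference, here is a quick sketch using the boost clocks and TDPs from the specification table. It assumes on-paper performance scales linearly with boost clock, which is the same simplification behind the TFLOPS ratings.

```python
# Values from the specification table
BASE_BOOST_MHZ = 1545
FE_BOOST_MHZ = 1635
BASE_TDP_W = 250
FE_TDP_W = 260

# On-paper performance is assumed to scale linearly with boost clock
clock_gain = FE_BOOST_MHZ / BASE_BOOST_MHZ - 1
tdp_gain = FE_TDP_W / BASE_TDP_W - 1

print(f"FE clock advantage: {clock_gain:.1%}")
print(f"FE TDP increase: {tdp_gain:.1%} ({FE_TDP_W - BASE_TDP_W}W)")
```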

Meanwhile, starting with the GeForce 20 series cards, NVIDIA is rolling out a new design for their reference/Founders Edition cards, the first such redesign since the original GeForce GTX Titan back in 2013. Up until now NVIDIA has focused on a conservative but highly effective blower design, pairing the best blower in the industry with a grey and black metal shroud. The end result is that these reference/FE cards could be dropped in virtually any system and work, thanks to the self-exhausting nature of blowers.

However for the GeForce 20 series, NVIDIA has blown off the blower, and instead opted to design their cards around the industry’s other favorite cooler design: the dual-fan open air cooler. Combine this with NVIDIA’s metallic aesthetics, which they have retained, and the resulting product looks pretty much exactly like you’d expect a high-end open air cooled NVIDIA card to look: two fans buried inside a meticulous metal shroud. And while we’ll see where performance stands once we review the card, it’s clear that NVIDIA is at the very least aiming to lead the pack in industrial design once again.

The switch to an open air cooler has three particular ramifications versus NVIDIA’s traditional blower, which regular AnandTech readers will know we’ve discussed before.

- Cooling capacity goes up

- Noise levels go down

- A card can no longer guarantee that it can cool itself

In an open air design, hot air is circulated back into the chassis via the fans, as the shroud is not fully closed and the design doesn’t force hot air out of the back of the case. Essentially in an open air design a card will push the hottest air away from itself, but it’s up to the chassis to actually get rid of that hot air. Which a well-designed case will do, but not without first circulating it through the CPU cooler, which is typically located above the GPU.

GPU cooler design is such that there is no one right answer. Because open air designs can rely on large axial fans with little air resistance, they can be very quiet. But overall cooling becomes the chassis’ job. Blowers, by contrast, are fully exhausting and work in practically any chassis (no matter how bad the chassis cooling is), but they are noisier thanks to the high-RPM radial fan. NVIDIA for their part has long favored blowers, but this appears to be at an end. It does make me wonder what this means for their OEM customers (whose designs often count on the video card being a blower), but that’s a deeper discussion for another time.

At any rate, from NVIDIA’s press release we know that each fan features 13 blades, and that the shroud itself is once again made out of die-cast aluminum. Also buried in the press release is information that NVIDIA is once again using a vapor chamber here to transfer heat between the GPU and the heatsink, and that it’s being called a “full length” vapor chamber, which would mean it’s notably larger than the vapor chamber in NVIDIA’s past cards. Unfortunately this is the limit to what we know right now about the cooler, and I expect there’s more to find out in the coming days and weeks. In the meantime NVIDIA has disclosed that the resulting card is the standard size for a high-end NVIDIA reference card: dual slot width, 10.5 inches long.

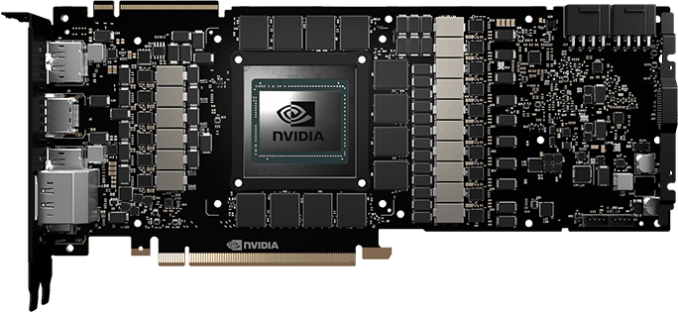

Diving down, we also have a few tidbits about the reference PCB, including the power delivery system. NVIDIA’s press release specifically calls out a 13 phase power delivery system, which matches the low-resolution PCB render they’ve posted to their site. NVIDIA has always been somewhat frugal on VRMs – their cards have more than enough capacity for stock operation, but not much excess capacity for power-intensive overclocking – so it sounds like they are trying to meet overclockers half-way here. Though once we get to fully custom partner cards, I still expect the MSIs and ASUSes of the world to go nuts and try to outdo NVIDIA.

NVIDIA’s photos also make it clear that in order to meet that 250W+ TDP, we’re looking at an 8pin + 8pin configuration for PCIe power connectors. On paper such a setup is good for 375W, and while I don’t expect NVIDIA to go quite that far, typically we’d see a 300W 6pin + 8pin setup instead. So NVIDIA is clearly planning on drawing more power, and they’re using the connectors to match. Thankfully 8pin power connectors are fairly common on 500W+ PSUs these days, however it’s possible that older PSU owners may get pinched by the need for dual 8pin cables.
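The 375W and 300W figures follow from the standard PCIe power budget: 75W from the slot, 75W per 6pin connector, and 150W per 8pin connector. A minimal sketch of that arithmetic (the helper and constant names are ours):

```python
PCIE_SLOT_W = 75                         # power available from the x16 slot itself
CONNECTOR_W = {"6pin": 75, "8pin": 150}  # per auxiliary power connector


def board_power_limit(connectors):
    """In-spec power budget in watts for a card with the given aux connectors."""
    return PCIE_SLOT_W + sum(CONNECTOR_W[c] for c in connectors)


dual_8pin = board_power_limit(["8pin", "8pin"])
six_plus_eight = board_power_limit(["6pin", "8pin"])

# Headroom over the Founders Edition's 260W TDP
fe_headroom = dual_8pin - 260

print(f"8pin + 8pin: {dual_8pin}W")
print(f"6pin + 8pin: {six_plus_eight}W")
print(f"Headroom over 260W TDP: {fe_headroom}W")
```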

Finally, for display outputs, NVIDIA has confirmed that their latest generation flagship once again supports up to 4 displays. However there are actually 5 display outputs on the card: the traditional 3 DisplayPorts and a sole HDMI port, but now there’s also a singular USB Type-C port, offering VirtualLink support for VR headsets. As a result, users can pick any 4 of the 5 ports, with the Type-C port serving as a DisplayPort when not hooked up to a VR headset. Though this does mean that the final DisplayPort has been somewhat oddly shoved into the second row, in order to make room for the USB Type-C port.

Wrapping up the GeForce RTX 2080 Ti, NVIDIA’s new flagship has been priced to match. In fact it is seeing the greatest price hike of them all. Stock cards will start at $999, $300 above the GTX 1080 Ti. Meanwhile NVIDIA’s own Founders Edition card carries a $200 premium on top of that, retailing for $1199, the same price as the last-generation Titan Xp. The Ti/Titan dichotomy has always been a bit odd in recent years, so it would seem that NVIDIA has simply replaced the Titan with the Ti, and priced it to match.

223 Comments

eva02langley - Tuesday, August 21, 2018 - link

You didn't read the article, did you? This MSRP will never be achieved. As of now, third party cards are retailing for as much or even more.

Vega went into the same situation because of HBM2 pricing. The same will occur for GDDR6. Add to this a 760 mm2 die and you got yourself a trap.

piiman - Tuesday, August 21, 2018 - link

Couple of weeks? lol good luck

evernessince - Friday, August 24, 2018 - link

Nvidia certainly does know how to milk people. They've got people paying the $200 founders edition tax and people believing that they'll actually pay MSRP. Oh, that's rich. MSRP is a joke, expect to be milked for the full founders edition price until Nvidia is forced otherwise.

Remember the 10 years of Intel CPU's being the only choice? Welcome to the GPU version of that.

Frenetic Pony - Monday, August 20, 2018 - link

It's not a "bad deal" only because AMD is failing still, would probably be hundreds less if the competition could get their act together : /

sgeocla - Tuesday, August 21, 2018 - link

This is exactly what is wrong with AMD competing against Nvidia in the high-end GPU market. People only want AMD to compete to drive down Nvidia prices so that they buy the Nvidia cards anyway, and AMD gets stuck with the bill without being compensated for R&D.

AMD should not waste resources on competing in the high end GPU market and mostly focus on giving the mainstream GPU users (that actually care about both price and performance) the products that they want and deserve.

DanNeely - Tuesday, August 21, 2018 - link

The only way AMD can offer enough competitive pressure to influence NVidia's pricing is to offer similar levels of performance per mm^2 of GPU die. If they need 2x as much chip to match performance nvidia can lift prices across the entire product line matching price/pref with their competition while inflating the price of high end cards and laughing all the way to the bank. Meanwhile if AMD is reasonably competitive densitywise there's no reason they can't make cards that are able to compete most if not all the way up the stack.

Impulses - Monday, August 20, 2018 - link

I'm in for an RTX 2080 if it actually beats 1080 To, but if it barely does so then a clearance deal on the latter might be the better deal...

eddman - Monday, August 20, 2018 - link

What kind of reasoning is that. Based on that thought process it'd be ok for 3080 to cost $1000 since it might match or beat 2080 Ti.

2080 is replacing 1080 and as a direct replacement it is $100 overpriced. Nvidia is simply raising the price because they can.

P.S. No, inflation is not the driving factor here since 2016 $600 is about $630 now.

CaedenV - Monday, August 20, 2018 - link

Not to entirely defend their prices (I agree it is higher than I want to pay), but the launch is compared to the 8800 for very good reason. Inflation adjusted, the 8800 with its introduction of CUDA was also an inflation adjusted $1000 on launch... and also useless. New tech is expensive, and not great. RTX is not going to work at 60fps 1080p... the demos were showing something closer to 720p 30fps. People who buy these chips have 4k monitors and will pretty much never use RTX (except for rendering video projects), just as nobody used CUDA for much of anything when it was introduced. This is the same thing.

Fast forward a few years, you see the launch of the 580, for an inflation adjusted $500, and CUDA was actually powerful enough to be useful. We will see a similar curve here. Lots of R&D to pay for, lots of kinks to work out, lots more cores that need to be added, and eventually it will all be affordable and usable, even on a 4k display.

That said; How much you want to bet that if AMD had a competing card, nVidia would be selling similar hardware without the tensor cores and ray tracing capabilities, but still fantastic gaming cards for $600... would be great to see some competition, but sadly I bet we will see pressure from Intel's dGPUs before we see anything high end from AMD (other than a few limited release cards that nobody can actually purchase)

eddman - Tuesday, August 21, 2018 - link

$1000? 8800 GTX launched for $600 in 2006 which is about $750 today and 580's $500 is about $577 now.

I do get the point about new technologies, R&D, etc. but it is still $100 more expensive than the card it is replacing (ok, $70).