ATI Radeon X800 Pro and XT Platinum Edition: R420 Arrives

by Derek Wilson on May 4, 2004 10:28 AM EST. Posted in GPUs

A New Compression Scheme: 3Dc

3Dc isn't something that's going to make current games run better or faster. We aren't talking about a glamorous technology; 3Dc is a lossy compression scheme for use in 3D applications (as its name is supposed to imply). Bandwidth is a highly prized commodity inside a GPU, and compression schemes exist to try to help alleviate pressure on the developer to limit the amount of data pushed through a graphics card.

There are already a few compression schemes out there, but in their highest compression modes, they introduce some discontinuity into the texture. This is acceptable in some applications, but not all. The specific application ATI is initially targeting for use with 3Dc is normal mapping.

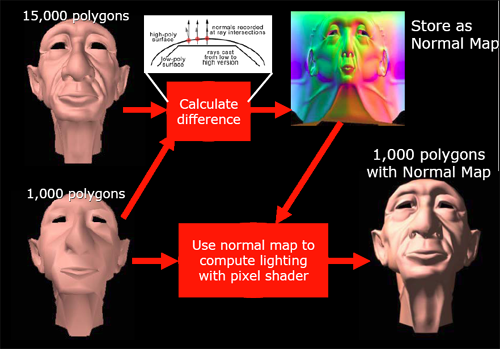

Normal mapping makes the lighting of a surface more detailed than its geometry. Usually, the normal vector at any given point is interpolated from the normal data stored at the vertex level, but, in order to increase the detail of lighting and texturing effects on a surface, normal maps can be used to specify the way normal vectors should be oriented across an entire surface at a high level of detail. If very large normal maps are used, enormous amounts of lighting detail can produce the illusion of geometry that isn't actually there.

Here's an example of how normal mapping can add the appearance of more detailed geometry

In order to work with these large data sets, we would want to use a compression scheme. But since we don't want discontinuities in our lighting (which could appear as flashy or jumpy lighting on a surface), we would like a compression scheme that maintains the smoothness of the original normal map. Enter 3Dc.

This is an example of how 3Dc can help alleviate continuity problems in normal map compression

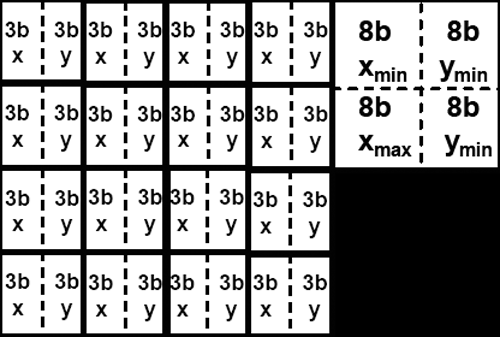

In order to facilitate a high level of continuity, 3Dc divides textures into four by four blocks of vector4 data with 8 bits per component (512-bit blocks). For normal map compression, we throw out the z component, which can be calculated from the x and y components of the vector (all normal vectors in a normal map are unit vectors and fit the form x^2 + y^2 + z^2 = 1). After throwing out the unused 16 bits from each normal vector, we then calculate the minimum and maximum x and the minimum and maximum y for the entire 4x4 block. These four values are stored, and each x or y value is stored as a 3-bit value selecting any of 8 equally spaced steps between the minimum and maximum x or y values (inclusive).
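The per-block scheme described above can be sketched in a few lines of Python. This is only an illustration of the min/max-plus-3-bit-index idea, not ATI's hardware implementation: the function names are hypothetical, rounding to the nearest step is an assumption, and `reconstruct_z` assumes x and y have already been mapped to the range [-1, 1] and that z is non-negative (as is typical for tangent-space normal maps).

```python
import math

STEPS = 8  # a 3-bit index selects one of 8 equally spaced levels


def compress_channel(values):
    """Return (min, max, indices) for one channel of a 4x4 block.

    Each index is a 3-bit value (0..7) picking the nearest of 8 levels
    between min and max, inclusive. Nearest-level rounding is an assumption.
    """
    lo, hi = min(values), max(values)
    if hi == lo:
        # Flat block: every texel sits on the single stored value.
        return lo, hi, [0] * len(values)
    scale = (STEPS - 1) / (hi - lo)
    return lo, hi, [round((v - lo) * scale) for v in values]


def decompress_channel(lo, hi, indices):
    """Rebuild approximate channel values from min, max, and 3-bit indices."""
    step = (hi - lo) / (STEPS - 1)
    return [lo + i * step for i in indices]


def compress_block(texels):
    """Compress 16 (x, y) byte pairs; z is dropped and recomputed later."""
    xs = [t[0] for t in texels]
    ys = [t[1] for t in texels]
    return compress_channel(xs), compress_channel(ys)


def reconstruct_z(x, y):
    """Recover z from x^2 + y^2 + z^2 = 1, assuming z >= 0."""
    return math.sqrt(max(0.0, 1.0 - x * x - y * y))
```

Because every texel in a block is quantized against that block's own minimum and maximum, the worst-case error per channel is half of one step, which is what keeps smoothly varying normals smooth after decompression.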

The storage space required for a 4x4 block of normal map data using 3Dc

The resulting compressed data is 4 vectors * 4 vectors * 2 components * 3 bits + 32 bits (128 bits) large, giving a 4:1 compression ratio for normal maps with no discontinuities. Any two-channel or scalar data can be compressed fairly well via this scheme. When compressing data that is very noisy (or otherwise inherently discontinuous -- not that this is often seen), accuracy may suffer, and the compression ratio falls off for data with more than two components (other compression schemes may be more useful in these cases).
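The block-size arithmetic can be checked with a short Python calculation. The variable names are ours; the figures come straight from the description above.

```python
TEXELS = 4 * 4                 # one 4x4 block

# Uncompressed: vector4 data with 8 bits per component.
uncompressed_bits = TEXELS * 4 * 8

# Compressed: a 3-bit index for x and for y on every texel,
# plus four 8-bit endpoints (min x, max x, min y, max y).
index_bits = TEXELS * 2 * 3
endpoint_bits = 4 * 8
compressed_bits = index_bits + endpoint_bits

ratio = uncompressed_bits / compressed_bits
print(uncompressed_bits, compressed_bits, ratio)  # 512 128 4.0
```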

ATI would really like this compression scheme to catch on much as S3TC and DXTC have. Of course, the fact that compression and decompression of 3Dc is built into R420 (and not NV40) won't play a small part in ATI's evangelism of the technology. After all is said and done, future hardware support by other vendors will be based on the software adoption rate of the technology, and software adoption will likely also be influenced by hardware vendors' plans for future support.

As far as we are concerned, all methods of increasing apparent usable bandwidth inside a GPU in order to deliver higher quality games to end users are welcome. Until memory bandwidth surpasses the needs of graphics processors (which will never happen), innovative and effective compression schemes will be very helpful in applying all the computational power available in modern GPUs to very large sets of data.

95 Comments

adntaylor - Tuesday, May 4, 2004 - link

I wish they'd also tested with an nForce3 motherboard. nVidia have managed some very interesting performance enhancements on the AGP to HT tunnel that only works with the nVidia graphics cards. That might have pushed the 6800 in front - who knows!

UlricT - Tuesday, May 4, 2004 - link

Hey... Though the review rocks, you guys desperately need an editor for spelling and grammar!

Jeff7181 - Tuesday, May 4, 2004 - link

This pretty much settles it. With the excellent comparision between architectures, and the benchmark scores to prove the advantages and disadvantages of the architecture... my next card will be made by ATI.

NV40 sure has a lot of potential, one might say it's ahead of it's time, supporting SM 3.0 and being so programmable. However, with a product cycle of 6 months to a year, being ahead of it's time is more of a disadvantage in this case. People don't care what it COULD do... people care what it DOES do... and the R420 seems to do it better. I just hope my venture into the world of ATI doesn't turn into driver hell.

NullSubroutine - Tuesday, May 4, 2004 - link

Im fan boy for neither company and objectively I can say the cards are equal. Some games the ATI cards are faster other games the Nvidia cards are faster. So it all depends on the game you play to which one is better and the price of the card you are looking for. (Hmm, maybe motherboard companies could make 2 AGP slots...)

About the arguement of the PS 2.0/3.0...

2.0 Cards will be able to play games with 3.0, they may not have full functionality or they may run it slower. This will remain to be seen till games begin to use 3.0. However...

The one thing bad for Nvidia in my eyes is the pixel shader quality that can be seen in Farcry, whether this is a game or driver glitch it is still unknown.

I forgot to add I like that the ATI cards use less power, I dont want to have to pay for another PSU ontop of already high prices of video cards. I would also like to see a review again a month from now when newer drivers come out to see how much things have changed.

l3ored - Tuesday, May 4, 2004 - link

pschhh, did you see the unreal 3 demo? in the video i saw, it looked like it ran at about 5fps imagine running halo on a gfx 5200. however you could run it if you were to turn of halo's PS 2 effects. i think thats how it's going to be with unreal 3

Slaanesh - Tuesday, May 4, 2004 - link

Since PS 3.0 is not supported by the X800 hardware, does this mean that those extremely impressive graphical features showed in the Unreal 3 tech demo (NV40 launch) and the near-to-be-released goodlooking PS 3.0 Far Cry update are both NOT playable on the X800?? This would be a huge disadvantage for ATi since alot of the upcoming topgames will support PS3.0!

l3ored - Tuesday, May 4, 2004 - link

i agree phiro, personally i think im gonna get the one that hits $200 first (may be a while)

Phiro - Tuesday, May 4, 2004 - link

Hearing about the 6850 and the other Emergency-Extreme-Whatever 6800 variants that are floating about irritates me greatly. Nvidia, you are losing your way!

Instead of spending all that time, effort and $$ just to try to take the "speed champ" title, make your shit that much cheaper instead! If your 6800 Ultra was $425 instead of $500, that would give you a hell of alot more market share and $$ than a stupid Emergency Edition of your top end cards... We laugh at Intel for doing it, and now you're doing it too, come fricking on...

gordon151 - Tuesday, May 4, 2004 - link

#14, I think it has more to do with the fact those OpenGL benchmarks are based on a single engine that was never fast on ATI hardware to begin with.

araczynski - Tuesday, May 4, 2004 - link

12: personally i think the TNT line was better then the Voodoo line. I think they bought them out only to get rid of the competition, which was rather stupid because i think they would have died out sooner or later anyway because nvidia was just better. I would guess that perhaps they bought them out cuz that gave them patent rights and they woudln't have to worry about being sued for probably copying some of the technology :)