ATI Radeon X800 Pro and XT Platinum Edition: R420 Arrives

by Derek Wilson on May 4, 2004 10:28 AM EST, posted in GPUs

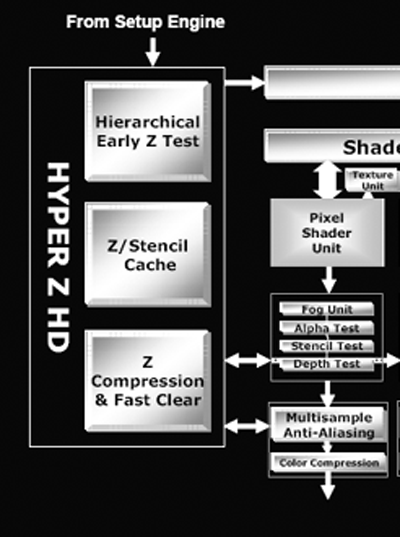

Depth and Stencil with Hyper Z HD

In accordance with their "High Definition Gaming" theme, ATI is calling the R420's method of handling depth and stencil processing Hyper Z HD. Depth and stencil processing is handled at multiple points throughout the pipeline, but grouping all this hardware into one block makes sense, as each step along the way touches the z-buffer (an on-die cache of z and stencil data). We have previously covered other incarnations of Hyper Z, which have done basically the same job. Here we can see where the Hyper Z HD functionality interfaces with the rendering pipeline:

The R420 architecture implements both hierarchical z and early z occlusion culling in the rendering pipeline.

With early z, as data emerges from the geometry processing portion of the GPU, it is possible to skip further rendering of large portions of the scene that are occluded (or covered) by other geometry. In this way, pixels that won't be seen don't need to run through the pixel shader pipelines and waste precious resources.
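To make the saving concrete, here is a minimal software sketch of an early z test. It is an illustration only, not a model of ATI's hardware: the function names, the 4x4 "screen", and the convention that a smaller z is nearer are all assumptions made for the example.

```python
def quad(width, height, z):
    """Hypothetical helper: fragments of a screen-filling quad at constant depth z."""
    return [(x, y, z) for y in range(height) for x in range(width)]


def render(fragments, width, height, early_z=True):
    """Count pixel shader invocations for a stream of (x, y, z) fragments.

    Assumes smaller z means nearer to the viewer and a 'less than' depth test.
    """
    depth = [[float("inf")] * width for _ in range(height)]
    shaded = 0
    for x, y, z in fragments:
        # Early z: reject an occluded fragment before it reaches the shader.
        if early_z and z >= depth[y][x]:
            continue
        shaded += 1  # the (expensive) pixel shader would run here
        # Final depth test before the write, needed with or without early z.
        if z < depth[y][x]:
            depth[y][x] = z
    return shaded


near, far = quad(4, 4, 0.2), quad(4, 4, 0.8)
print(render(near + far, 4, 4, early_z=True))   # 16: the far quad is never shaded
print(render(near + far, 4, 4, early_z=False))  # 32: every fragment is shaded
print(render(far + near, 4, 4, early_z=True))   # 32: back-to-front order defeats early z
```

The last case shows why submission order matters: early z can only reject what is already known to be covered.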

Hierarchical z indicates that large blocks of pixels are checked and thrown out if the entire tile is occluded. In R420, these tiles are the very same ones output by the geometry and setup engine. If only part of a tile is occluded, smaller subsections are checked and thrown out if possible. This processing doesn't eliminate all the occluded pixels, so pixels coming out of the pixel pipelines also need to be tested for visibility before they are drawn to the framebuffer. The real difference between R3xx and R420 is in the number of pixels that can be gracefully handled.
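The tile-then-subsection idea can be sketched in a few lines. This is a simplified illustration under stated assumptions, not the R420 implementation: the incoming primitive is assumed to cover the whole tile at a single constant depth, smaller z is nearer, the 8x8 tile and 2x2 minimum block are arbitrary choices, and the farthest depth of each block is recomputed on the fly where real hardware would keep it in a cached hierarchy.

```python
def max_depth(depth, x0, y0, size):
    """Farthest depth currently stored in the size x size block at (x0, y0)."""
    return max(depth[y][x]
               for y in range(y0, y0 + size)
               for x in range(x0, x0 + size))


def hier_z_survivors(depth, x0, y0, size, z_new, min_size=2):
    """Count pixels that survive coarse rejection and still need per-pixel tests.

    Assumes the new primitive covers the whole block at constant depth z_new.
    """
    # If the primitive is behind even the farthest stored pixel, drop the block.
    if z_new >= max_depth(depth, x0, y0, size):
        return 0
    # Smallest block reached: hand the remaining pixels to the per-pixel test.
    if size <= min_size:
        return size * size
    # Partially occluded: check the four smaller subsections.
    half = size // 2
    return sum(hier_z_survivors(depth, x0 + dx, y0 + dy, half, z_new, min_size)
               for dy in (0, half) for dx in (0, half))


# 8x8 tile: left half already covered by a near occluder, right half empty.
tile = [[0.3] * 4 + [1.0] * 4 for _ in range(8)]
print(hier_z_survivors(tile, 0, 0, 8, 0.5))  # 32: only the right half survives
print(hier_z_survivors(tile, 0, 0, 8, 0.2))  # 64: nearer than everything stored
```

Pixels that survive here are not guaranteed visible, which is why a final per-pixel test is still required before the framebuffer write.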

As rasterization draws nearer, the ATI and NVIDIA architectures begin to differentiate themselves more. Both claim that they are able to calculate up to 32 z or stencil operations per clock, but the conditions under which this is true are different. NV40 is able to push two z/stencil operations per pixel pipeline during a z or stencil only pass or in other cases when no color data is being dealt with (the color unit in NV40 can work with z/stencil data when no color computation is needed). By contrast, R420 pushes 32 z/stencil operations per clock cycle when antialiasing is enabled (one z/stencil operation can be completed per clock at the end of each pixel pipeline, and one z/stencil operation can be completed inside the multisample AA unit).

The different approaches these architectures take mean that each will excel in different ways when dealing with z or stencil data. Under R420, z/stencil speed will be maximized when antialiasing is enabled and will only see 16 z/stencil operations per clock under non-antialiased rendering. NV40 will achieve maximum z/stencil performance when a z/stencil only pass is performed regardless of the state of antialiasing.
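The peak figures quoted above reduce to simple arithmetic, sketched here. The function names are made up for the illustration, and the count of 16 pixel pipelines per chip is an assumption inferred from the 32 and 16 operations per clock figures; these are theoretical peaks, not measured throughput.

```python
PIPELINES = 16  # assumed pixel pipelines on both R420 (XT) and NV40 (Ultra)


def r420_z_ops_per_clock(antialiasing):
    """One z/stencil op at the end of each pipeline, plus one in the
    multisample AA unit when antialiasing is enabled."""
    per_pipeline = 2 if antialiasing else 1
    return PIPELINES * per_pipeline


def nv40_z_ops_per_clock(z_stencil_only):
    """Two z/stencil ops per pipeline when no color work is needed,
    otherwise one."""
    per_pipeline = 2 if z_stencil_only else 1
    return PIPELINES * per_pipeline


for aa in (False, True):
    print(f"R420, AA {'on' if aa else 'off'}: {r420_z_ops_per_clock(aa)} ops/clock")
for z_only in (False, True):
    label = "z/stencil-only pass" if z_only else "color + z pass"
    print(f"NV40, {label}: {nv40_z_ops_per_clock(z_only)} ops/clock")
```

Both chips top out at 32 operations per clock; what differs is which rendering condition unlocks the second operation per pipeline.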

The average case for NV40 will be closer to 16 z/stencil operations per clock, and if users don't run antialiasing on R420 they won't see more than 16 z/stencil operations per clock. Really, if everyone begins to enable antialiasing, R420 will begin to shine in real world situations, and if developers embrace z or stencil only passes (such as in Doom III), NV40 will do very well. The bottom line on which approach is better will be defined by the direction the users and developers take in the future. Will enabling antialiasing win out over running at ultra-high resolutions? Will developers mimic John Carmack and the intensive shadowing capabilities of Doom III? Both scenarios could play out simultaneously, but, really, only time will tell.

95 Comments


ZobarStyl - Tuesday, May 4, 2004 - link

Jibbo, I thought that the dynamic branching capability as part of PS3.0 could make rendering a scene faster because it skips rendering unnecessary pixels and thus could offer an increase in performance, albeit a small one. In an interview one of the developers of Far Cry said that there weren't many more things that PS3.0 could do that 2.0 can't, but that 3.0 can do things in a single pass that a 2.0 shader would have to do in multiple passes. The way he described it, the real pretty effects can come in later but a streamlined (read: slightly faster) shader could very well improve NV40 scores as is. This seems kind of analogous to the whole 64-bit processor ordeal going on; Intel says you don't need it, but then most articles show higher scores from A64 chips when they are in a 64-bit OS, so basically if you streamline it you can run a little bit faster than in less efficient 32-bit.

In the end, it'll still be bitter fanboys fighting it out and buying whatever product their respective corporation feeds them, despite features or speeds or price or whatever. Personally, like I said before, I'll wait and see who really ends up earning my dollar.

Anyway, thanks for keeping me on my toes though, jib...I can't get lazy now... =)

Barkuti - Tuesday, May 4, 2004 - link

From my point of view, the 6800U is superior high end hardware. Folks, you don't need to be that intelligent to understand that if ATI needs 520 MHz to "beat" nVidia's 400 MHz chip, as it will need to overclock proportionally to keep the same level of performance, that means it will need a good bunch of extra MHz to stay at least on par on the overclocking front.

I think the final revision of the 6800U will manage 500 MHz overclocks or around (probably more if they deliberately set the initial clock low waiting for ATI), so ATI's hardware may need around 650 MHz, which I doubt it'll make. As for the power requirements, sure ATI is the winner, but nVidia's card can be fed with more standard PSUs than they claim; I just think they played on the safe side.

Oh, sure, power may be a limiting factor when oc'ing the 6800U, but the reality is that people who buy this kind of hardware already have top end computer components (including the PSU), so no worries here either.

And finally speaking, I think PS 3.0 will make some additional difference. With the possibility to somewhat enhance shader performance and the superior displacement mapping effect, it may give it the edge in at least a handful of games. We'll see.

"Just my 2 cents"

Cheers

Staples - Tuesday, May 4, 2004 - link

Everyone be sure to check out Tom's review. Looks like the X800 did better here than it did against the 6800. I have seen other reviews and the X800 doesn't really seem as fast in comparison as it does here.

Anyway, it is a lot faster than I thought. The 6800 was impressive but it seems that the reason it does really well in some games and not so great in others is because some games have NVIDIA specific code that the 6800 takes advantage of very well.

UlricT - Tuesday, May 4, 2004 - link

wtf? the GT is outperforming the Ultra in F1 Challenge?

jibbo - Tuesday, May 4, 2004 - link

Agree with you all the way on the fanboys, ZobarStyl.

Just wanted to point out that PS3.0 is not "faster" - it's simply an API. It allows longer and more complex shaders so, if anything, it's likely to be "slower." I'm guessing that designers who use PS3.0 heavily will see serious fill-rate problems on the 6800. These shaders will have potentially 65k+ instructions with dynamic branching, a minimum of 4 render targets, 32-bit FP minimum color format, etc - I seriously doubt any hardcore 3.0 shader programs will run faster than existing 2.0 shaders.

Clearly a developer can have much nicer quality and exotic effects if he/she exploits these, but how many gamers will have a PS3.0 card that will run these extremely complex shaders at high resolutions and AA/AF without crawling to single-digit fps? It's my guess that it will be *at least* a year until games show serious quality differentiation between PS2.0 and PS3.0. But I have been wrong in the past...

T8000 - Tuesday, May 4, 2004 - link

I think it is strange that the tested X800XT is clocked at 520 MHz, while the 6800U, which is manufactured by the same Taiwanese company and also has 16 pipelines, is set at 400 MHz.

This suggests a lot of headroom on the 6800U or a large overclock on the X800XT.

Also note that the 6800U scored much better on tomshardware.com (HALO 65FPS@1600x1200), but that can also be caused by their use of the 3.2 GHz P4 instead of a 2.2 GHz A64.

ZobarStyl - Tuesday, May 4, 2004 - link

I love seeing these fanboys announce each product as the best thing ever (same thing happened with the Prescott, Intel fanboys called it the end of AMD and the AMD guys laughed and called it a flamethrower) without actually reading the benches. NV won some, ATi won some. Most of the time it was tiny margins either way. Fanboys aside, this is gonna be a driver war, nothing more. The biggest margin was on Far Cry, and I'm personally waiting on the faster PS3.0 to see what that bench really is. This is a great card but price drops and driver updates will eventually show us the real victor.

jibbo - Tuesday, May 4, 2004 - link

If I had to guess, DX10 and Longhorn will coincide with the release of new hardware from everyone.

Akaz1976 - Tuesday, May 4, 2004 - link

Just thought of something. If I am reading the AT review right, ATi has now milked the original Radeon 9700 architecture for nearly 2 years (sure says a lot of good things about the ArtX design team).

Anyone know when the true next gen chip can be expected?

Akaz

Ilmater - Tuesday, May 4, 2004 - link

---------------------------------------

Hearing about the 6850 and the other Emergency-Extreme-Whatever 6800 variants that are floating about irritates me greatly. Nvidia, you are losing your way!

Instead of spending all that time, effort and $$ just to try to take the "speed champ" title, make your shit that much cheaper instead! If your 6800 Ultra was $425 instead of $500, that would give you a hell of a lot more market share and $$ than a stupid Emergency Edition of your top end cards... We laugh at Intel for doing it, and now you're doing it too, come fricking on...

--------------------------------------------

This is ridiculous!! What do you think the XT Platinum Edition from ATI is? The only difference is that nVidia released first, so it's more obvious when they do it than when ATI does. I'm not really a fanboy of either, but you shouldn't dog nVidia for something that everyone does.

Plus, if nVidia dropped their prices, ATI would do the same thing. Then nVidia would be right back where it was before, but they wouldn't be making any money on the cards.