ATI Radeon X800 Pro and XT Platinum Edition: R420 Arrives

by Derek Wilson on May 4, 2004 10:28 AM EST - Posted in GPUs

A New Compression Scheme: 3Dc

3Dc isn't something that's going to make current games run better or faster. We aren't talking about a glamorous technology; 3Dc is a lossy compression scheme for use in 3D applications (as its name is supposed to imply). Bandwidth is a highly prized commodity inside a GPU, and compression schemes exist to relieve the pressure on developers to limit the amount of data pushed through a graphics card.

There are already a few compression schemes out there, but in their highest compression modes, they introduce some discontinuity into the texture. This is acceptable in some applications, but not all. The specific application ATI is initially targeting for use with 3Dc is normal mapping.

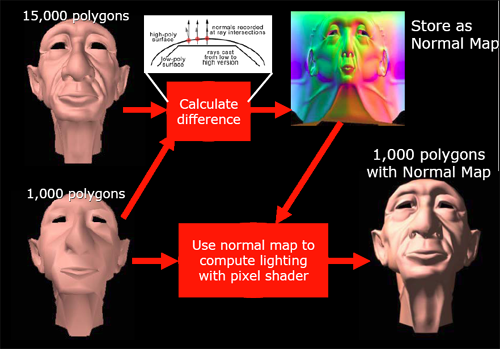

Normal mapping is used to make the lighting of a surface more detailed than its geometry. Usually, the normal vector at any given point is interpolated from the normal data stored at the vertex level, but, in order to increase the detail of lighting and texturing effects on a surface, normal maps can be used to specify the way normal vectors should be oriented across an entire surface at a high level of detail. If very large normal maps are used, enormous amounts of lighting detail can produce the illusion of geometry that isn't actually there.

Here's an example of how normal mapping can add the appearance of more detailed geometry

In order to work with these large data sets, we would want to use a compression scheme. But since we don't want discontinuities in our lighting (which could appear as flashy or jumpy lighting on a surface), we would like a compression scheme that maintains the smoothness of the original normal map. Enter 3Dc.

This is an example of how 3Dc can help alleviate continuity problems in normal map compression

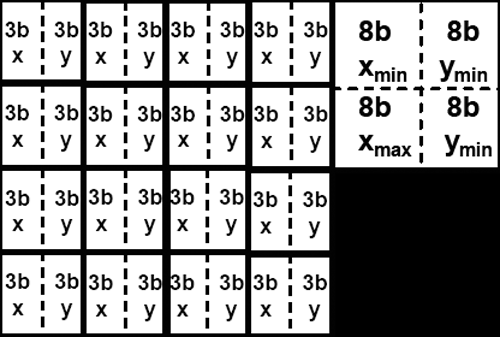

In order to facilitate a high level of continuity, 3Dc divides textures into four by four blocks of vector4 data with 8 bits per component (512-bit blocks). For normal map compression, we throw out the z component, which can be calculated from the x and y components of the vector (all normal vectors in a normal map are unit vectors and fit the form x^2 + y^2 + z^2 = 1). After throwing out the unused 16 bits from each normal vector, we then calculate the minimum and maximum x and the minimum and maximum y for the entire 4x4 block. These four values are stored as 8-bit values, and each x or y value is stored as a 3-bit value selecting one of 8 equally spaced steps between the minimum and maximum x or y values (inclusive).
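The scheme described above can be sketched in a few lines of Python. This is only an illustration of the min/max plus 3-bit index idea as the text describes it, not ATI's hardware implementation or its actual bit layout; the function names (`compress_channel`, `decompress_channel`, `reconstruct_z`) are made up for this example, and one channel (x or y) of a 4x4 block is handled at a time.

```python
import math

def compress_channel(values):
    """Compress one channel (x or y) of a 4x4 block of 8-bit values.

    Returns (lo, hi, indices): the block minimum, the block maximum,
    and one 3-bit index (0..7) per value.
    """
    lo, hi = min(values), max(values)
    if hi == lo:
        # Flat block: every value sits on the first step.
        return lo, hi, [0] * len(values)
    # 8 equally spaced steps between lo and hi (inclusive) means 7 intervals.
    indices = [round((v - lo) * 7 / (hi - lo)) for v in values]
    return lo, hi, indices

def decompress_channel(lo, hi, indices):
    """Rebuild approximate channel values from the stored min, max and indices."""
    return [lo + (hi - lo) * i / 7 for i in indices]

def reconstruct_z(x, y):
    """Recover z of a unit normal from x and y, both in [-1, 1].

    Assumes the normal points out of the surface (z >= 0); the clamp
    guards against small negative values caused by quantization error.
    """
    return math.sqrt(max(0.0, 1.0 - x * x - y * y))
```

Because each value is snapped to the nearest of 8 steps spanning only the range found inside its own block, the worst-case error in this sketch is half a step, or (max - min) / 14, which is why smooth blocks with a narrow range survive compression so well.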

The storage space required for a 4x4 block of normal map data using 3Dc

The resulting compressed data is 4 * 4 vectors * 2 components * 3 bits + 32 bits = 128 bits large, giving a 4:1 compression ratio for normal maps with no discontinuities. Any two channel or scalar data can be compressed fairly well via this scheme. When compressing data that is very noisy (or otherwise inherently discontinuous -- not that this is often seen) accuracy may suffer, and the compression ratio falls off for data that has more than two components (other compression schemes may be more useful in these cases).
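For readers who want to check the numbers, the block-size arithmetic can be written out as a short Python calculation. The variable names are just labels for this example; the figures themselves come straight from the description above.

```python
TEXELS_PER_BLOCK = 4 * 4  # one 4x4 block of normal vectors

# Uncompressed: vector4 data with 8 bits per component.
uncompressed_bits = TEXELS_PER_BLOCK * 4 * 8

# Compressed: a 3-bit index for each of x and y per texel...
index_bits = TEXELS_PER_BLOCK * 2 * 3
# ...plus min x, max x, min y and max y at 8 bits each.
endpoint_bits = 4 * 8

compressed_bits = index_bits + endpoint_bits
ratio = uncompressed_bits / compressed_bits

print(uncompressed_bits, compressed_bits, ratio)
```

The 32 bits of minimum and maximum values are a fixed cost per block, so they account for a quarter of the compressed size.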

ATI would really like this compression scheme to catch on much as S3TC and DXTC have. Of course, the fact that compression and decompression of 3Dc is built in to R420 (and not NV40) won't play a small part in ATI's evangelism of the technology. After all is said and done, future hardware support by other vendors will be based on the software adoption rate of the technology, and software adoption will likely also be influenced by hardware vendors' plans for future support.

As far as we are concerned, all methods of increasing apparent usable bandwidth inside a GPU in order to deliver higher quality games to end users are welcome. Until memory bandwidth surpasses the needs of graphics processors (which will never happen), innovative and effective compression schemes will be very helpful in applying all the computational power available in modern GPUs to very large sets of data.

95 Comments


rms - Tuesday, May 4, 2004

"the near-to-be-released goodlooking PS 3.0 Far Cry update"

When is that patch scheduled for? I recall seeing some rumour it was due in September...

rms

Fr0zeN - Tuesday, May 4, 2004

Yeah I agree, the GT looks like it's gonna give the x800P a run for its money. On a side note, the differences between P and XT versions seem to be greater than r9800's, hmm.

In the end it's the most overclockable $200 card that'll end up in my comp. There's no way I'm paying $500 for something that I can compensate for by turning the rez down to 10x7... Raw benchmarks mean nothing if it doesn't oc well!

Doop - Tuesday, May 4, 2004

The cards seem very close, I tend to favor nVidia now since they have superior multi monitor and professional 3D drivers and I regret buying my Fire GL X1.

It's strange ATi didn't announce a 16 pipeline card originally, it will be interesting to see in a month or two who actually ends up delivering cards.

I mean if they're being made in significant quantities they'll be at your local store with a reduced 'street' price but if it's just a paper launch they'll just be at Alienware, Dell (with a new PC only) or $500 if you can find one.

jensend - Tuesday, May 4, 2004

#17, the Serious Engine has nothing to do with the Q3 engine; Nvidia's superior OpenGL performance is not dependent on any handful of engines' particular quirks.

Zobar is right; contra Jibbo, the increased flexibility of PS3 means that for many 2.0 shader programs a PS3 version can achieve equivalent results with a lesser performance hit.

As far as power goes, I'm surprised NV made such a big deal out of PSU requirements, as its new cards (except the 6800U Extremely Short Production Run Edition/6850U/Whatever they end up calling that part) compare favorably wattage-wise to the 5950U and don't pull all that much more power than the 9800XT. Both companies have made a big performance per watt leap, and it'll be interesting to see how the mid-range and value cards compare in this respect.

blitz - Tuesday, May 4, 2004

"Of course, we will have to wait and see what happens in that area, but depending on what the test results for our 6850 Ultra end up looking like, we may end up recommending that NVIDIA push their prices down slightly (or shift around a few specs) in order to keep the market balanced."

It sounds as if you would be giving nvidia advice on their pricing strategy, somehow I don't think they would listen nor be influenced by your opinion. It could be better phrased that you would advise consumers to wait for prices to drop or look elsewhere for better price\performance ratio.

Cygni - Tuesday, May 4, 2004

Hmmmm, interesting. I really dont see where anyone can draw the conclusion that the x800 Pro is CLEARLY the winner. The 6800 GT and x800 Pro traded game wins back and forth. There doesnt seem to be any clear cut winner to me. Wolf, JediA, X2, F1C, and AQ3 all went clearly to the GT... this isnt open and shut. Alot of the other tests were split depending on resolution/AA. On the other hand, I dont think you can say that the GT is clearly better than the x800 Pro either.

Personally, I will buy whichever one hits a reasonable price point first. $150-200. Both seem to be pretty equal, and to me, price matters far more.

kherman - Tuesday, May 4, 2004

BRING ON DOOM 3!!!!!!

We all know inside that this is what ID was waiting for!

Diesel - Tuesday, May 4, 2004

------------------

I think it is strange that the tested X800XT is clocked at 520 Mhz, while the 6800U, that is manufactured by the same taiwanese company and also has 16 pipelines, is set at 400 Mhz.

------------------

This could be because NV40 has 222M transistors vs. R420 at 160M transistors. I think the amount of power required and heat generated is proportional to transistor count and clock speed.

edub82 - Tuesday, May 4, 2004

I know this is an ATI article but that 6800 GT is looking very attractive. It beats the x800Pro on a fairly regular basis is a single slot and molex connector card and is starting at 400 and hopefully will go down a few dollars ;) in 6 months when i want to upgrade.

Slaanesh - Tuesday, May 4, 2004

"Clearly a developer can have much nicer quality and exotic effects if he/she exploits these, but how many gamers will have a PS3.0 card that will run these extremely complex shaders at high resolutions and AA/AF without crawling to single-digit fps? It's my guess that it will be *at least* a year until games show serious quality differentiation between PS2.0 and PS3.0. But I have been wrong in the past..."

--------

I dunnow.. When Morrowind got released, only the few GF3 cards on the market were able to show the cool pixel shader water effects and they did it well; at that time I was really pissed I went for the cheaper Geforce2 Ultra although it had some better benchmarks at a much lower price. I don't think I want to make that mistake again and pay the same amount of money for a card that doesnt support the latest technology..