The AMD Threadripper 2990WX 32-Core and 2950X 16-Core Review

by Dr. Ian Cutress on August 13, 2018 9:00 AM EST

Precision Boost 2

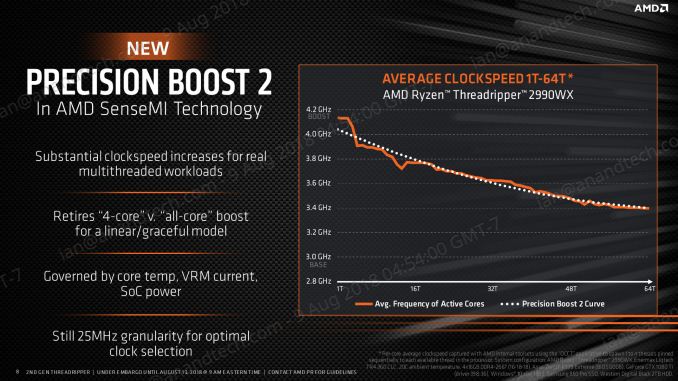

Exact per-core turbo timings for the new processors will be determined by AMD’s voltage-frequency scaling functionality through Precision Boost 2. This feature, which we covered extensively in our Ryzen 7 2700X review, relies on available power and current to determine frequency, rather than a discrete look-up table for voltage and frequency based on loading. Depending on the system's default capabilities, the frequency and voltage will shift dynamically in order to use more of the power budget available at any level of processor loading.

The idea is that the processor can use more of the power budget available to it than it could with a fixed look-up table, which has to be identical for every processor sold under the same model number.
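The contrast between the two approaches can be sketched in a few lines of Python. This is an illustration only, not AMD's algorithm: the turbo table, the cubic power model, the `watts_per_core_ghz3` coefficient, and every number below are invented for the example.

```python
# Hypothetical illustration: the table, the power model and all numbers are
# invented and do not describe AMD's firmware.

TURBO_TABLE_MHZ = {1: 4200, 2: 4200, 4: 3900, 8: 3700, 16: 3500}  # made-up table


def table_frequency(active_cores: int) -> int:
    """Fixed look-up: frequency depends only on how many cores are loaded."""
    for cores in sorted(TURBO_TABLE_MHZ):
        if active_cores <= cores:
            return TURBO_TABLE_MHZ[cores]
    # More cores than the table covers: fall back to the lowest entry.
    return min(TURBO_TABLE_MHZ.values())


def estimated_power_w(active_cores: int, freq_mhz: int,
                      watts_per_core_ghz3: float) -> float:
    """Toy power model: per-core power grows with the cube of frequency."""
    return active_cores * watts_per_core_ghz3 * (freq_mhz / 1000) ** 3


def budget_frequency(active_cores: int, power_budget_w: float,
                     watts_per_core_ghz3: float, base_mhz: int = 3000,
                     max_mhz: int = 4200, step_mhz: int = 25) -> int:
    """Budget-driven boost: climb in small steps while the estimated
    package power stays inside the budget."""
    freq = base_mhz
    while (freq + step_mhz <= max_mhz and
           estimated_power_w(active_cores, freq + step_mhz,
                             watts_per_core_ghz3) <= power_budget_w):
        freq += step_mhz
    return freq


if __name__ == "__main__":
    # Two imaginary chips with the same model number but different silicon.
    for label, coeff in (("efficient chip", 0.30), ("leaky chip", 0.40)):
        print(label, "table:", table_frequency(8),
              "budget:", budget_frequency(8, 180, coeff))
```

In this toy model both chips get the same frequency from the table, while the budget-driven search lets the more efficient chip settle at a higher clock inside the same power envelope.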

Precision Boost 2 also works in conjunction with XFR2 (eXtreme Frequency Range), which reacts to additional thermal headroom. If there is additional thermal budget, driven by a top-line cooler, then the processor is allowed to use more power up to the thermal limit and gain additional frequency. AMD claims that a good cooler in a low ambient situation can perform more than 10% better in selected tests as a result of XFR2.

Ultimately this makes testing Threadripper 2 somewhat difficult. With a turbo table, performance is fixed regardless of the characteristics of each individual bit of silicon, making power the only differentiator. With PB2 and XFR2, no two processors will perform the same. AMD has also hit a bit of a snag with these features, choosing to launch Threadripper 2 during the middle of a heatwave in Europe. Europe is famed for its lack of air conditioning everywhere, and when the ambient temperature is going above 30ºC, this will limit additional performance gains. It means that a review from a Nordic publication might see substantially better results than one from the tropics.

Luckily for us we tested most of our benchmarks while in an air conditioned hotel thanks to Intel’s Data-Centric Innovation Summit which was the week before launch.

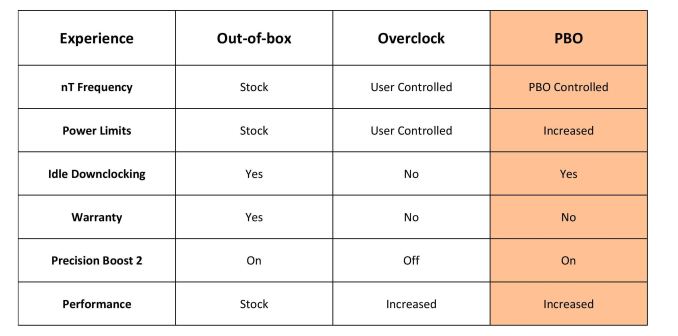

Precision Boost Overdrive

The new processors also support a feature called Precision Boost Overdrive, which looks at three key areas: package power, thermal design current, and electrical design current. If any of these three areas has additional headroom, then the system will attempt to raise both the frequency and the voltage for increased performance. PBO resembles a ‘standard’ overclock in that it gives an all-core boost, but it also gives a single-core frequency uplift and keeps Precision Boost trying to raise frequency in middle-sized workloads, which is typically lost with a standard overclock. PBO also retains the idle power savings of a stock configuration. PBO is enabled through Ryzen Master.

The three key areas are defined by AMD as follows:

- Package (CPU) Power, or PPT: The socket power consumption permitted across the voltage rails supplying the socket

- Thermal Design Current, or TDC: The maximum current that can be delivered by the motherboard voltage regulator after warming to a steady-state temperature

- Electrical Design Current, or EDC: The maximum current that can be delivered by the motherboard voltage regulator in a peak/spike condition

By extending these limits, PBO gives PB2 more headroom, letting it push the system harder and further. AMD quotes PBO as supplying up to +16% performance beyond the standard.
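As a rough illustration of what "headroom" means for the three limits, the Python sketch below computes the unused fraction of each one and shows the effect of raising the limits. The class names, the `extend` helper, the 1.2 scale factor, and all wattage and amperage figures are hypothetical. How AMD's firmware actually weighs the three limits is not described here, so the sketch only reports which limit is closest to being reached.

```python
from dataclasses import dataclass


@dataclass
class Limits:
    ppt_w: float  # package power limit, watts
    tdc_a: float  # sustained (thermally settled) current limit, amps
    edc_a: float  # peak/spike current limit, amps


@dataclass
class Telemetry:
    power_w: float      # current package power draw
    sustained_a: float  # current sustained current draw
    peak_a: float       # current peak current draw


def headroom(limits: Limits, now: Telemetry) -> dict:
    """Unused fraction of each limit (negative means the limit is exceeded)."""
    return {
        "PPT": 1 - now.power_w / limits.ppt_w,
        "TDC": 1 - now.sustained_a / limits.tdc_a,
        "EDC": 1 - now.peak_a / limits.edc_a,
    }


def tightest_limit(limits: Limits, now: Telemetry) -> str:
    """Name of the limit with the least headroom left."""
    room = headroom(limits, now)
    return min(room, key=room.get)


def extend(limits: Limits, factor: float) -> Limits:
    """Hypothetical stand-in for PBO: scale all three limits by one factor."""
    return Limits(limits.ppt_w * factor, limits.tdc_a * factor,
                  limits.edc_a * factor)


if __name__ == "__main__":
    stock = Limits(ppt_w=250, tdc_a=215, edc_a=300)   # invented values
    load = Telemetry(power_w=240, sustained_a=200, peak_a=200)
    print("stock:", headroom(stock, load), tightest_limit(stock, load))
    raised = extend(stock, 1.2)
    print("raised:", headroom(raised, load), tightest_limit(raised, load))
```

With the same load, every limit shows more headroom after `extend`, which is the room PB2 would then have available to raise frequency and voltage.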

AMD also clarifies that PBO pushes the processor beyond its rated specifications and is an overclock, and thus any damage incurred will not be covered by warranty.

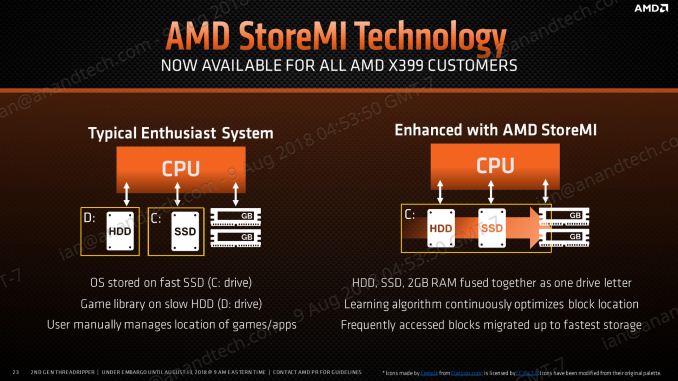

StoreMI

Also available with the new Ryzen Threadripper 2 processors is StoreMI, AMD’s caching solution, which offers configurable tiered storage for users who want to mix DRAM, SSD, and HDD storage into a single unified platform. The software implementation dynamically moves data between up to 2 GB of DRAM, up to 256 GB of SSD (NVMe or SATA), and a spinning hard drive to afford the best reading and writing experience when there isn’t enough fast storage.

AMD initially offered this software as a $20 add-on to the Ryzen APU platform, then it became free (up to a 256 GB SSD) for the Ryzen 2000-series processors. That offer now extends to Threadripper. AMD cites a 90% improvement in loading times as its best-case scenario.
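The general idea of tiered placement can be sketched as follows. StoreMI's actual policy is not described here, so this Python example assumes a simple greedy rule: the most frequently accessed data goes to the fastest tier that still has room. Only the 2 GB and 256 GB caps come from the text above; the `place` function, the data set names, sizes, and access counts are invented.

```python
# Tier caps quoted in the article; the hard drive is the unbounded fallback.
TIER_CAPACITY_GB = [("DRAM", 2), ("SSD", 256)]


def place(blocks: dict) -> dict:
    """Greedy tiering sketch (an assumption, not StoreMI's real policy).

    blocks maps a name to (size_gb, access_count). The hottest data is
    placed first, in the fastest tier that still has room.
    """
    free = dict(TIER_CAPACITY_GB)
    placement = {}
    hottest_first = sorted(blocks.items(), key=lambda kv: kv[1][1],
                           reverse=True)
    for name, (size_gb, _hits) in hottest_first:
        for tier, _cap in TIER_CAPACITY_GB:
            if size_gb <= free[tier]:
                free[tier] -= size_gb
                placement[name] = tier
                break
        else:
            # Nothing faster had room, so the data stays on the hard drive.
            placement[name] = "HDD"
    return placement


if __name__ == "__main__":
    demo = {
        "os_hot_files": (1.5, 900),   # invented sizes and access counts
        "project_cache": (0.4, 700),
        "game_assets": (40, 500),
        "video_archive": (800, 3),
    }
    for name, tier in place(demo).items():
        print(f"{name}: {tier}")
```

A real implementation would also have to move data between tiers as access patterns change, which this static sketch leaves out.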

171 Comments


plonk420 - Tuesday, August 14, 2018 - link

worse for efficiency? https://techreport.com/r.x/2018_08_13_AMD_s_Ryzen_...

Railgun - Monday, August 13, 2018 - link

How can you tell? The article isn’t even finished.

mapesdhs - Monday, August 13, 2018 - link

People will argue a lot here about performance per watt and suchlike, but in the real world the cost of the software and the annual license renewal is often far more than the base hw cost, resulting in a long term TCO that dwarfs any differences in some CPU cost. I'm referring here to the kind of user that would find the 32c option relevant.

Also missing from the article is the notion of being able to run multiple medium scale tasks on the same system, eg. 3 or 4 tasks each of which is using 8 to 10 cores. This is quite common practice. An article can only test so much though, at this level of hw the number of different parameters to consider can be very large.

Most people on tech forums of this kind will default to tasks like 3D rendering and video conversion when thinking about compute loads that can use a lot of cores, but those are very different to QCD, FEA and dozens of other tasks in research and data crunching. Some will match the arch AMD is using, others won't; some could be tweaked to run better, others will be fine with 6 to 10 cores and just run 4 instances testing different things. It varies.

Talking to an admin at COSMOS years ago, I was told that even coders with seemingly unlimited cores to play with found it quite hard to scale relevant code beyond about 512 cores, so instead for the sort of work they were doing, the centre would run multiple simulations at the same time, which on the hw platform in question worked very nicely indeed (1856 cores of the SandyBridge-EP era, 14.5TB of globally shared memory, used primarily for research in cosmology, astrophysics and particle physics; squish it all into a laptop and I'm sure Sheldon would be happy. :D) That was back in 2012, but the same concepts apply today.

For TR2, the tricky part is getting the OS to play nice, along with the BIOS, and optimised sw. It'll be interesting to see how 2990WX performance evolves over time as BIOS updates come out and AMD gets feedback on how best to exploit the design, new optimisations from sw vendors (activate TR2 mode!) and so on.

SGI dealt with a lot of these same issues when evolving its Origin design 20 years ago. For some tasks it absolutely obliterated the competition (eg. weather modelling and QCD), while for others in an unoptimised state it was terrible (animation rendering, not something that needs shared memory, but ILM wrote custom sw to reuse bits of a frame already calculated for future frame, the data able to fly between CPUs very fast, increasing throughput by 80% and making the 32-CPU systems very competitive, but in the long run it was easier to brute force on x86 and save the coder salary costs).

There are so many different tasks in the professional space, the variety is vast. It's too easy to think cores are all that matter, but sometimes having oodles of RAM is more important, or massive I/O (defense imaging, medical and GIS are good examples).

I'm just delighted to see this kind of tech finally filter down to the prosumer/consumer, but alas much of the nuance will be lost, and sadly some will undoubtedly buy based on the marketing, as opposed to the golden rule of any tech at this level: ignore the published benchmarks, the only test that actually matters is your specific intended task and data, so try and test it with that before making a purchasing decision.

Ian.

AbRASiON - Monday, August 13, 2018 - link

Really? I can't tell if posts like these are facetious or kidding or what?

I want AMD to compete so badly long term for all of us, but Intel have such immense resources, such huge infrastructure, they have ties to so many big business for high end server solutions. They have the bottom end of the low power market sealed up.

Even if their 10nm is delayed another 3 years, AMD will only just begin to start to really make a genuine long term dent in Intel.

I'd love to see us at a 50/50 situation here, heck I'd be happy with a 25/75 situation. As it stands, Intel isn't finished, not even close.

imaheadcase - Monday, August 13, 2018 - link

Are you looking at same benchmarks as everyone else? I mean AMD ass was handed to it in Encoding tests and even went neck to neck against some 6c intel products. If AMD got one of these out every 6 months with better improvements sure, but they never do.

imaheadcase - Monday, August 13, 2018 - link

Especially when you consider they are using double the core count to get the numbers they do have, it's not a very efficient way to get better performance.

crotach - Tuesday, August 14, 2018 - link

It's happened before. AMD trashes Intel. Intel takes it on the chin. AMD leads for 1-2 years and celebrates. Then Intel releases a new platform and AMD plays catch-up for 10 years and tries hard not to go bankrupt.

I dearly hope they've learned a lesson the last time, but I have my doubts. I will support them and my next machine will be AMD, which makes perfect sense, but I won't be investing heavily in the platform, so no X399 for me.

boozed - Tuesday, August 14, 2018 - link

We're talking about CPUs that cost more than most complete PCs. Willy-waving aside, they are irrelevant to the market.

Ian Cutress - Monday, August 13, 2018 - link

Hey everyone, sorry for leaving a few pages blank right now. Jet lag hit me hard over the weekend from Flash Memory Summit. Will be filling in the blanks and the analysis throughout today.

But here's what there is to look forward to:

- Our new test suite

- Analysis of Overclocking Results at 4G

- Direct Comparison to EPYC

- Me being an idiot and leaving the plastic cover on my cooler, but it completed a set of benchmarks. I pick through the data to see if it was as bad as I expected

The benchmark data should now be in Bench, under the CPU 2019 section, as our new suite will go into next year as well.

Thoughts and commentary welcome!

Tamz_msc - Monday, August 13, 2018 - link

Are the numbers for the LuxMark C++ test correct? Seems they've been swapped (2990WX and 2950X).