The AMD Threadripper 2990WX 32-Core and 2950X 16-Core Review

by Dr. Ian Cutress on August 13, 2018 9:00 AM EST

Power Consumption, TDP, and Prime95 vs POV-Ray

For most of us, processors hover around the 15W range in notebooks, and 65-95W in desktops. High-end desktop processors have always been power hungry, with numbers of 130W and 140W fairly common. When AMD launched a 220W processor on the old Vishera platform, pushing the Bulldozer family cores up to 5.0 GHz, we all thought AMD was completely mental – there were plenty of motherboards that were socket compatible at the time, although to actually harness 220W and up, a range of new high-end motherboards had to be announced. Intel’s highest on-roadmap processor part to date has an official TDP rating of 205W, but now AMD has gone one better, with 250W.

The two new WX processors, the 32-core 2990WX and the 24-core 2970WX, are both rated for 250W. In both cases they have all four silicon dies active and six Infinity Fabric links active (as described on page 4), and they aim to push the boundaries in performance, with AMD showing slides with a 3.6 GHz all-core turbo. The other two processors, replacements for the X-series, are rated at 180W, the same as the first generation Threadripper parts they replace.

However, not all TDPs are equal. The way Intel and AMD measure TDP has changed over the years, and as a result the numbers now have little real-world value. Let me explain.

TDP Is a Joke

The value of TDP, or thermal design power, is not a measure of power consumption. It is technically a measure of cooler performance: a cooler needs to be rated at the TDP level for the processor to operate normally. Actual power consumption should technically be higher, because thermal losses from the processor into the socket, and from the socket into the motherboard, also contribute to cooling but are not included in the TDP number. However, for most use cases, TDP and power consumption are used interchangeably, as their differences are minor.

Given this is a review about AMD processors, we can start there. AMD’s TDP calculation relies on a simple formula:

TDP = (Load Temperature in Celsius - Idle Temperature in Celsius) / Thermal Capacity of Cooler

For example, AMD quotes that for its Ryzen 7 2700X processor, a load temperature of about 62C, an idle temperature of 42C, and a cooler with a thermal capacity of 0.189 C per Watt (the Wraith Max) give a value of around 105W.

AMD’s formula has two problems. First, the load temperature can be adjusted with fans or external airflow. Second, the cooler figure has a big impact on the result. It is quoted in C per Watt (strictly a thermal resistance, so a better cooler has a lower number), and it sits in the denominator: plug in 0.400 C per Watt instead for the Ryzen 7 2700X above, and the rated TDP comes out at 50 W. AMD does use the thermal performance of its bundled cooler, just to keep things consistent, but it does mean that the TDP rating and the power consumption are not equal, and the rating could be skewed in a certain direction if AMD were simply to use a different cooler for its comparisons.
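To see how sensitive the formula is to the cooler figure, we can plug in the Ryzen 7 2700X numbers quoted above. This is a minimal Python sketch that simply evaluates the formula as written in this article; the function name is our own, and it is not an AMD tool.

```python
def amd_tdp_watts(t_load_c: float, t_idle_c: float, cooler_c_per_w: float) -> float:
    """Evaluate TDP = (load temp - idle temp) / cooler rating in C per Watt."""
    if cooler_c_per_w <= 0:
        raise ValueError("cooler rating must be positive")
    return (t_load_c - t_idle_c) / cooler_c_per_w


# Ryzen 7 2700X figures quoted above, with the Wraith Max rated at 0.189 C/W
wraith = amd_tdp_watts(62.0, 42.0, 0.189)
# Same temperatures, but with the alternative 0.400 C/W figure
other = amd_tdp_watts(62.0, 42.0, 0.400)

print(f"{wraith:.1f} W")  # 105.8 W
print(f"{other:.1f} W")   # 50.0 W
```

Because the cooler figure sits in the denominator, the same 20C temperature delta can be reported as anything from 50 W to over 100 W, depending purely on which cooler rating is plugged in.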

Intel’s version of TDP is a little more complex, for one main reason that has not filtered through to the wider ecosystem: Intel only defines its TDP at the base frequency, not at the turbo frequencies. As a result, if Intel releases a processor with a 95W TDP, a base frequency of 3.2 GHz, a single-core turbo of 4.7 GHz, and an all-core turbo of 4.2 GHz, then Intel only guarantees that power consumption will be 95W at the base frequency of 3.2 GHz, not at the all-core turbo. This means that on any motherboard that enables turbo (which is all of them), the processor will draw more than its TDP in any loaded scenario.

This is very annoying. Intel advertises the single-core turbo quite prolifically in its marketing, but has recently decided that its all-core turbo values should not be published. We are told that this is proprietary, which is a vast about-turn from its previous disclosure policy, and odd given that the data is freely available to anyone with the chips in hand. But it does mean that almost every processor will have an all-core turbo frequency above the base frequency, and thus can run above TDP very easily.

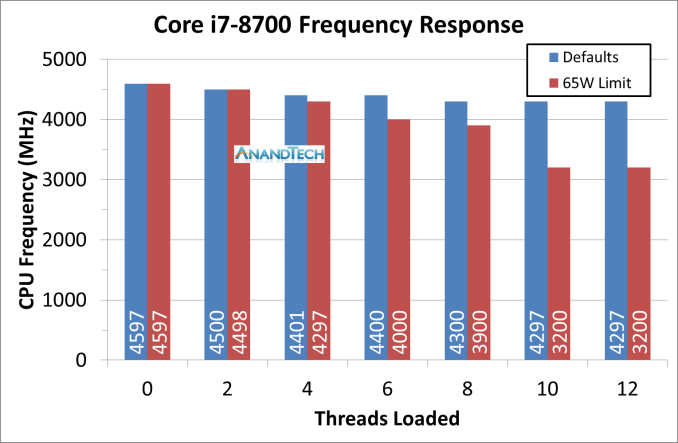

We can see this best with a Core i7-8700 and its 65W TDP. It has a 3.2 GHz base frequency, a single core turbo of 4.6 GHz, and an all-core turbo of 4.3 GHz. If we load up the threads and then limit the power consumption to 65W, we get the following:

So consider this a warning when it comes to TDP values: take them with a grain of salt. Or a spoonful.

Power Consumption

There are several ways to measure the power consumption of a processor. The easy way is to use a wall power meter, which gives the power consumption of the full system, including efficiency losses in the motherboard's power delivery. The complex way is to hook up the required tools to the board to measure the current through the 12V connector, and to measure the CPU voltage using the overclocking measurement points on certain motherboards. A third way is to read the hardware registers with applicable software.

Reading registers is a double-edged sword. First, you are relying on internal measurements, which often have wider error margins. Second, you are relying on the CPU manufacturer to report the correct numbers, which is not always the case (!). However, on the plus side, we can often get more information out of the processor, such as a breakdown of per-core power, DRAM power, IO/Interconnect power, integrated graphics power, and a general look at how the power is distributed. The hardware registers are how the system tells itself how much power it is using, and how it has to adjust the voltage/frequency depending on current draw, power draw, or thermal performance. The other plus is that we can roll it into our testing scripts fairly easily.
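As a rough illustration of the register-reading approach (this is not the script used for this review), here is a minimal Python sketch for Linux, assuming the kernel's powercap interface is available. The zone path `/sys/class/powercap/intel-rapl:0` is an assumption: its naming and availability depend on the kernel version and CPU, and reading `energy_uj` may require root. The helper names are hypothetical. The counter reports cumulative energy in microjoules, so average power is the energy delta divided by the elapsed time, corrected for the counter wrapping around.

```python
from pathlib import Path
import time

# Assumption: Linux powercap sysfs; this zone path is illustrative and
# varies by kernel version, CPU vendor, and socket count.
ZONE = Path("/sys/class/powercap/intel-rapl:0")


def average_power_w(e0_uj: int, e1_uj: int, dt_s: float, max_range_uj: int) -> float:
    """Average power from two cumulative energy readings in microjoules.

    The counter wraps at max_range_uj, so a smaller second reading means
    it rolled over once between samples; add the range back in that case.
    """
    if dt_s <= 0:
        raise ValueError("interval must be positive")
    delta = e1_uj - e0_uj
    if delta < 0:
        delta += max_range_uj
    return delta / 1e6 / dt_s


def sample_package_power(interval_s: float = 1.0) -> float:
    """Read the (assumed) package energy counter twice and return average watts."""
    max_range = int((ZONE / "max_energy_range_uj").read_text())
    e0 = int((ZONE / "energy_uj").read_text())
    t0 = time.monotonic()
    time.sleep(interval_s)
    e1 = int((ZONE / "energy_uj").read_text())
    t1 = time.monotonic()
    return average_power_w(e0, e1, t1 - t0, max_range)
```

Keeping the arithmetic in `average_power_w` separate from the file reads makes it easy to fold into a larger test script that samples power while a workload runs.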

Testing for power consumption is also a topic to consider. Usually a power virus is used, which can prod every area of the processor simultaneously for the peak power draw. A power virus is often used as an overclocking stability test, but these have one drawback in that they typically do not represent real-world power draw. It is a fine line between a real-world test and a synthetic test designed to cram every joule of energy through a chip. Software such as LINPACK is often used as a highly optimized power test, or internal tools from Intel and AMD can stress a chip even harder.

Prime95 is an often-used candidate, because it ends up being hyper-optimized for almost every core and drives the power home. Its workload is semi-synthetic, based on calculating prime numbers, but the stress test forgoes the results and just aims at power. During this review, we started playing a little with POV-Ray as our power tester, as it seemed to cause more power draw than Prime95, while also being a real-world ray-tracing workload. As I write this, I am still deciding which tool is best for measuring power consumption: Prime95 has trouble with larger core counts (sometimes it is hard to get numbers beyond 25 threads), and to get POV-Ray to work we technically adjust the affinity loading, which is more about loading cores than threads, although the data suggests that it is actually the threads that are being loaded. I will point out which software we are using in our data at each stage; however, our POV-Ray test was developed mid-review, so most of the data is with Prime95.

Overall Power Consumption

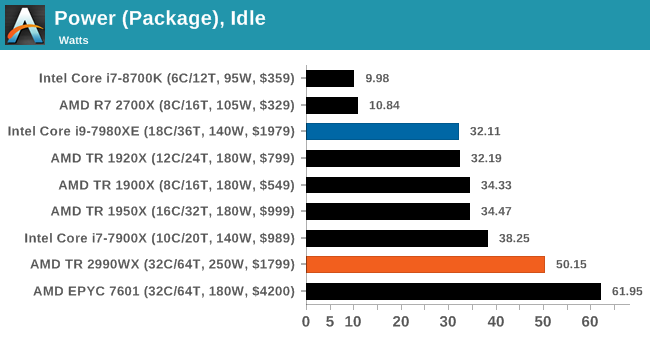

For this first set of data, I want to show the full-chip power consumption measured in different scenarios. First, at idle, which, as we saw on page 4, can be heavily dominated by how the interconnect on the processor powers up and powers down.

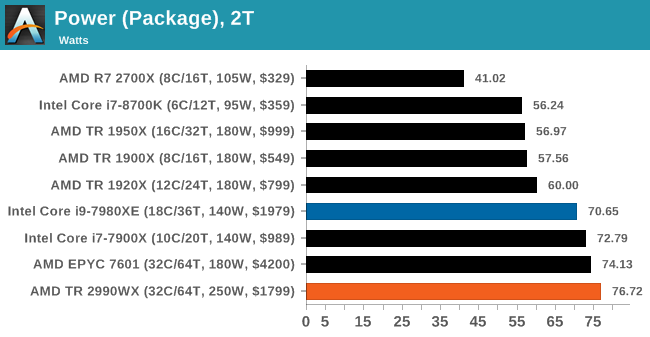

Next, we load a single core with two threads of Prime95. Our testing methodology forces both threads onto the same core for processors that support multiple threads per core. This covers users focusing on a single task, or systems where Windows is tinkering in the background.
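Forcing both threads onto one core, like the affinity adjustment used for POV-Ray, comes down to choosing which logical CPUs a test may run on. A minimal Python sketch, not the script used in this review: it assumes the caller already knows which logical CPUs are SMT siblings of each physical core (the two topologies below are hypothetical examples), and it fills every sibling of a core before moving on to the next one.

```python
def pack_threads(n_threads: int, cores: list[list[int]]) -> list[int]:
    """Pick logical CPUs for n_threads, filling every SMT sibling of a core
    before moving to the next core. `cores` lists each physical core's
    logical CPU IDs, in the order the cores should be loaded."""
    chosen: list[int] = []
    for siblings in cores:
        for cpu in siblings:
            if len(chosen) == n_threads:
                return chosen
            chosen.append(cpu)
    if len(chosen) < n_threads:
        raise ValueError("more threads requested than logical CPUs available")
    return chosen


def affinity_mask(cpus: list[int]) -> int:
    """Bitmask with bit i set for each logical CPU i."""
    mask = 0
    for cpu in cpus:
        mask |= 1 << cpu
    return mask


# Hypothetical 4-core, 8-thread layouts: siblings numbered adjacently
# (core 0 -> CPUs 0 and 1) versus numbered N apart (core 0 -> CPUs 0 and 4).
adjacent = [[0, 1], [2, 3], [4, 5], [6, 7]]
split = [[0, 4], [1, 5], [2, 6], [3, 7]]

print(pack_threads(2, adjacent), hex(affinity_mask(pack_threads(2, adjacent))))  # [0, 1] 0x3
print(pack_threads(2, split), hex(affinity_mask(pack_threads(2, split))))        # [0, 4] 0x11
```

The resulting CPU list could be passed as a set to `os.sched_setaffinity` on Linux, or the mask given in hex to the Windows `start /affinity` command. The explicit topology argument matters because the same "two threads" request maps to different CPU numbers depending on how the OS enumerates siblings.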

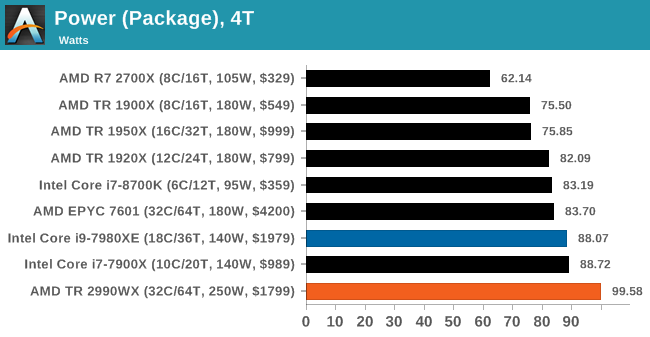

The third test is the system loaded with four threads of Prime95. This will cover the majority of how most people use systems day to day, with multiple browser tabs and windows open, several software packages on the go, or perhaps a game or two.

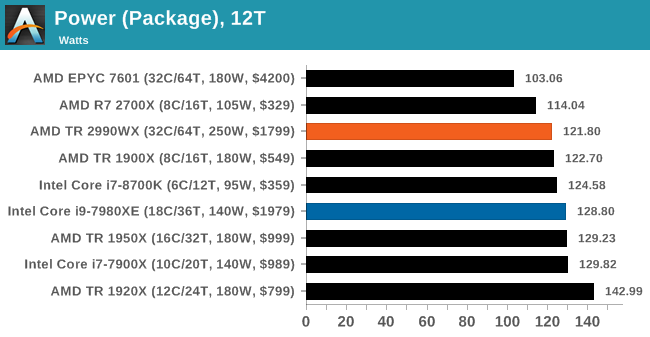

Moving up a gear to twelve threads of Prime95, we are moving more into the multi-taskers that have sizeable workloads. This will include gamers and streamers, or users who are rendering while also performing other tasks.

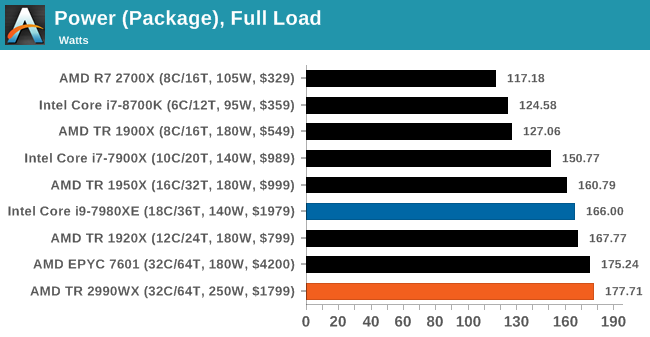

The final graph, for good measure, is the full load power draw. For this test we are running a full set of Prime95 threads, however in the future we will be using POV-Ray for this test as it tends to be more consistent, especially at high thread-count workloads. The only downside here is that an overclocked 2990WX can complete the POV-Ray test in under 20 seconds.

Per-Core Power Consumption

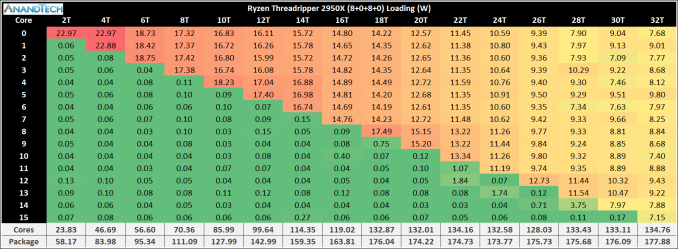

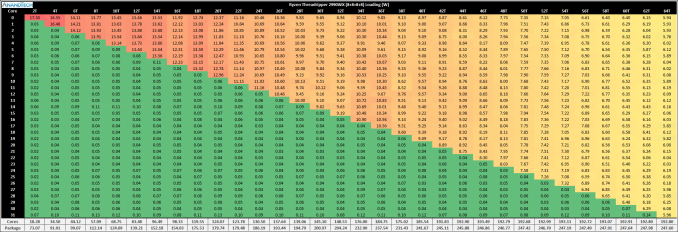

Before we created the POV-Ray power consumption test, I ran both of the new Threadripper processors we have on an all-threads test with Prime95, and extracted the power consumption of each core at each loading.

On the first core loading, we can see that the power consumption is ~23W, which is super high for a single Zeppelin core. This holds with two cores loaded as well, but when we hit three cores we top out at only around 18.8W per core. Given that this chip has four CCXes, we have to wonder if this is because the threads are being loaded onto the same CCX (which, based on the processor enumeration, is what is happening), and we are hitting CCX power limits. At four cores loaded, each core is at around 17.4W.

Moving on to five cores loaded, the fifth core sits at 18.2W while the other four are at 16.8W, indicating that this new core is located on a new CCX. When we move from eight cores to nine cores, we see the same thing, whereby the ninth core has 17.5W of power, whereas the other eight are at 14.3W. The power per core finally drops to 7-9W when all 16 cores are loaded.

At the end, we observe a total package power consumption of ~178W, near the 180W TDP, with ~135W on the cores and the rest on the non-core hardware (Infinity Fabric, IO, IMC).
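A quick consistency check on these figures: splitting the ~178W package into core and non-core power, and averaging the core power over the 2950X's 16 cores. A minimal Python sketch using only the numbers quoted above; the function name is our own.

```python
def package_breakdown(package_w: float, cores_w: float, n_cores: int) -> dict[str, float]:
    """Split package power into non-core power, its share, and average per-core power."""
    if n_cores <= 0 or not 0 <= cores_w <= package_w:
        raise ValueError("need n_cores > 0 and 0 <= cores_w <= package_w")
    non_core_w = package_w - cores_w
    return {
        "non_core_w": non_core_w,
        "non_core_pct": 100.0 * non_core_w / package_w,
        "avg_core_w": cores_w / n_cores,
    }


# 2950X, all 16 cores loaded with Prime95: ~178 W package, ~135 W on the cores
b = package_breakdown(178.0, 135.0, 16)
print(f"non-core {b['non_core_w']:.0f} W ({b['non_core_pct']:.1f}%), "
      f"{b['avg_core_w']:.1f} W per core on average")
# non-core 43 W (24.2%), 8.4 W per core on average
```

The 8.4W average sits inside the 7-9W per-core range seen at full load, so the per-core and package figures are at least self-consistent.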

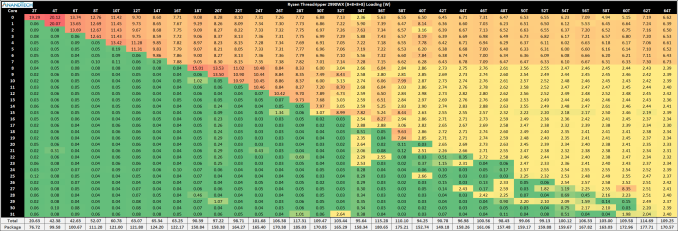

Moving on to the 2990WX, the story looks very odd indeed.

For the most part, the power consumption data up to 15 cores follows the same trend as the 2950X. However, as we push on, it is clear that the first Zeppelin die is favored: as more threads are loaded, power is taken from the second die, all the way down to 2.4W per core. At full load, the first Zeppelin die has around 6.6W per core, but all the other cores on the processor have 2.4W. There might be something in this test that gives the first die preferential power over the others. It is also worth noting that the power consumption for the chip is near 180W, not the 250W listed as the TDP.

It was around this time that the POV-Ray version of the power script was developed, so I ran it on the 2990WX to see the results. This time they were far more in line with expectations:

Surprisingly, the load became very evenly distributed as the number of threads was ramped, and we were able to use the full 250W TDP even at stock settings with a good cooler. In the end we were using 193W for the cores and 55W for everything else, and at no point did we see active cores dangling below 3W; in fact, when all the cores were loaded, each core seemed comfortable at around 6W. We hit the 240W-250W package power at around 40 threads loaded, and beyond that, each added core meant the power had to be redistributed.
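The redistribution can be approximated with a toy budget model built from the POV-Ray figures in this section: a 250W package limit, roughly 55W of non-core power, and about 9.1W per active core at 40 threads (as reported further down). The assumption, which is ours and not AMD's boost algorithm, is that cores share whatever the package limit leaves after the non-core draw, with 9.1W treated as a per-core ceiling. Thread counts assume two threads per core, as in our methodology.

```python
def per_core_allowance(active_cores: int, package_limit_w: float = 250.0,
                       non_core_w: float = 55.0, per_core_cap_w: float = 9.1) -> float:
    """Toy model: active cores split the budget left after the non-core draw,
    but no core takes more than per_core_cap_w.

    Illustrative assumption only; not how AMD's boost algorithm works internally.
    """
    if active_cores <= 0:
        raise ValueError("need at least one active core")
    core_budget = package_limit_w - non_core_w
    return min(per_core_cap_w, core_budget / active_cores)


for cores in (8, 16, 20, 24, 32):
    watts = per_core_allowance(cores)
    print(f"{cores:2d} cores ({2 * cores} threads): {watts:.1f} W/core, "
          f"{cores * watts + 55.0:.0f} W package")
```

Under these assumptions the per-core cap stops binding at about 195 / 9.1, or roughly 21 active cores (around 42 threads), which loosely matches the ~40-thread point where the package limit was reached. At 32 cores, the model gives about 6.1W per core, close to the ~6W observed.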

Two thoughts came to mind. The first was easily verifiable: perhaps the BIOS was stuck at a 180W power limit after I had put the 2950X in? I checked, and before doing the 2990WX power tests I had actually had the 1920X in the system. I did a full BIOS reset and did not see a change, indicating that this wasn’t a BIOS power limitation.

The second was to look at frequencies. Taking a single data point at 40 threads loaded, the frequencies differed only slightly; the real differential was in power.

During the Prime95 test, the first silicon die was running at around 7W per core, and had a frequency of 3575 MHz. The second silicon die was shown at 3W per core, but at 3525 MHz. The other idle cores were at 1775 MHz or 2000 MHz, consuming milliwatts.

During the POV-Ray test, every active core was at around 9.1W and had a frequency of 3575 MHz. The idle cores were at 2000 MHz (apart from three at 1775 MHz), again consuming milliwatts per core.

Aside from the reported per-core power numbers, the frequencies were broadly similar in both tests, with POV-Ray a little higher, which comes through as higher total power consumption under POV-Ray.

Ultimately it comes down to this – power reporting with Prime95 beyond 20 cores or so, or on multi-die chips, doesn’t seem to work that well. Going forward we will be using our POV-Ray test, which also seems to draw more power.

Core vs Non-Core Power

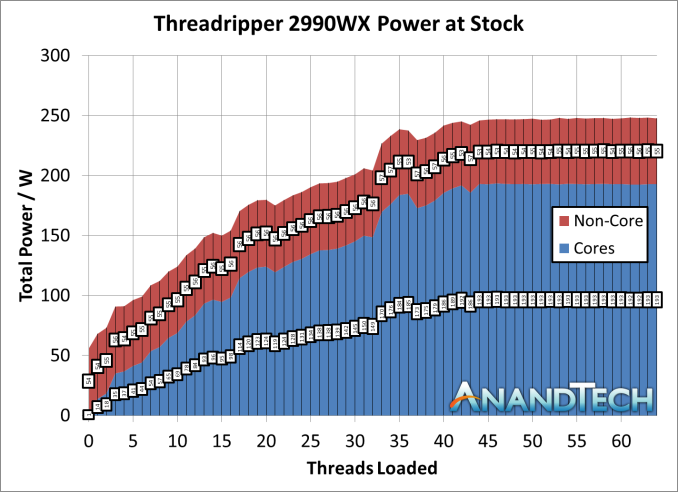

Going back to the page where we spoke about Infinity Fabric frequency, we can do a similar plot under the POV-Ray test for the 2990WX.

There’s still a good amount of variation in overall loading, with some wobbles, but the data (aside from peak consumption) is broadly in line with our Prime95 test, with non-core power such as the Infinity Fabric consuming 55-60W of the total. As a result, the non-core power as a percentage of the total goes from 75% with two threads loaded down to 22% by the time 40 threads are loaded.
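Why the non-core share falls so steeply: if the non-core draw is roughly fixed, its percentage can only shrink as the cores take more of the package. A minimal Python sketch, assuming the non-core draw sits near 55W at both ends of the sweep (the text gives 55-60W); the package totals it prints are implied by that assumption, not separately measured.

```python
def implied_package_w(non_core_w: float, non_core_share: float) -> float:
    """Package power implied by a non-core draw and its fraction of the total."""
    if not 0 < non_core_share <= 1:
        raise ValueError("share must be in (0, 1]")
    return non_core_w / non_core_share


# Assumption: non-core draw stays near 55 W at both thread counts.
for threads, share in ((2, 0.75), (40, 0.22)):
    print(f"{threads:2d} threads: ~{implied_package_w(55.0, share):.0f} W package")
```

The 40-thread case lands at about 250W, matching the package power reported earlier for that loading, while the two-thread case implies a package of only around 73W, most of it spent keeping the interconnect and IO awake.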

171 Comments


plonk420 - Tuesday, August 14, 2018

worse for efficiency? https://techreport.com/r.x/2018_08_13_AMD_s_Ryzen_...

Railgun - Monday, August 13, 2018

How can you tell? The article isn’t even finished.

mapesdhs - Monday, August 13, 2018

People will argue a lot here about performance per watt and suchlike, but in the real world the cost of the software and the annual license renewal is often far more than the base hw cost, resulting in a long term TCO that dwarfs any differences in some CPU cost. I'm referring here to the kind of user that would find the 32c option relevant.

Also missing from the article is the notion of being able to run multiple medium scale tasks on the same system, eg. 3 or 4 tasks each of which is using 8 to 10 cores. This is quite common practice. An article can only test so much though, at this level of hw the number of different parameters to consider can be very large.

Most people on tech forums of this kind will default to tasks like 3D rendering and video conversion when thinking about compute loads that can use a lot of cores, but those are very different to QCD, FEA and dozens of other tasks in research and data crunching. Some will match the arch AMD is using, others won't; some could be tweaked to run better, others will be fine with 6 to 10 cores and just run 4 instances testing different things. It varies.

Talking to an admin at COSMOS years ago, I was told that even coders with seemingly unlimited cores to play with found it quite hard to scale relevant code beyond about 512 cores, so instead for the sort of work they were doing, the centre would run multiple simulations at the same time, which on the hw platform in question worked very nicely indeed (1856 cores of the SandyBridge-EP era, 14.5TB of globally shared memory, used primarily for research in cosmology, astrophysics and particle physics; squish it all into a laptop and I'm sure Sheldon would be happy. :D) That was back in 2012, but the same concepts apply today.

For TR2, the tricky part is getting the OS to play nice, along with the BIOS, and optimised sw. It'll be interesting to see how 2990WX performance evolves over time as BIOS updates come out and AMD gets feedback on how best to exploit the design, new optimisations from sw vendors (activate TR2 mode!) and so on.

SGI dealt with a lot of these same issues when evolving its Origin design 20 years ago. For some tasks it absolutely obliterated the competition (eg. weather modelling and QCD), while for others in an unoptimised state it was terrible (animation rendering, not something that needs shared memory, but ILM wrote custom sw to reuse bits of a frame already calculated for future frame, the data able to fly between CPUs very fast, increasing throughput by 80% and making the 32-CPU systems very competitive, but in the long run it was easier to brute force on x86 and save the coder salary costs).

There are so many different tasks in the professional space, the variety is vast. It's too easy to think cores are all that matter, but sometimes having oodles of RAM is more important, or massive I/O (defense imaging, medical and GIS are good examples).

I'm just delighted to see this kind of tech finally filter down to the prosumer/consumer, but alas much of the nuance will be lost, and sadly some will undoubtedly buy based on the marketing, as opposed to the golden rule of any tech at this level: ignore the published benchmarks, the only test that actually matters is your specific intended task and data, so try and test it with that before making a purchasing decision.

Ian.

AbRASiON - Monday, August 13, 2018

Really? I can't tell if posts like these are facetious or kidding or what?

I want AMD to compete so badly long term for all of us, but Intel have such immense resources, such huge infrastructure, they have ties to so many big business for high end server solutions. They have the bottom end of the low power market sealed up.

Even if their 10nm is delayed another 3 years, AMD will only just begin to start to really make a genuine long term dent in Intel.

I'd love to see us at a 50/50 situation here, heck I'd be happy with a 25/75 situation. As it stands, Intel isn't finished, not even close.

imaheadcase - Monday, August 13, 2018

Are you looking at same benchmarks as everyone else? I mean AMD ass was handed to it in Encoding tests and even went neck to neck against some 6c intel products. If AMD got one of these out every 6 months with better improvements sure, but they never do.

imaheadcase - Monday, August 13, 2018

Especially when you consider they are using double the core count to get the numbers they do have, it's not a very efficient way to get better performance.

crotach - Tuesday, August 14, 2018

It's happened before. AMD trashes Intel. Intel takes it on the chin. AMD leads for 1-2 years and celebrates. Then Intel releases a new platform and AMD plays catch-up for 10 years and tries hard not to go bankrupt.

I dearly hope they've learned a lesson the last time, but I have my doubts. I will support them and my next machine will be AMD, which makes perfect sense, but I won't be investing heavily in the platform, so no X399 for me.

boozed - Tuesday, August 14, 2018

We're talking about CPUs that cost more than most complete PCs. Willy-waving aside, they are irrelevant to the market.

Ian Cutress - Monday, August 13, 2018

Hey everyone, sorry for leaving a few pages blank right now. Jet lag hit me hard over the weekend from Flash Memory Summit. Will be filling in the blanks and the analysis throughout today.

But here's what there is to look forward to:

- Our new test suite

- Analysis of Overclocking Results at 4G

- Direct Comparison to EPYC

- Me being an idiot and leaving the plastic cover on my cooler, but it completed a set of benchmarks. I pick through the data to see if it was as bad as I expected

The benchmark data should now be in Bench, under the CPU 2019 section, as our new suite will go into next year as well.

Thoughts and commentary welcome!

Tamz_msc - Monday, August 13, 2018

Are the numbers for the LuxMark C++ test correct? Seems they've been swapped (2990WX and 2950X).