The AMD Threadripper 2990WX 32-Core and 2950X 16-Core Review

by Dr. Ian Cutress on August 13, 2018 9:00 AM EST

HEDT Benchmarks: Rendering Tests

Rendering is often a key target for processor workloads, and it lends itself to professional environments. It comes in different forms, from 3D rendering through rasterization, as in games, to ray tracing, and it tests the ability of the software to manage meshes, textures, collisions, aliasing, and physics (in animations), and to discard unnecessary work. Most renderers offer CPU code paths, while a few use GPUs, and select environments use FPGAs or dedicated ASICs. For big studios, however, CPUs are still the hardware of choice.

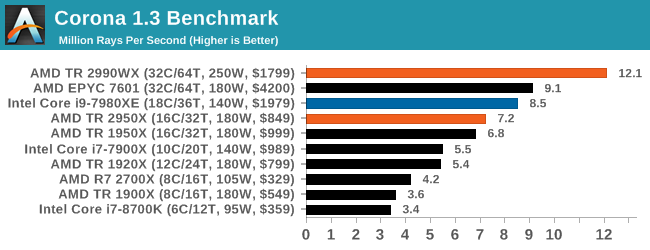

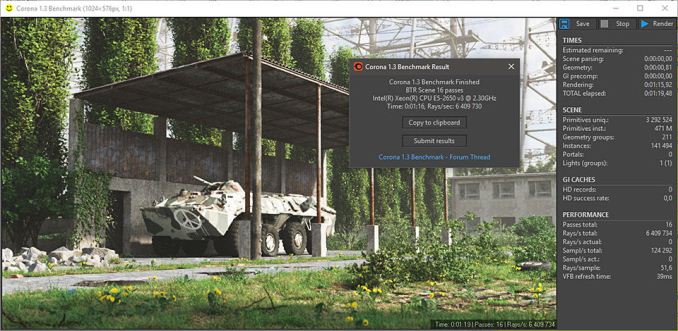

Corona 1.3: Performance Render

Corona is an advanced performance-based renderer for software such as 3ds Max and Cinema 4D, and its benchmark renders a standard generated scene under version 1.3 of the software. Normally the GUI implementation of the benchmark shows the scene being built, and allows the user to upload the result as a ‘time to complete’.

We got in contact with the developer, who gave us a command line version of the benchmark that outputs its results directly. Rather than reporting time, we report the average number of rays per second across six runs, as a result expressed per unit time scales in a way that is typically easier to read on a graph.
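As a rough illustration of that reporting choice, the sketch below averages a rate across several runs. It assumes each run yields a total ray count and an elapsed time; the actual output format of the command line benchmark is not described here, so the function name and input layout are hypothetical.

```python
from statistics import mean


def average_rays_per_second(runs):
    """Return the mean rays/s across runs.

    `runs` is a list of (rays_cast, seconds) pairs. This layout is an
    assumption for illustration, not the benchmark's real output format.
    """
    rates = [rays / seconds for rays, seconds in runs]
    return mean(rates)
```

Averaging the per-run rates, rather than dividing total rays by total time, weights every run equally regardless of how long it took.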

The Corona benchmark website can be found at https://corona-renderer.com/benchmark

So this is where AMD broke our graphing engine. Because we report Corona in rays per second, having 12 million of them puts eight digits into our engine, which it then tries to interpret in scientific notation (1.2 x 10^7) and can’t process in a graph. We had to convert this graph into millions of rays per second to get it to work.
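The same effect is easy to reproduce with a general-purpose number formatter. The sketch below is not the graphing engine itself; it only shows, using Python's own formatting and a hypothetical helper name, how an eight-digit value can flip to scientific notation and how rescaling to millions avoids it.

```python
def millions_label(rays_per_second, decimals=2):
    """Format a rays/s value in millions so it stays in plain decimal form."""
    return f"{rays_per_second / 1_000_000:.{decimals}f} Mrays/s"


# Python's general ("g") format switches to scientific notation at this size.
raw = f"{12_000_000:g}"
scaled = millions_label(12_000_000)
```

Here `raw` is `1.2e+07`, while `scaled` is `12.00 Mrays/s`.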

The 2990WX hits out in front with 32 cores, with its higher frequency being the main reason it is so far ahead of the EPYC processor. The EPYC and Core i9 are close together; however, the 2950X at half the cost comes reasonably close.

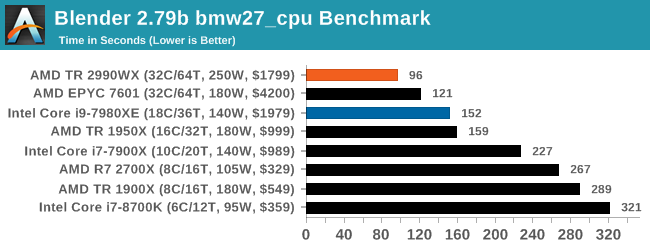

Blender 2.79b: 3D Creation Suite

A high-profile rendering tool, Blender is open source, allowing for massive amounts of configurability, and is used by a number of high-profile animation studios worldwide. The organization recently released a Blender benchmark package, a couple of weeks after we had narrowed down our Blender test for our new suite; however, their test can take over an hour. For our results, we run one of the sub-tests in that suite through the command line - a standard ‘bmw27’ scene in CPU-only mode - and measure the time to complete the render.
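A minimal sketch of timing a command line render is shown below; it is not the script used for these results. It relies on Blender's `-b` (background) and `-f` (render frame) options, and it assumes the scene file is already configured for CPU rendering. The helper names and the default `blender` executable name are hypothetical.

```python
import subprocess
import time


def build_blender_command(blend_file, frame=1, blender="blender"):
    """Build a headless render command: -b disables the GUI, -f picks a frame."""
    return [blender, "-b", blend_file, "-f", str(frame)]


def time_command(cmd):
    """Run `cmd` to completion and return the elapsed wall-clock seconds."""
    start = time.perf_counter()
    subprocess.run(
        cmd,
        check=True,  # raise if the process exits with an error
        stdout=subprocess.DEVNULL,
        stderr=subprocess.DEVNULL,
    )
    return time.perf_counter() - start
```

With Blender installed and on the path, `time_command(build_blender_command("bmw27.blend"))` would return the render time in seconds. The file name is an assumption based on the scene name.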

Blender can be downloaded at https://www.blender.org/download/

The additional cores on the 2990WX put it out ahead of the EPYC and Core i9, with the 2990WX having an extra 58% throughput over the Core i9. That is very substantial indeed.
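Because this test records time to complete rather than a rate, a throughput figure has to be derived from the render times. As a sketch, assuming throughput is taken as the reciprocal of render time (the symbols below are labels introduced here, not values read from the graph):

$$
\text{throughput gain} = \frac{1/t_{\text{2990WX}}}{1/t_{\text{i9}}} - 1 = \frac{t_{\text{i9}}}{t_{\text{2990WX}}} - 1
$$

Under that assumption, a 58% gain corresponds to $t_{\text{2990WX}} = t_{\text{i9}} / 1.58 \approx 0.63\, t_{\text{i9}}$, or a render time roughly 37% shorter.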

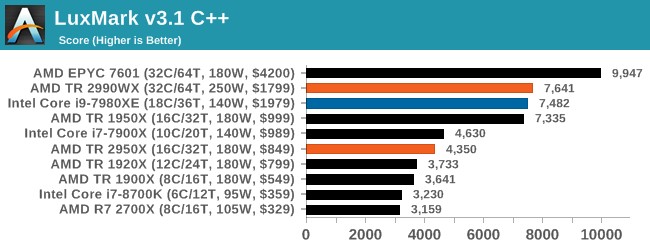

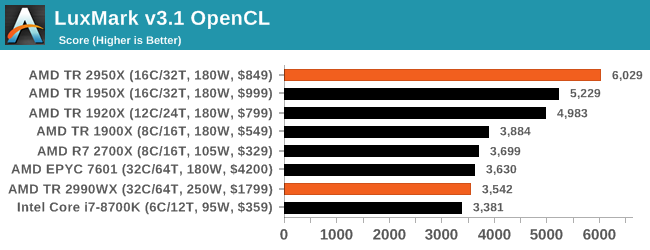

LuxMark v3.1: LuxRender via Different Code Paths

As stated at the top, there are many different ways to process rendering data: CPU, GPU, Accelerator, and others. On top of that, there are many frameworks and APIs in which to program, depending on how the software will be used. LuxMark, a benchmark developed using the LuxRender engine, offers several different scenes and APIs.

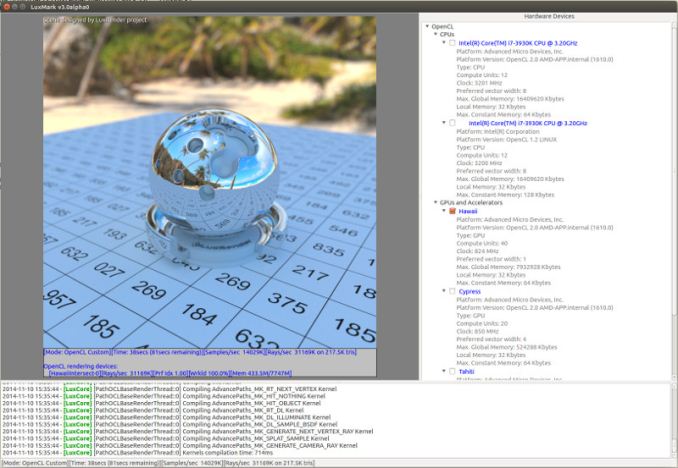

Taken from the Linux Version of LuxMark

In our test, we run the simple ‘Ball’ scene on both the C++ and OpenCL code paths, but in CPU mode. This scene starts with a rough render and slowly improves the quality over two minutes, giving a final result in what is essentially an average ‘kilorays per second’.

Intel’s Skylake-X processors seem to fail our OpenCL test for some reason, but in the C++ test the extra memory controllers on EPYC set it ahead of both TR2 and Core i9. The 2990WX and Core i9 are almost equal here.

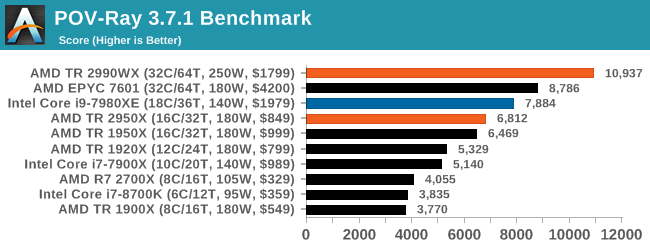

POV-Ray 3.7.1: Ray Tracing

The Persistence of Vision ray tracing engine is another well-known benchmarking tool, which was in a state of relative hibernation until AMD released its Zen processors, at which point both Intel and AMD suddenly began submitting code to the main branch of the open source project. For our test, we use the built-in benchmark for all cores, called from the command line.

POV-Ray can be downloaded from http://www.povray.org/

This test is another that loves the cores and frequency of the 2990WX, finishing the benchmark in almost 20 seconds. It might be time for a bigger built-in benchmark.

171 Comments


Eastman - Tuesday, August 14, 2018 - link

Just a comment regarding studios and game developers. I work in the industry and 90% of these facilities do run with Xeon workstations and ECC memory. Either custom built or purchased from the likes of Dell or HP. So yes, there is a market place for workstations. No serious pro would do work on a mobile tablet or phone where there is a huge market growth. There is definitely a place for a single 32 core CPUs. But among say 100 workstations there might be a place for only 4-5 of the 2990WX. Those would serve particles/fluids dynamics simulation. Most of the workload would be sent to render farms sometimes offsite. Those render farms could use Epyc/Xeon chips. If I was a head of technology, I would seriously consider these CPUs for my artists workflow.

ATC9001 - Wednesday, August 15, 2018 - link

Another big thing which people don't consider is...the true "price" of these systems is nearly neck and neck. Sure you can save a couple hundred with AMD CPU, but by the time you add in RAM, mobo, PSU, storage etc....you're talking a 5k+...Intel doesn't want AMD to go away (think anti-trust) but they are definitely stepping up efforts which is great for consumers!

LsRamAir - Thursday, August 16, 2018 - link

We've been patient! Looked at all the ads multiple times for support to. Please drop the rest of the knowledge, Sir! "Still writing" on the overclocking page is nibblin' at my patience and intrigue hemisphere.

Relic74 - Wednesday, August 29, 2018 - link

Yes of course there is, I have one of the new 32 core systems and I use it with SmartOS. A VM management OS that could allow up to 8 game developers to use a single 32 Core workstation without a single bit of performance lost. That is as long as each VM has control over their own GPU. 4 Cores (most games don't need more than that, in fact, no game needs more than that), 32GB to 64GB of RAM (depending on server config) and an Nvidia 1080ti or higher, per VM. That is more than enough and would save the company thousands, in fact, that is exactly what most game developers use. Servers with 8 to 12 GPU's, dual CPUs, 32 to 64 cores, 512GB of RAM, standard config. You should watch Linus Tech Tips 12 node gaming system off of a single computer, it's the future and is amazing.

eek2121 - Saturday, August 18, 2018 - link

You are downplaying the gaming market. It's a multi-billion dollar industry. Nothing niche about it.

HStewart - Monday, August 13, 2018 - link

I agree with you - so this mainly concerning "It's over, Intel is finished"

Normally I don't care much to discuss AMD related threads - but when people already bad mouth Intel, it all fair game in my opinion.

But what is important and why I agree is that it not even close. Because the like it or not, PC Game industry which primary reason for desktop now is a minimal part of industry now - computers are mostly going to mobile - and just go into local BestBuy and you see why it not even close.

Plus as in a famous WW II saying, "The Sleeper has been Awaken". One has got to be blind if you think "Intel is finished". I think the real reason that 10nm is not coming out, is that Intel wants to shut down AMD for once and for always. I see this coming in two areas - in the CPU area and also with GPU - I believe the i870xG is precursor to it - with AMD GPU being replaced with Arctic Sound.

But AMD does have a good side to this. That it keep Intel's prices down and Intel improving products.

ishould - Monday, August 13, 2018 - link

"I think the real reason that 10nm is not coming out, is that Intel wants to shut down AMD for once and for always." This is actually not true, Intel is having *major* yield issues with 10nm, hence 14nm being a 4-year-node (possibly 5 years if it slips from the expected Holiday 2019), and is a contributing factor for the decline of Intel/rise of AMD.

HStewart - Monday, August 13, 2018 - link

I'm not stating that Intel didn't have yield issues - but there are 2 things that should be taken into account - and of course only Intel really knows

1. (Intel has stated this) That all 10nm are not equal - and that Intel's 10nm is closer to competition's 7nm - and this is likely the reason why it is taking long.

2. Intel realizes the process issues - and if you think they are not aware of competition in market - not just AMD but also ARM then one is a fool

ishould - Monday, August 13, 2018 - link

I agree they were probably being too ambitious with their scaling (2.4x) for 10nm. Rumor is that they've had to sacrifice some scaling to get better yields. EUV cannot come soon enough!

MonkeyPaw - Monday, August 13, 2018 - link

I highly highly doubt that Intel would postpone 10nm just to “shut down AMD.” Intel has shareholders to look out for, and Intel needs 10nm out the door yesterday. Their 10nm struggles are real, and it is costing them investor confidence. No way would they wait around to win a pissing match with AMD while their stock value goes down.