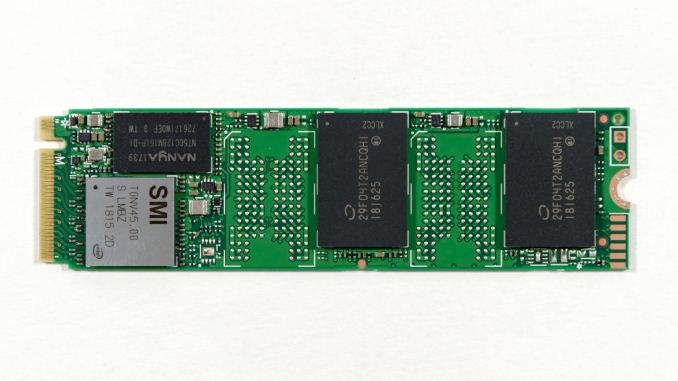

The Intel SSD 660p SSD Review: QLC NAND Arrives For Consumer SSDs

by Billy Tallis on August 7, 2018 11:00 AM EST

Conclusion

As the first SSD with QLC NAND to hit our testbed, the Intel SSD 660p provides much-awaited hard facts to settle the rumors and worries surrounding QLC NAND. With only a short time to review the drive, we haven't been able to do much to measure write endurance. However, our 1TB sample has been subjected to 8TB of writes and counting (out of a rated 200TB endurance) without reporting any errors, and the SMART status indicates about 1% of the endurance has been used, so things are looking fine thus far.
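As a rough sanity check on the endurance figures above, the share of the rated endurance consumed can be computed directly from the host writes. This is only a sketch: the 8TB and 200TB figures come from the paragraph above, while the function names and the 50GB/day workload are hypothetical and chosen purely for illustration. The drive's SMART indicator is the drive's own estimate and need not match this simple ratio.

```python
def rated_endurance_used(written_tb: float, rated_tb: float) -> float:
    """Return the share of rated write endurance consumed, as a percentage."""
    if rated_tb <= 0:
        raise ValueError("rated_tb must be positive")
    return 100.0 * written_tb / rated_tb


def days_until_rating(rated_tb: float, written_tb: float, daily_gb: float) -> float:
    """Days until host writes reach the rating at a constant daily volume.

    Uses decimal units (1 TB = 1000 GB). daily_gb is an assumed workload.
    """
    if daily_gb <= 0:
        raise ValueError("daily_gb must be positive")
    remaining_gb = (rated_tb - written_tb) * 1000.0
    return remaining_gb / daily_gb


# Figures from the review: 8 TB written so far, 200 TB rated endurance.
print(f"{rated_endurance_used(8, 200):.1f}% of rated endurance written")

# Hypothetical consumer workload of 50 GB of writes per day.
print(f"{days_until_rating(200, 8, 50) / 365:.1f} years to reach the rating at 50 GB/day")
```

Changing the assumed daily write volume shows how strongly the outcome depends on the workload rather than on the rating alone.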

On the performance side of things, we have confirmed that QLC NAND is slower than TLC, but the difference is not as drastic as many early predictions about QLC NAND suggested. If we didn't already know what NAND the 660p uses under the hood, Intel could pass it off as being an unusually slow TLC SSD. Even the worst-case performance isn't any worse than what we've seen with some older, smaller TLC SSDs with NAND that is much slower than the current 64-layer stuff.

The performance of the SLC cache on the Intel SSD 660p is excellent, rivaling the high-end 8-channel controllers from Silicon Motion. When the 660p isn't very full and the SLC cache is still quite large, it provides significant boosts to write performance. Read performance is usually very competitive with other low-end NVMe SSDs and well out of reach of SATA SSDs. The only exception seems to be that the 660p is not very good at suspending write operations in favor of completing a quicker read operation, so during mixed workloads, or when the drive is still working in the background to flush the SLC cache, read latency can be significantly elevated.

Even though our synthetic tests are designed to give drives a reasonable amount of idle time to flush their SLC write caches, the 660p keeps most of the data as SLC until the capacity of QLC becomes necessary. This means that when the SLC cache does eventually fill up, there's a large backlog of work to be done migrating data into QLC blocks. We haven't yet quantified how quickly the 660p can fold the data from the SLC cache into QLC during idle times, but it clearly isn't fast enough to keep pace with our current test configurations. It also appears that most or all of the tests that were run after filling the drive up to 100% did not give the 660p enough idle time after the fill operation to complete its background cleanup work, so even some of the read performance measurements for the full-drive test runs suffer the consequences of filling up the SLC write cache.

In the real world, it is very rare for a consumer drive to need to accept tens or hundreds of GB of writes without interruption. Even the installation of a very large video game can mostly fit within the SLC cache of the 1TB 660p when the drive is not too full, and the steady-state write performance is pretty close to the highest rate data can be streamed into a computer over gigabit Ethernet. When copying huge amounts of data from another SSD or sufficiently fast hard drive(s), it is possible to approach the worst-case performance our benchmarks have revealed, but those kinds of jobs already last long enough that the user will take a coffee break while waiting.
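For a sense of scale, the ceiling of a gigabit Ethernet link works out as follows. This is a minimal sketch that ignores protocol overhead, so real transfers will be somewhat slower; the function names are hypothetical and the 100GB payload is an assumed stand-in for a very large game download, not a figure from our testing.

```python
def link_rate_mb_per_s(link_gbit_per_s: float) -> float:
    """Convert a link speed in Gbit/s to MB/s (decimal units, no protocol overhead)."""
    return link_gbit_per_s * 1000.0 / 8.0


def transfer_minutes(size_gb: float, rate_mb_per_s: float) -> float:
    """Minutes needed to move size_gb at a constant rate in MB/s."""
    if rate_mb_per_s <= 0:
        raise ValueError("rate_mb_per_s must be positive")
    return size_gb * 1000.0 / rate_mb_per_s / 60.0


gigabit = link_rate_mb_per_s(1.0)
print(f"Gigabit Ethernet ceiling: {gigabit:.0f} MB/s")

# Assumed 100 GB download, limited only by the network link.
print(f"100 GB at that rate: {transfer_minutes(100, gigabit):.1f} minutes")
```

Any drive that can sustain writes near that ceiling will not be the bottleneck for a download over such a link.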

Given the above caveats and the rarity with which they matter, the 660p's performance seems great for the majority of consumers who have light storage workloads. The 660p usually offers substantially better performance than SATA drives for very little extra cost and with only a small sacrifice in power efficiency. The 660p proves that QLC NAND is a viable option for general-purpose storage, and most users don't need to know or care that the drive is using QLC NAND instead of TLC NAND. The 660p still carries a bit of a price premium over what we would expect a SATA QLC SSD to cost, so it isn't the cheapest consumer SSD on the market, but it has effectively closed the price gap between mainstream SATA and entry-level NVMe drives.

Power users may not be satisfied with the limitations of the Intel SSD 660p, but for more typical users it offers a nice step up from the performance of SATA SSDs with a minimal price premium, making it an easy recommendation.

86 Comments

limitedaccess - Tuesday, August 7, 2018 - link

SSD reviewers need to look into testing data retention and related performance loss. Write endurance is misleading.

Ryan Smith - Tuesday, August 7, 2018 - link

It's definitely a trust-but-verify situation, and is something we're going to be looking into for the 660p and other early QLC drives.

Besides the fact that we only had limited hands-on time with this drive ahead of the embargo and FMS, it's going to take a long time to test the drive's longevity. Even with 24/7 writing, with a sustained 100MB/sec write rate you're looking at only around 8TB written/day. Which means you're looking at weeks or months to exhaust the smallest drive.
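The arithmetic behind that estimate can be sketched as follows. The 100MB/sec rate is from the comment above and the 200TB rating is the 1TB sample's figure from the review; the function names are hypothetical, and the result is only the time needed to reach the rated endurance at a constant rate, not a prediction of when a drive would actually fail.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def tb_written_per_day(rate_mb_per_s: float) -> float:
    """Data written in one day of continuous writing, in TB (decimal units)."""
    return rate_mb_per_s * SECONDS_PER_DAY / 1_000_000


def days_to_reach_rating(rated_tb: float, rate_mb_per_s: float) -> float:
    """Days of continuous writing needed to reach a rated endurance in TB."""
    if rate_mb_per_s <= 0:
        raise ValueError("rate_mb_per_s must be positive")
    return rated_tb / tb_written_per_day(rate_mb_per_s)


print(f"{tb_written_per_day(100):.2f} TB/day at 100 MB/s")
print(f"{days_to_reach_rating(200, 100):.1f} days to write 200 TB")
```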

eastcoast_pete - Tuesday, August 7, 2018 - link

Hi Ryan and Billy,

I second the questions by limitedaccess and npz, also on data retention in cold storage. Now, about Ryan's answer: I don't expect you guys to be able to torture every drive for months on end until it dies, but is there any way to first test the drive, then run continuous writes/rewrites for seven days non-stop, and then re-do some core tests to see if there are any signs or even hints of deterioration? The issue I have with most tests is that they are all done on virgin drives with zero hours on them, which is a best-case scenario. Any decent drive should be good as new after only 7 days (168 hours) of intensive read/write stress. If it's still as good as when you first tested it, I believe that would bode well for possible longevity. Conversely, if any drive shows even mild deterioration after only a week of intense use, I'd really like to know, so I can stay away.

Any chance for that or something similar?

JoeyJoJo123 - Tuesday, August 7, 2018 - link

>and then re-do some core tests to see if there are any signs or even hints of deterioration?

That's not how solid state devices work. They're either working or they're not. And even if they're dead, that's not to say that it was indeed the NAND flash that deteriorated beyond repair; it could've been the controller or even the port the SSD was connected to that got hosed.

Literally testing a single drive says absolutely nothing at all about the expected lifespan of your single drive. This is why mass aggregate reliability ratings from people like Backblaze are important. They buy enough bulk drives that they can actually average out the failure rates and get reasonable real world reliability numbers for the drives used in hot and vibration-prone server rack environments.

Anandtech could test one drive and say "Well it worked when we first plugged it in, and when we rebooted, the review sample we got no longer worked. I guess it was a bad sample" or "Well, we stress tested it for 4 weeks under a constant mixed read/write load, and the SMART readings show that everything is absolutely perfect, we can extrapolate that no drive of this particular series will _ever_ fail for any reason whatsoever until the heat death of the universe". Either way, both are completely anecdotal evidence, neither can yield any real conclusive evidence due to the sample size of ONE drive, and it does nothing but possibly kill the storage drive off prematurely for the sake of idiots salivating over elusive real world endurance rating numbers when in reality IT REALLY DOESN'T MATTER TO YOU.

Are you a standard home consumer? Yes.

And you're considering purchasing this drive that's designed and marketed towards home consumers (ie: this is not a data center priced or marketed product)? Yes.

Are you using it under normal home consumer workloads (ie: you're not reading/writing hundreds of MB/s 24/7 for years on end)? Yes.

Then you have nothing to worry about. If the drive dies, then you call up/email the manufacturer and get a warranty replacement for your drive. And chances are, your drives are more likely to become useless because of ever faster and more spacious storage options in the future than they are to fail. I've got a basically worthless 80GB SATA 2 (near first gen) SSD that's neither fast enough to really use as a boot drive nor spacious enough to be used anywhere else. If anything, the NAND on that early model should be dead, but it's not, and chances are the endurance ratings are highly pessimistic about when drives actually die, as seen in the Ars Technica report where Lee Hutchinson stressed SSDs 24/7 for ~18 months before they died.

eastcoast_pete - Tuesday, August 7, 2018 - link

Firstly, thanks for calling me one of the "idiots salivating over elusive real world endurance rating numbers". I guess it takes one to know one, or think you found one. Second, I am quite aware of the need to have a sufficient sample size to make any inference to the real world. And third, I asked the question because this is new NAND tech (QLC), and I believe it doesn't hurt to put the test sample that the manufacturer sends through its paces for a while, because if that shows any sign of performance deterioration after a week or so of intense use, it doesn't bode well for the maturity of the tech and/or the in-house QC.

And, your last comment about your 80 GB near first gen drive shows your own ignorance. Most/maybe all of those early SSDs were SLC NAND, and came with large overprovisioning, and yes, they are very hard to kill. This new QLC technology is, well, new, so yes I would like to see some stress testing done, just to see if the assumption that it's all just fine holds, at least for the drive the manufacturer provided.

Oxford Guy - Tuesday, August 7, 2018 - link

If a product ships with a defect that is shared by all of its kind then only one unit is needed to expose it.

mapesdhs - Wednesday, August 8, 2018 - link

Proof by negation, good point. :)

Spunjji - Wednesday, August 8, 2018 - link

That's a big if, though. If say 80% of them do and Anandtech gets the one that doesn't, then...

2nd gen OCZ Sandforce drives were well reviewed when they first came out.

Oxford Guy - Friday, August 10, 2018 - link

"2nd gen OCZ Sandforce drives were well reviewed when they first came out."

That's because OCZ pulled a bait and switch, switching from 32-bit NAND, which the controller was designed for, to 64-bit NAND. The 240 GB model with 64-bit NAND, in particular, had terrible bricking problems.

Beyond that, there should have been pressure on Sandforce's decision to brick SSDs "to protect their firmware IP" rather than putting users' data first. Even prior to the severe reliability problems being exposed, that should have been looked at. But, there is generally so much passivity and deference in the tech press.

Oxford Guy - Friday, August 10, 2018 - link

This example shows why it's important for the tech press to not merely evaluate the stuff they're given but go out and get products later, after the initial review cycle. It's very interesting to see the stealth downgrades that happen.

The Lenovo S-10 netbook was praised by reviewers for having a matte screen. The matte screen, though, was replaced by a cheaper-to-make glossy later. Did Lenovo call the machine with a glossy screen the S-11? Nope!

Sapphire, I just discovered, got lots of reviewer hype for its vapor chamber Vega cooler, only to replace those models with ones that lack it. The difference? The ones with the vapor chamber are, so conveniently, "limited edition". Yet, people have found that the messaging about the difference has been far from clear, not just on Sapphire's website but also on some review sites. It's very convenient to pull this kind of bait and switch. Send reviewers a better product, then sell customers something that seems exactly the same but which is clearly inferior.