The Asus ROG Swift PG27UQ G-SYNC HDR Monitor Review: Gaming With All The Bells and Whistles

by Nate Oh on October 2, 2018 10:00 AM EST. Posted in:

- Monitors

- Displays

- Asus

- NVIDIA

- G-Sync

- PG27UQ

- ROG Swift PG27UQ

- G-Sync HDR

From G-Sync Variable Refresh To G-Sync HDR Gaming Experience

The original FreeSync and G-Sync were solutions to a specific and longstanding problem: fluctuating framerates would cause either screen tearing or, with V-Sync enabled, stutter and input lag. The result of variable refresh rate (VRR) technology has been a considerably smoother experience in the 30 to 60 fps range. An equally important benefit was compensating for dips and peaks across the wider ranges introduced by higher refresh rates like 144Hz. So both technologies were very much tied to a single specification that directly described the experience, even if the numbers sometimes didn’t do the experience justice.
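
To make the stutter problem concrete, here is a minimal Python sketch under simplifying assumptions: a game renders at a perfectly steady 45 fps, and a fixed 60Hz display with V-Sync shows each finished frame at the next refresh tick without ever blocking rendering. The function names and this idealized buffering model are illustrative only, not how any particular driver behaves. With a fixed refresh, frames alternate between one and two refresh intervals on screen; with VRR inside its range, every frame is held for the same time.

```python
from fractions import Fraction
import math


def vsync_hold_times_ms(fps: int, refresh_hz: int, n_frames: int) -> list[float]:
    """How long (ms) each frame stays on screen with a fixed refresh and V-Sync.

    Idealized model (an assumption): frame i is ready at i / fps seconds and is
    shown at the first refresh tick at or after that moment; rendering never
    waits on the display.
    """
    # Exact rational math so frames that finish exactly on a tick are not
    # pushed to the next refresh by floating-point rounding.
    shown_tick = [math.ceil(Fraction(i * refresh_hz, fps)) for i in range(n_frames + 1)]
    # Each frame stays up until the next frame replaces it.
    return [round((b - a) * 1000 / refresh_hz, 1) for a, b in zip(shown_tick, shown_tick[1:])]


def vrr_hold_times_ms(fps: int, min_hz: int, max_hz: int, n_frames: int) -> list[float]:
    """Hold times with VRR: the panel refreshes whenever a frame is ready."""
    if not min_hz <= fps <= max_hz:
        raise ValueError("frame rate outside the VRR range")
    return [round(1000 / fps, 1)] * n_frames


print(vsync_hold_times_ms(45, 60, 8))     # [33.3, 16.7, 16.7, 33.3, 16.7, 16.7, 33.3, 16.7]
print(vrr_hold_times_ms(45, 30, 144, 8))  # eight entries of 22.2
```

The uneven 33.3/16.7 ms cadence is the judder that VRR removes. Without V-Sync, the display would instead switch frames partway through a scan, which is where tearing comes from.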

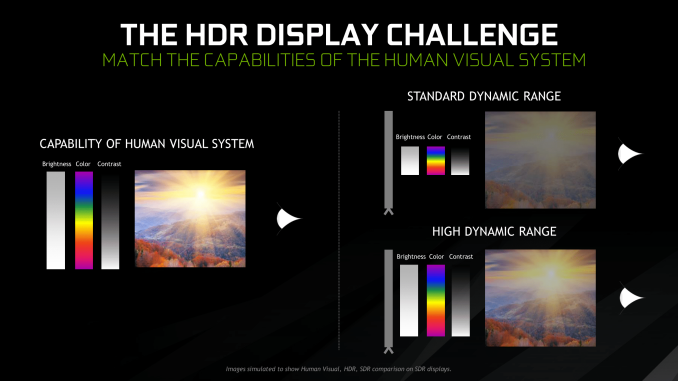

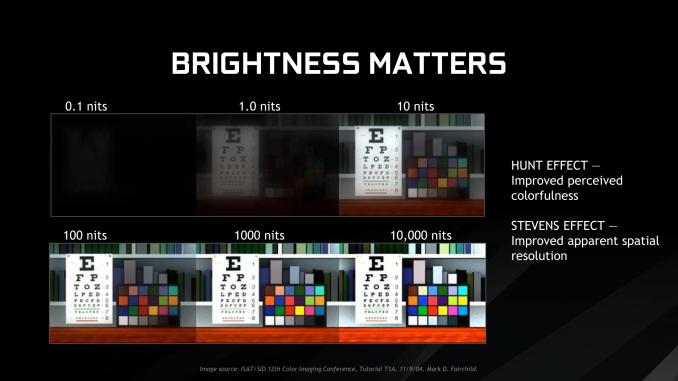

Meanwhile, HDR for gaming is a whole suite of capabilities that essentially allows for greater brightness, deeper blacks, and more and better colors. More importantly, it requires developer support in applications as well as the production of HDR content. The end result is not nearly as fixed as with VRR, as much depends on the game’s implementation, or in NVIDIA’s case, sometimes on Windows 10’s implementation. Done properly, even higher brightness alone can yield perceived enhancements in colorfulness and contrast, known as the Hunt effect and Stevens effect, respectively.

So we can see why both AMD and NVIDIA are pushing the idea of a ‘better gaming experience’, though NVIDIA is explicit about this with G-Sync HDR. The downside is that the required specifications for both FreeSync 2 and G-Sync HDR certification are closed off and only discussed broadly, deferring to VESA’s DisplayHDR standards. Their situations, however, are very different. AMD’s explanations are a little more open, and outside of HDR requirements, FreeSync 2 also has a lot to do with standardizing SDR VRR quality through mandated low framerate compensation (LFC), a wider VRR range, and lower input lag. AMD has also stated that FreeSync 2’s color gamut, max brightness, and contrast ratio requirements are broadly comparable to those in DisplayHDR 600, though the HDR requirements do not overlap completely. And with FreeSync/FreeSync 2 support on Xbox One models and upcoming TVs, FreeSync 2 appears to be the more straightforward specification.
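
Low framerate compensation can be sketched as frame multiplication: when the game’s frame rate drops below the panel’s minimum VRR refresh, the same frame is scanned out several times so the panel stays within its range. The sketch below assumes hypothetical 48-144Hz and 48-75Hz ranges and simply picks the smallest repeat count; the function name is illustrative, and actual driver heuristics are not public and may differ.

```python
def lfc_refresh_hz(fps: float, min_hz: float, max_hz: float) -> tuple[int, float]:
    """Return (times each frame is shown, resulting panel refresh rate in Hz).

    Hypothetical LFC model: below min_hz, repeat each frame k times, using the
    smallest k that brings k * fps back inside [min_hz, max_hz].
    """
    if fps <= 0:
        raise ValueError("fps must be positive")
    if fps > max_hz:
        # Above the range VRR cannot follow; the panel simply runs at max_hz.
        return 1, max_hz
    k = 1
    while fps * k < min_hz:
        k += 1
    if fps * k > max_hz:
        # Range too narrow: k - 1 repeats falls below min_hz, k repeats exceeds max_hz.
        raise ValueError("VRR range too narrow for frame multiplication")
    return k, fps * k


print(lfc_refresh_hz(20, 48, 144))   # (3, 60)
print(lfc_refresh_hz(100, 48, 144))  # (1, 100)
```

With the narrower 48-75Hz range, `lfc_refresh_hz(40, 48, 75)` raises, because 40 fps is below the minimum and doubling it to 80Hz overshoots the maximum. That gap is why a wide VRR range and mandated LFC go hand in hand.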

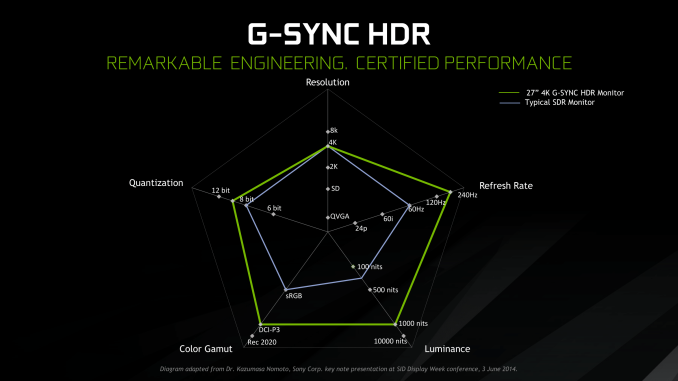

For NVIDIA, the push is much more general and holistic with respect to feature standards, and focused purely on specific products. At the same time, NVIDIA discussed the need for consumer education on the spectrum of HDR performance. While there are specific G-Sync HDR standards as part of the G-Sync certification process, those specifications are known only to NVIDIA and the manufacturers. Nor was much detail provided on minimum requirements beyond HDR10 support, 1000 nits peak brightness, and unspecified DCI-P3 coverage for the 4K G-Sync HDR models; NVIDIA cited its certification process and deferred detailed capabilities to other certifications that G-Sync HDR monitors may hold. For the Asus PG27UQ, those are the UHD Alliance Premium and DisplayHDR 1000 certifications. That is to say, at least for the moment, the only G-Sync HDR displays are those that adhere to some very stringent standards; there aren't any monitors under this moniker that offer limited color gamuts or subpar dynamic contrast ratios.
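
HDR10 signals brightness with the SMPTE ST 2084 perceptual quantizer (PQ) transfer function, which encodes absolute luminance up to 10,000 nits. A minimal Python sketch of the PQ encode/decode pair shows where the 1000-nit peak requirement sits in the signal range. The helper names and the full-range 10-bit quantization are assumptions for illustration; real HDR10 video usually uses limited-range code values.

```python
# SMPTE ST 2084 (PQ) constants, as defined in the standard.
M1 = 2610 / 16384
M2 = 2523 / 4096 * 128
C1 = 3424 / 4096
C2 = 2413 / 4096 * 32
C3 = 2392 / 4096 * 32
PQ_PEAK_NITS = 10000.0


def pq_encode(nits: float) -> float:
    """Absolute luminance in nits (cd/m²) -> PQ signal in [0, 1] (inverse EOTF)."""
    y = min(max(nits, 0.0), PQ_PEAK_NITS) / PQ_PEAK_NITS
    ym = y ** M1
    return ((C1 + C2 * ym) / (1 + C3 * ym)) ** M2


def pq_decode(signal: float) -> float:
    """PQ signal in [0, 1] -> absolute luminance in nits (the PQ EOTF)."""
    ep = min(max(signal, 0.0), 1.0) ** (1 / M2)
    return PQ_PEAK_NITS * (max(ep - C1, 0.0) / (C2 - C3 * ep)) ** (1 / M1)


def nits_to_10bit_full_range(nits: float) -> int:
    """Hypothetical helper: quantize to a full-range 10-bit code value (0-1023)."""
    return round(pq_encode(nits) * 1023)


print(round(pq_encode(1000), 3))  # roughly three quarters of the signal range
print(nits_to_10bit_full_range(1000))
```

Because PQ spends most of its code values on darker tones, a 1000-nit display already covers about three quarters of the HDR10 signal range even though it reaches only a tenth of PQ’s 10,000-nit ceiling.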

At least with UHD Premium, the certification is specific to 4K resolution, so while the announced 65” 4K 120Hz Big Format Gaming Displays will almost surely be certified, the 35” curved 3440 × 1440 200Hz models won’t be. Practically speaking, all the capabilities of these monitors are tied to the AU Optronics (AUO) panels inside them, and we know that NVIDIA worked closely with AUO as well as the monitor manufacturers. As far as we know, those AUO panels are used only in G-Sync HDR displays, and vice versa. NVIDIA disclosed no other standardized specification, referring only to its own certification process and the ‘ultimate gaming display’ ideal.

Although NVIDIA mentioned consumer education on the HDR performance spectrum, the G-Sync HDR branding leaves consumers hardly any better informed about a monitor’s HDR capabilities. Detailed specifications are left to monitor certifications and manufacturers, which is the status quo. There is no dedicated G-Sync HDR page; NVIDIA lists G-Sync HDR features on the general G-Sync page, and while those features are labeled as G-Sync HDR, there is no explanation of the full differences between a G-Sync HDR monitor and a standard G-Sync monitor. The NVIDIA G-Sync HDR whitepaper is primarily background on HDR concepts plus a handful of generalized G-Sync HDR details.

For all intents and purposes, G-Sync HDR is presented not as a specification or technology but as branding for a premium product family, and right now it is more useful for consumers to think of it that way.

91 Comments

FreckledTrout - Tuesday, October 2, 2018

AUO have stated it lands this fall, so it should be very soon. They made it sound like they will have a shipping monitor by the end of 2018, though who really knows, but I'm sure it's under a year away at this point. Can Google: "AUO Expects to Launch Mini LED Gaming Monitor in 2H18"

imaheadcase - Wednesday, October 3, 2018

Don't get your hopes up; remember, the very monitor in this review was delayed 6+ months.

Lolimaster - Tuesday, October 2, 2018

384 zones is just CRAP; you only find that number of zones on low-end cheapo TVs with FALD just to be a bit more "premium". For that price it should have 1000 AT LEAST. Seems we will need to wait for LCDs with mini-LEDs to actually start seeing monitors with 5000+ zones.

know of fence - Tuesday, October 2, 2018

Consoles started to push that 4K / HDR nonsense and now the monopoly provides a monitor to match for the more-money-than-sense crowd. The obscure but sensible strobing backlight / ULMB got sacrificed for the blasted buzzwords and Gsync. Is it because the panel is barely fast enough for Gsync, or is it a general shift in direction, doubling down on proprietary G-stink and the ridiculously superfluous native 4K? Is it because with failing VR, high frame rates are off the table completely? Is there any mention of how 1920x1080 looks on that monitor (too bad), because the pixel density is decidedly useless and non-standard. But scaled down to half, it could be 81.5 ppi and this thing could actually be used to read text.

godrilla - Tuesday, October 2, 2018

$1799 at micr1 fyi!

godrilla - Tuesday, October 2, 2018

Microcenter*

Hectandan - Tuesday, October 2, 2018

"the most desired and visible aspects of modern gaming monitors: ultra high resolution (4K)"

No, it's not. At least not on Windows, where UI scaling still sucks. At least not on "slow" graphics cards like the 2080 Ti, which can't run 4K at 144fps. And 4K monitors can't display 1440p natively, which is a huge deal breaker.

Zan Lynx - Wednesday, October 3, 2018

If you had a graphics card that could always run at 144 fps, then you would have no need for GSync.

imaheadcase - Wednesday, October 3, 2018

Why would you care about scaling for gaming? Besides, there are plenty of 3rd party apps to correct Windows bullshit.

Hectandan - Thursday, October 4, 2018

No, I don't care about scaling in games, but I do care about 144fps in games. That's only possible in 4K with SLI 2080 Tis and good SLI support in the game, and plenty of games don't have it. Also, plenty of 3rd party apps don't correct Windows bullshit, and I gain no extra working space if I do scale.

Simply too many downsides and too little benefit.