The Intel Core i7-8086K Review

by Ian Cutress on June 11, 2018 8:00 AM EST

Posted in: CPUs, Intel, Core i7, Anniversary, Coffee Lake, i7-8086K, 5 GHz, 8086K, 5.0 GHz

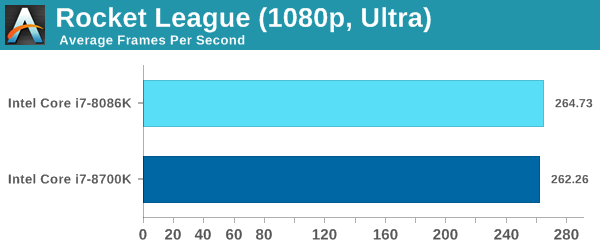

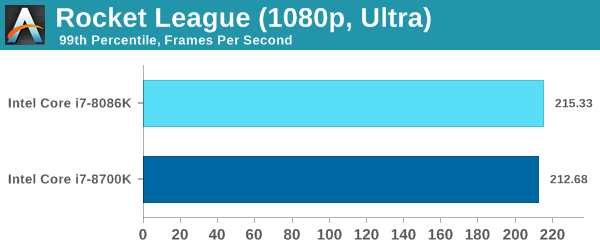

Rocket League

Hilariously simple pick-up-and-play games are great fun. I'm a massive fan of the Katamari franchise for that reason: pressing start on a controller and rolling around, picking up things to get bigger, is extremely simple. Until we get a PC version of Katamari that I can benchmark, we'll focus on Rocket League.

Rocket League combines the elements of pick-up-and-play, allowing users to jump into a game with other people (or bots) to play football with cars with zero rules. The title is built on Unreal Engine 3, which is somewhat old at this point, but it allows users to run the game on super-low-end systems while still taxing the big ones. Since the release in 2015, it has sold over 5 million copies and seems to be a fixture at LANs and game shows. Users who train get very serious, playing in teams and leagues with very few settings to configure, and everyone is on the same level. Rocket League is quickly becoming one of the favored titles for e-sports tournaments, especially when e-sports contests can be viewed directly from the game interface.

Based on these factors, plus the fact that it is an extremely fun title to load and play, we set out to find the best way to benchmark it. Unfortunately, automatic benchmark modes for games are, for the most part, few and far between. Partly because of this, but also because it is built on Unreal Engine 3, Rocket League does not have a benchmark mode. In this case, we have to develop a consistent run and record the frame rate.

Read our initial analysis on our Rocket League benchmark on low-end graphics here.

Because Rocket League has no benchmark mode, we have to perform a series of automated actions, similar to a racing game having a fixed number of laps. We take the following approach: using Fraps to record the time taken to show each frame (and the overall frame rates), we use an automation tool to set up a consistent 4v4 bot match on easy, with the system applying a series of inputs throughout the run, such as switching camera angles and driving around.
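As a minimal sketch of what a repeatable, scripted run could look like, the Python below fires a list of actions at fixed offsets from the start of the run. Everything in it is an assumption for illustration: the action names, the timings, and the `send_input` callback are hypothetical, and the automation tool actually used for this benchmark is not described in the article.

```python
import time

# Hypothetical schedule: (seconds from start of run, action name).
# The real inputs and timings used in the benchmark are not published here.
SCHEDULE = [
    (0.0, "accelerate"),
    (5.0, "switch_camera"),
    (12.5, "boost"),
    (20.0, "jump"),
]


def run_schedule(schedule, send_input, clock=time.monotonic, sleep=time.sleep):
    """Fire each action at its fixed offset from the moment the run starts.

    `send_input` is a placeholder callback; a real harness would translate
    the action name into keyboard or controller events.
    """
    start = clock()
    fired = []
    for offset, action in sorted(schedule):
        # Wait until the scheduled offset, measured from the start of the
        # run, so that timing errors do not accumulate between actions.
        remaining = offset - (clock() - start)
        if remaining > 0:
            sleep(remaining)
        send_input(action)
        fired.append((offset, action))
    return fired
```

Measuring each delay from the start of the run, rather than from the previous action, keeps every run on the same timeline even if one input is delivered slightly late.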

It turns out that this method is nicely indicative of a real bot match, driving up walls, boosting and even putting in the odd assist, save and/or goal, as weird as that sounds for an automated set of commands. To maintain consistency, the commands we apply are not random but time-fixed, and we also keep the map the same (Aquadome, known to be a tough map for GPUs due to water/transparency) and the car customization constant. We start recording just after a match starts, and record for 4 minutes of game time (think 5 laps of a DIRT: Rally benchmark), with average frame rates, 99th percentile and frame times all provided.
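To show how the reported figures relate to the recorded data, the sketch below derives an average frame rate and a 99th percentile value from a list of per-frame times in milliseconds. The function name, the sample data, and the nearest-rank percentile method are assumptions made for illustration; this is not necessarily the exact processing behind the published results, and the Fraps log format is not modeled.

```python
def summarize_frame_times(frame_times_ms):
    """Return (average_fps, p99_frame_time_ms, p99_fps) for one run."""
    if not frame_times_ms:
        raise ValueError("need at least one frame time")

    # Average FPS is frames rendered divided by elapsed seconds,
    # not the mean of per-frame instantaneous frame rates.
    total_ms = sum(frame_times_ms)
    average_fps = len(frame_times_ms) / (total_ms / 1000.0)

    # Nearest-rank 99th percentile: the frame time that 99% of frames
    # meet or beat. Integer arithmetic computes ceil(0.99 * n) exactly.
    ordered = sorted(frame_times_ms)
    rank = -(-99 * len(ordered) // 100)
    p99_ms = ordered[rank - 1]

    return average_fps, p99_ms, 1000.0 / p99_ms


# Made-up sample: 98 frames at 10 ms and 2 slow frames at 40 ms.
sample = [10.0] * 98 + [40.0] * 2
avg_fps, p99_ms, p99_fps = summarize_frame_times(sample)
print(f"average: {avg_fps:.1f} FPS, 99th percentile: {p99_fps:.1f} FPS")
```

In this made-up sample, two slow frames barely move the average but dominate the 99th percentile, which is why both numbers are worth reporting.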

The graphics settings for Rocket League come in four broad, generic settings: Low, Medium, High and High FXAA. There are advanced settings in place for shadows and details; however, for these tests, we keep to the generic settings. For both 1920x1080 and 4K resolutions, we test at the High preset with an unlimited frame cap.

All of our benchmark results can also be found in our benchmark engine, Bench.

ASRock RX 580 Performance

111 Comments

bug77 - Monday, June 11, 2018 - link

So what happened here? It looks like Intel's play with frequencies made this throttle more often. At least that's the only explanation I can find for 8700k ending up better in so many tests.

Tkan215215 - Monday, June 11, 2018 - link

As always, it's called milking and wallet ripping; they know people will still buy them anyway.

bug77 - Monday, June 11, 2018 - link

I wasn't expecting this to be a cost-effective part, but rather a collector-oriented one. But mostly worse than a standard part is surely unexpected.

AutomaticTaco - Monday, June 11, 2018 - link

I don't think it's worse so much as that the silicon lottery exists regardless. In other words, even among speed-binned parts some OC better than others. And that's true for the 8086K, the 8700K, or any others.

just4U - Wednesday, June 13, 2018 - link

I agree, bug. I'd be very interested in this processor if it brought something to the table to justify its cost. The 4790K did with a better thermal design. They could have added a kick-ass cooler, or a factory delid and redo for better thermals. Something... anything besides a small bump in clocks.

Drumsticks - Monday, June 11, 2018 - link

It might be milking, but I kind of have a hard time believing that. They're only making 50,000 of them, and only at about a 21% markup over the 8700k. But they're flat-out giving away 16% of the chips. I doubt Intel is going to milk much money beyond their regular business from this. It's the company's 50th anniversary year, so I'm going to guess it's just positive fanfare and a collector's item related to that, and it happens to be an anniversary for a well-known processor at the same time.

Old_Fogie_Late_Bloomer - Monday, June 11, 2018 - link

I enjoy hating Intel as much as the next guy but this is a good point.

Revenue from 41,914 8086Ks: $17,813,450

Revenue from 50,000 8700Ks: $17,500,000 (at $350 apiece)

The remaining $313,450 doesn't really feel like a lot of money when you factor in binning the chips and dealing with all the other overhead of the promotion, especially since Intel isn't getting all of that money anyway.
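For readers who want to check the figures in this comment, the arithmetic can be reproduced with the short sketch below. The giveaway count of 8,086 units and the 8086K unit price are not stated in the thread; both are derived here from the quoted numbers and should be read as assumptions.

```python
TOTAL_UNITS = 50_000        # production run quoted in the thread
GIVEAWAY_UNITS = 8_086      # assumption: implied by 50,000 - 41,914
PRICE_8700K = 350           # USD, as quoted
REVENUE_8086K = 17_813_450  # USD, as quoted

# Units left to sell after the giveaway.
sold_units = TOTAL_UNITS - GIVEAWAY_UNITS

# Unit price implied by the quoted 8086K revenue (derived, not quoted).
price_8086k = REVENUE_8086K / sold_units

# Revenue if all 50,000 chips were sold as 8700Ks instead.
revenue_8700k = TOTAL_UNITS * PRICE_8700K
difference = REVENUE_8086K - revenue_8700k

giveaway_share = GIVEAWAY_UNITS / TOTAL_UNITS
markup = price_8086k / PRICE_8700K - 1

print(f"sold units:      {sold_units:,}")
print(f"implied price:   ${price_8086k:,.2f}")
print(f"8700K revenue:   ${revenue_8700k:,}")
print(f"difference:      ${difference:,}")
print(f"giveaway share:  {giveaway_share:.1%}")
print(f"implied markup:  {markup:.1%}")
```

The derived price and markup line up with the "about a 21% markup" and "16% of the chips" figures quoted earlier in the thread.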

SanX - Monday, June 11, 2018 - link

This was actually not the revenue but the PROFIT, you blind people with easily effed brains. The production cost for this chip was probably less than 20 bucks. The processor in your phone is probably more hi-tech, has more transistors and more cores, and was made in more advanced factories with 10nm litho, all while being sold below $25.

mkaibear - Tuesday, June 12, 2018 - link

What are you smoking?

His maths is bang on, although he neglects the cut the retailer will be taking off the top for that. They aren't making that much profit off each chip.

SanX - Tuesday, June 12, 2018 - link

They aren't making that much profit off each chip? If they aren't making huge profits, then all mobile chip factories are losing money by selling processors with the same transistor count, like the ones in Apple or Samsung phones, for just $25.