Intel Persistent Memory Event: Live Blog

by Ian Cutress on May 30, 2018 12:31 PM EST- Posted in

- Memory

- Intel

- 3D XPoint

- Optane

- Apache Pass

Want to read our news about this event? Go here!

https://www.anandtech.com/show/12828/intel-launches-optane-dimms-up-to-512gb-apache-pass-is-here

12:34PM EDT - It's a Live Blog! More Optane inbound, looks like Apache Pass.

12:35PM EDT - Time for an unexpected Live Blog. I'm in San Francisco at Intel's building discussing Persistent Memory

12:35PM EDT - Almost three hours of talks this morning, first up Lisa Spelman covering the Data Center market

12:36PM EDT - Drivers revolve around latency - the ability to compute and respond as quickly as possible

12:36PM EDT - Intel is more than a CPU company

12:37PM EDT - A more holistic value of performance across compute, storage, acceleration and connectivity

12:37PM EDT - Acceleration includes new instructions for DL training and FPGAs

12:37PM EDT - Connectivity about removing bottlenecks around networking: Omni-Path, Silicon Photonics

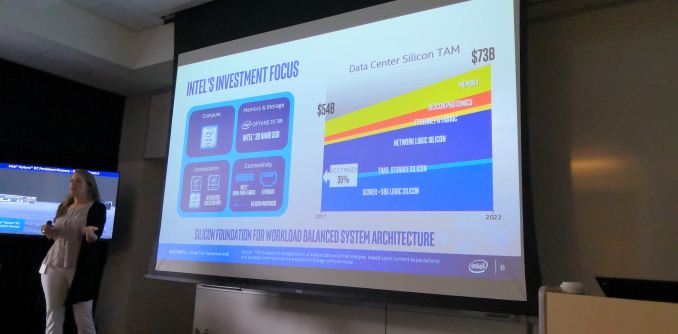

12:39PM EDT - $54B TAM in 2017, $73B TAM in 2022, MSS estimate at 35%

12:39PM EDT - >Sorry, Camera trouble. No network!

12:40PM EDT - Map services have evolved as the hardware has evolved

12:40PM EDT - Bandwidth, latency and detail have all grown

12:41PM EDT - Also user generated data

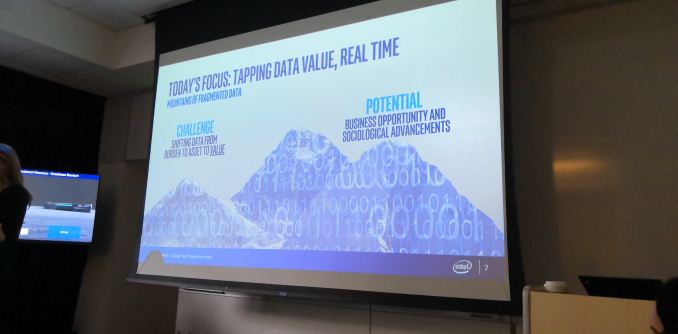

12:41PM EDT - Use cases will be unlocked as people start to look at data as an asset, rather than a burden

12:42PM EDT - Data generates data, compounding the benefit

12:42PM EDT - Intel has made progress in the industry, but huge amount of potential still there

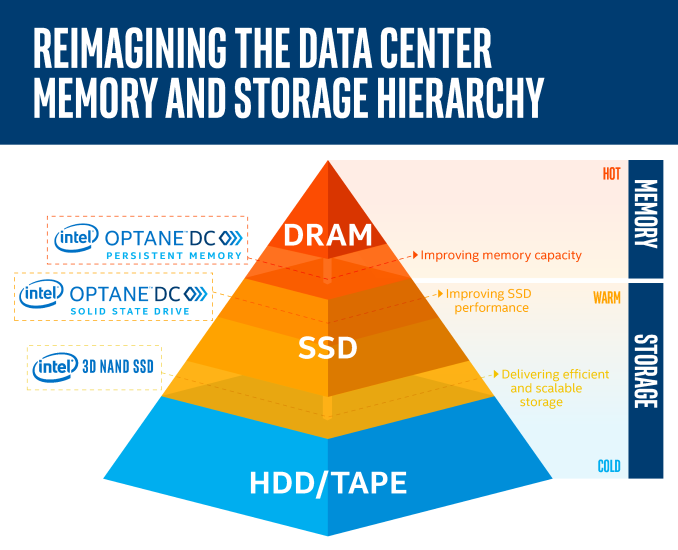

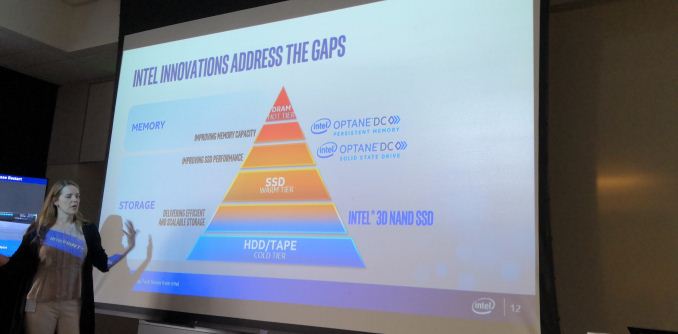

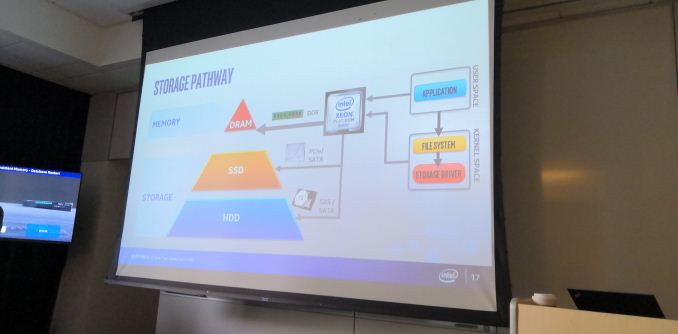

12:43PM EDT - As data exists today, three tiers: Cold Tier (HDD/Tape), Warm (SSD), Hot (DRAM)

12:45PM EDT - Fundamentally rethinking how applications are architected is difficult

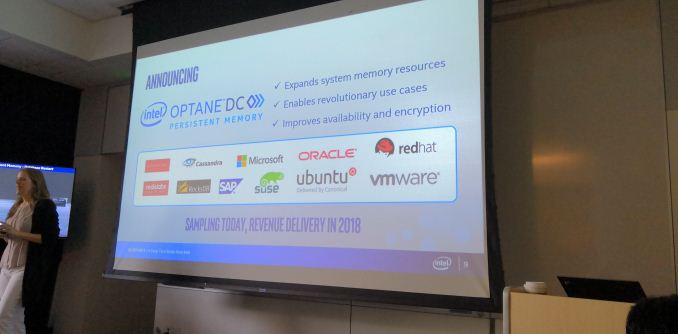

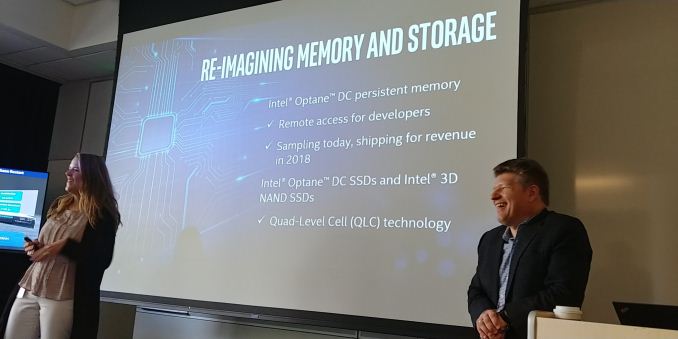

12:45PM EDT - Announcements today: Optane DC Persistent Memory

12:45PM EDT - Sampling today, Revenue in 2018

12:45PM EDT - 'Crystal Ridge' ?

12:45PM EDT - Larger capacity of memory at lower cost

12:46PM EDT - Applications developed with persistent memory in mind

12:46PM EDT - Capability for lots of applications

12:46PM EDT - Working with a bunch of companies for a long time

12:46PM EDT - Microsoft, Oracle, Red Hat, Ubuntu, SUSE, VMware

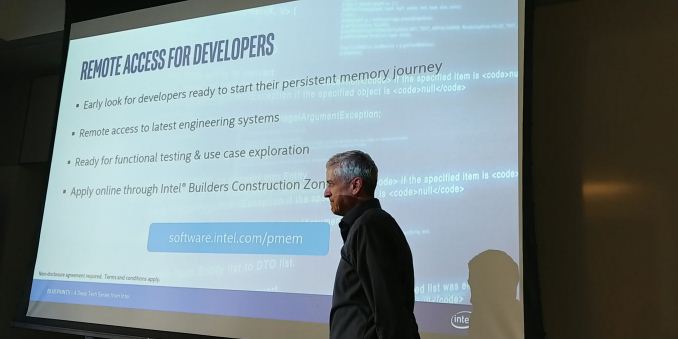

12:47PM EDT - Announcing for developers, remote access to systems with Optane DC Persistent Memory through Intel Builders Construction Zone

12:48PM EDT - Available shortly

12:48PM EDT - Weeks rather than months, but no exact date

12:48PM EDT - Complimentary, but invite only. Have to submit proposal

12:49PM EDT - Capacity sizes for developers to be covered later

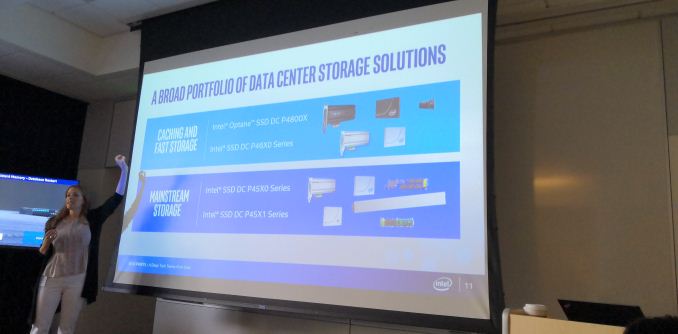

12:49PM EDT - Today, Intel offers a Caching and Fast Storage tier, and a Mainstream tier

12:50PM EDT - Larger capacities at more affordable price points

12:50PM EDT - Bridging the gap between the three tiers

12:51PM EDT - Optane DC Persistent Memory below DRAM, Optane SSD above SSD

12:51PM EDT - Intel QLC incoming

12:52PM EDT - Alper Ilkbahar, VP/GM of DC Memory and Storage on the stage now

12:52PM EDT - Discussing more technical details

12:53PM EDT - 'We want you to leave today with the same level of excitement as us'

12:53PM EDT - >As long as we leave with a sample... :)

12:54PM EDT - Reiterating the Mind The Gaps hierarchy

12:54PM EDT - Cold/Warm/Hot tiers are not continuous, they have their own specific reasons for being the way they are

12:55PM EDT - DRAM is a good fit for fast latency and small granularity

12:55PM EDT - Speed comes at the cost of implementation, and limitations on capacity

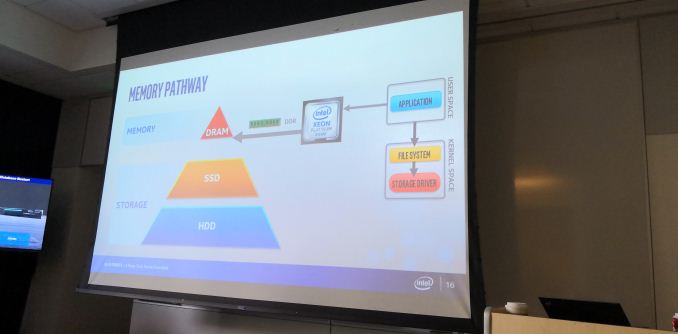

12:56PM EDT - Storage tier is accessed through different mechanisms to memory

12:56PM EDT - 10000 cycles, loses cache due to context switch

12:57PM EDT - Data returns in blocks, not bytes

12:57PM EDT - 3 orders of magnitude

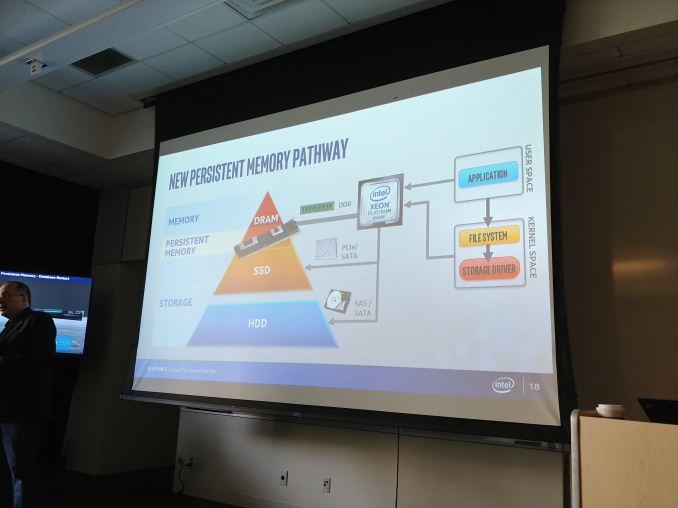

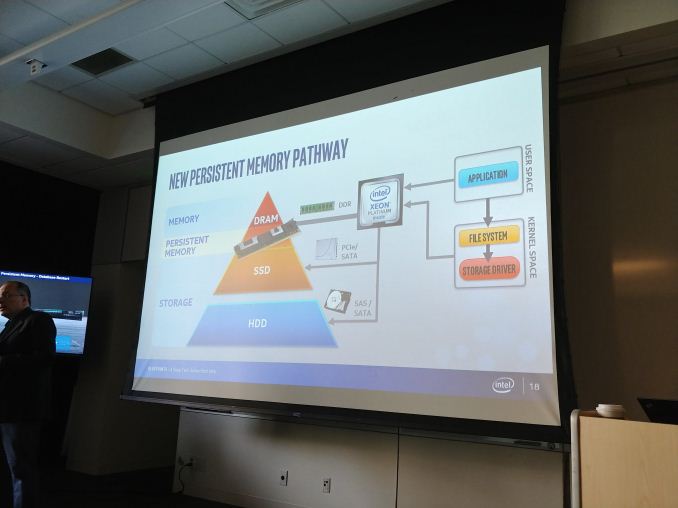

12:58PM EDT - Persistent memory bridges the gap

12:59PM EDT - Either offer a path through existing storage drivers, or allow applications to access the hardware directly and bypass them

12:59PM EDT - Intel is working on the latter

12:59PM EDT - 'It acts like a true memory'

12:59PM EDT - Simplifies compute significantly

12:59PM EDT - DRAM-like speeds on DDR bus. Hardware accessible, byte accessible, large capacity, lower cost

01:00PM EDT - >(sorry, pictures are SUPER slow to upload)

01:00PM EDT - 3 SKUs at launch - 128 GB, 256 GB, 512 GB

01:01PM EDT - Essentially a hardware memory accessed with load/store instructions

01:01PM EDT - DDR4 pin compatible

01:01PM EDT - Compatible with next generation Xeon platforms

01:01PM EDT - Does not rely on external power or capacitors

01:01PM EDT - Uses NVM technology for persistence

01:02PM EDT - Hardware encryption on chip and advanced ECC

01:02PM EDT - Boot time encryption key

01:03PM EDT - Demo time. Database restart when database is in NVM memory.

01:05PM EDT - Scales beyond with big and affordable memory. More capacity = more containers

01:07PM EDT - Meeting the same availability uptime

01:08PM EDT - Helps systems where memory is a bottleneck

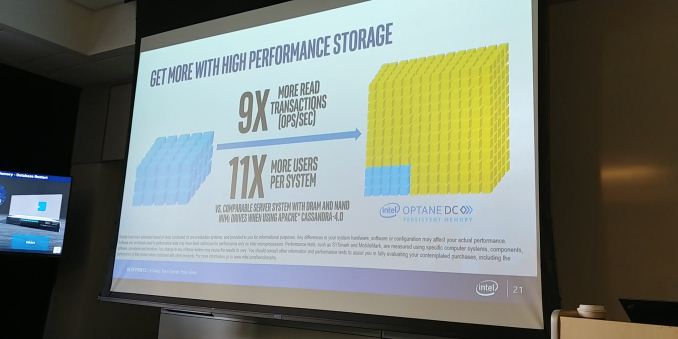

01:10PM EDT - Cassandra database example

01:10PM EDT - Optane DC Persistent Memory gives 9x more read transactions (OP/Sec), 11x more users per system

01:12PM EDT - >The image looks like it's using less DRAM but a big Optane drive

01:12PM EDT - > e.g. instead of 1 TB DRAM, use 256 GB DRAM + 1 TB Optane DC PM

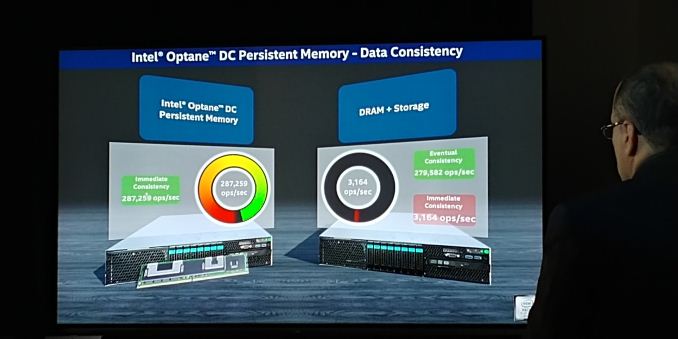

01:13PM EDT - Optane DC PM allows for immediate consistency compared to traditional DRAM+NVMe

01:14PM EDT - Now a key-value test comparison

01:15PM EDT - Every now and then, DRAM has to push data out to storage, which limits the perf

01:16PM EDT - 99% perf loss on DRAM+Storage when relying on storage write-backs

01:17PM EDT - 287k ops/sec on Optane DC PM, vs 3k-279k on DRAM+Storage model
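
As a rough check on the 99% figure, the quoted throughput range for the DRAM+Storage model can be turned into a worst-case loss. This is only a sketch built from the slide numbers above; the function name `perf_loss` is made up for illustration and nothing here reflects how Intel ran the test.

```python
def perf_loss(best_ops: float, worst_ops: float) -> float:
    """Fraction of throughput lost when dropping from best_ops to worst_ops."""
    return 1.0 - worst_ops / best_ops


# Figures quoted on the slide, in operations per second.
dram_best, dram_worst = 279_000, 3_000
optane = 287_000

loss = perf_loss(dram_best, dram_worst)
print(f"DRAM+Storage worst-case loss: {loss:.1%}")
print(f"Optane vs DRAM+Storage best case: {optane / dram_best:.2f}x")
```

The worst-case loss works out to roughly 98.9%, which lines up with the "99% perf loss" quoted, while the best-case DRAM+Storage number sits within a few percent of the Optane result.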

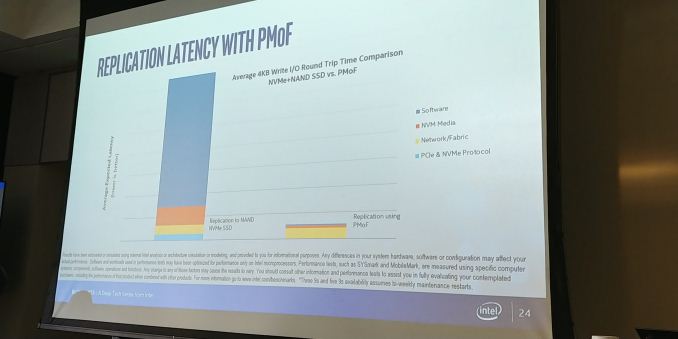

01:18PM EDT - Now Persistent Memory over Fabric (PMoF) for data replication with direct load/store access

01:19PM EDT - Pulling data from PM on another system over the fabric

01:20PM EDT - Current Replication from NAND to NAND across systems has high latency. Over PMoF using Optane DC PM, latency is much lower

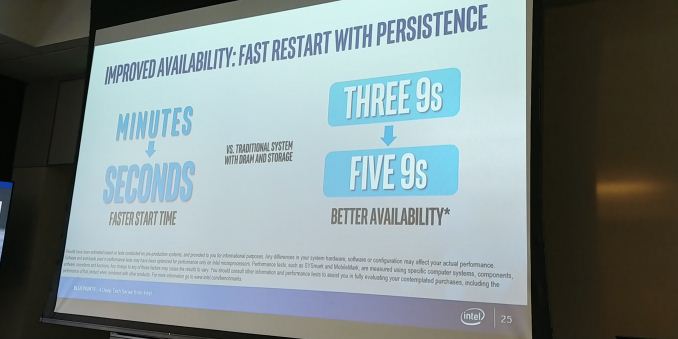

01:21PM EDT - On the Database Restart test, Optane DC PM took 16.9s. DRAM+Storage system took 2100 seconds

01:21PM EDT - Moving reliability from three 9s to five 9s

01:22PM EDT - start time goes from minutes to seconds
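
To put the restart times next to the "nines" claim, here is a back-of-the-envelope sketch. It assumes a 365-day year and treats restarts as the only source of downtime, neither of which was stated on stage; the restart times are the ones from the demo above.

```python
SECONDS_PER_YEAR = 365 * 24 * 60 * 60  # assumption: non-leap year


def downtime_budget(nines: int) -> float:
    """Allowed downtime in seconds per year at an availability of N nines."""
    return SECONDS_PER_YEAR * 10.0 ** -nines


restart_dram = 2100.0   # seconds, DRAM+Storage restart from the demo
restart_optane = 16.9   # seconds, Optane DC PM restart from the demo

for nines in (3, 5):
    budget = downtime_budget(nines)
    print(
        f"{nines} nines: {budget:.1f} s/year, "
        f"{int(budget // restart_dram)} DRAM+Storage restarts, "
        f"{int(budget // restart_optane)} Optane DC PM restarts"
    )
```

Under these assumptions, a single 2100-second restart is already larger than the whole five-nines yearly budget of about 315 seconds, while a 16.9-second restart fits inside it many times over.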

01:22PM EDT - >Servers can take 10 minutes to start up

01:22PM EDT - Andy Rudoff to the stage. Head PM software architect

01:23PM EDT - 'Joined the project many years ago'

01:23PM EDT - Making sure ISVs can use the technology

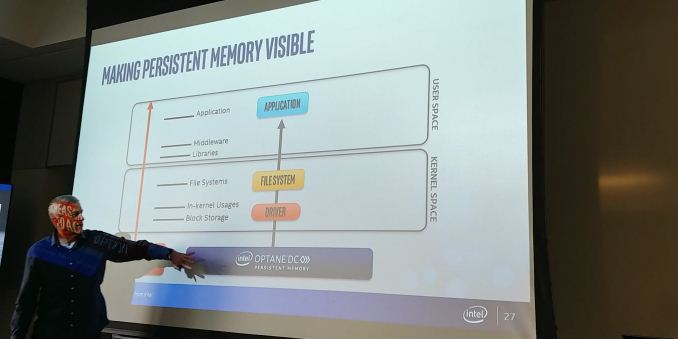

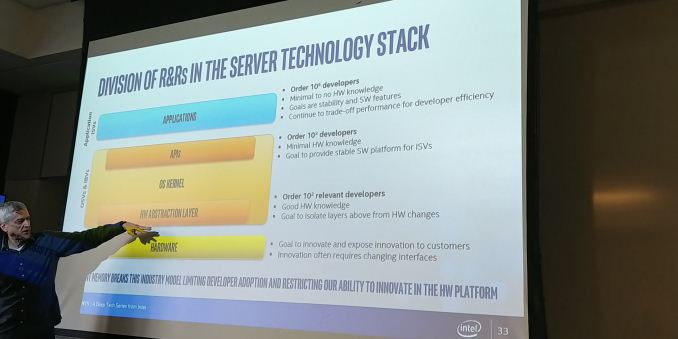

01:23PM EDT - How far up the stack should the PM be visible

01:24PM EDT - Like a fast disk, or a fast cache, or a programmable file system

01:24PM EDT - The higher you go up the stack, the more software is needed, but the more value can be drawn from it

01:24PM EDT - Balancing act between the barrier to adoption and increasing application value

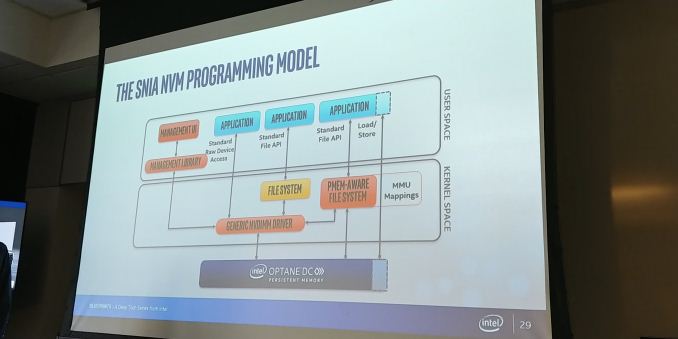

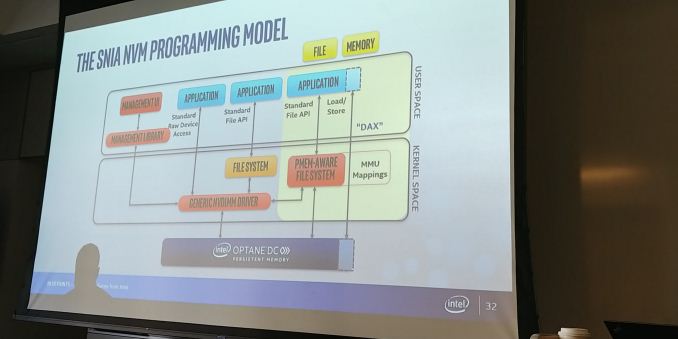

01:25PM EDT - SNIA NVM Programming Model. 30 members, co-chaired by Intel and HPE

01:26PM EDT - Making sure ISVs do not get locked into a proprietary API

01:27PM EDT - Three paths - management, storage, and application

01:28PM EDT - Use cases from generic NVDIMM driver (looks like a fast drive) up to a fully configured system

01:29PM EDT - Memory mapping a file typically has context switching. PM allows for direct access

01:29PM EDT - Persistent Memory Aware File System required for direct load/store

01:29PM EDT - Intel calls this 'DAX' - Direct Access
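
For readers unfamiliar with the load/store idea, the sketch below memory-maps an ordinary file using Python's standard `mmap` module and then reads and writes it with plain slice operations instead of `read()`/`write()` calls. It runs on a regular temporary file, so it only illustrates the programming style; whether the same mapping actually bypasses the page cache depends on a persistent-memory-aware file system, which is not shown here. The helper names and `REGION_SIZE` are hypothetical.

```python
import mmap
import os
import tempfile

REGION_SIZE = 4096  # hypothetical size of the mapped region, in bytes


def make_region(path: str) -> None:
    """Create a zero-filled backing file of REGION_SIZE bytes."""
    with open(path, "wb") as f:
        f.truncate(REGION_SIZE)


def store_bytes(path: str, offset: int, payload: bytes) -> None:
    """Write payload into the mapped file with a slice assignment."""
    with open(path, "r+b") as f:
        with mmap.mmap(f.fileno(), REGION_SIZE) as region:
            region[offset:offset + len(payload)] = payload
            region.flush()  # ask the OS to write the mapped pages back


def load_bytes(path: str, offset: int, length: int) -> bytes:
    """Read bytes back from a fresh read-only mapping of the same file."""
    with open(path, "rb") as f:
        with mmap.mmap(f.fileno(), REGION_SIZE, access=mmap.ACCESS_READ) as region:
            return bytes(region[offset:offset + length])


if __name__ == "__main__":
    demo_path = os.path.join(tempfile.mkdtemp(), "region.bin")
    make_region(demo_path)
    store_bytes(demo_path, 128, b"optane")
    print(load_bytes(demo_path, 128, 6))
```

The second mapping is opened separately on purpose: it shows that the bytes are recovered from the backing file rather than from the first mapping's in-process state.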

01:31PM EDT - Data structures are still persistent in memory, saving time

01:31PM EDT - Most developers want to use DC PM with little hardware knowledge

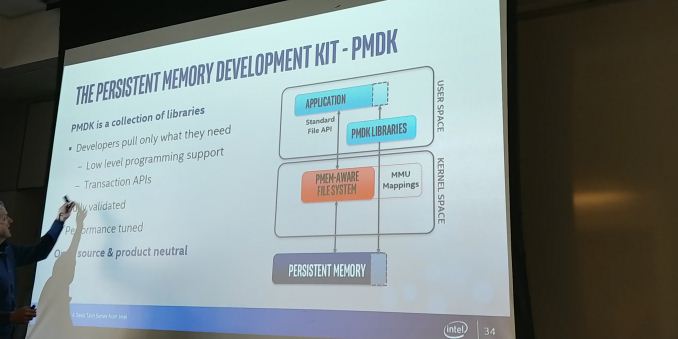

01:34PM EDT - Persistent Memory Development Kit - PMDK

01:34PM EDT - Collection of Libraries

01:35PM EDT - Low level programming support, transaction APIs, performance tuned, open source and product neutral

01:35PM EDT - Not trying to make money on PMDK, trying to enable the ecosystem

01:35PM EDT - PMoF is covered in PMDK

01:36PM EDT - Sync replication available now, Async is a work in progress

01:38PM EDT - Windows and Linux

01:38PM EDT - VTune has been updated to understand Persistent Memory

01:40PM EDT - Free downloads of PMDK available

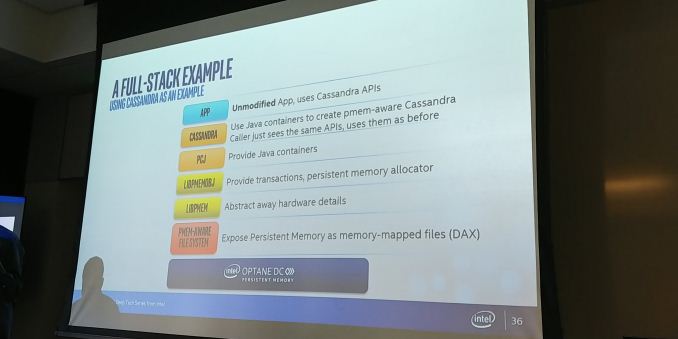

01:40PM EDT - Cassandra full stack example

01:42PM EDT - Changed Cassandra to use persistent Java containers

01:43PM EDT - The things that Cassandra provides don't change - the app on top using Cassandra doesn't need to know about the PM model

01:45PM EDT - SAP Hana has already done an Optane DC PM demo

01:45PM EDT - VMWare has done changes to Hyper-V

01:46PM EDT - Intel has done changes for Xen and posted them for upstream review

01:48PM EDT - Remote Developer Access is now in the early look stage

01:48PM EDT - Submit proposals to Intel. Ready for functional testing and use case exploration

01:49PM EDT - OK small 10-min break

02:08PM EDT - We got some photos of the modules

02:09PM EDT - 256 GB and 512 GB modules

02:09PM EDT - OK here we go again

02:09PM EDT - Bill Leszinske to the stage. Head of the NVMe group

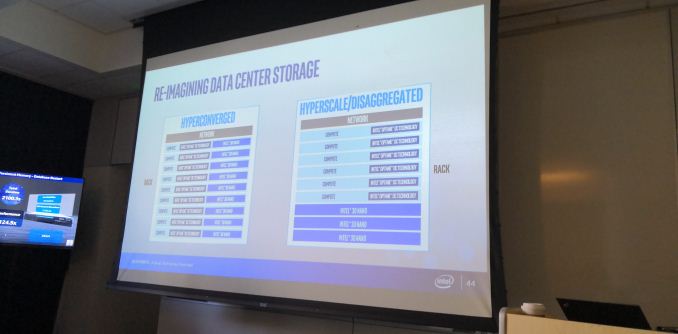

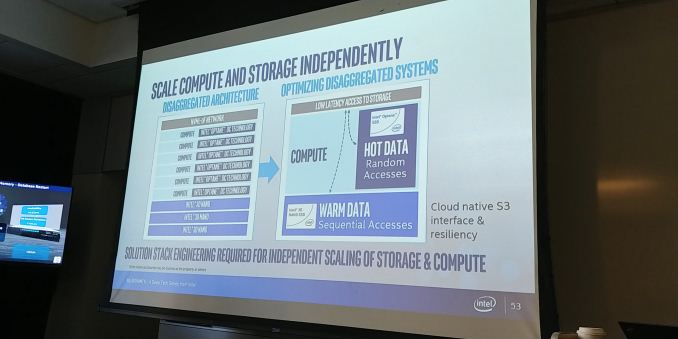

02:10PM EDT - Two directions = Hyperconverged and Hyperscale / Disaggregated

02:13PM EDT - Discussing 3D NAND vs 3D XPoint

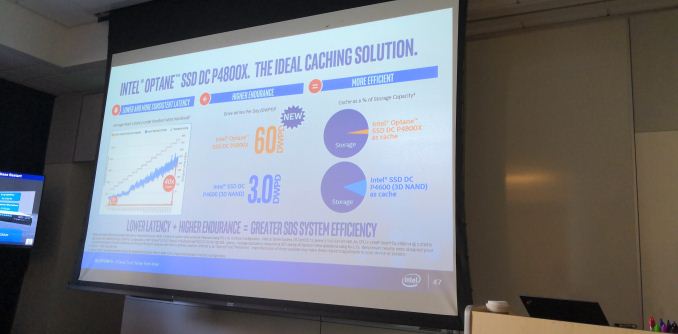

02:13PM EDT - Optane is great for storage, and caching in particular

02:14PM EDT - 'Optane has impressive endurance'

02:14PM EDT - Drives moving up to 60 drive writes per day

02:16PM EDT - Swapping NAND for Optane might lower the cache size, but gives more perf

02:17PM EDT - Intel will introduce new drives at 60 DWPD, rather than what the slide says about the P4800X. That stays at 30 DWPD
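
To get a feel for what a drive-writes-per-day rating means in total data written, here is a small sketch. The 375 GB capacity and the five-year rating period are illustrative assumptions that were not given in the talk; only the 30 and 60 DWPD figures come from the notes above.

```python
def lifetime_writes_pb(capacity_gb: float, dwpd: float, years: float) -> float:
    """Total petabytes written over the rated period, in decimal units."""
    gb_written = capacity_gb * dwpd * 365 * years
    return gb_written / 1_000_000  # 1 PB = 1,000,000 GB


CAPACITY_GB = 375    # assumption: example capacity, not from the talk
RATED_YEARS = 5      # assumption: example rating period, not from the talk

for dwpd in (30, 60):
    total = lifetime_writes_pb(CAPACITY_GB, dwpd, RATED_YEARS)
    print(f"{dwpd} DWPD -> {total:.2f} PB written over {RATED_YEARS} years")
```

Because the relationship is linear, doubling the DWPD rating at the same capacity and rating period doubles the total writes.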

02:17PM EDT - Still using first gen 3DXP

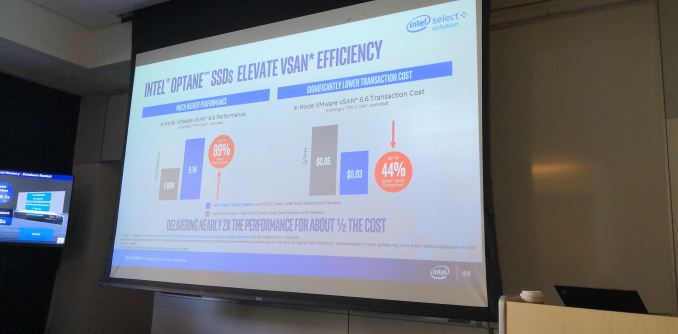

02:18PM EDT - Intel team worked for a year with VMware to get a significant perf increase using Optane

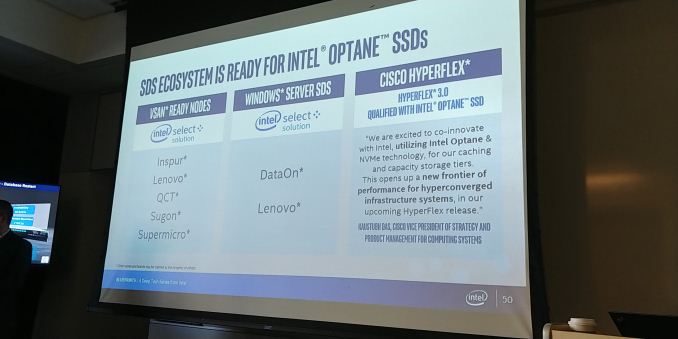

02:18PM EDT - Wanting to work with all the hyperconverged players

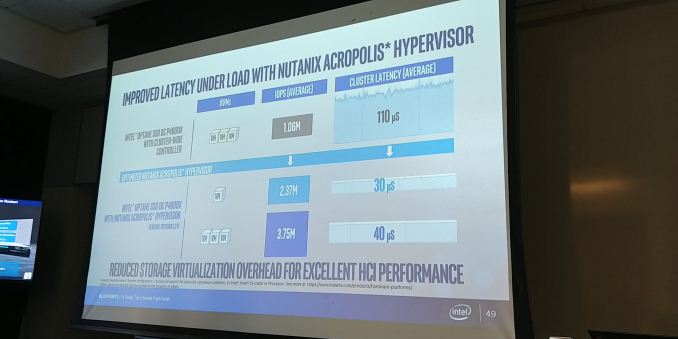

02:20PM EDT - Lower read latencies when taking out software-based latency

02:21PM EDT - Taking away noisy neighbor problems

02:22PM EDT - Intel Optane SSD deployments still increasing, increase in OEMs offering vSAN Ready Nodes

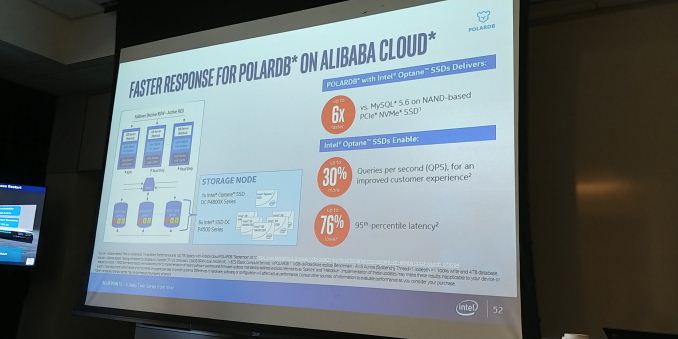

02:24PM EDT - Optane storage in MySQL mission critical cloud server database

02:24PM EDT - tiering the data between Optane and 3D NAND

02:25PM EDT - Always important to balance Optane/NAND ratio

02:26PM EDT - Disaggregating storage from compute allows them to scale independently as required

02:27PM EDT - Use Optane for random access, NAND for sequential access

02:28PM EDT - Making sure the local data set is in the right location for the type of access

02:28PM EDT - 'If you design the system properly'

02:31PM EDT - Disaggregation allows for tuning performance and resilience

02:31PM EDT - Announcements about new Optane storage software stacks in the near future

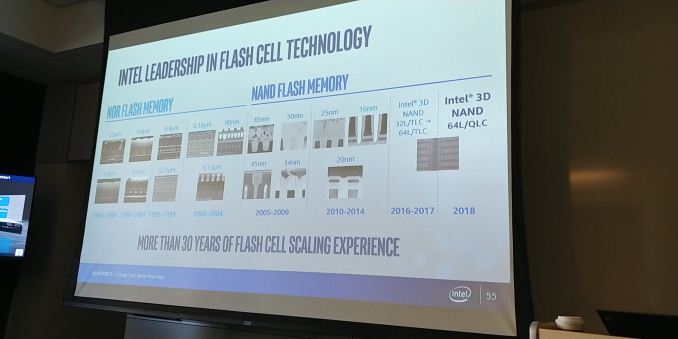

02:32PM EDT - Now for more on 3D NAND

02:32PM EDT - NAND is all about cost

02:32PM EDT - Replace more HDDs with SSDs

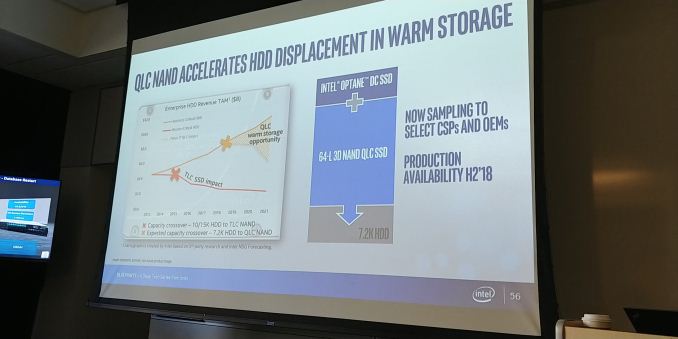

02:32PM EDT - Moving to QLC

02:33PM EDT - Have been using floating gate technology throughout

02:33PM EDT - Currently shipping 64L TLC. Just gone to production of 64L QLC

02:34PM EDT - A bit of Optane and a whole lot of 3D NAND

02:34PM EDT - Now sampling QLC, production availability in H2 2018

02:34PM EDT - Moving QLC NAND into the Warm storage tier

02:34PM EDT - pushing HDDs further into the cold storage

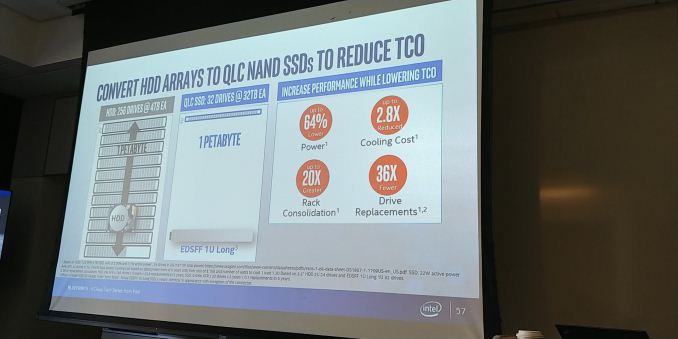

02:35PM EDT - Using the Ruler form factor to reinvent the data center

02:35PM EDT - $10B+ TAM in 2021

02:35PM EDT - 16 TB Ruler

02:36PM EDT - Replace 256 x 4 TB HDDs with 32 ruler drives for a single 1U

02:36PM EDT - (Also, less likely to break)

02:37PM EDT - Ruler = EDSFF

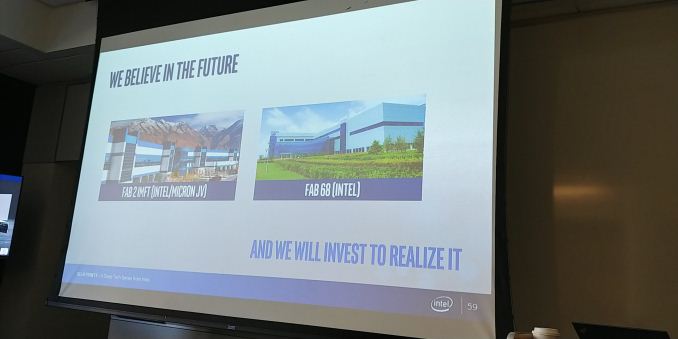

02:37PM EDT - Investment in Fab 2 and Fab 68

02:37PM EDT - Fab 2 in Utah, dedicated for 3D XP. 100% converted

02:38PM EDT - Fab 68 is doing all of Intel's 3D NAND

02:38PM EDT - No public numbers on wafers per month from Fab 68, but much bigger than Fab 2

02:38PM EDT - New fab space at Fab 68 coming online, which is 1.75x bigger than previous

02:40PM EDT - QLC will be in client drives in second half of this year

02:41PM EDT - Q&A time for a bit

02:56PM EDT - OK Q&A over, here are the questions and results

02:56PM EDT - Q) Do the DIMMs require next gen CPUs? A) Yes

02:57PM EDT - Q) You mentioned Intel will be revenue shipping for 2018. Will ecosystem partners be shipping systems in 2018? A) We expect systems to be shipping in 2018, but the details of specific customers will be up to them

02:57PM EDT - Q) What is Crystal Ridge? A) Crystal Ridge is the top level family of persistent memory parts, of which Apache Pass is a member

02:58PM EDT - Q) DDR4 pin compatibility was mentioned for the DIMMs. Can users mix and match the DIMMs on individual channels, or will there be dedicated channels? A) Not disclosed at this time.

02:59PM EDT - Q) One of the slides had a Xeon Platinum logo. Will the DIMMs only work on Xeon Platinum? A) Not disclosed at this time. We just showed pairing the best with the best

02:59PM EDT - Q) Will there be a DIMM writes per day rating? A) Not disclosed at this time. But DIMMs will be operational for the lifetime of the DIMM

03:00PM EDT - Q) What is the power draw of the Optane DIMM compared to regular DIMMs? A) Not disclosed at this time.

03:00PM EDT - Q) Does this mean the memory limits of future Xeons are being increased? A) Not disclosed at this time.

03:00PM EDT - Q) Will all future Xeons support the Optane DIMMs? A) Not disclosed at this time.

03:00PM EDT - Q) Clock speeds of the DIMMs? A) Standard DDR4 speeds. Other details not disclosed.

03:01PM EDT - Q) Will the QLC NAND adhere to JEDEC data retention? A) Not disclosed at this time.

03:01PM EDT - That's a wrap. Some discussions and customer presentations for the rest of the day.

28 Comments

sharath.naik - Wednesday, May 30, 2018

Not sure if the industry will adopt this. The whole idea of ram is fast access at RUN TIME and having the hard drive as a permanent storage (5000 write is manageable as most writes happen in memory due to transient data). But having a write cycle life to the ram does not make any sense, that too something that is as low as 10000 writes. Boot up time for servers has never been an issue after the advent of SSDs. Seems like a pointless implementation, unless there is no write cycles.

haukionkannel - Wednesday, May 30, 2018

Because these work directly as system memory and are also faster than any ssd solution. They are very useful in situations where you have to handle very large files, or anything that eats a lot of memory. So big that it does not fit to normal ram. This is much faster than loading that data from any normal storage. Some heavy video editing would be quite optimal to this type of memory or some very large databases, so that the whole thing would stay in the system memory.

CaedenV - Wednesday, May 30, 2018

Think about having a huge database, and how no amount of RAM can really hold it. So instead you keep the active bits of the DB in RAM, and the rest of it on the fastest storage possible. It used to be HDDs, then SSDs, then Optane, and now this. Much cheaper than RAM, and much faster than SSDs, this is what Optane/xpointe was supposed to be from day 1. Buy a server with a 'mere' 128GB of RAM, and 512GB of this optane and you can save a ton of money while taking virtually no performance hit. And with the ability to quickly back up RAM to the Optane you can get away with smaller battery backups and easier power recovery options.

This is what Optane should have been from day 1. There is a real use for this rather than the BS released with previous Optane SSDs. Glad to see it finally come out, and hopefully they launch it well.

name99 - Wednesday, May 30, 2018

Venues like Flash Memory Summit have been talking about this for years. That's the point of the references to SNIA.

The point is that what you are supposed to get is PERSISTENT RANDOM BYTE access. This has a number of consequences, but most importantly it allows you to design things like databases or file systems that can utilize "in-memory" optimized data structures based on pointers rather than IO optimized data structures built around 4K atomic units; and to have reads and writes that are pure function calls without having to go through OS (and then IO) overhead.

Technically there are a number of challenges that make this non-trivial; in particular to ensure ACID transactions just like with a traditional database you have to ensure that metadata is written out in a very particular order (so that if something goes wrong partway, the persistent state is not inconsistent) and this means both new cache control instructions needed in the CPU, and new algorithms+data structures needed for the design and manipulation of the database/file system.

Now, that's the dream. Because Intel has been SO slow in releasing actual nvDIMMs people are (justifiably) more than a little skeptical of exactly what they ARE releasing and its characteristics. IF Intel were to release what they promised years ago, everything I said above would work just great. You'd have the equivalent of say 500GB persistent storage in your computer with the performance (more or less) of DRAM and, rather than the most simple-minded solutions of using all that space as a cache for the file system, you would use it to host actual databases used by the OS and/or some applications for even better performance.

BUT no-one (sane) trusts Intel any more so, yeah, your skepticism is warranted. WHEN real, decent, persistent DRAM arrives at DRAM speeds and acceptable power levels, what I said above will happen. IS that what Intel is delivering? Hmm. Their vagueness about details and specs makes one wonder...

It certainly SEEMS like they are pushing this as a faster (old-style) file system that's on the DRAM bus rather than the PCI bus so that much closer to CPU; but they are NOT pushing the fact that you can use the persistence for memory-style (pointer-based) data structures rather than as a file system. (The stuff they call DAX).

This may just reflect that those (fancier) use cases are not yet ready --- or it may reflect the fact that their write rates and write endurance are not good enough...

peevee - Thursday, May 31, 2018

"The whole idea of ram is fast access at RUN TIME"

That WAS the idea of RAM, up to about 20-25 years ago. Since then, CPU speeds and DRAM speeds started diverging more and more, and now performance profile of DRAM looks like the performance profile of HDD 25 years ago (you can read 512B sector quite quickly after a very significant latency).

Real RAM now is last level cache - unfortunately, it is as small as RAM used to be 25 years ago while requirements of modern, terribly unoptimized software are much higher.

sharath.naik - Wednesday, May 30, 2018

Samsung's rapid Ram cached SSD with some capacitors to push the data to SSD in case of power failure will achieve a better solution than this.

rocky12345 - Wednesday, May 30, 2018

Yea I use Samsung Rapid Cache on my Sammy SSD it works fairly well too I have not had any problems with it yet...."Knocks on wood"...lol

Billy Tallis - Wednesday, May 30, 2018

The whole point of these Optane DIMMs is to offer capacities beyond the amount of DRAM you can afford. NVDIMMs that use DRAM as working memory and backup to NAND flash on power loss are only at 32GB per module and require external capacitor banks. These Optane DIMMs will offer 4-16x the capacity and don't need any supercaps to be non-volatile.

SharpEars - Wednesday, May 30, 2018

What are the price points? Are we talking $3999 per module, here?

Ian Cutress - Wednesday, May 30, 2018

This is looking more a disclosure than a launch. Launch in the future, as it requires next-generation Xeons. Still waiting for the Q&A, news is breaking :)