The Toshiba RC100 SSD Review: Tiny Drive In A Big Market

by Billy Tallis on June 14, 2018 9:00 AM EST

Exploring the NVMe Host Memory Buffer Feature

Most modern SSDs include onboard DRAM, typically in a ratio of 1GB RAM per 1TB of NAND flash memory. This RAM is usually dedicated to tracking where each logical block address is physically stored on the NAND flash—information that changes with every write operation due to the wear leveling that flash memory requires. This information must also be consulted in order to complete any read operation. The standard DRAM to NAND ratio provides enough RAM for the SSD controller to use a simple and fast lookup table instead of more complicated data structures. This greatly reduces the work the SSD controller needs to do to handle IO operations, and is key to offering consistent performance.
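The 1GB-per-1TB ratio is what you get if you assume that the drive tracks flash in 4 KiB units with a 4-byte physical address per unit. That layout is a simplification we are making here, not something vendors disclose. A minimal Python sketch of the arithmetic, including why a flat table makes each lookup trivial:

```python
# Sizing a flat logical-to-physical (L2P) mapping table.
# Assumptions (ours, not vendor data): 4 KiB mapping granularity, 4-byte entries.

MAPPING_UNIT_BYTES = 4 * 1024  # assumed amount of user data tracked per entry
ENTRY_BYTES = 4                # assumed size of one physical-address entry
GiB = 1024**3
TiB = 1024**4


def l2p_table_bytes(capacity_bytes: int) -> int:
    """DRAM needed to hold one mapping entry per 4 KiB of logical capacity."""
    entries = capacity_bytes // MAPPING_UNIT_BYTES
    return entries * ENTRY_BYTES


def lookup(table: list[int], lba: int) -> int:
    """With a flat table, translating an LBA is a single array index."""
    return table[lba]


if __name__ == "__main__":
    print(l2p_table_bytes(1 * TiB) / GiB)  # 1.0 -> 1 GiB of DRAM per 1 TiB of flash
    table = [7, 3, 42]                     # toy table: LBA -> physical page
    print(lookup(table, 2))                # 42
```

Replacing the flat table with a more compact structure saves DRAM but turns each lookup into more work for the controller, which is the trade-off described above.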

SSDs that omit this DRAM can be cheaper and smaller, but because they can only store their mapping tables in the flash memory instead of much faster DRAM, there's a substantial performance penalty. In the worst case, read latency is doubled, as potentially every read request from the host first requires a NAND flash read to look up the logical-to-physical address mapping, then a second read to actually fetch the requested data.
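The worst-case doubling follows directly from counting NAND reads per host read. A toy Python model; the timing arguments are placeholders, not measured values:

```python
# Toy read-latency model: a host read is one mapping lookup plus one NAND read.
# Timing values passed in are hypothetical placeholders, not measurements.

def read_latency(t_nand_read: float, t_map_lookup: float) -> float:
    """Latency of one host read: translate the address, then fetch the data."""
    return t_map_lookup + t_nand_read


def worst_case_ratio(t_nand_read: float, t_dram_lookup: float) -> float:
    """Slowdown of a DRAMless read whose mapping must itself come from NAND."""
    with_dram = read_latency(t_nand_read, t_dram_lookup)
    dramless = read_latency(t_nand_read, t_nand_read)  # lookup is a NAND read too
    return dramless / with_dram


if __name__ == "__main__":
    # If the DRAM lookup is negligible, the DRAMless read takes twice as long.
    print(worst_case_ratio(100.0, 0.0))  # 2.0
```

Any nonzero DRAM lookup time makes the ratio slightly less than 2, so "doubled" is the upper bound of this simple model.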

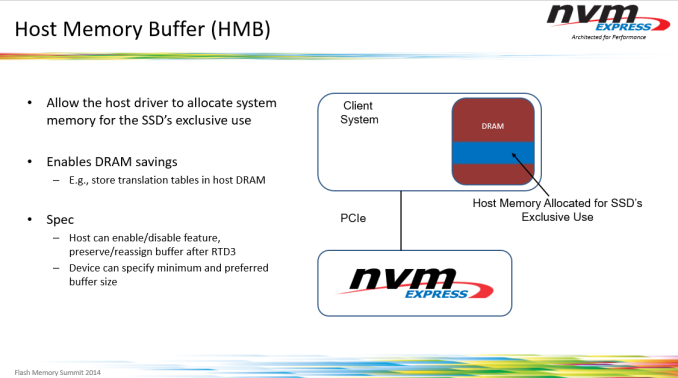

The NVMe version 1.2 specification introduced an in-between option for SSDs. The Host Memory Buffer (HMB) feature takes advantage of the DMA capabilities of PCI Express to allow SSDs to use some of the DRAM attached to the CPU, instead of requiring the SSD to bring its own DRAM. Accessing host memory over PCIe is slower than accessing onboard DRAM, but still much faster than reading from flash. The HMB is not intended to be a full-sized replacement for the onboard DRAM that mainstream SSDs use. Instead, all SSDs using the HMB feature so far have targeted buffer sizes in the tens of megabytes. This is sufficient for the drive to cache mapping information for tens of gigabytes of flash, which is adequate for many consumer workloads. (Our ATSB Light test only touches 26GB of the drive, and only 8GB of the drive is accessed more than once.)
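Under the same assumed layout (4-byte entries per 4 KiB of flash, an assumption on our part), each MiB of mapping data covers about 1 GiB of flash, which is why a buffer of tens of megabytes covers tens of gigabytes. A quick Python check; it ignores whatever else the firmware might keep in the buffer, which vendors don't disclose:

```python
# How much flash a mapping cache can cover, assuming 4-byte entries per 4 KiB.
# Firmware may reserve part of the HMB for other data; that is ignored here.

MAPPING_UNIT_BYTES = 4 * 1024
ENTRY_BYTES = 4
MiB = 1024**2
GiB = 1024**3


def covered_flash_bytes(cache_bytes: int) -> int:
    """Flash capacity whose mappings fit in cache_bytes of host memory."""
    entries = cache_bytes // ENTRY_BYTES
    return entries * MAPPING_UNIT_BYTES


if __name__ == "__main__":
    for size_mib in (10, 38, 64):
        gib = covered_flash_bytes(size_mib * MiB) / GiB
        print(f"{size_mib} MiB buffer -> {gib:.0f} GiB of flash")
```

By this estimate, a 38 MiB buffer could hold the mappings for all 26GB that ATSB Light touches.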

Caching is of course one of the most famously difficult problems in computer science, and none of the SSD controller vendors are eager to share exactly how their HMB-enabled controllers and firmware use the host DRAM they are given, but it's safe to assume the caching strategies focus on retaining the most recently and heavily used mapping information. Areas of the drive that are accessed repeatedly will have read latencies similar to those of mainstream drives, while data that hasn't been touched in a while will be accessed with performance resembling that of traditional DRAMless SSDs.
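Since vendors won't say how their firmware manages the buffer, the following is only a guess at the general shape: a least-recently-used (LRU) cache of mapping segments, one common way to keep the most recently used entries resident. `MappingCache` and its parameters are hypothetical names for this sketch, not any vendor's design:

```python
from collections import OrderedDict


class MappingCache:
    """Hypothetical LRU cache of L2P mapping segments held in host memory.

    Each segment covers a contiguous range of logical blocks. On a miss, the
    segment would have to be read from NAND (the slow, DRAMless path).
    """

    def __init__(self, capacity_segments: int, lbas_per_segment: int):
        self.capacity = capacity_segments
        self.lbas_per_segment = lbas_per_segment
        self.segments = OrderedDict()  # segment number -> placeholder payload
        self.hits = 0
        self.misses = 0

    def lookup(self, lba: int) -> bool:
        """Return True on a cache hit, False if the segment had to be loaded."""
        seg = lba // self.lbas_per_segment
        if seg in self.segments:
            self.segments.move_to_end(seg)  # mark as most recently used
            self.hits += 1
            return True
        self.misses += 1
        self.segments[seg] = "loaded-from-nand"  # stand-in for real mapping data
        if len(self.segments) > self.capacity:
            self.segments.popitem(last=False)  # evict least recently used
        return False


if __name__ == "__main__":
    cache = MappingCache(capacity_segments=2, lbas_per_segment=1000)
    for lba in [5, 10, 1500, 7, 2500, 1600]:
        cache.lookup(lba)
    print(cache.hits, cache.misses)  # 2 4
```

Repeated accesses to the same region stay fast (hits), while scattered accesses keep evicting segments, matching the behavior described above.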

SSD controllers do have some memory built into the controller itself, but usually not enough to allow a significant portion of NAND mapping tables to be cached. For example, the Marvell 88SS1093 Eldora high-end NVMe SSD controller has numerous on-chip buffers with capacities in the kilobyte range and an aggregate capacity of less than 1MB. Some SSD vendors have hinted that their controllers have significantly more on-board memory; Western Digital says this is why their SN520 NVMe SSD doesn't use HMB, but they declined to say how much memory is on that controller. We've also seen some other drives in recent years that don't fall clearly into the DRAMless category or the 1GB per TB ratio. The Toshiba OCZ VX500 uses a 256MB DRAM part for the 1TB model, but the smaller capacity drives rely just on the memory built into the controller (and of course, Toshiba didn't disclose the details of that controller architecture).

The Toshiba RC100 requests a block of 38MB of host DRAM from the operating system. The OS could provide more or less than the drive's preferred amount, and if the RC100 gets less than 10MB it will give up on trying to use HMB at all. Both the Linux and Windows NVMe drivers expose some settings for the HMB feature, allowing us to test the RC100 with HMB enabled and disabled. In theory, we could also test with varying amounts of host memory allocated to the SSD, but that would be a fairly time-consuming exercise and would not reflect any real-world use cases, because the driver settings in question are so obscure that they are not worth changing from their defaults.
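The NVMe specification has the controller report a preferred buffer size (HMPRE) and a minimum size (HMMIN), both in 4 KiB units, in its Identify Controller data; the host then decides how much memory to allocate. The Python sketch below models the RC100's behavior under our assumptions that its 38MB request corresponds to HMPRE, its 10MB floor to HMMIN, and that the host applies a simple size cap (`host_cap_bytes`, a hypothetical stand-in for a driver setting). It is not the actual logic of the Linux or Windows drivers:

```python
# Simplified HMB sizing model. HMPRE/HMMIN are NVMe Identify Controller fields
# reported in 4 KiB units; host_cap_bytes is a hypothetical host-side limit.

UNIT = 4096
MiB = 1024**2


def hmb_grant(hmpre_units: int, hmmin_units: int, host_cap_bytes: int) -> int:
    """Bytes of host DRAM given to the SSD, or 0 if HMB ends up unused."""
    preferred = hmpre_units * UNIT
    minimum = hmmin_units * UNIT
    granted = min(preferred, host_cap_bytes)
    if granted < minimum:
        return 0  # below the drive's floor: the drive runs without HMB
    return granted


if __name__ == "__main__":
    # Assumed RC100-like values: 38 MiB preferred, 10 MiB minimum.
    hmpre, hmmin = 38 * MiB // UNIT, 10 * MiB // UNIT
    for cap_mib in (128, 16, 8, 0):
        print(cap_mib, hmb_grant(hmpre, hmmin, cap_mib * MiB) // MiB)
```

A cap of zero corresponds to the HMB-off configuration used in the testing.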

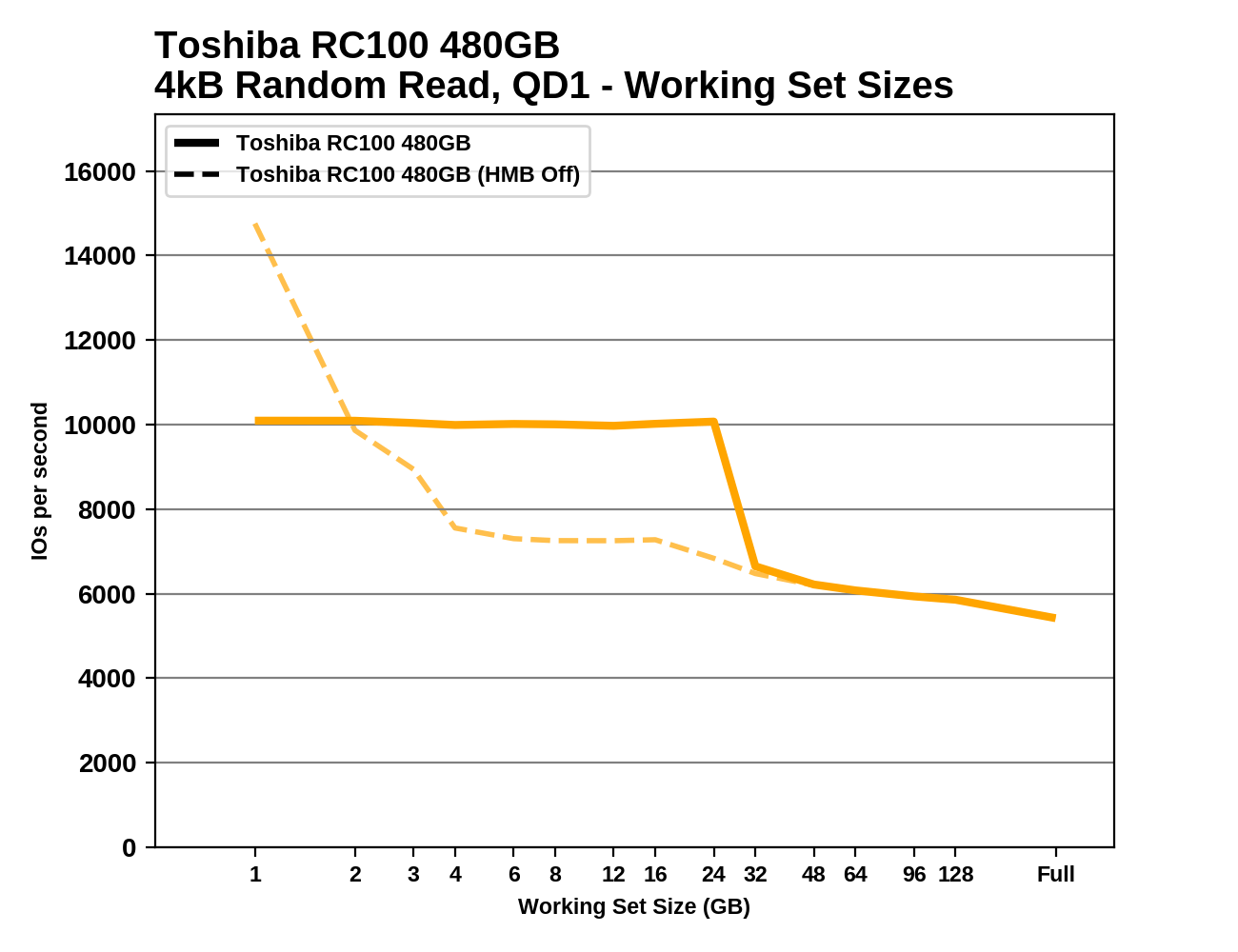

Working Set Size

We can see the effects of the HMB cache quite clearly by measuring random read performance while increasing the test's working set—the amount of data that's actively being accessed. When all of the random reads are coming from the same 1GB range, the RC100 performs much better than when the random reads span the entire drive. There's a sharp drop in performance when the working set approaches 32GB. When the RC100 is tested with HMB off, performance is just as good for a 1GB working set (and actually substantially better on the 480GB model), but larger working sets are almost as slow as the full-span random reads. It looks like the RC100's controller may have about 1MB of built-in memory that is much faster than accessing host DRAM over the PCIe link.
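A back-of-the-envelope model makes the shape of these results easier to read. Assuming 1 MiB of mapping data per GiB of flash (our assumption, from a 4-byte-per-4-KiB layout) and uniform random reads, the fraction of reads whose mapping is cached is roughly the covered capacity divided by the working set. With a 38MB buffer this predicts a falloff in the tens of gigabytes, and with roughly 1MB of on-chip memory it predicts good performance only around 1GB, which is broadly consistent with the measurements. The model is idealized, so it will not match the measured cliff exactly, and the latency arguments are placeholders:

```python
# Idealized model of the working-set test: uniform random reads over a working
# set, with a mapping cache that covers a fixed amount of flash.
# Assumption: 1 MiB of mapping entries covers 1 GiB of flash.

MiB = 1024**2
GiB = 1024**3


def hit_rate(working_set_bytes: int, cache_bytes: int) -> float:
    """Fraction of random reads whose mapping is already cached."""
    covered = cache_bytes * (GiB // MiB)  # 1 MiB of entries -> 1 GiB of flash
    return min(1.0, covered / working_set_bytes)


def mean_latency(working_set_bytes: int, cache_bytes: int,
                 t_hit: float, t_miss: float) -> float:
    """Average read latency; t_hit and t_miss are placeholder timings."""
    h = hit_rate(working_set_bytes, cache_bytes)
    return h * t_hit + (1.0 - h) * t_miss


if __name__ == "__main__":
    for ws_gib in (1, 8, 16, 32, 64, 128):
        with_hmb = hit_rate(ws_gib * GiB, 38 * MiB)
        on_chip = hit_rate(ws_gib * GiB, 1 * MiB)
        print(f"{ws_gib:>4} GiB: HMB hit rate {with_hmb:.2f}, on-chip only {on_chip:.2f}")
```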


Most mainstream SSDs offer nearly the same random read performance regardless of the working set size, though performance through this test varies somewhat due to other factors (e.g. thermal throttling). The drives using the Phison E7 and E8 NVMe controllers are a notable exception, with significant performance falloff as the working set grows, despite these drives being equipped with ample onboard DRAM.

62 Comments

Mikewind Dale - Thursday, June 14, 2018

Interesting review. Thanks. I'm hoping that smaller, 11" and 13" laptops will start offering M.2 2242 instead of eMMC. I've been wary of purchasing a smaller laptop because I'm afraid that if the NAND ever reaches its lifespan, the laptop will be dead, with no way to replace the storage. An M.2 2242 would solve that problem.

PeachNCream - Thursday, June 14, 2018

Boot options in the BIOS may allow you to boot from USB or SD if the soldered-on eMMC drive in a modern system fails. In that case, it's still possible to boot an OS from external media and keep using the computer. I'd go for some sort of lightweight Linux OS for performance reasons, but even a full distro works okay on USB 3.0 and up. SD is a slower option, but you may not want your OS drive to protrude from the side of the computer. Admittedly, that's a cumbersome way to keep a low-budget PC alive when replacement costs aren't usually that high.

peevee - Thursday, June 14, 2018

"but this is only on platforms with properly working PCIe power management, which doesn't include most desktops"

Billy, could you please elaborate on this?

artifex - Thursday, June 14, 2018

Yeah, I'd also like to hear more about this.

Billy Tallis - Thursday, June 14, 2018

I've never encountered a desktop motherboard that had PCIe ASPM on by default, so at most it's a feature for power users and OEMs that actually care about power management. I've seen numerous motherboards that didn't even have the option of enabling PCIe ASPM, but the trend from more recent products seems to be toward exposing the necessary controls. Among boards that do let you fully enable ASPM, it's still possible for it to expose bugs in peripherals that break things; sometimes the peripheral in question is an SSD. The only way I'm able to get low-power idle measurements out of PCIe SSDs on the current testbed is to tell Linux to ignore what the motherboard firmware says and force PCIe ASPM on, but this doesn't work for everything. Without some pretty sensitive power measurement equipment, it's almost impossible for an ordinary desktop user to know if their PCIe SSD is actually achieving the <10mW idle power that most drives advertise.

peevee - Thursday, June 14, 2018

So by "properly working" you mean "on by default in BIOS"? Or are there actual implementation bugs in some Intel or AMD CPUs or chipsets?

Billy Tallis - Thursday, June 14, 2018

Implementation bugs seem to be primarily a problem with peripheral devices (including peripherals integrated on the motherboard), which is why motherboard manufacturers are often justified in having ASPM off by default or entirely unavailable.

AdditionalPylons - Thursday, June 14, 2018

That's very interesting. And thanks Billy for a nice review! I too appreciate you doing something different. There will unfortunately always be someone angry on the Internet.

Kwarkon - Friday, June 15, 2018

L1.2 is a special PCIe link state that requires the hardware CLKREQ# signal. When L1.2 is active, all communication on the PCIe link is down, so neither the host nor the NVMe device has to listen for data. Desktops don't have this signal (it is grounded), so even if you tell the SSD (via NVMe admin commands) that L1.2 support is enabled, it will still not be able to negotiate it.

In most cases, M.2 NVMe SSDs require a certain PCIe link state to reach the lowest power for a given power state.

The PSx entries are just states: if all conditions are met, then the SSD will get its power down to somewhere around the stated value.

You can always check the tech specs of the NVMe drive. If low power is in fact supported, then the lowest power will be stated as "deep sleep L1.2" or similar.

Death666Angel - Saturday, June 16, 2018

Prices in Germany do not line up one bit with the last chart. :D The HP EX920 1TB is 335€ and the ADATA SX8200 960GB is 290€. The SBX just has a weird amazon.de reseller who sells the 512GB version for 200€. The 970 Evo 1TB is 330€ and the Intel 760p 1TB is 352€. And for completeness, the WD Black 1TB is 365€. Even when accounting for exchange rates and VAT, the relative prices are nowhere near the US ones. :)