Assessing Cavium's ThunderX2: The Arm Server Dream Realized At Last

by Johan De Gelas on May 23, 2018 9:00 AM EST

Memory Subsystem: Bandwidth

Measuring the full bandwidth potential of a system with John McCalpin's Stream bandwidth benchmark is getting increasingly difficult on the latest CPUs, as core and memory channel counts have continued to grow. As you can see from the results below, it is not easy to measure bandwidth. The results vary wildly depending on the settings you choose.

Memory: STREAM Bandwidth

| System and binary | Compiler & OS settings | Result |
|---|---|---|
| Cavium ThunderX2 GCC 7.2 binary | -O2 -mcmodel=large -fopenmp -DVERBOSE -fno-PIC OMP_PROC_BIND=spread | 241 GB/s |
| Cavium ThunderX2 GCC 7.2 binary | -Ofast -fopenmp -static OMP_PROC_BIND=spread | 157 GB/s |
| Cavium ThunderX2 GCC 7.2 binary | OMP_PROC_BIND not configured | 118 GB/s |
| Intel ICC binary | -fast -qopenmp -parallel KMP_AFFINITY=verbose,scatter | 183 GB/s |
| Intel GCC binary | -Ofast -fopenmp -static OMP_PROC_BIND=spread | 151 GB/s |
| Intel GCC binary | -Ofast -fopenmp -static OMP_PROC_BIND not configured | 150 GB/s |
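For readers unfamiliar with how STREAM arrives at a GB/s figure, the sketch below reproduces its standard byte accounting for 8-byte elements. The function name, the array size, and the timing in the example are hypothetical and are not taken from the runs above; only the bytes-per-iteration values follow STREAM's own convention.

```python
# Bytes counted per loop iteration by STREAM for 8-byte (double) elements.
# Each kernel counts one write plus one or two reads; extra write-allocate
# traffic is not part of STREAM's accounting.
STREAM_BYTES_PER_ITER = {
    "copy": 16,   # a[i] = b[i]
    "scale": 16,  # a[i] = q * b[i]
    "add": 24,    # a[i] = b[i] + c[i]
    "triad": 24,  # a[i] = b[i] + q * c[i]
}


def stream_bandwidth_gbs(kernel: str, n_elements: int, seconds: float) -> float:
    """Return the bandwidth STREAM would report, in GB/s (10^9 bytes/s)."""
    if seconds <= 0:
        raise ValueError("seconds must be positive")
    total_bytes = STREAM_BYTES_PER_ITER[kernel] * n_elements
    return total_bytes / seconds / 1e9


if __name__ == "__main__":
    # Hypothetical run: 10^9 elements per array, one triad pass in 0.1 s.
    print(f"{stream_bandwidth_gbs('triad', 1_000_000_000, 0.1):.1f} GB/s")
```

Because the accounting ignores any additional traffic the hardware generates, the reported figure is a lower bound on what actually crosses the memory bus.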

Theoretically, the ThunderX2 has 33% more bandwidth available than an Intel Xeon, as the SoC has 8 memory channels compared to Intel's six channels. These high bandwidth numbers can only be achieved in very specific conditions and require quite a bit of tuning to avoid reaching out to remote memory. In particular, we have to ensure that threads don't migrate from one socket to the other.
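The 33% figure follows directly from the channel counts, and the same arithmetic gives a rough theoretical ceiling. The sketch below assumes 64-bit (8-byte) DDR4 channels, the nominal DDR4-2666 transfer rate, and a dual-socket system (inferred from the per-socket figure quoted later); the function name is hypothetical.

```python
def peak_bandwidth_gbs(channels: int, mega_transfers_per_s: int,
                       sockets: int = 1, bytes_per_transfer: int = 8) -> float:
    """Theoretical peak DRAM bandwidth in GB/s (10^9 bytes/s).

    Assumes every channel moves `bytes_per_transfer` bytes on each transfer.
    """
    bytes_per_s = channels * mega_transfers_per_s * 1e6 * bytes_per_transfer
    return bytes_per_s * sockets / 1e9


if __name__ == "__main__":
    # Channel counts come from the article; DDR4-2666 and 2 sockets are assumptions.
    tx2 = peak_bandwidth_gbs(channels=8, mega_transfers_per_s=2666, sockets=2)
    xeon = peak_bandwidth_gbs(channels=6, mega_transfers_per_s=2666, sockets=2)
    print(f"ThunderX2 peak: {tx2:.1f} GB/s")
    print(f"Xeon peak:      {xeon:.1f} GB/s")
    print(f"Advantage:      {100 * (tx2 / xeon - 1):.0f}%")
    # Best STREAM results from the table, as a fraction of the theoretical peak.
    print(f"ThunderX2 efficiency: {100 * 241 / tx2:.0f}%")
    print(f"Xeon (ICC) efficiency: {100 * 183 / xeon:.0f}%")
```

Under these assumptions both platforms reach a similar fraction of their theoretical peak in the best case, which suggests the ThunderX2's measured lead comes mostly from its two extra channels.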

We first tried to achieve the best results on both architectures. In the case of Intel, the ICC compiler always produced better results, with some low-level optimizations inside the stream loops. In the case of Cavium, we followed Cavium's instructions. So strictly speaking these are not comparable, but it should give you an idea of what kind of bandwidth these CPUs can achieve at their respective peaks. To be fair to Intel, with ideal settings (AVX-512) you should be able to achieve 200 GB/s.

Nevertheless, it is clear that the ThunderX2 system can deliver between 15% and 28% more bandwidth to its CPU cores. This works out to 235 GB/sec, or about 120 GB/sec per socket, which in turn is about 3 times more than what the original ThunderX was capable of.

Memory Subsystem: Latency

While bandwidth measurements are only relevant to a small part of the server market, almost every application is heavily impacted by the latency of the memory subsystem. To that end, we used LMBench to measure cache and memory latency. The numbers we looked at were "Random load latency stride=16 Bytes". Note that we're expressing the L3 cache and DRAM latency in nanoseconds since we don't have accurate L3-cache clockspeed values.

Memory: LMBench Latency

| Mem Hierarchy | Cavium ThunderX DDR4-2133 | Cavium ThunderX2 DDR4-2666 | Intel Skylake 8176 DDR4-2666 |
|---|---|---|---|
| L1-cache (cycles) | 3 | 4 | 4 |
| L2-cache (cycles) | 40/80 (*) | 8-9 | 12 |
| L3-cache 4-8 MB (ns) | N/A | 27-30 ns | 24-29 ns |
| Memory 384-512 MB (ns) | 103/206 (*) | 156-157 ns | 89-91 ns |

The L2-cache of the ThunderX2 is accessed with very little latency, and with a single thread running, the L3-cache is competitive with Intel's complex L3 cache. Once we hit the DRAM however, Intel offers significantly lower latency.
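Random load latency tests of this kind are usually built on pointer chasing: every load's address depends on the result of the previous load, so the hardware cannot overlap them. The sketch below illustrates only the access pattern and is not LMBench's code. In Python the interpreter overhead dominates the timings, so the absolute numbers say little about the hardware; real tools do this in C. All names here are hypothetical.

```python
import random
import time


def build_random_cycle(n: int, seed: int = 0) -> list[int]:
    """Return a permutation of range(n) forming one cycle (Sattolo's algorithm)."""
    rng = random.Random(seed)
    nxt = list(range(n))
    for i in range(n - 1, 0, -1):
        j = rng.randrange(i)  # j < i guarantees a single cycle through all slots
        nxt[i], nxt[j] = nxt[j], nxt[i]
    return nxt


def chase(nxt: list[int], steps: int) -> tuple[int, float]:
    """Follow the chain for `steps` dependent loads; return (final index, ns per step)."""
    idx = 0
    start = time.perf_counter()
    for _ in range(steps):
        idx = nxt[idx]  # each load depends on the previous one
    elapsed = time.perf_counter() - start
    return idx, elapsed / steps * 1e9


if __name__ == "__main__":
    # Larger buffers spill out of successive cache levels.
    for n in (1 << 10, 1 << 14, 1 << 18):
        _, ns = chase(build_random_cycle(n), steps=200_000)
        print(f"{n:>8} entries: {ns:.1f} ns per dependent access")
```

The single-cycle permutation matters: a plain random shuffle can contain short cycles, which would keep the walk inside a small, cache-resident subset of the buffer.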

Memory Subsystem: TinyMemBench

To get a deeper understanding of the respective architectures, we also ran the open source TinyMemBench benchmark. The source code was compiled with GCC 7.2 and the optimization level was set to "-O3". The benchmark's testing strategy is described rather well in its manual:

Average time is measured for random memory accesses in the buffers of different sizes. The larger the buffer, the more significant the relative contributions of TLB, L1/L2 cache misses, and DRAM accesses become. All the numbers represent extra time, which needs to be added to L1 cache latency (4 cycles).

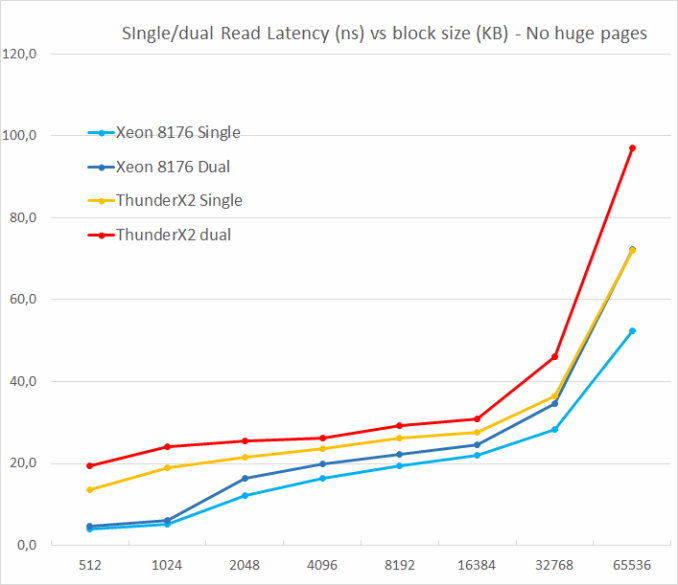

We tested with single and dual random read (no huge pages), as we wanted to see how the memory system coped with multiple read requests.

One of the major weaknesses of the original ThunderX was that it did not support multiple outstanding misses. Memory level parallelism is an important feature for any high-performance modern CPU core: it keeps cache misses from starving the wide back end. A non-blocking cache is thus a key feature for wide cores.

The ThunderX2 does not suffer from that problem at all, thanks to its non-blocking cache. Just as with the Skylake core in the Xeon 8176, a second read causes the overall latency to increase by only 15-30%, and not 100%. According to TinyMemBench, the Skylake core has tangibly better latencies. The datapoint at 512 KB is of course easy to explain: the Skylake core is still fetching from its fast L2, while the ThunderX2 core has to access its L3. But the numbers at 1 and 2 MB indicate that Intel's prefetchers offer a serious advantage, as the latency is an average of the L2 and L3-cache latencies. Around 8 to 16 MB, the latency numbers are close, but once we go beyond the L3 (64 MB), Intel's Skylake offers lower memory latencies.
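To put the 15-30% figure in perspective, the sketch below converts the cost of a second outstanding read into an effective time per access. The 100 ns single-read latency is a hypothetical round number, not a measured value, and the function names are illustrative.

```python
def effective_access_time(single_ns: float, dual_increase: float) -> float:
    """Average time per access when two independent reads are issued together.

    `dual_increase` is the fractional rise in total latency caused by the
    second read (0.15 for +15%, 1.0 for a blocking cache that serializes them).
    """
    return single_ns * (1.0 + dual_increase) / 2.0


def overlap_speedup(dual_increase: float) -> float:
    """Throughput gain from overlapping two reads versus serializing them."""
    return 2.0 / (1.0 + dual_increase)


if __name__ == "__main__":
    single_ns = 100.0  # hypothetical single-read latency
    for label, inc in (("+15%", 0.15), ("+30%", 0.30), ("+100% (blocking)", 1.0)):
        per_access = effective_access_time(single_ns, inc)
        print(f"{label:>17}: {per_access:.1f} ns per access, "
              f"{overlap_speedup(inc):.2f}x vs serialized")
```

With a 15-30% increase, two overlapped reads complete roughly 1.5 to 1.7 times faster than they would on a blocking cache, where the second read doubles the total time.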

97 Comments

Wilco1 - Wednesday, May 23, 2018 - link

You might want to study RISC and CISC first before making any claims. RISC doesn't use more instructions than CISC. Vector instructions are actually quite similar on most ISAs. In fact I would say the Neon ones are more powerful and more general due to being well designed rather than added ad-hoc.

HStewart - Wednesday, May 23, 2018 - link

The following site explains the difference using a simple multiply operation: what a CISC architecture can do in a single instruction, RISC would need multiple instructions to do. http://www.firmcodes.com/difference-risc-sics-arch...

Of course, as time moved on, RISC chips added more complex operations, and CISC also found ways of breaking more complex CISC instructions into smaller RISC-like microcode, increasing the chip's ability to multitask the pipeline.

Wilco1 - Thursday, May 24, 2018 - link

The example was about load/store architecture, not multiply. In reality almost all instructions use registers (even on CISCs) since memory is too slow, so it's not a good example of what happens in actual code. The number of executed instructions on large applications is actually very close. The key reason is that compilers avoid all the complex instructions on x86 and mostly use register operations, not memory.

Kevin G - Tuesday, May 29, 2018 - link

Raw instruction counts aren't a good metric to determine the difference between RISC and CISC, especially as both have evolved to include various SIMD and transactional extensions.

The big thing for RISC is that it only supports a handful of instruction formats, generally all of the same length (traditionally 4 bytes)* and with alignment rules in place. x86 on the other hand leverages a series of prefixes to enhance instructions, which permits lengths of up to 15 bytes. On the flip side, there are also x86 instructions that consume a single byte. This also means x86 doesn't have the alignment rules that RISC chips generally adhere to.

*ARM does offer some compressed instruction formats in Thumb/Thumb2 but those are also of a fixed length. 16 bit Thumb instructions are half the size of 32 bit ARM instructions and have alignment rules as well.

Modern x86 is radically different internally than its philosophical lineage. x86 instructions are broken down into micro-ops which are RISC-like in nature. These decoded instructions are now being cached to bypass the complex and power hungry decode stages. Compare this to some ARM cores where some instructions do not have to be decoded. While having a simpler decode doesn't directly help with performance, it does impact power consumption.

However, I would differ and say that ARM's FPU and vector history has been rather troubled. Initially ARM didn't specify a FPU but rather a method to add coprocessors. This led to 3rd parties producing ARM cores with incompatible FPUs. It wasn't until recently that ARM themselves put their foot down and mandated NEON as the one to rule them all, especially in 64 bit mode.

peevee - Wednesday, May 23, 2018 - link

The whole RISC vs CISC distinction has been outdated for at least 20 years. Both now include a shi(p)load of instructions far outnumbering original CISC processors like 68000 and 8088 (from the epoch of the whole CISC vs RISC discussion), and both have a lot of architectural registers (which on speculative OoO CPUs are not even the same as real register files). ARMv8 for example includes NEON instructions, which is like... "AVX-128" (or SSE3 or smth).

A lot of instructions means that both have to have huge decoders, which limits how small the CPU can be (because any reduction in other hardware would decrease performance faster than cost). For 64-bit ARMv8.2 it is very unlikely that an implementation can be made smaller than A55, and it is a huge core (in transistors) compared to even Pentium, let alone 8088.

HStewart - Wednesday, May 23, 2018 - link

I think the big difference is between SIMD technologies - even though ARM has included them, they are not as wide as the instructions from Intel or AMD. The following link appears to have a good comparison of chip SIMD sizes. To me it looks like AMD is on AVX level 8/16 instead of 16/32 in current chips, while ARM including Neon is 4 wide, which is actually less than Core 2 SSE instructions from 10 years ago. https://stackoverflow.com/questions/15655835/flops...

It is also interesting to note the Ryzen stats - I heard that AMD implements AVX 256 by combining two 128s together.

One thing is that both Intel and AMD CPUs have come a long way since 20 years ago. In fact even today's Atoms can outrun most Core 2 CPUs from 10 years ago - not my Xeon 5160 however.

ZolaIII - Thursday, May 24, 2018 - link

It's 2x128 NEON SIMD per ARM A75 core which goes into your smartphone.

Even with smaller SIMD utilising TBL, QC Centriq is able to beat up a Xeon Gold.

https://blog.cloudflare.com/neon-is-the-new-black/

Wilco1 - Thursday, May 24, 2018 - link

Modern Arm cores have 2-3 128-bit SIMD units, so 16-24 SP FLOPS/cycle. About half of Skylake theoretical flops, and yet they can match or beat Skylake on many HPC codes. Size is not everything...

peevee - Thursday, May 24, 2018 - link

"ARM including Neon is 4 Wide which is actually less than Core 2 SSE instructions from 10 years ago"

How is it less? It is the same 128 bits, 2x64 or 4x32 or 8x16...

And AMD combines 2 AVX-256 operations (not 2 128-bit SSEs) to get AVX-512.

patrickjp93 - Friday, May 25, 2018 - link

AMD does NOT have AVX-512. They combine 2 128s into a 256 on Ryzen, ThreadRipper, and Epyc.