The AMD 2nd Gen Ryzen Deep Dive: The 2700X, 2700, 2600X, and 2600 Tested

by Ian Cutress on April 19, 2018 9:00 AM EST

Civilization 6

First up in our CPU gaming tests is Civilization 6. Originally penned by Sid Meier and his team, the Civ series of turn-based strategy games is a cult classic, and many an excuse for an all-nighter trying to get Gandhi to declare war on you due to an integer overflow. Truth be told I never actually played the first version, but I have played every edition from the second to the sixth, including the fourth as voiced by the late Leonard Nimoy, and it is a game that is easy to pick up, but hard to master.

Benchmarking Civilization has always been somewhat of an oxymoron – for a turn-based strategy game, the frame rate is not necessarily the important thing, and in the right mood, something as low as 5 frames per second can be enough. With Civilization 6 however, Firaxis went hardcore on visual fidelity, trying to pull you into the game. As a result, Civilization can be taxing on graphics and CPUs as we crank up the details, especially in DirectX 12.

Perhaps a more pertinent benchmark would be during the late game, when in the older versions of Civilization it could take 20 minutes to cycle around the AI players before the human regained control. The new version of Civilization has an integrated ‘AI Benchmark’, although it is not yet part of our benchmark portfolio due to technical reasons which we are trying to solve. Instead, we run the graphics test, which provides an example of a mid-game setup at our settings.

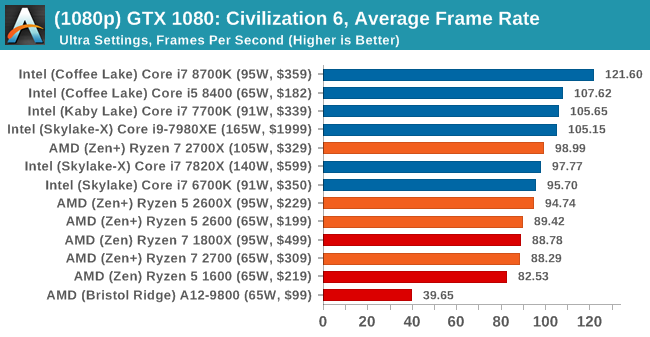

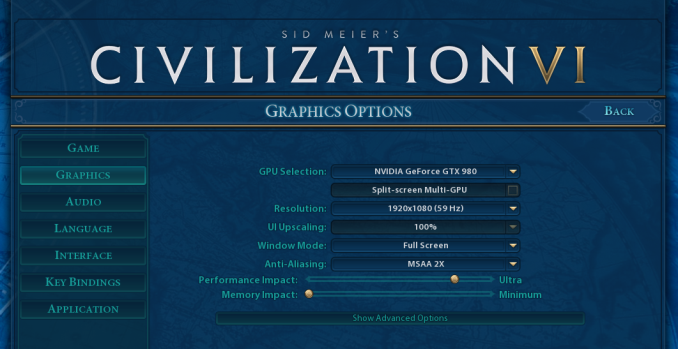

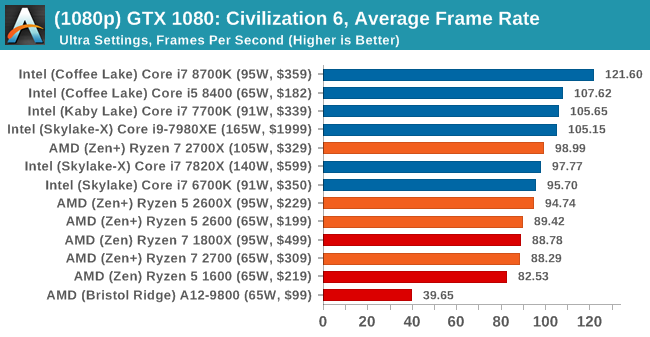

At both 1920x1080 and 4K resolutions, we run the same settings. Civilization 6 has sliders for MSAA, Performance Impact and Memory Impact. The latter two refer to detail and texture size respectively, and are rated from 0 (lowest) to 5 (extreme). We run our Civ6 benchmark in position four for performance (ultra) and 0 on memory, with MSAA set to 2x.

For reviews where we include 8K and 16K benchmarks on our GTX 1080 (Civ6 allows us to benchmark extreme resolutions on any monitor), we run the 8K tests with the same settings as the 4K tests, but the 16K tests are set to the lowest option for Performance.

All of our benchmark results can also be found in our benchmark engine, Bench.

MSI GTX 1080 Gaming 8G Performance (charts at 1080p, 4K, 8K, and 16K)

545 Comments

jor5 - Thursday, April 26, 2018 - link

Pull this shambles and repost when you've corrected it fully.

mapesdhs - Monday, May 14, 2018 - link

Not an argument. It is just as interesting to learn about how and why this issue occurred, to understand the nature of benchmarking. Life isn't just about being spoonfed end nuggets of things, the process itself is relevant. Or would you rather we don't learn from history?

peevee - Thursday, April 26, 2018 - link

When 65W i7 8700 is 15% faster in Octane 2.0 than 105W Ryzen 7 2700X, it is just sad.

Of course, the horrible x64 practically demands that compilers optimize for a very specific CPU implementation (choosing and sorting instructions in the code accordingly), but AMD could have at least realized the fact and optimized their own implementation for the same Intel-optimized code generators...

GreenReaper - Thursday, April 26, 2018 - link

Intel compilers and libraries tend not to use the ideal instructions unless they detect a GenuineIntel signature via CPUID - they'll likely use the default lowest-common-denominator pathway instead.

TDP is more of a guideline - it doesn't determine actual power usage (we've seen Coffee Lake use way more than the TDP), let alone the power used in a particular operation. Having said that, I wouldn't be surprised if Intel were more efficient in this particular test. But it'd be interesting to know how much impact Meltdown patches have in that area; they might well increase the amount of time the CPU spends idle (but not idle enough to go into a sleep mode) as it waits to fetch instructions.

SaturnusDK - Thursday, April 26, 2018 - link

Compare power consumption to Blender score. Ryzen is about 9% more power efficient.

TDP is literally Thermal Design Power. It has nothing to do with power consumption.

peevee - Thursday, April 26, 2018 - link

"TDP is literally Thermal Design Power. It has nothing to do with power consumption."Unless you have invented a way to overcome energy conservation law, power consumed = power dissipated.

SaturnusDK - Friday, April 27, 2018 - link

It's a guideline for cooling solutions. Look at the power consumption numbers in this test for example.

Ryzen 2700X power consumption under full load 110W.

Intel i7 8700K power consumption under full load 120W.

Both are at stock speeds, with the Ryzen having 8 cores versus 6 cores and the 2700X scoring 24% higher in Cinebench. Ryzen is rated at 105W TDP, so actual power consumption at stock speed is pretty close. The 8700K uses 120W, so it's pretty far from the 95W TDP it is rated at.
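As a rough check of the figures quoted in this comment, the arithmetic can be sketched as below. The wattages, TDP ratings, and the 24% Cinebench lead are taken from the comment as stated; the function names and the dictionary layout are illustrative assumptions, and this is not a measurement method used by the article.

```python
# Figures as quoted in the comment: full-load power draw and rated TDP, in watts.
chips = {
    "Ryzen 2700X": {"load_w": 110, "tdp_w": 105},
    "Intel i7 8700K": {"load_w": 120, "tdp_w": 95},
}


def tdp_overshoot(load_w, tdp_w):
    """Fraction by which measured full-load power exceeds the rated TDP."""
    return load_w / tdp_w - 1.0


def relative_perf_per_watt(perf_ratio, load_a_w, load_b_w):
    """Performance per watt of chip A relative to chip B.

    perf_ratio is A's score divided by B's score (1.24 for a 24% lead).
    """
    return perf_ratio * load_b_w / load_a_w


if __name__ == "__main__":
    for name, c in chips.items():
        pct = 100 * tdp_overshoot(c["load_w"], c["tdp_w"])
        print(f"{name}: {pct:.1f}% over rated TDP")

    ratio = relative_perf_per_watt(
        1.24,
        chips["Ryzen 2700X"]["load_w"],
        chips["Intel i7 8700K"]["load_w"],
    )
    print(f"2700X Cinebench score per watt vs 8700K: {ratio:.2f}x")
```

On these quoted numbers, the 2700X draws roughly 5% over its rated TDP and the 8700K roughly 26% over, while the 2700X delivers about 1.35 times the Cinebench score per watt.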

ijdat - Saturday, April 28, 2018 - link

The 8700 also uses 120W so it's even further from the 65W TDP it's rated at. In comparison Ryzen 2700 uses 45W when it has the same rated 65W TDP. I know which one I'd prefer to put into a quiet low-power system...

mapesdhs - Monday, May 14, 2018 - link

Perhaps this is AMD's biggest win this time round: potent HTPC setups.

peevee - Thursday, April 26, 2018 - link

"Intel compilers "What Intel compilers have to do with it?