The AMD 2nd Gen Ryzen Deep Dive: The 2700X, 2700, 2600X, and 2600 Tested

by Ian Cutress on April 19, 2018 9:00 AM EST

Civilization 6

First up in our CPU gaming tests is Civilization 6. Originally penned by Sid Meier and his team, the Civ series of turn-based strategy games is a cult classic, and the excuse for many an all-nighter spent trying to get Gandhi to declare war on you due to an integer overflow. Truth be told, I never actually played the first version, but I have played every edition from the second to the sixth, including the fourth as voiced by the late Leonard Nimoy. It is a game that is easy to pick up but hard to master.

Benchmarking Civilization has always been somewhat of an oxymoron: for a turn-based strategy game, the frame rate is not necessarily the important thing, and in the right mood, something as low as 5 frames per second can be enough. With Civilization 6, however, Firaxis went hardcore on visual fidelity, trying to pull you into the game. As a result, Civilization can be taxing on graphics cards and CPUs as we crank up the details, especially in DirectX 12.

Perhaps a more pertinent benchmark would be the late game, when in older versions of Civilization it could take 20 minutes to cycle through the AI players before the human regained control. The new version of Civilization has an integrated ‘AI Benchmark’, although it is not yet part of our benchmark portfolio due to technical issues we are trying to solve. Instead, we run the graphics test, which provides an example of a mid-game setup at our settings.

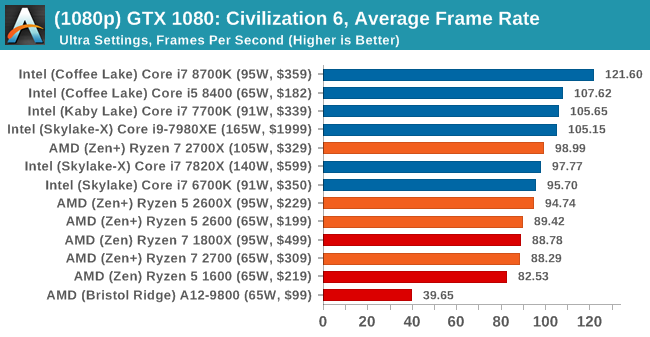

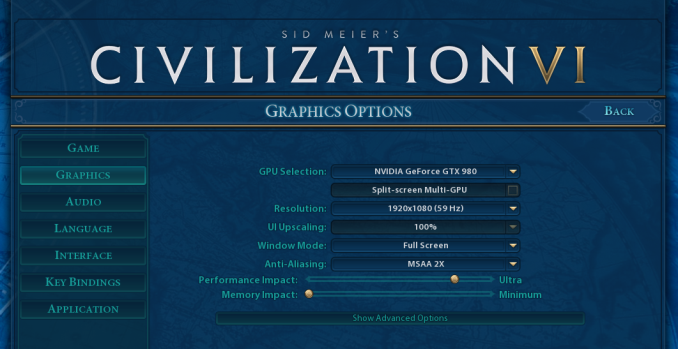

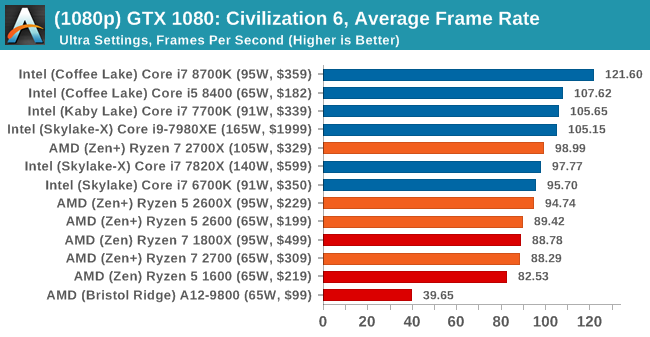

We run the same settings at both 1920x1080 and 4K. Civilization 6 has sliders for MSAA, Performance Impact and Memory Impact. The latter two refer to detail and texture size respectively, and are rated from 0 (lowest) to 5 (extreme). We run our Civ6 benchmark at position four for Performance (ultra) and 0 for Memory, with MSAA set to 2x.

For reviews where we include 8K and 16K benchmarks on our GTX 1080 (Civ6 allows us to benchmark extreme resolutions on any monitor), we run the 8K tests with the same settings as the 4K tests, but set the 16K tests to the lowest option for Performance.
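The per-resolution settings described above can be written down as data. The following is a minimal Python sketch, not our actual test harness: the class name `Civ6Settings` and its field names are hypothetical, and it assumes the 16K run keeps Memory Impact at 0 and MSAA at 2x, since the text only says that Performance drops to its lowest option.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Civ6Settings:
    """Hypothetical record of the Civilization 6 benchmark sliders."""

    performance_impact: int  # 0 (lowest) to 5 (extreme); 4 corresponds to ultra
    memory_impact: int       # 0 (lowest) to 5 (extreme)
    msaa: int                # MSAA sample count, e.g. 2 for 2x

    def __post_init__(self) -> None:
        # Reject slider positions outside the 0-5 range described in the text.
        for name in ("performance_impact", "memory_impact"):
            value = getattr(self, name)
            if not 0 <= value <= 5:
                raise ValueError(f"{name} must be between 0 and 5, got {value}")


# Settings used at 1080p and 4K: Performance 4 (ultra), Memory 0, MSAA 2x.
STANDARD = Civ6Settings(performance_impact=4, memory_impact=0, msaa=2)

SETTINGS_BY_RESOLUTION = {
    "1080p": STANDARD,
    "4K": STANDARD,
    "8K": STANDARD,  # 8K runs with the same settings as the 4K test
    # Assumption: only Performance changes at 16K; Memory and MSAA are kept.
    "16K": replace(STANDARD, performance_impact=0),
}

for resolution, settings in SETTINGS_BY_RESOLUTION.items():
    print(resolution, settings)
```

Using `dataclasses.replace` for the 16K entry makes the single difference from the standard settings explicit, and because `replace` goes through the constructor, the range check still applies.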

All of our benchmark results can also be found in our benchmark engine, Bench.

MSI GTX 1080 Gaming 8G Performance (1080p, 4K, 8K and 16K)

545 Comments

FaultierSid - Wednesday, April 25, 2018

The question is whether testing a CPU in 4K gaming makes much sense. At 4K the bottleneck is the GPU, not the CPU, especially since they tested with a 1080 and not a 1080 Ti. It is not a coincidence that the CPUs all show roughly the same fps in the 4K tests. Civilization seems to be easier on the GPU and shows the 8700K in the lead, but all the other games show almost the same fps for all 4 tested CPUs. That's because the fps is limited by the GPU in those cases, not by the CPU.

You might want to bring up the point that if you are gaming in 4K at the highest settings, it doesn't make sense for you to look at 1080p benchmarks. Right now this might make sense, but not in a couple of years when you upgrade your GPU to a faster model and the games are no longer GPU-bottlenecked. Then, where you now see 60 fps, you might see 100 fps with an 8700K and only 80 fps with the Ryzen 2600X.

Basically, testing CPUs in gaming at a resolution that stresses the GPU so much that the performance of the CPU becomes almost irrelevant is not the right way to judge the gaming performance of a CPU.

If your point is that you will also buy a new CPU when you buy a new GPU, then this might not affect you, and you might decide to pick the 2700X over an 8700K because of all its advantages in other areas.

But in general, we have to admit, the crown of "best gaming CPU" is (sadly) still in Intel's corner.
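The bottleneck argument above can be illustrated with a deliberately simplified model in which the delivered frame rate is capped by whichever of the CPU or GPU is slower. This is a sketch under that assumption, not a measurement: the function names are hypothetical, the GPU ceilings are made up for illustration, and the 100 and 80 fps CPU ceilings are the comment's own example figures.

```python
def effective_fps(cpu_limit_fps: float, gpu_limit_fps: float) -> float:
    """Simplified model: the slower of the CPU and GPU sets the frame rate."""
    return min(cpu_limit_fps, gpu_limit_fps)


# Hypothetical CPU-bound ceilings, using the example numbers from the comment.
CPU_LIMITS = {"8700K": 100.0, "2600X": 80.0}


def compare(gpu_limit_fps: float) -> dict[str, float]:
    """Frame rate each CPU would deliver when paired with a GPU of the given ceiling."""
    return {cpu: effective_fps(limit, gpu_limit_fps) for cpu, limit in CPU_LIMITS.items()}


today = compare(gpu_limit_fps=60.0)    # GPU-bound, e.g. 4K on today's card
future = compare(gpu_limit_fps=150.0)  # hypothetical faster GPU a few years out

print(today)   # {'8700K': 60.0, '2600X': 60.0}
print(future)  # {'8700K': 100.0, '2600X': 80.0}
```

Under this model a GPU-bound test hides the gap between the two CPUs entirely. Real games add frame-time variance and partial CPU/GPU overlap that a single `min()` ignores, so treat it as an illustration of the argument rather than a prediction.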

mapesdhs - Monday, May 14, 2018

If all you're doing is gaming at 4K then yes, in most titles the bottleneck will be the GPU, but this is not always the case. These days live streaming on Twitch is becoming popular, and for that it really does help to have more cores; the load is pushed back onto the CPU, even when the player sees smooth updates (the viewer-side experience can be bad instead). GN has done some good tests on this. Plus, some games are more reliant on CPU power for various reasons, especially the use of outdated threading mechanisms. And in time, newer games will take better advantage of more cores, especially due to compatibility with consoles.

jjj - Wednesday, April 25, 2018

So what was wrong? Was it HPET crippling Intel, or does Intel have some kind of issue with four-channel memory?

Ryan Smith - Wednesday, April 25, 2018

The former.

risa2000 - Thursday, April 26, 2018

Can you explain the HPET crippling a bit? I was looking around on Google, but did not find anything really conclusive.

Uxot - Wednesday, April 25, 2018

So... I have 2666 MHz RAM... RAM support for the 2700X says 2933... what does that mean? Is 2933 the lowest RAM compatibility? FML if I can't go with the 2700X because of RAM. -_-

Maxiking - Thursday, April 26, 2018

It refers to the highest OFFICIALLY supported memory frequency for the CPU. You should be able to run RAM at higher clocks than 2933, but there might be issues, because Ryzen memory support sucks. For higher-clocked RAM, I would check if it is on your motherboard's QVL (qualified vendor list); that way you can be sure it was tested with your board and no issues will arise. 2666 MHz RAM will run without any issue on your system.

johnsmith222 - Thursday, April 26, 2018

Make sure you have the newest BIOS update; AGESA 1.0.0.2a seems to improve memory compatibility too. My crappy Kingston 2400 CL17 now works fine at 3000 CL15 at 1.36 V. I'll try 3200 at 1.38 V later.

Uxot - Wednesday, April 25, 2018

Ok... my comment got deleted for NO REASON...

Gideon - Thursday, April 26, 2018

Good work tracking down the timing issues! I know that this review is still WIP, but just noticed that the "Power Analysis" block has a "fsfasd" written right after it, that probably isn't needed :)