The AMD 2nd Gen Ryzen Deep Dive: The 2700X, 2700, 2600X, and 2600 Tested

by Ian Cutress on April 19, 2018 9:00 AM EST

Grand Theft Auto

The highly anticipated iteration of the Grand Theft Auto franchise hit the shelves on April 14th 2015, with both AMD and NVIDIA in tow to help optimize the title. GTA doesn’t provide graphical presets, but opens up the options to users and pushes even the most powerful systems to the limit using Rockstar’s Advanced Game Engine under DirectX 11. Whether the user is flying high in the mountains with long draw distances or dealing with assorted trash in the city, when cranked up to maximum it creates stunning visuals but hard work for both the CPU and the GPU.

For our test we have scripted a version of the in-game benchmark. The in-game benchmark consists of five scenarios: four short panning shots with varying lighting and weather effects, and a fifth action sequence that lasts around 90 seconds. We use only the final part of the benchmark, which combines a flight scene in a jet, an inner-city drive-by through several intersections, and finally ramming a tanker that explodes, causing other cars to explode as well. This is a mix of distance rendering followed by a detailed near-rendering action sequence, and the title thankfully spits out frame time data.

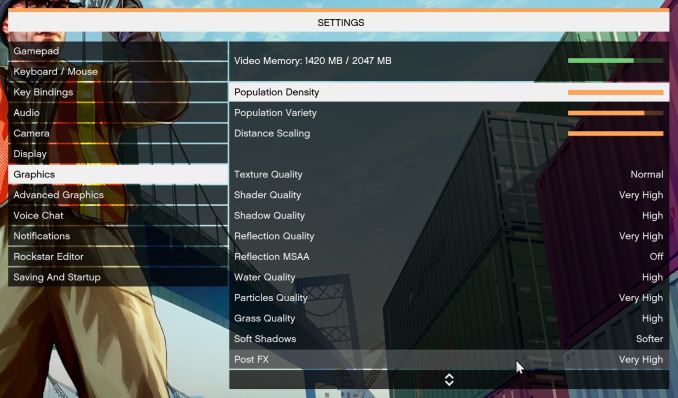

There are no presets for the graphics options in GTA. Instead, the user adjusts options such as population density and distance scaling on sliders, while others such as texture/shadow/shader/water quality range from Low to Very High. Other options include MSAA, soft shadows, post effects, shadow resolution and extended draw distance options. There is a handy option at the top which shows how much video memory the options are expected to consume, with obvious repercussions if a user requests more video memory than is present on the card (although there’s no obvious indication if you have a low end GPU with lots of GPU memory, like an R7 240 4GB).

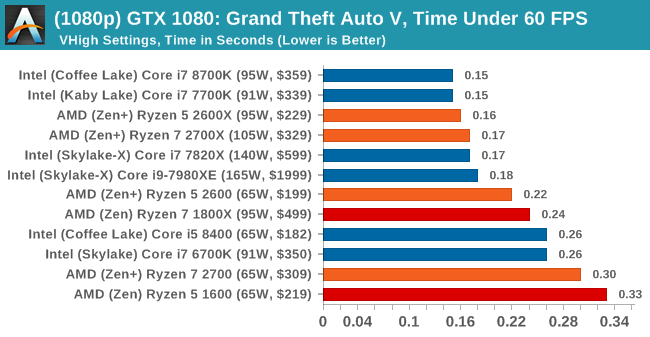

To that end, we run the benchmark at 1920x1080 using an average of Very High on the settings, and also at 4K using High on most of them. We take the average results of four runs, reporting frame rate averages, 99th percentiles, and our time under analysis.
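As a rough illustration of how metrics like these can be derived from per-frame time data, here is a minimal Python sketch. It is not the actual tooling used for these results: the function names, the linear-interpolation percentile, the choice to summarize each run first and then average across runs, and the reading of "time under" as the share of run time spent in frames slower than a chosen frame rate threshold are all assumptions made for the example.

```python
from statistics import mean


def percentile(values, pct):
    """Linearly interpolated percentile (pct in 0..100) of a non-empty list."""
    ordered = sorted(values)
    if len(ordered) == 1:
        return ordered[0]
    rank = (pct / 100) * (len(ordered) - 1)
    lo = int(rank)
    hi = min(lo + 1, len(ordered) - 1)
    return ordered[lo] + (ordered[hi] - ordered[lo]) * (rank - lo)


def summarize_run(frame_times_ms):
    """Average FPS and 99th percentile FPS for one run of frame times (ms)."""
    total_seconds = sum(frame_times_ms) / 1000.0
    avg_fps = len(frame_times_ms) / total_seconds
    # The 99th percentile frame time marks the slow end of the run;
    # converting it to FPS gives a "99th percentile" frame rate.
    p99_ms = percentile(frame_times_ms, 99)
    return {"avg_fps": avg_fps, "p99_fps": 1000.0 / p99_ms}


def time_under(frame_times_ms, fps_threshold):
    """Fraction of run time spent in frames slower than fps_threshold.

    Assumed definition, chosen for illustration only.
    """
    limit_ms = 1000.0 / fps_threshold
    slow_ms = sum(t for t in frame_times_ms if t > limit_ms)
    return slow_ms / sum(frame_times_ms)


def summarize_runs(runs):
    """Average the per-run summaries across several runs (e.g. four)."""
    per_run = [summarize_run(run) for run in runs]
    return {
        "avg_fps": mean([s["avg_fps"] for s in per_run]),
        "p99_fps": mean([s["p99_fps"] for s in per_run]),
    }
```

Passing four lists of frame times to `summarize_runs` yields a single averaged figure per metric. Averaging per-run summaries, as opposed to pooling all frames from all runs into one list, keeps one unusually long run from dominating the result, though either approach would be defensible.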

All of our benchmark results can also be found in our benchmark engine, Bench.

MSI GTX 1080 Gaming 8G Performance

1080p

4K

545 Comments

jjj - Thursday, April 19, 2018 - link

I was wondering about gaming, so there is no mistake there as Ryzen 2 seems to top Intel.

As of right now, I don't seem to find memory specs in the review yet, safe to assume you did as always, highest non-OC so Ryzen is using faster DRAM?

Also yet to spot memory latency, any chance you have some numbers at 3600MHz vs Intel? Thanks.

jjj - Thursday, April 19, 2018 - link

And just between us, would be nice to have some Vega gaming results under DX12.

aliquis - Thursday, April 19, 2018 - link

Would be nice if any reviewer actually benchmarked storage devices maybe even virtualization because then we'd see meltdown and spectre mitigation performance. Then again do AMD have any for spectre v2 yet? If not who knows what that will do.

HStewart - Thursday, April 19, 2018 - link

I notice that the systems had higher memory, but for me I believe single threaded performance is more important than more cores. But it would be biased if one platform is OC more than another. Personally I don't overclock - except for what is provided with CPU like Turbo mode.

One thing that I foresee in the future is Intel coming out with 8 core Coffee Lake

But at least it appears one thing is over is this Meltdown/Spectre stuff

Lolimaster - Thursday, April 19, 2018 - link

Intel 8 core CL won't stop the bleeding, lose more profits making them "cheap" vs a new Ryzen 7nm with at least 10% more clocks and 10% more IPC, RIP.

HStewart - Thursday, April 19, 2018 - link

I just have to agree to disagree on that statement - especially on "cheap" statement

ACE76 - Thursday, April 19, 2018 - link

CL can't scale to 8 cores...not without some serious changes to its architecture...Intel is in some trouble with this Ryzen refresh...also worth noting is that 7nm Ryzen 2 will likely bring a considerable performance jump while Intel isn't sitting on anything worthwhile at the moment.

Alphasoldier - Friday, April 20, 2018 - link

All Intel's 8cores in HEDT except SkylakeX are based on their year older architecture with a bigger cache and the quad channel.

So if Intel have the need, they will simply make a CL 8core. 2700X is pretty hungry when OC'd, so Intel don't have to worry at all about its power consumption.

moozooh - Sunday, April 22, 2018 - link

> 2700X is pretty hungry when OC'd

And Intel chips aren't? If Zen+ is already on Intel's heels for both performance per watt and raw frequency, a 7nm chip with improved IPC and/or cache is very likely going to have them pull ahead by a significant margin. And even if it won't, it's still going to eat into Intel's profit as their next tech is 10nm vs. AMD's 7nm, meaning more optimal wafer estate utilization for the latter.

AMD has really climbed back at the top of their game; I've been in the Intel camp for the last 12 years or so, but the recent developments throw me way back to K7 and A64 days. Almost makes me sad that I won't have any reason to move to a different mobo in the next 6–8 years or so.

mapesdhs - Friday, March 29, 2019 - link

Amusing to look back given how things panned out. So yes, Intel released the 9900K, but it was 100% more expensive than the 2700X. :D A complete joke. And meanwhile tech reviewers raved about a measly 5 to 5.2 oc, on a chip that already has a 4.7 max turbo (major yawn fest), focusing on specific 1080p gaming tests that gave silly high fps numbers favoured by a market segment that is a tiny minority. Then what happens, RTX comes out and pushes the PR focus right back down to 60Hz. :D

I wish people would stop drinking the Intel/NVIDIA Kool-Aid. AMD does it as well sometimes, but it's bizarre how uncritical tech reviewers often are about these things. The 9900K dragged mainstream CPU pricing up to HEDT levels; epic fail. Some said oh but it's great for poorly optimised apps like Premiere, completely ignoring the "poorly optimised" part (ie. why the lack of pressure to make Adobe write better code? It's weird to justify an overpriced CPU on the back of a pro app that ought to run a lot better on far cheaper products).