The AMD 2nd Gen Ryzen Deep Dive: The 2700X, 2700, 2600X, and 2600 Tested

by Ian Cutress on April 19, 2018 9:00 AM EST

CPU Rendering Tests

Rendering tests are a long-time favorite of reviewers and benchmarkers, as the code used by rendering packages is usually highly optimized to squeeze every little bit of performance out. Sometimes rendering programs end up being heavily memory dependent as well - when you have that many threads flying about with a ton of data, having low latency memory can be key to everything. Here we take a few of the usual rendering packages under Windows 10, as well as a few new interesting benchmarks.

All of our benchmark results can also be found in our benchmark engine, Bench.

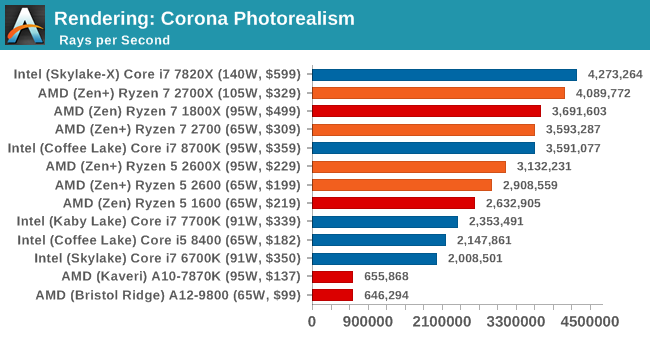

Corona 1.3: link

Corona is a standalone package designed to assist software like 3ds Max and Maya with photorealism via ray tracing. It's simple - shoot rays, get pixels. OK, it's more complicated than that, but the benchmark renders a fixed scene six times and offers results in terms of time and rays per second. The official benchmark tables list user-submitted results in terms of time; however, I feel rays per second is a better metric (in general, scores where higher is better seem to be easier to explain anyway). Corona likes to pile on the threads, so the results end up being very staggered based on thread count.
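To illustrate why the two metrics tell the same story in opposite directions, here is a minimal sketch of the conversion from render time to rays per second. It assumes the fixed scene casts the same total number of rays on every system. The function name, the ray count, and the timings are all hypothetical and are not taken from our results or from Corona itself.

```python
def rays_per_second(total_rays: float, render_seconds: float) -> float:
    """Convert a wall-clock render time into a throughput figure."""
    if render_seconds <= 0:
        raise ValueError("render_seconds must be positive")
    return total_rays / render_seconds


# Hypothetical workload size and timings, chosen only for illustration.
TOTAL_RAYS = 480_000_000
timings = {"chip_a": 120.0, "chip_b": 160.0}

for name, seconds in timings.items():
    mrays = rays_per_second(TOTAL_RAYS, seconds) / 1e6
    print(f"{name}: {seconds:.0f} s -> {mrays:.1f} Mrays/s")
```

Under that assumption the ranking is identical either way; the throughput form simply makes the faster chip the bigger bar.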

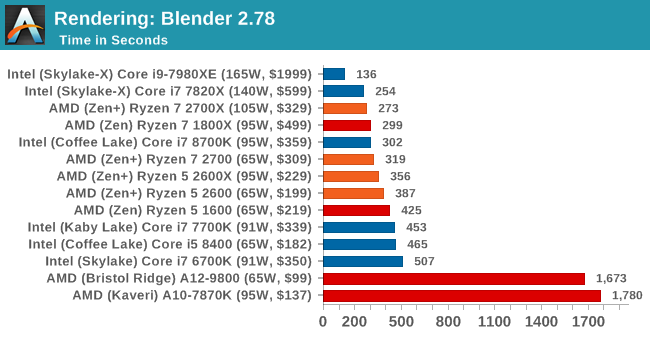

Blender 2.78: link

For a renderer that has been around for what seems like ages, Blender is still a highly popular tool. We managed to wrap up a standard workload into the February 5 nightly build of Blender and measure the time it takes to render the first frame of the scene. Because it is one of the bigger open source tools out there, both AMD and Intel actively work to help improve the codebase, for better or for worse on their own/each other's microarchitecture.

This is one multi-threaded test where the 8-core Skylake-based Intel processor wins against the new AMD Ryzen 7 2700X; the variable-threaded nature of Blender means that the mesh architecture and memory bandwidth work well here. On a price-parity comparison, the Ryzen 7 2700X easily takes the win from the top performers. The Ryzen 5 2600, meanwhile, easily beats the Core i7-6700K.

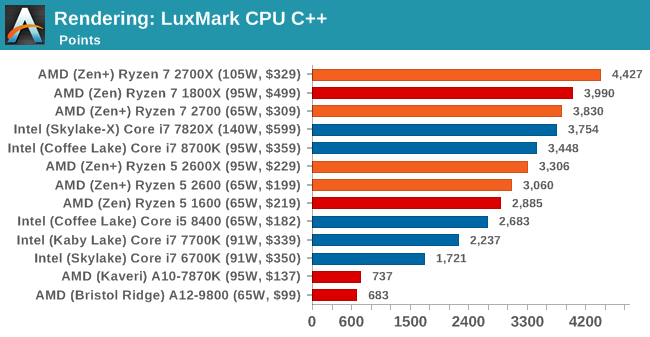

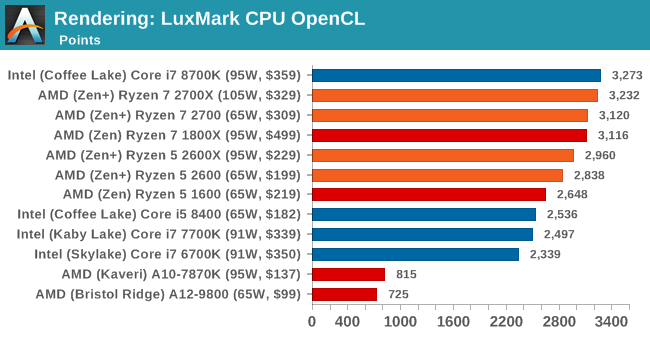

LuxMark v3.1: Link

As a synthetic, LuxMark might come across as somewhat arbitrary as a renderer, given that it's mainly used to test GPUs, but it does offer both an OpenCL and a standard C++ mode. In this instance, aside from seeing the comparison in each coding mode for cores and IPC, we also get to see the difference in performance moving from a C++ based code-stack to an OpenCL one with a CPU as the main host.

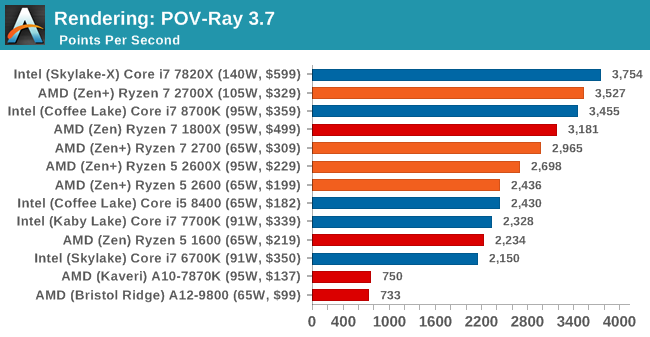

POV-Ray 3.7.1b4: link

A regular benchmark in most suites, POV-Ray is another ray-tracer, and one that has been around for many years. It just so happens that during the run up to AMD's Ryzen launch, the code base started to get active again, with developers making changes to the code and pushing out updates. Our version and benchmarking started just before that was happening, but given time we will see where the POV-Ray code ends up and adjust in due course.

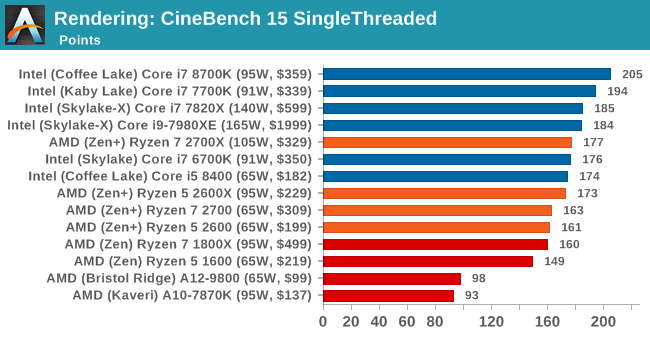

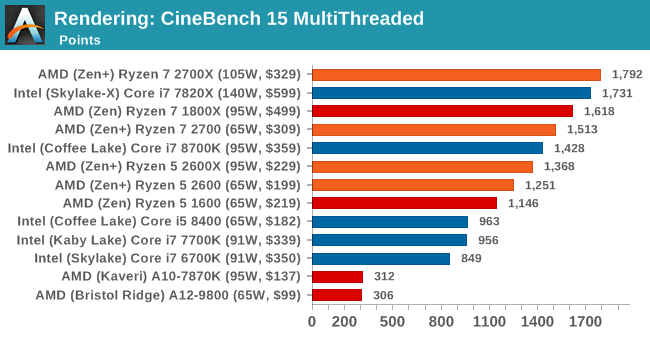

Cinebench R15: link

The latest version of Cinebench has also become one of those 'used everywhere' benchmarks, particularly as an indicator of single thread performance. High IPC and high frequency give performance in ST, whereas having good scaling and many cores is where the MT test wins out.
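As a rough way to picture that split, the sketch below uses a first-order model: single-thread throughput as IPC times clock speed, and multi-thread throughput as that figure times core count times a scaling factor. This is an assumption for illustration only, not how Cinebench computes its score, and it ignores SMT, memory, and thermal limits. Both chips and all of their numbers are hypothetical.

```python
def single_thread_score(ipc: float, ghz: float) -> float:
    """Relative ST throughput: instructions per cycle times clock speed."""
    return ipc * ghz


def multi_thread_score(ipc: float, all_core_ghz: float, cores: int,
                       scaling: float = 0.95) -> float:
    """Relative MT throughput: per-core throughput times cores times scaling."""
    return single_thread_score(ipc, all_core_ghz) * cores * scaling


# Hypothetical parts: (relative IPC, boost GHz, all-core GHz, core count).
chips = {
    "high_clock_quad": (1.10, 4.5, 4.2, 4),
    "many_core_octo": (1.00, 4.0, 3.8, 8),
}

for name, (ipc, boost, all_core, cores) in chips.items():
    st = single_thread_score(ipc, boost)
    mt = multi_thread_score(ipc, all_core, cores)
    print(f"{name}: ST {st:.2f}, MT {mt:.2f}")
```

In this toy model the higher-clocked quad-core leads the ST figure while the eight-core part leads MT, which is the general pattern the two Cinebench modes are meant to expose.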

Intel is still the single thread champion in benchmarks like Cinebench, but it would appear that the Ryzen 7 2700X is now taking the lead in the multithreaded test.

545 Comments

MDD1963 - Friday, April 20, 2018 - link

The Gskill 32 GB kit (2 x 16 GB/3200 MHz) I bought 13 months ago for $205 is now $400-ish...

andychow - Friday, April 20, 2018 - link

Ridiculous comment. 7 years ago I bought 4x8 GB of RAM for $110. That same kit, from the same company, seven years later, now sells for $300. 4x16GB kits are around $800. Memory prices aren't at all the way they've always been. There is clear collusion going on. Micron and SK Hynix have both seen their stock price increase 400% in the last two years. 400%!!!!!

The price of RAM just keeps increasing and increasing, and the 3 manufacturers are in no hurry to increase supply. They are even responsible for the lack of GPUs, because they are the bottleneck.

spdragoo - Friday, April 20, 2018 - link

You mean a price history like this? https://camelcamelcamel.com/Corsair-Vengeance-4x8G...

Or perhaps, as mentioned here (https://www.techpowerup.com/forums/threads/what-ha...), how the previous-generation RAM tends to go up in price once the manufacturers switch to the next-gen?

Since I KNOW you're not going to claim that you bought DDR4 RAM 7 YEARS AGO (when it barely came out 4 years ago)...

Alexvrb - Friday, April 20, 2018 - link

I love how you ignored everyone that already smushed your talking points to focus on a post which was likely just poorly worded.

RAM prices have traditionally gone DOWN over time for the same capacity, as density improves. But recently the limited supply has completely blown up the normal price-per-capacity-over-time curve. Profit margins are massive. Saying this is "the same as always" is beyond comprehension. If it wasn't for your reply I would have sworn you were simply trolling.

Anyway this is what a lack of genuine competition looks like. NAND market isn't nearly as bad but there's supply problems there too.

vext - Friday, April 20, 2018 - link

True. When prices double with no explanation, there must be collusion.

The same thing has happened with videocards. I have great doubts about bitcoin mining as a driver for those price increases. If mining was so profitable, you would think there would be a mad scramble to design cards specifically for mining. Instead the load falls on the DIY consumer.

Something very odd is happening.

Alexvrb - Friday, April 20, 2018 - link

They DO design things specifically for mining. It's called an ASIC miner. Unfortunately for us, some currencies are ASIC-resistant, and in some cases they can potentially change the algorithm, which makes such (expensive!) development challenging.

Samus - Friday, April 20, 2018 - link

Yep. I went with 16GB in 2013-2014 just because I was like meh what difference does $50-$60 make when building a $1000+ PC. These days I do a double take when choosing between 8GB and 16GB for PC's I build. Even hardcore gaming PC's don't *NEED* more than 8GB, so it's worth saving $100+

Memory prices have nearly doubled in the last 5 years. Sure there is cheap ram, there always has been. But a kit of quality Gskill costs twice as much as a comparable kit of quality Gskill cost in 2012.

FireSnake - Thursday, April 19, 2018 - link

Awesome, as always. Happy reading! :)

Chris113q - Thursday, April 19, 2018 - link

Your gaming benchmarks results are garbage and every other reviewer got different results than you did. I hope no one takes this review seriously as the data is simply incorrect and misleading.

Ian Cutress - Thursday, April 19, 2018 - link

Always glad to see you offer links to show the differences.

We ran our tests on a fresh version of RS3 + April Security Updates + Meltdown/Spectre patches using our standard testing implementation.