Zen and Vega DDR4 Memory Scaling on AMD's APUs

by Gavin Bonshor on June 28, 2018 9:00 AM EST. Posted in CPUs, Memory, G.Skill, AMD, DDR4, DRAM, APU, Ryzen, Raven Ridge, Scaling, Ryzen 3 2200G, Ryzen 5 2400G

We have previously explored the importance of memory scaling on AMD's Ryzen CPUs. The question we answer today is how much memory frequency affects performance when Zen is paired with AMD's own Vega graphics. We run a complete suite of tests on AMD's Ryzen 3 2200G ($99) and Ryzen 5 2400G ($169) APUs at memory speeds from DDR4-2133 to DDR4-3466, using a G.Skill Ripjaws V kit.

Recommended Reading on AMD Ryzen APUs

- Marrying Vega and Zen: The AMD Ryzen APU Review

- Overclocking The AMD Ryzen APUs: Guide and Results

- Delidding The AMD Ryzen APUs: How To Guide and Results

- AMD Ryzen 5 2400G and Ryzen 3 2200G Core Frequency Scaling: An Analysis

- Best CPUs for Gaming: Q2 2018

Memory Scaling on AMD Ryzen APUs

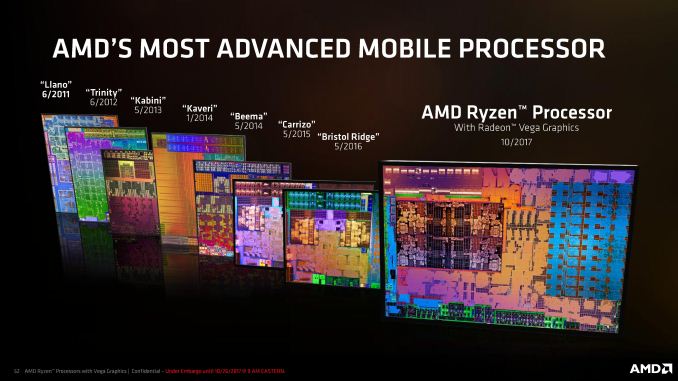

While adding Vega to Zen may be a new concept, the premise of an APU combining compute and graphics on the same chip remains the same. Graphics is often a memory-bound operation: the speed at which the graphics cores can access data is directly tied to the frame rate, and we have seen on past chips that faster memory (or an intermediate cache) can greatly accelerate graphics performance. Graphics is usually the focus here, as faster memory only helps CPU workloads that are memory limited.

For example, see our articles on:

- Memory Scaling on Ryzen 7

- Memory Frequency Scaling on Intel's Skull Canyon NUC

- Memory Scaling on Haswell CPU, IGP and dGPU: DDR3-1333 to DDR3-3000

- DDR4 Haswell-E Scaling Review: 2133 to 3200
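Because the Vega iGPU has no dedicated VRAM and shares system memory with the CPU cores, a rough way to see what each tested data rate offers the graphics is theoretical peak DRAM bandwidth. The sketch below is a back-of-the-envelope calculation, not a measurement: it assumes two 64-bit (8-byte) DDR4 channels, as on the dual-channel AM4 platform, and ignores refresh, bank conflicts and controller overhead, so sustained bandwidth will be lower. The function name `peak_bandwidth_gbs` is our own illustration.

```python
# Back-of-the-envelope peak DRAM bandwidth for the data rates in this review.
# Assumptions: dual-channel DDR4, 64-bit (8-byte) channels, theoretical peak
# only (no refresh, bank conflicts or controller overhead).

BYTES_PER_TRANSFER = 8  # width of one 64-bit DDR4 channel in bytes
CHANNELS = 2            # AM4 is a dual-channel platform

TESTED_RATES_MTS = (2133, 2400, 2667, 2866, 3333, 3466)


def peak_bandwidth_gbs(data_rate_mts: int, channels: int = CHANNELS) -> float:
    """Theoretical peak bandwidth in GB/s (10**9 bytes per second)."""
    transfers_per_second = data_rate_mts * 1_000_000
    return transfers_per_second * BYTES_PER_TRANSFER * channels / 1e9


if __name__ == "__main__":
    baseline = peak_bandwidth_gbs(TESTED_RATES_MTS[0])
    for rate in TESTED_RATES_MTS:
        bw = peak_bandwidth_gbs(rate)
        print(f"DDR4-{rate}: {bw:5.1f} GB/s ({bw / baseline:.2f}x DDR4-2133)")
```

Under these assumptions, going from DDR4-2133 to DDR4-3466 raises the theoretical peak from roughly 34.1 GB/s to 55.5 GB/s, about 1.6x; whether frame rates follow is what the benchmarks measure.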

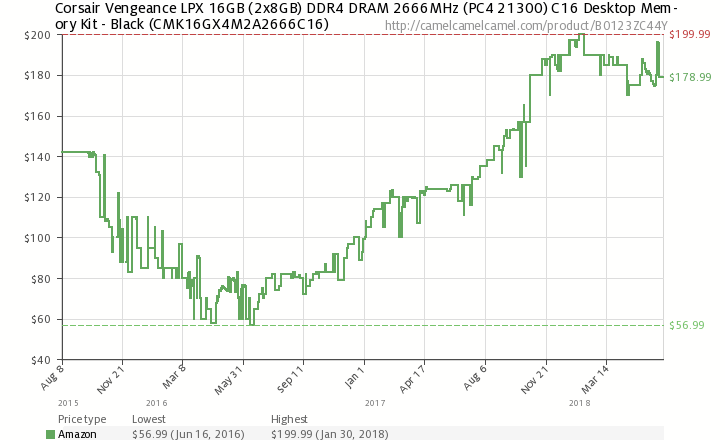

One of the main issues with memory right now is pricing. With DDR4 prices rising through 2017 and showing no signs of slowing in 2018, building a new desktop system has become noticeably more expensive over the last couple of years, and inflated GPU pricing has certainly contributed to those woes. In the current DDR4 DRAM market, a user who wants extra speed must spend more money, so how that speed translates into actual performance is more relevant than ever. As a pricing example, consider a Corsair Vengeance LPX 2x8 GB DDR4-2666 memory kit sold on Amazon.

At launch, this kit cost $142; it later dropped as low as $57 on sale and averaged $75 during early 2016. Over the course of 2017 and 2018, this very popular kit has risen to $179, having peaked at $200. To put that in perspective, the kit launched at $8.88 per GB, went as low as $3.56 per GB, and now sits at $11.19 per GB. This is almost certainly a seller's market, not a buyer's market, and people are often spending money on capacity over speed. The goal of this article is to determine how much speed actually matters, especially for lower-cost processors like AMD's Ryzen APUs.
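The per-GB figures above are the kit price divided by its 16 GB capacity, rounded to the cent. A minimal sketch that reproduces them is shown below; it uses Python's `decimal` module so that exact halfway cases such as $179 / 16 = $11.1875 round up, matching the figures quoted. The price labels and function name are our own.

```python
from decimal import Decimal, ROUND_HALF_UP

KIT_CAPACITY_GB = 2 * 8  # Corsair Vengeance LPX 2x8 GB DDR4-2666

# Kit prices quoted in the text, in US dollars.
PRICES_USD = {
    "launch": 142,
    "lowest sale": 57,
    "early-2016 average": 75,
    "current": 179,
    "peak": 200,
}


def price_per_gb(price_usd: int, capacity_gb: int = KIT_CAPACITY_GB) -> Decimal:
    """Price per GB, rounded half-up to the nearest cent."""
    exact = Decimal(price_usd) / Decimal(capacity_gb)
    return exact.quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


if __name__ == "__main__":
    for label, price in PRICES_USD.items():
        print(f"{label:>20}: ${price} -> ${price_per_gb(price)}/GB")
```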

Our APU with some other G.Skill TridentZ DRAM in an SFF test

For a user looking to build a budget system without focusing too much on high-end performance applications such as CAD or content creation, the Ryzen 3 2200G and Ryzen 5 2400G APUs have a lot to offer, especially when money is a major limiting factor in purchasing decisions. In our Ryzen 5 2400G review, we concluded that AMD's Ryzen 2000-series APUs offer the best value and performance of anything currently available from either side of the APU/CPU market (Intel or AMD) when integrated graphics are part of the package.

Memory Scaling on APUs: More Infinity Fabric

Most of the following analysis in this section was taken from our previous Memory Scaling on Ryzen 7 article.

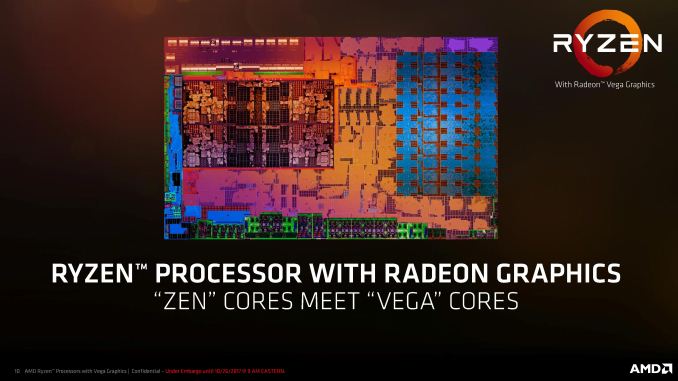

Our previous testing on Ryzen showed what effect memory frequency has on the Zen cores, but AMD added a new element when it equipped the Ryzen 3 2200G and Ryzen 5 2400G with Vega graphics. As with the rest of AMD's Ryzen processor range, each chip combines multiple technologies, but the one most able to affect memory performance on the Ryzen 2000 series is the Infinity Fabric.

The Infinity Fabric (hereafter shortened to IF) consists of two fabric planes: the Scalable Control Fabric (SCF) and the Scalable Data Fabric (SDF). The SCF is all about control: power management, remote management, security, and IO. Essentially, when data has to flow to elements of the processor other than main memory, the SCF is in control. The SDF is where main memory access comes into play. There is still management here: organizing buffers and queues in order of priority helps latency, and that organization relies on a speedy implementation. The slide below is aimed more towards the IF implementation in AMD's server products, such as power control on individual memory channels, but is still relevant to accelerating consumer workloads.

AMD's goal with IF was to develop an interconnect that could scale beyond CPUs, groups of CPUs, and GPUs. In the EPYC server product line, IF connects not only cores within the same piece of silicon, but silicon within the same processor and also processor to processor. Two important factors come into the design here: power (usually measured in energy per bit transferred) and bandwidth.

The bandwidth of the IF is designed to match the bandwidth of each channel of main memory, so that, in principle, data can move between the fabric and memory without resorting to large buffers or added delays. Discussing IF in the server context is a bit beyond the scope of this article, but the point is that IF was built with a wide range of products in mind. On the consumer platform, IF isn't used to the same degree as in server, but the potential for the speed of IF to affect performance is just as high.
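One way to see why memory speed matters for IF on these chips: on first-generation Zen parts, including the Raven Ridge APUs, the data fabric clock runs 1:1 with the memory clock (MEMCLK), which is half the DDR4 data rate because DDR transfers data on both clock edges. The sketch below assumes that fixed 1:1 relationship; the function names and the `ratio` parameter are hypothetical illustrations, not an AMD API.

```python
# Memory clock and (assumed 1:1) data fabric clock for each tested data rate.
# DDR transfers data on both edges of the clock, so MEMCLK = data rate / 2.
# Assumption: the first-generation Zen fabric clock runs at MEMCLK (ratio 1:1).

TESTED_RATES_MTS = (2133, 2400, 2667, 2866, 3333, 3466)


def memclk_mhz(data_rate_mts: float) -> float:
    """Memory clock in MHz for a DDR data rate in MT/s."""
    return data_rate_mts / 2


def fabric_clock_mhz(data_rate_mts: float, ratio: float = 1.0) -> float:
    """Fabric clock in MHz, assuming a fixed fabric:memory clock ratio."""
    return memclk_mhz(data_rate_mts) * ratio


if __name__ == "__main__":
    base = fabric_clock_mhz(TESTED_RATES_MTS[0])
    for rate in TESTED_RATES_MTS:
        fclk = fabric_clock_mhz(rate)
        print(f"DDR4-{rate}: MEMCLK/fabric {fclk:.1f} MHz ({fclk / base:.2f}x)")
```

Under this assumption, moving from DDR4-2133 to DDR4-3466 raises the fabric clock from 1066.5 MHz to 1733 MHz, so memory frequency scaling is also fabric scaling on these parts.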

Test Bed and Hardware

As per our testing policy, we take a premium category motherboard suitable for the socket, and equip the system with a suitable amount of memory. With this test setup, we are using the BIOS to set the CPU core frequency using the provided straps on the MSI B350I Pro AC motherboard. The memory is set to the range of speeds as given for our testing.

| Test Setup | |
|---|---|
| Processors | AMD Ryzen 3 2200G, AMD Ryzen 5 2400G |
| Motherboard | MSI B350I Pro AC |
| Cooling | Thermaltake Floe Riing RGB 360 |
| Power Supply | Thermaltake Toughpower Grand 1200 W Gold PSU |
| Memory | G.Skill Ripjaws V DDR4-3600 17-18-18 2x8 GB 1.35 V |
| Integrated GPU | Vega 8 at 1100 MHz (Ryzen 3 2200G), Vega 11 at 1250 MHz (Ryzen 5 2400G) |
| Discrete GPU | ASUS Strix GTX 1060 6 GB, 1620 MHz Base, 1847 MHz Boost |
| Hard Drive | Crucial MX300 1 TB |
| Case | Open Test Bed |
| Operating System | Windows 10 Pro |

To keep results consistent, the G.Skill Ripjaws V DDR4-3600 kit was set to 17-18-18-38 timings at every memory strap tested. Because our motherboard could not support 100 MHz straps, we enabled the Ripjaws V kit's XMP profile in the BIOS and then manually adjusted the timings to 17-18-18-38 on the MSI B350I Pro AC motherboard. Every strap was configured this way to keep the frequency scaling results comparable.
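Because DDR4 timings are counted in memory clock cycles, holding them fixed at 17-18-18-38 means their duration in nanoseconds shrinks as the data rate rises. One memory clock cycle lasts 1000 / MEMCLK ns, which is 2000 / data rate ns, so the conversion is a one-liner. This sketch is our own illustration and covers only the four primary timings listed here.

```python
# Convert DDR4 timings from memory clock cycles to nanoseconds.
# One memory clock cycle = 1000 / (data_rate / 2) ns = 2000 / data_rate ns.

TIMINGS_CYCLES = {"tCL": 17, "tRCD": 18, "tRP": 18, "tRAS": 38}
TESTED_RATES_MTS = (2133, 2400, 2667, 2866, 3333, 3466)


def cycles_to_ns(cycles: int, data_rate_mts: float) -> float:
    """Duration of `cycles` memory clock cycles in nanoseconds."""
    return cycles * 2000 / data_rate_mts


if __name__ == "__main__":
    for rate in TESTED_RATES_MTS:
        row = ", ".join(
            f"{name} {cycles_to_ns(c, rate):.2f} ns"
            for name, c in TIMINGS_CYCLES.items()
        )
        print(f"DDR4-{rate}: {row}")
```

With CL fixed at 17, CAS latency falls from about 15.9 ns at DDR4-2133 to about 9.8 ns at DDR4-3466, so in this setup the higher straps gain both bandwidth and lower absolute latency.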

A side note on our previous experience with memory scaling: in the past we introduced the concept of a Performance Index (PI) for each memory kit, to give a rough performance comparison metric between memory brands. The PI is defined as the data rate (such as DDR4-2400) divided by the CAS latency (such as the 17 in 17-18-18), rounded to the nearest whole number. In previous articles like this one, the memory with the highest PI typically scored best overall, especially in gaming, although among combinations with similar PIs, the one with the highest frequency was often ahead. We will revisit this concept later in the review.

In this review, we will be testing the following combinations of data rate and latencies:

| Data Rates Tested | Sub-Timings | Performance Index | Voltage |
|---|---|---|---|
| DDR4-2133 | 17-18-18 | 2133 / 17 = 125 | 1.35 V |
| DDR4-2400 | 17-18-18 | 2400 / 17 = 141 | 1.35 V |
| DDR4-2667 | 17-18-18 | 2667 / 17 = 157 | 1.35 V |
| DDR4-2866 | 17-18-18 | 2866 / 17 = 169 | 1.35 V |
| DDR4-3333* | 17-18-18 | 3333 / 17 = 196 | 1.35 V |
| DDR4-3466 | 17-18-18 | 3466 / 17 = 204 | 1.35 V |

*Corresponds to XMP Profile 1 on this memory kit
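The Performance Index values can be reproduced in a few lines. The table's values correspond to rounding the ratio to the nearest whole number; strictly rounding down would give 156, 168 and 203 for DDR4-2667, DDR4-2866 and DDR4-3466. Since one clock cycle lasts 2000 / data rate ns, the CAS latency in nanoseconds is 2000 × CL / data rate, or 2000 / PI before rounding, which is why a higher PI tracks lower absolute latency. The function below is our own sketch.

```python
import math

TESTED_RATES_MTS = (2133, 2400, 2667, 2866, 3333, 3466)
CAS_LATENCY = 17


def performance_index(data_rate_mts: int, cas_latency: int, mode: str = "nearest") -> int:
    """Data rate divided by CAS latency, as a whole number.

    mode="nearest" matches the table above; mode="floor" rounds down.
    Note: round() sends exact halves to the nearest even integer.
    """
    ratio = data_rate_mts / cas_latency
    if mode == "floor":
        return math.floor(ratio)
    if mode == "nearest":
        return round(ratio)
    raise ValueError(f"unknown rounding mode: {mode!r}")


if __name__ == "__main__":
    for rate in TESTED_RATES_MTS:
        pi = performance_index(rate, CAS_LATENCY)
        cas_ns = 2000 * CAS_LATENCY / rate
        print(f"DDR4-{rate}: PI {pi}, CAS {cas_ns:.2f} ns")
```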

AGESA and Memory Support

At the time of the launch of Ryzen, a number of industry sources privately disclosed to us that the platform side of the product line was rushed. There was little time to compile full DRAM compatibility lists, even for standard memory kits in the marketplace, and this led to issues for early adopters trying to find kits that worked well without some tweaking. Within a few weeks this was ironed out, once the memory and motherboard vendors had time to test and adjust their firmware.

Compounding this was a lower than expected level of DRAM frequency support. At launch, AMD had promised that Ryzen would be compatible with high-speed memory; however, reviewers and customers had issues with faster memory kits (DDR4-3200 and above). These issues have been addressed via a wave of motherboard BIOS updates built on updated versions of AGESA (AMD Generic Encapsulated Software Architecture), initially up to version 1.0.0.6 and since superseded by 1.1.0.1 (thanks to AMD's unusual version numbering system).

Platform maturity is no longer a widespread problem for Ryzen, and AGESA 1.1.0.1, which specifically adds support for the new Raven Ridge Ryzen 3 2200G and Ryzen 5 2400G APUs, was announced before their launch; we covered these BIOS updates for AMD's Ryzen APUs back in February at launch.

The purpose of today's testing is to evaluate how performance on AMD's Zen-based APUs scales with DRAM frequency, and whether it can be influenced by increasing that frequency. It would be foolish not to establish the effect on gaming performance and whether memory frequency has a direct impact on frame rates, given that frame rates on previous generations of AMD APUs have been reported to scale with it.

This Review

In this article we cover:

- Overview and Test Bed (this page)

- CPU Performance

- Integrated Graphics Performance

- Discrete Graphics Performance with a GTX 1060

- Conclusions

74 Comments


invasmani - Tuesday, July 10, 2018

We really need quad-channel memory to become mainstream on low-end CPUs, and ideally six- or eight-channel memory to become more affordable on mid-range ones. RAM performance itself is increasing slowly, so we need to run more memory channels in parallel, similar to what Ryzen has done well with CPU cores.

peevee - Thursday, June 28, 2018

"With the aim being to procure a set of consistent results, the G.Skill Ripjaws V DDR4-3600 kit was set to latencies of 17-18-18-38 throughout"

By doing that, you essentially invalidated the test, as in real life lower frequencies mean lower values for those latency-inducing parameters, reducing the impact of frequency.

edzieba - Thursday, June 28, 2018

Setting a constant CAS actually increases the impact on latency compared to most RAM available. CAS is a clock-relative measure, and normally you will see CAS latencies rise as clock speeds rise. This means real-time latencies (nanoseconds) stay surprisingly flat across clock frequencies. Only if you pay the excruciating prices for low-CAS high-clock DIMMs (in which case, why on earth are you using them with a budget APU?) do you start to see appreciable reductions in access latency.

Of course, higher clock rates mean more access /granularity/ even if latency is static, so it depends on what your workload benefits from.

peevee - Thursday, June 28, 2018

That was exactly my point. If you can run CAS 17 at 3466, you can run CAS 12 or 13 at 2133, even on much less expensive memory. And then the difference in the results will be much smaller.

As surprising as it might seem, memory latencies have for a while been hitting the limits of the speed of electromagnetic waves in the wires, and the only solution (both for latency and energy consumption) with those outdated Von Neumann-based CPU architectures is to do PIP, like in phones, instead of DIMMs/SODIMMs.

mode_13h - Thursday, June 28, 2018

I've been hoping to see HBM2 appear in phones and (at least laptop) CPUs for a while now.

peevee - Tuesday, July 3, 2018

HBM2 has nothing to do with it.

Phones actually get it right: they usually have memory stacked with the CPU, reducing the length of the wires to the minimum, or at least set the memory chips close to the CPU. Desktop CPUs using DIMMs are hopeless. They could have improved things with a special CPU slot which would accept DIMMs (or better yet SODIMMs) in a square configuration, like this:

*DIMM*

D|--C--|D

I|--P--|I

M|--U--|M

M|------|M

*DIMM*

peevee - Tuesday, July 3, 2018

The best for the DIMMs would be to lie flat, with the CPU having 4 memory channels and pins on each side for each channel. Best of all would be LPDDR4X, with 0.6 V signal voltage; it would save a bunch for the whole platform. Something like 4-channel CL4 1866 or 2133 would have plenty of bandwidth and properly low latency; maybe even LLC would not be needed for 4 cores or fewer.

peevee - Tuesday, July 3, 2018

..."would save a bunch" of power...

Lolimaster - Friday, June 29, 2018

The thing is, DDR4-3000 CL15 barely costs a few bucks more than 2400 CL15.

Lolimaster - Friday, June 29, 2018

All memory, even cheap 2133 kits, is in the range of $165-180. Only CL14 3200 is above $200.