Improving The Exynos 9810 Galaxy S9: Part 2 - Catching Up With The Snapdragon

by Andrei Frumusanu on April 20, 2018 9:00 AM EST

Posted in: Mobile, Samsung, Smartphones, Exynos 9810, Exynos M3, Galaxy S9

Scheduler mechanisms: WALT & PELT

Over the years, it seems Arm noticed the slow progress and now appears to be working more closely with Google in developing the Android common kernel, utilizing out-of-tree (meaning outside of the official Linux kernel) modifications that benefit performance and battery life of mobile devices. Qualcomm also has been a great contributor as WALT is now integrated into the Android common kernel, and there’s a lot of work going on from these parties as well as other SoC manufacturers to advance the platform in a way that benefits commercial devices a lot more.

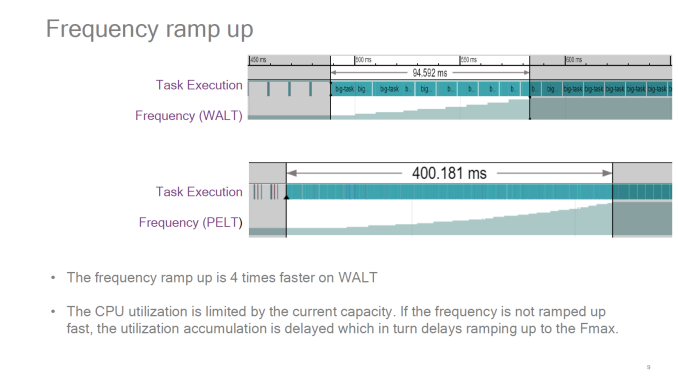

Samsung LSI’s situation here seems very puzzling. The Exynos 9810 is the first flagship SoC to actually make use of EAS, and Samsung is basing the BSP (board support package) kernel on the Android common kernel. The issue is that instead of optimising the SoC through WALT, they chose to fall back to fully PELT-dictated task utilisation. That’s still fine in terms of core migrations, but they also chose to use a very vanilla schedutil CPU frequency driver. This meant that the frequency ramp-up of the Exynos 9810 CPUs could have the same characteristics as PELT, which means it would also bring with it one of the existing disadvantages of PELT: a relatively slow ramp-up.
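As a rough illustration of why PELT feeding a plain schedutil ramps slowly, the sketch below models PELT as a continuous geometric average with a 32ms half-life and applies the 1.25x headroom formula used by mainline schedutil. The continuous-time approximation, the function names and the 2,000,000 kHz maximum frequency are assumptions made for this example, not values taken from Samsung's kernel.

```python
import math

PELT_HALF_LIFE_MS = 32   # stock half-life discussed in the article
SCHED_CAPACITY = 1024    # kernel fixed-point value for "100% busy"


def pelt_util_after(busy_ms, half_life_ms=PELT_HALF_LIFE_MS, start=0.0):
    """Utilisation of a task that has run continuously for busy_ms.

    Continuous approximation: the gap to full capacity halves
    every half_life_ms of uninterrupted execution.
    """
    remaining = (SCHED_CAPACITY - start) * 0.5 ** (busy_ms / half_life_ms)
    return SCHED_CAPACITY - remaining


def schedutil_freq(util, max_freq_khz):
    """Mainline-style request: 1.25 * max_freq * util / capacity, clamped."""
    return min(max_freq_khz, 1.25 * max_freq_khz * util / SCHED_CAPACITY)


def ms_until_max_freq(half_life_ms=PELT_HALF_LIFE_MS):
    """Run time before a task starting at zero requests the top frequency.

    With 1.25x headroom the top frequency is requested once
    utilisation reaches 80% of capacity.
    """
    return half_life_ms * math.log2(1 / (1 - 0.8))


if __name__ == "__main__":
    max_khz = 2_000_000  # hypothetical maximum frequency, illustration only
    for t in (8, 16, 32, 64, 96):
        util = pelt_util_after(t)
        print(f"{t:3d} ms busy: util={util:6.1f} "
              f"freq={schedutil_freq(util, max_khz):9.0f} kHz")
    print(f"max frequency requested after ~{ms_until_max_freq():.1f} ms")
```

Under these assumptions, a task that wakes from idle needs roughly 74ms of continuous execution before the governor asks for the top frequency, which is several display frames at 60Hz.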

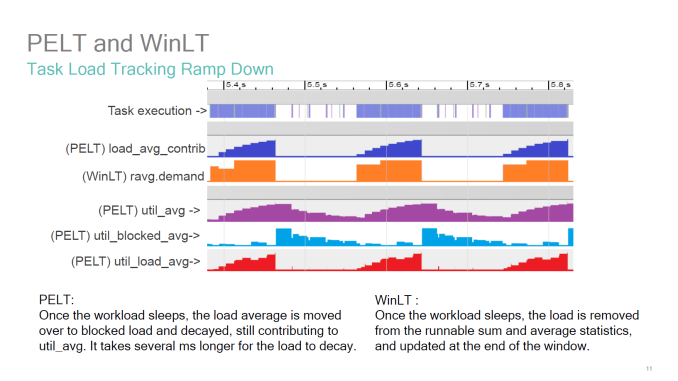

Source: BKK16-208: EAS

Source: WALT vs PELT : Redux – SFO17-307

One of the best resources on the issue actually comes from Qualcomm, as they had spearheaded the topic years ago. In the above presentation, given at Linaro Connect 2016 in Bangkok, we see a visual representation of the behaviour of PELT vs WinLT (as WALT was called at the time). The metrics to note here in the context of the Exynos 9810 are util_avg (which is the default behaviour on the Galaxy S9) and how it contrasts with WALT’s ravg.demand and the actual task execution. So out of all the possible options in terms of BSP configurations, Samsung seems to have chosen the worst one for performance. And I do think this was a conscious choice, as Samsung had added additional mechanisms to both the scheduler (eHMP) and schedutil (freqvar) to counteract this very slow behaviour caused by PELT.
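The contrast between a window-based demand signal and PELT's util_avg can be sketched in a few lines. This is a simplified model and not Qualcomm's implementation: the 20ms window, the five-window history and the "maximum of the most recent window and the history average" policy are assumptions chosen for illustration, and the function names are hypothetical.

```python
WINDOW_MS = 20   # assumed window length
HISTORY = 5      # assumed number of past windows kept per task


def walt_demand(window_busy_ms):
    """Demand (0..1) from per-window busy times in ms, newest last.

    Assumed policy: take the larger of the most recent window
    and the average over the remembered history.
    """
    recent = window_busy_ms[-HISTORY:]
    average = sum(recent) / len(recent)
    return max(recent[-1], average) / WINDOW_MS


def pelt_util(busy_ms, half_life_ms=32):
    """PELT-style utilisation (0..1) after busy_ms of continuous
    execution from idle, using a continuous approximation."""
    return 1 - 0.5 ** (busy_ms / half_life_ms)


if __name__ == "__main__":
    # A task that was idle for four windows, then busy for one full window.
    history = [0, 0, 0, 0, WINDOW_MS]
    print("window-based demand:", walt_demand(history))
    print("PELT util after the same 20 ms:", round(pelt_util(WINDOW_MS), 3))
```

In this model a single fully busy window is enough for the window-based signal to report full demand, while the geometric average has only climbed to about a third of capacity over the same 20ms.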

In trying to resolve this whole issue, instead of adding additional logic on top of everything I looked into fixing the issue at the source.

What I tried first is perhaps the most obvious route: enabling WALT and seeing where that goes. While using WALT as the CPU utilisation signal for the Exynos S9 gave outstandingly good performance, it also very badly degraded battery life. I had a look at the Snapdragon 845 Galaxy S9’s scheduler, but here it seems Qualcomm diverges significantly from the Google common kernel on which the Exynos is based. This being far too much work to port, I had another look at the Pixel 2’s kernel, which luckily was a lot nearer to Samsung’s. I ported all relevant patches which were also applied to the Pixel 2 devices, along with porting EAS to a January state of the 4.9-eas-dev branch. This improved WALT’s behaviour while keeping performance, but there was still significant battery life degradation compared to the previous configuration. I didn’t want to spend more time on this, so I looked into other avenues.

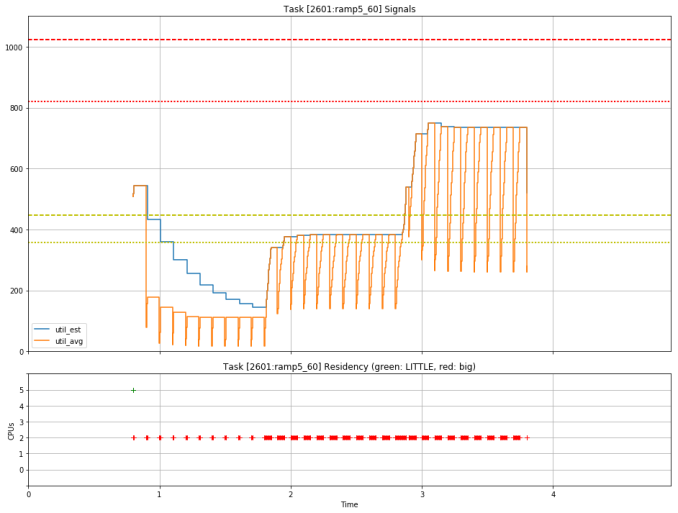

Source : LKML Estimate_Utilization (With UtilEst)

Looking through Arm's resources, it looks very much like the company is aware of the performance issues and is actively trying to improve the behaviour of PELT to more closely match that of WALT. One significant change is a new utilisation signal called util_est (utilisation estimation), which is added on top of PELT and is meant to be used for CPU frequency selection. I backported the patch and immediately saw a significant improvement in responsiveness, as the CPUs spent more time in higher frequency states. Another simple way of improving PELT is to reduce the ramp/decay timings, which incidentally also got an upstream patch very recently. I backported this to the kernel as well, and after testing an 8ms half-life setting for a bit and judging it to not be good for battery life, I settled on a 16ms setting, which is an improvement over the 32ms of the stock kernel and gives the best compromise between performance and battery life.
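Both changes can be sketched with a simplified model. The `ramp_ms` helper uses a continuous approximation of PELT to compare the three half-life settings mentioned above, and `UtilEst` mimics the general idea of util_est: remember how busy a task was when it last went to sleep so that the estimate does not collapse while it sleeps. The class layout and the 0.25 EWMA weight are assumptions for illustration and are not copied from the backported patch.

```python
import math


def ramp_ms(target, half_life_ms):
    """Continuous run time for PELT utilisation to rise from 0 to
    target (a fraction between 0 and 1) at the given half-life."""
    return half_life_ms * math.log2(1 / (1 - target))


class UtilEst:
    """Simplified utilisation estimator layered on top of util_avg."""

    EWMA_WEIGHT = 0.25  # assumed weight given to the newest sample

    def __init__(self):
        self.enqueued = 0.0  # util_avg seen at the last dequeue
        self.ewma = 0.0      # moving average of those samples

    def on_dequeue(self, util_avg):
        """Record the task's utilisation when it goes to sleep."""
        self.enqueued = util_avg
        self.ewma += self.EWMA_WEIGHT * (util_avg - self.ewma)

    def estimate(self, util_avg):
        """Value to feed into frequency selection: never below the
        task's remembered utilisation, even after util_avg has decayed."""
        return max(util_avg, self.enqueued, self.ewma)


if __name__ == "__main__":
    for half_life in (32, 16, 8):
        print(f"half-life {half_life:2d} ms: "
              f"{ramp_ms(0.8, half_life):5.1f} ms to reach 80% utilisation")

    est = UtilEst()
    est.on_dequeue(800)        # task was heavily loaded, then slept
    print(est.estimate(200))   # util_avg has decayed to 200 on wake-up
```

In this model, halving the half-life halves the ramp time, and the estimator lets a task that was recently busy start at its previous utilisation on wake-up instead of climbing back up from a decayed util_avg.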

Because of these significant changes in the way the scheduler is fed utilisation statistics, the existing tunings from Samsung obviously weren’t valid anymore. I adapted most of them as best I could, which basically involved just disabling most of them as they were no longer needed. I also significantly changed the EAS capacity and cost tables, as I do not think that the way Samsung populated the tables is correct or representative of actual power usage, which is very unfortunate. Incidentally, this last bit was one of the reasons that performance changed when I limited the CPU frequency in part 1, as it shifted the whole capacity table and changed the scheduler heuristic.

But of course, what most of you are here for is not how this was done but rather the hard data on the effects of my experimenting, so let's dive into the results.

76 Comments

lilmoe - Friday, April 20, 2018 - link

That's Microsoft's fault. Instead of focusing on a purely mobile architecture and then "enhancing" it for the desktop after it failed, they should have worked on the desktop experience from the start to be closer to mobile in terms of efficiency and hardware acceleration without sacrificing features, information density and responsiveness, relieving it of its dependence on general cpu compute.

Instead of going platform agnostic for a native desktop, they went with a *form factor* agnostic approach that focused on the lowest common denominator (mobile) that didn't benefit either.

If the software is right, and workloads are properly accelerated/delegated, then the CPU of choice wouldn't be nearly as critical to get the optimum experience and performance of the device and the purpose it was designed to serve.

serendip - Saturday, April 21, 2018 - link

Indeed, you may be on to something there. Microsoft added tablet and mobile features to Windows 10 in a halfhearted way, like how the touch keyboard on Windows tablets is a laggy and inaccurate mess compared to the iPad keyboard. I bring along a folding Bluetooth keyboard with my Windows tablet because using the virtual keyboard is such a painful experience.

That said, there's probably a ton of Win32 baggage that relies on x86 to run so it could take years for Microsoft to refactor everything.

MrSpadge - Saturday, April 21, 2018 - link

Responsiveness and accuracy of your on-screen keyboard are largely determined by hardware, not MS. E.g. the keyboard on my wife's low-end Lumia 535 is pretty bad, whereas the same software on my Lumia 950 performs great.

LiverpoolFC5903 - Monday, April 23, 2018 - link

The onscreen keyboard on my cube i7 book works as smoothly and quickly as any ipad or android tab. The Core m3 keeps it ticking along nicely. I use it purely as a tablet, even while working on it occasionally, with the type attachment collecting dust in my drawer.

I think poor quality storage and CPU are the reasons for the bad experience.

Alexvrb - Saturday, April 21, 2018 - link

"relieving of its dependence on general CPU compute"

Don't smoke crack and post.

tuxRoller - Saturday, April 21, 2018 - link

Otoh, arm servers are looking better all the time.

Hurr Durr - Saturday, April 21, 2018 - link

It`s a pipedream, like loonix on desktop. Pretty sure it`s the same people anyway.

tuxRoller - Saturday, April 21, 2018 - link

Chromeos is a thing.

ZolaIII - Sunday, April 22, 2018 - link

Reed you unlettered kite pipe: https://blog.cloudflare.com/neon-is-the-new-black/...

tuxRoller - Monday, April 23, 2018 - link

That was a great post, and it makes SVE look all the more interesting in this space.