The Intel NUC8i7HVK (Hades Canyon) Review: Kaby Lake-G Benchmarked

by Ganesh T S on March 29, 2018 1:00 PM EST

4K HTPC Credentials

The noise profile of the NUC8i7HVK is surprisingly good. At idle and low loads, the fans are barely audible, and they only kicked in during stressful gaming benchmarks. From an HTPC perspective, we had to put up with the fan noise during the decode and playback of codecs that didn't have hardware decode acceleration - 4Kp60 VP9 Profile 2 videos, for instance. Obviously, the unit is not for the discerning HTPC enthusiast, who is better off with a passively cooled system.

Refresh Rate Accuracy

Starting with Haswell, Intel, AMD and NVIDIA have been on par with respect to display refresh rate accuracy. The most important refresh rate for videophiles is obviously 23.976 Hz (the 23 Hz setting). As expected, the Intel NUC8i7HVK (Hades Canyon) has no trouble with refreshing the display appropriately in this setting.
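As a rough illustration of why refresh rate accuracy matters, the sketch below estimates how long playback can run before the renderer has to drop or repeat a frame when the display clock and the video frame rate disagree. The nominal 23.976 Hz rate is exactly 24000/1001. The `measured_hz` value is a made-up reading rather than a number from our testing, the function name is ours, and the estimate assumes a perfectly accurate audio clock, which is a simplification.

```python
from fractions import Fraction

# Exact value of the "23.976" film rate.
NTSC_FILM = Fraction(24000, 1001)


def seconds_between_glitches(display_hz: float,
                             video_fps: float = float(NTSC_FILM)) -> float:
    """Time until display and video drift apart by one full frame.

    Simplifying assumption: the audio clock is perfect, so the only
    source of drift is the display refresh rate error.
    """
    diff = abs(display_hz - video_fps)
    if diff == 0:
        return float("inf")
    return 1.0 / diff


if __name__ == "__main__":
    measured_hz = 23.97650  # hypothetical reading, not a measured result
    for label, hz in (("hypothetical 23 Hz mode", measured_hz),
                      ("plain 24 Hz mode", 24.0)):
        gap = seconds_between_glitches(hz)
        print(f"{label}: one frame drop/repeat every {gap:.1f} s")
```

With these assumptions, a display running at exactly 24 Hz would need to repeat a frame roughly every 41.7 seconds (1001/24) when fed 23.976 fps content, which is why the dedicated 23 Hz setting is the one videophiles care about.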

The gallery below presents some of the other refresh rates that we tested out. The first statistic in madVR's OSD indicates the display refresh rate.

Network Streaming Efficiency

Evaluation of OTT playback efficiency was done by playing back the Mystery Box's Peru 8K HDR 60FPS video in YouTube using Microsoft Edge and Season 4 Episode 4 of the Netflix Test Pattern title using the Windows Store App, after setting the desktop to HDR mode and enabling the streaming of HDR video.

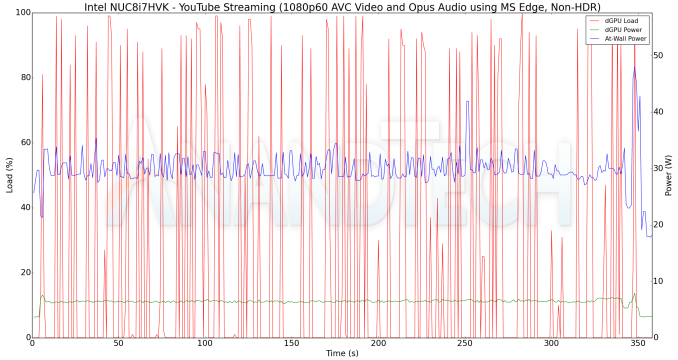

The YouTube streaming test, unfortunately, played back a 1080p AVC version. MS Edge utilizes the Radeon GPU which doesn't have acceleration for VP9 Profile 2 decode. Instead of software decoding, Edge apparently requests the next available hardware accelerated codec, which happens to be AVC. The graph below plots the discrete GPU load, discrete GPU chip power, and the at-wall power consumption during the course of the YouTube video playback.
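The fallback behavior described above can be pictured as a simple preference walk. This is only a sketch of the observed outcome, not Edge's actual negotiation logic; the codec labels, their ordering, the contents of the hardware set, and the `pick_stream_codec` helper are all hypothetical.

```python
from typing import Optional, Sequence, Set


def pick_stream_codec(offered: Sequence[str],
                      hw_decodable: Set[str]) -> Optional[str]:
    """Return the first offered codec that the GPU can decode in hardware.

    `offered` is ordered from most to least preferred. Returns None when
    nothing in the list is hardware accelerated.
    """
    for codec in offered:
        if codec in hw_decodable:
            return codec
    return None


# Hypothetical labels: the HDR stream needs VP9 Profile 2, AVC is the fallback.
offered_by_site = ["vp9-profile2", "avc"]
# Illustrative set only: no VP9 Profile 2 acceleration on the discrete GPU.
radeon_hw = {"avc", "hevc-main10"}

print(pick_stream_codec(offered_by_site, radeon_hw))
```

Under these assumptions the walk skips VP9 Profile 2 and settles on AVC, which matches the 1080p AVC stream we ended up with.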

Since the stream was a 1080p version, we start off immediately with the highest possible bitrate. The GPU power consumption is stable around 8W, with the at-wall power consumption around 30W. The Radeon GPU does not expose separate video engine and GPU loads to monitoring software. Instead, a generic GPU load is available. Its behavior is unlike what we get from Intel's integrated GPUs or NVIDIA GPUs: instead of a smooth load profile, it shows frequent spikes close to 100% that rush back to idle, as evident from the red lines in the graphs in this section. Therefore, we can only take the GPU chip power consumption as an indicator of the loading factor.

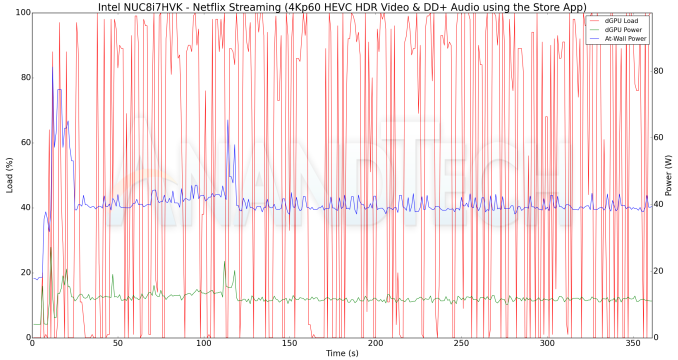

A similar graph for the Netflix streaming case (16 Mbps HEVC 10b HDR video) is also presented below. Manual stream selection is available (Ctrl-Alt-Shift-S) and debug information / statistics can also be viewed (Ctrl-Alt-Shift-D). The same statistics gathered for the YouTube streaming experiment were also collected here.

It must be noted that the debug OSD is kept on till the stream reaches the 16 Mbps playback stage around 2 minutes after the start of the streaming. The GPU chip power consumption ranges from 20W for the low resolution video (that requires scaling to 4K) to around 12W for the eventually fetched 16 Mbps 4K stream. The at-wall numbers range from 60W (after the initial loading spike) to around 40W in the steady state.

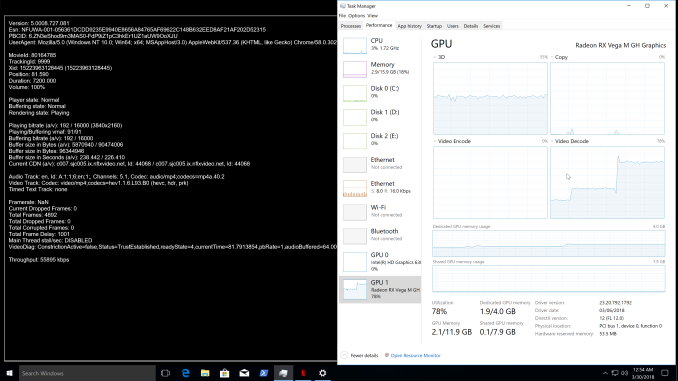

Update: I received a request to check whether the Netflix application was utilizing the Intel HD Graphics 630 for PlayReady 3.0 functionality. The screenshot below confirms that the 4K HEVC HDR playback with Netflix makes use of the Radeon RX Vega M GH GPU only.

Decoding and Rendering Benchmarks

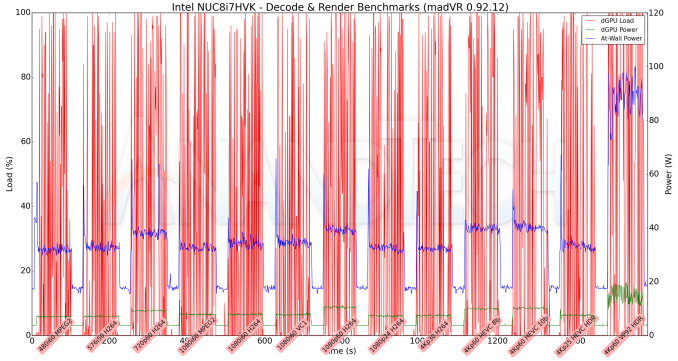

In order to evaluate local file playback, we concentrate on Kodi (for the casual user) and madVR (for the HTPC enthusiast). Under madVR, we decided to test out only the default out-of-the-box configuration. We recently revamped our decode and rendering test suite, as described in our 2017 HTPC components guide.

madVR 0.92.12 was evaluated with MPC-HC 1.7.15 (unofficial release) with its integrated LAV Filters 0.71. The video decoder was set to Direct 3D mode, with automatic selection of the GPU for decoding operations. For hardware-accelerated codecs, we see the at-wall power consumption around 35-40W and the GPU chip power consumption around 10W. For the software decode case (VP9 Profile 2), the at-wall power consumption is around 90W, and the GPU chip power consumption is around 18W (the extra GPU power is likely spent on madVR processing of the software-decoded video frames).

One of the praiseworthy aspects of the madVR / MPC-HC / LAV Filters combination that we tested above was the automatic switch to HDR mode and back while playing the last couple of videos in our test suite. All in all, the combination of playback components was successful in processing all our test streams in a smooth manner.

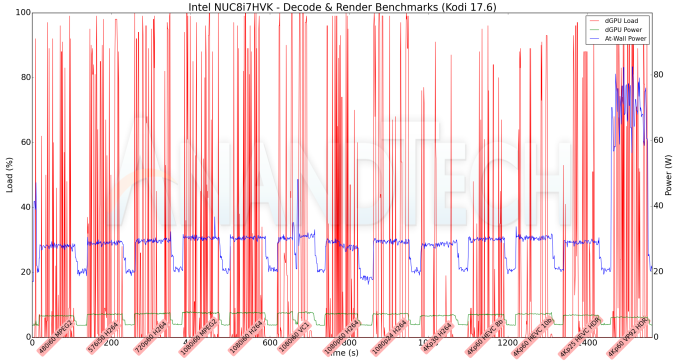

The same testing was repeated with the default configuration options for Kodi 17.6. The at-wall power consumption is substantially lower (around 30W) for the hardware-accelerated codecs. The GPU chip power is around 8W consistently for those. For the VP9 Profile 2 case, the at-wall number rises to 70W, but there is not much change in the GPU chip power. We did encounter a hiccup in the 1080i60 VC-1 case, as the playback just froze for around 5-10s, which is evident in the graph below (the files were being played off the local SSD).
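To put the at-wall numbers for the two players in perspective, the sketch below converts them into energy used over a feature-length film. The two-hour runtime is an assumption, and 37.5W is simply the midpoint of the 35-40W range quoted for madVR; the other figures are the approximate at-wall readings reported in this section.

```python
def energy_wh(power_w: float, hours: float) -> float:
    """Energy in watt-hours for a constant power draw."""
    return power_w * hours


# Approximate at-wall power in watts, as quoted in this section.
AT_WALL_W = {
    ("madVR", "hardware decode"): 37.5,         # assumed midpoint of 35-40W
    ("madVR", "VP9 Profile 2 software"): 90.0,
    ("Kodi", "hardware decode"): 30.0,
    ("Kodi", "VP9 Profile 2 software"): 70.0,
}

MOVIE_HOURS = 2.0  # assumed runtime, not part of the test suite

if __name__ == "__main__":
    for (player, mode), watts in AT_WALL_W.items():
        wh = energy_wh(watts, MOVIE_HOURS)
        print(f"{player:6s} {mode:24s} {wh:6.1f} Wh")
```

The gap between the players is small in absolute terms for hardware-accelerated codecs; the software decode path is where the cost (and the fan noise) shows up.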

We attempted to perform some testing with VLC 3.0.1, but encountered random freezes and blank screen outputs while using the default configuration for playing back the same videos. It is possible that the VLC 3.0.1 hardware decode infrastructure is not as robust as that of the MPC-HC / LAV Filters 0.71.0 combination, and that the hardware acceleration APIs behave slightly differently with the Radeon GPU compared to the behavior seen with Intel's integrated GPU and NVIDIA's GPU.

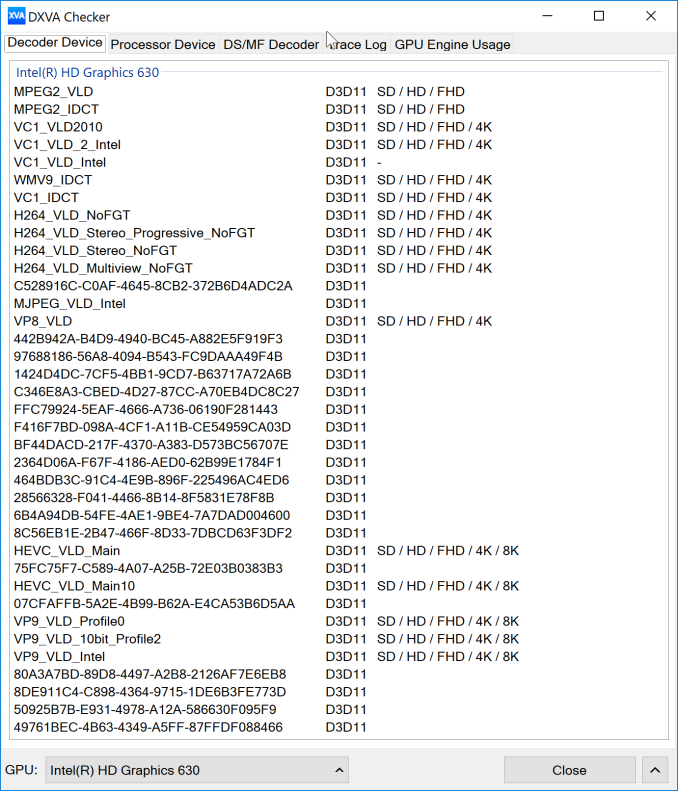

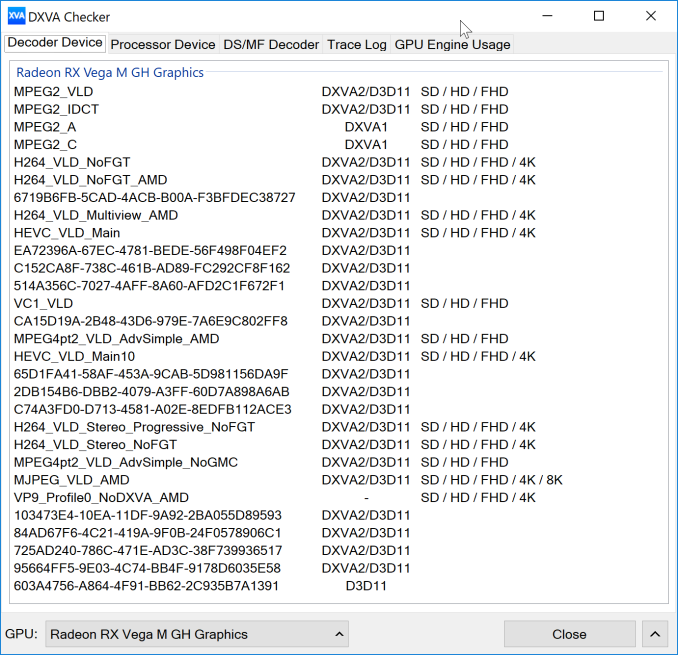

Moving on to the codec support, while the Intel HD 630 is a known quantity with respect to the scope of supported hardware accelerated codecs, the Radeon RX Vega M GH is not. DXVA Checker serves as a confirmation for the former and a source of information for the latter.

We can actually see that the codec support from the Intel side is miles ahead of the Radeon's capabilities. It is therefore a pity that users can't somehow set a global option to make all video decoding and related identification rely on the integrated GPU.

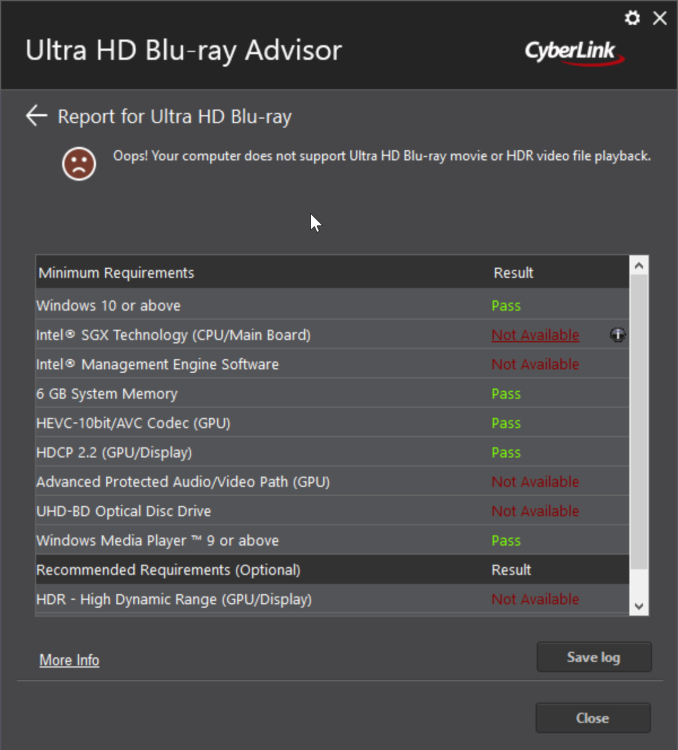

Intel originally claimed at the launch of the Hades Canyon NUCs that they would be able to play back UltraHD Blu-rays. The UHD BD Advisor tool from CyberLink, however, presented a different story.

After a bit of back and forth with Intel, it appears that the Hades Canyon NUCs will not be able to play back UHD Blu-rays. Apparently, the use of the Protected Audio Video Path (PAVP) in the integrated GPU is possible only if the display is also being driven by the same GPU. It turned out to be quite disappointing, particularly after Intel's promotion of UHD Blu-ray playback and PAVP as unique differentiating features of the Kaby Lake GPU.

124 Comments

PeachNCream - Friday, March 30, 2018 - link

The price really is way too high right now compared to a laptop if your focus is gaming. If you need a LAN party portable box, a laptop is usually a better option anyway since the screen and keyboard aren't additional components you'll have to take with you. Because the NUC is so small, it lacks the upgrade advantages offered by a desktop form factor so you're basically dealing with an overpriced, screenless laptop. I'm all for the technology at the heart of the new NUC, but you're absolutely right that it needs to start at $600.

bill44 - Thursday, March 29, 2018 - link

I was hoping so much from this machine, ready to buy, only to be disappointed.

No Titan Ridge TB3 (DP 1.4)

No UHD-BD playback

TB3 not connected to CPU

UHS-1 only card reader, no UHS-II

Issues with storage bandwidth since Spectre/Meltdown issues, no in-silicon fix (I know, 2nd half 2018).

Disappointing WiFi speed, no BT 5, even if M.2 changed no aerial.

Hardware decoding/codec issues

etc.

When can we expect the next (fixed version) :)

cacnoff - Thursday, March 29, 2018 - link

Titan Ridge - not out yet.

UHD-BD playback - doesn't work on nvidia either.

Point to ANY designs with TB3 connected to CPU.

repoman27 - Thursday, March 29, 2018 - link

Apple does this pretty regularly. But last I knew, everyone else had to go through the PCH due to Windows / UEFI limitations. Which is a bummer because of the additional latency and clear potential for bandwidth contention.

Hifihedgehog - Thursday, March 29, 2018 - link

“We can actually see that the codec support from the Intel side is miles ahead of the Radeon's capabilities.”

This is misinformed at best, and categorically false at worse. I have been using a Ryzen 5 2400G, on an ASRock AB350 Gaming-ITX/ac motherboard to drive an HDMI 2.0 4K display at 12-bit (yes, despite lacking formal certification, HDMI 2.0 works flawlessly on 300-series motherboards). I successfully can play back various hefty video material, including HEVC in the form of lossless MKV rips of my UHD Blu-rays, and VP9. Moreover, the color, deblocking, and scaling is far superior in terms of pure optical fidelity to the Intel Skull Canyon NUC’s hazy, unrefined hardware decoding solution. Also, I could go at length about the dropout and timing issues of Intel’s HD Graphics with various high-end AV receivers (Marantz, Denon, and Yamaha) that I have had (e.g. timing issues from their onboard DP-to-HDMI converters causing DAC and sound processing to operate in a compatibility mode with 16-bit sound depth). Suffice it to say, from an objective standpoint, Intel’s NUCs are utter trash, littered with issues, that fall far short from being serious home theater solutions.

garbagedisposal - Thursday, March 29, 2018 - link

"misinformed at best, and categorically false at worse"

Yes, you are.

"HDMI 2.0 works flawlessly on 300-series motherboards"

Congrats on discovering the obvious. Nobody claimed otherwise

"successfully can play back various hefty video material, including HEVC"

So can intel, better and at lower power. Isn't it sad that the radeon can't do VP9 or netflix?

"color, deblocking, and scaling is far superior"

Sure. Everything just looks so wrong on intel. Certainly takes a special snowflake like you to notice, good job.

"Marantz, Denon, and Yamaha"

Nobody cares.

Rabid angry people like you are funny, do you really think anyone is going to read or care about your comment? Go away LOL

Hifihedgehog - Thursday, March 29, 2018 - link

Please be polite and less “rabid.” Thanks.

Hifihedgehog - Thursday, March 29, 2018 - link

For anyone reading the post above, Vega can correctly decode VP9, hybrid or otherwise. What was posted above is incorrect.

Hifihedgehog - Thursday, March 29, 2018 - link

“Congrats on discovering the obvious. Nobody claimed otherwise”

This is also false. Many reviews online of the Ryzen Raven Ridge APUs claimed that HDMI 2.0 support was yet non-existent given that most motherboard manufacturers state only HDMI 1.4 for their 300-series AM4 boards. This concerned many users, but after thorough research and testing (see here: smallformfactor (dot) net /forum/threads/raven-ridge-hdmi-2-0-compatibility-1st-gen-am4-motherboard-test-request-megathread.6709/ ; I am the one who started the thread and led its discussion), it was concluded that all current motherboards work without limitations or issue regardless of the published specifications. I will also ignore the passive-aggressive sarcasm and sentiment laced in-between the lines from the “Congrats” to the “LOL.” Please be less rabid and angry, and more people will take your seriously.

ganeshts - Thursday, March 29, 2018 - link

The Radeon GPU CAN NOT do VP9 Profile 2 - so you will NEVER get YouTube HDR on the. No PAVP. QuickSync ecosystem support is way ahead of Radeon VCE. So, tell me why I am wrong in saying that the Intel iGPU is miles ahead of the Radeon Vega ? Any neutral industry observer can see that I am completely justified in making those claims.

I am not talking about Ryzen APUs - I am talking about the Radeon GPU in the Core i7-8809G, as utilized in the NUC8i7HVK.