The Intel Optane SSD 800p (58GB & 118GB) Review: Almost The Right Size

by Billy Tallis on March 8, 2018 5:15 PM EST

Random Read Performance

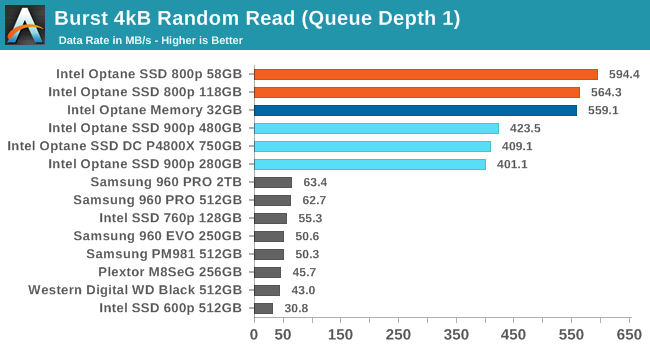

Our first test of random read performance uses very short bursts of operations issued one at a time with no queuing. The drives are given enough idle time between bursts to yield an overall duty cycle of 20%, so thermal throttling is impossible. Each burst consists of a total of 32MB of 4kB random reads, from a 16GB span of the disk. The total data read is 1GB.
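The burst parameters above imply a fixed number of bursts and operations, which the following minimal sketch works out. It assumes binary units (1GB = 1024MB, 1MB = 1024kB), which the article does not specify; the function name `burst_plan` is hypothetical and is not part of any tool named in the review.

```python
KIB = 1024
MIB = 1024 * KIB
GIB = 1024 * MIB


def burst_plan(total_bytes, burst_bytes, io_bytes, duty_cycle):
    """Return (burst count, operations per burst, idle time per unit of active time)."""
    bursts = total_bytes // burst_bytes
    ops_per_burst = burst_bytes // io_bytes
    # duty_cycle = active / (active + idle), so idle = active * (1 - d) / d.
    idle_per_active = (1 - duty_cycle) / duty_cycle
    return bursts, ops_per_burst, idle_per_active


# Parameters from the text: 1GB total, 32MB bursts, 4kB reads, 20% duty cycle.
bursts, ops_per_burst, idle_per_active = burst_plan(1 * GIB, 32 * MIB, 4 * KIB, 0.20)
print(bursts, ops_per_burst, idle_per_active)
```

Under these assumptions the test consists of 32 bursts of 8192 reads each, and a 20% duty cycle means the drive idles for about four times as long as it spends servicing each burst.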

Like the Optane Memory M.2, the Optane SSD 800p has extremely high random read performance even at QD1. The M.2 drives even have a substantial lead over the much larger and more power-hungry 900p and its enterprise counterpart P4800X. Even the best flash-based SSDs are almost an order of magnitude slower.

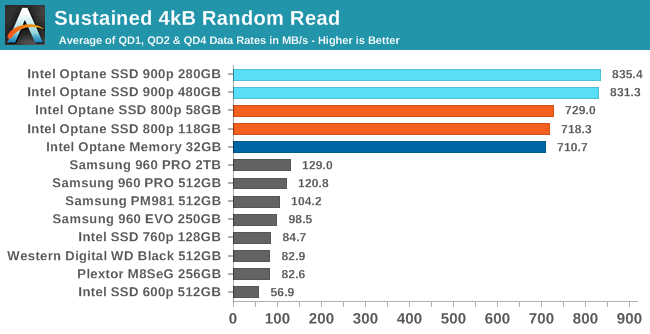

Our sustained random read test is similar to the random read test from our 2015 test suite: queue depths from 1 to 32 are tested, and the average performance and power efficiency across QD1, QD2 and QD4 are reported as the primary scores. Each queue depth is tested for one minute or 32GB of data transferred, whichever is shorter. After each queue depth is tested, the drive is given up to one minute to cool off so that the higher queue depths are unlikely to be affected by accumulated heat build-up. The individual read operations are again 4kB, and cover a 64GB span of the drive.
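As a rough illustration of the scoring and stopping rules just described, the sketch below averages the QD1, QD2 and QD4 results and computes how long one queue-depth phase would run. The function names and the throughput figures are hypothetical placeholders, not measurements from this review, and "32GB" is assumed to mean 32 × 1024 MB.

```python
def primary_score(results_by_qd):
    """Average the QD1, QD2 and QD4 results, as the text describes."""
    return sum(results_by_qd[qd] for qd in (1, 2, 4)) / 3


def phase_seconds(throughput_mb_s, time_limit_s=60, data_limit_mb=32 * 1024):
    """Duration of one queue-depth phase: it ends at whichever limit is hit first."""
    return min(time_limit_s, data_limit_mb / throughput_mb_s)


# Hypothetical throughput in MB/s per queue depth (illustrative values only).
example = {1: 100.0, 2: 200.0, 4: 300.0, 8: 400.0, 16: 500.0, 32: 600.0}

score = primary_score(example)
slow_phase = phase_seconds(100.0)    # the time limit applies
fast_phase = phase_seconds(1024.0)   # the 32GB data limit applies
print(score, slow_phase, fast_phase)
```

With these assumptions, the 32GB limit only ends a phase early when a drive sustains more than roughly 546 MB/s of 4kB random reads; slower drives run for the full minute at each queue depth.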

The Optane SSDs continue to dominate on the longer random read test, though the addition of higher queue depths allows the 900p to pull ahead of the 800p.

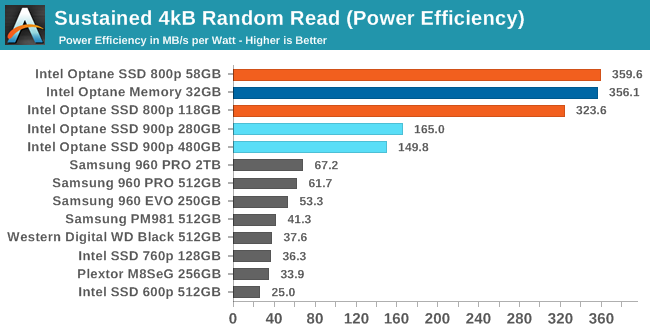

With extremely high performance but lacking the high power draw of the enterprise-class 900p, the Optane SSD 800p is by far the most power efficient at performing random reads.

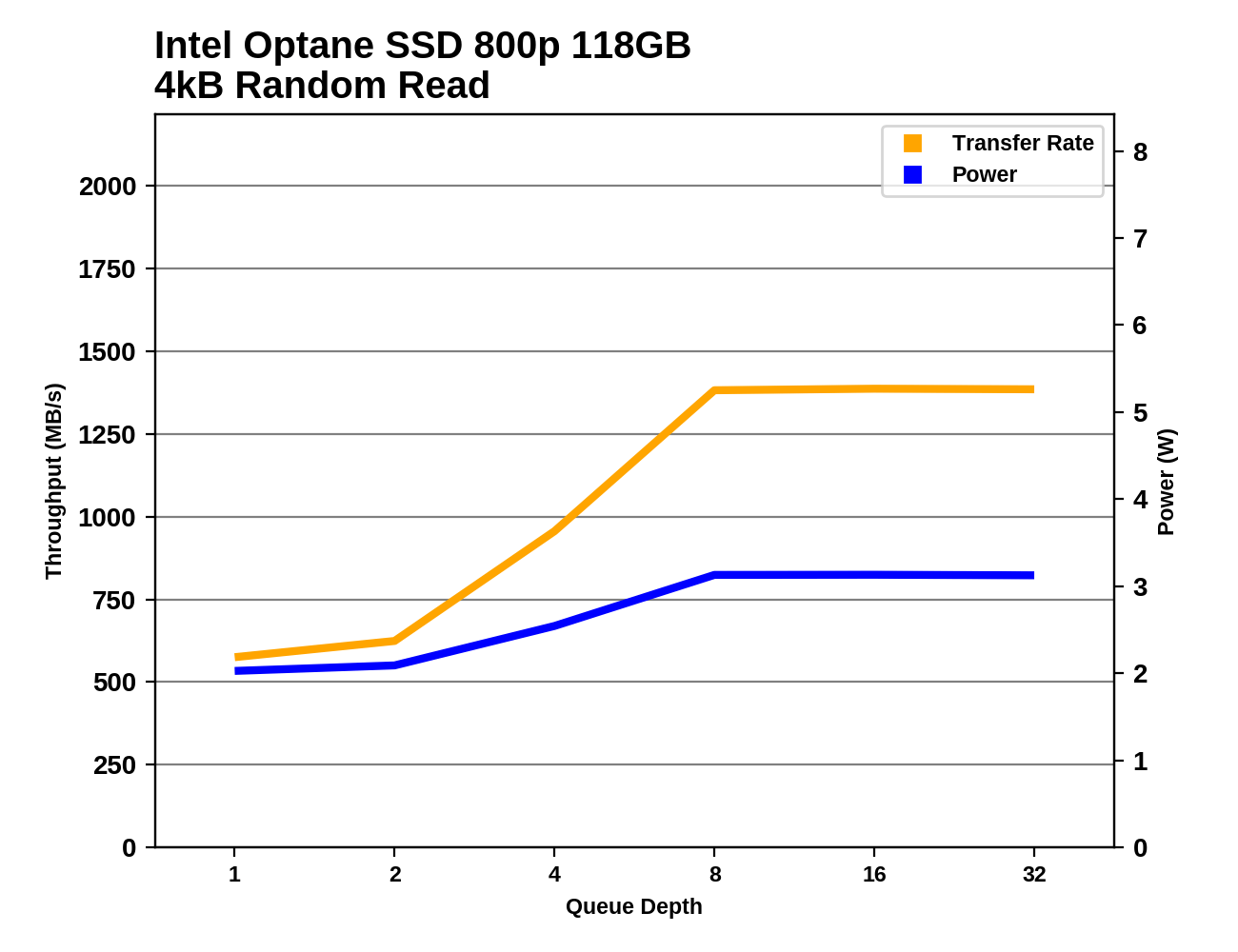

The Optane SSD 800p starts out in the lead at QD1, but its performance is overtaken by the 900p at all higher queue depths. The flash-based SSDs have power consumption that is comparable to the 800p, but even at QD32 Samsung's 960 PRO hasn't caught up to the 800p's random read performance.

Random Write Performance

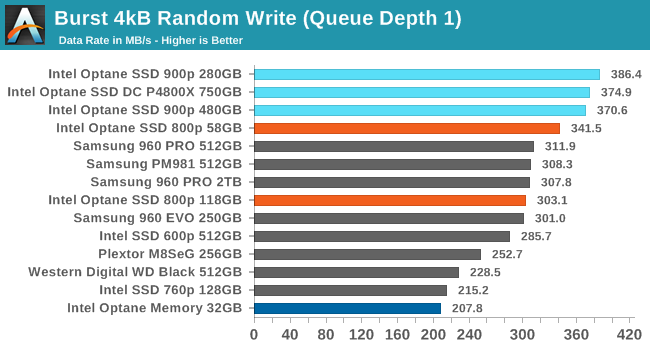

Our test of random write burst performance is structured similarly to the random read burst test, but each burst is only 4MB and the total test length is 128MB. The 4kB random write operations are distributed over a 16GB span of the drive, and the operations are issued one at a time with no queuing.
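To make the access pattern concrete, here is a minimal sketch of how 4kB random offsets over a 16GB span could be generated for one burst. It only produces the offsets and does not touch a disk. Aligning every offset to a 4kB boundary and using binary units are assumptions; the article does not describe the tooling behind the test, and `random_offsets` is a hypothetical helper.

```python
import random

KIB = 1024
MIB = 1024 * KIB
GIB = 1024 * MIB


def random_offsets(count, span_bytes=16 * GIB, io_bytes=4 * KIB, seed=0):
    """Return `count` byte offsets, each aligned to io_bytes, within span_bytes."""
    rng = random.Random(seed)          # seeded so the pattern is repeatable
    blocks = span_bytes // io_bytes    # number of 4kB slots in the span
    return [rng.randrange(blocks) * io_bytes for _ in range(count)]


# One 4MB write burst is 4MB / 4kB = 1024 operations.
ops_per_burst = (4 * MIB) // (4 * KIB)
offsets = random_offsets(ops_per_burst)
print(len(offsets), min(offsets), max(offsets))
```

At 128MB total and 4MB per burst, the write test works out to 32 bursts of 1024 operations each under the same unit assumption.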

Flash-based SSDs can cache and combine write operations, so they are able to offer random write performance close to that of the Optane SSDs, which do not perform any significant caching. Where the 32GB Optane Memory offered relatively poor burst random write performance, the 800p is at least as fast as the best flash-based SSDs.

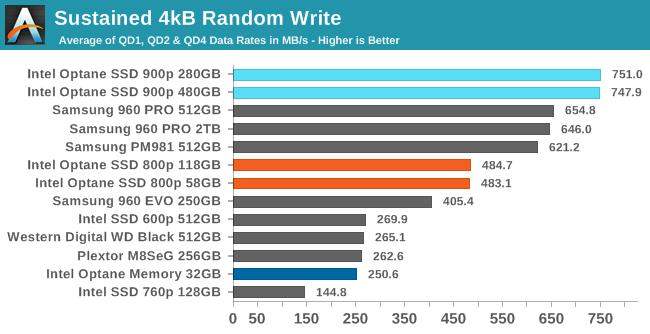

As with the sustained random read test, our sustained 4kB random write test runs for up to one minute or 32GB per queue depth, covering a 64GB span of the drive and giving the drive up to 1 minute of idle time between queue depths to allow for write caches to be flushed and for the drive to cool down.

When higher queue depths come into play, the write caching ability of Samsung's high-end NVMe SSDs allows them to exceed the Optane SSD 800p's random write speed, though the 900p still holds on to the lead. The 800p's improvement over the Optane Memory is even more apparent with this longer test.

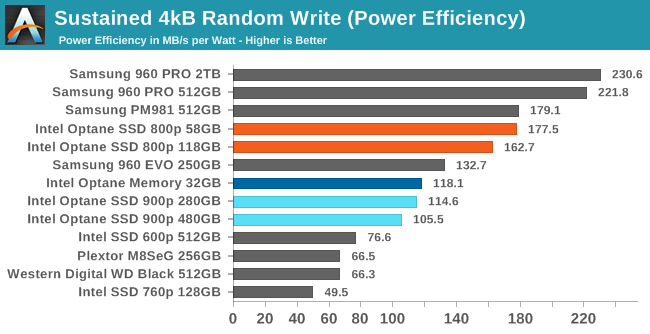

The power efficiency of the 800p during random writes is pretty good, though Samsung's top drives are better still. The Optane Memory lags behind on account of its poor performance, and the 900p ranks below that because it draws so much power in the process of delivering top performance.

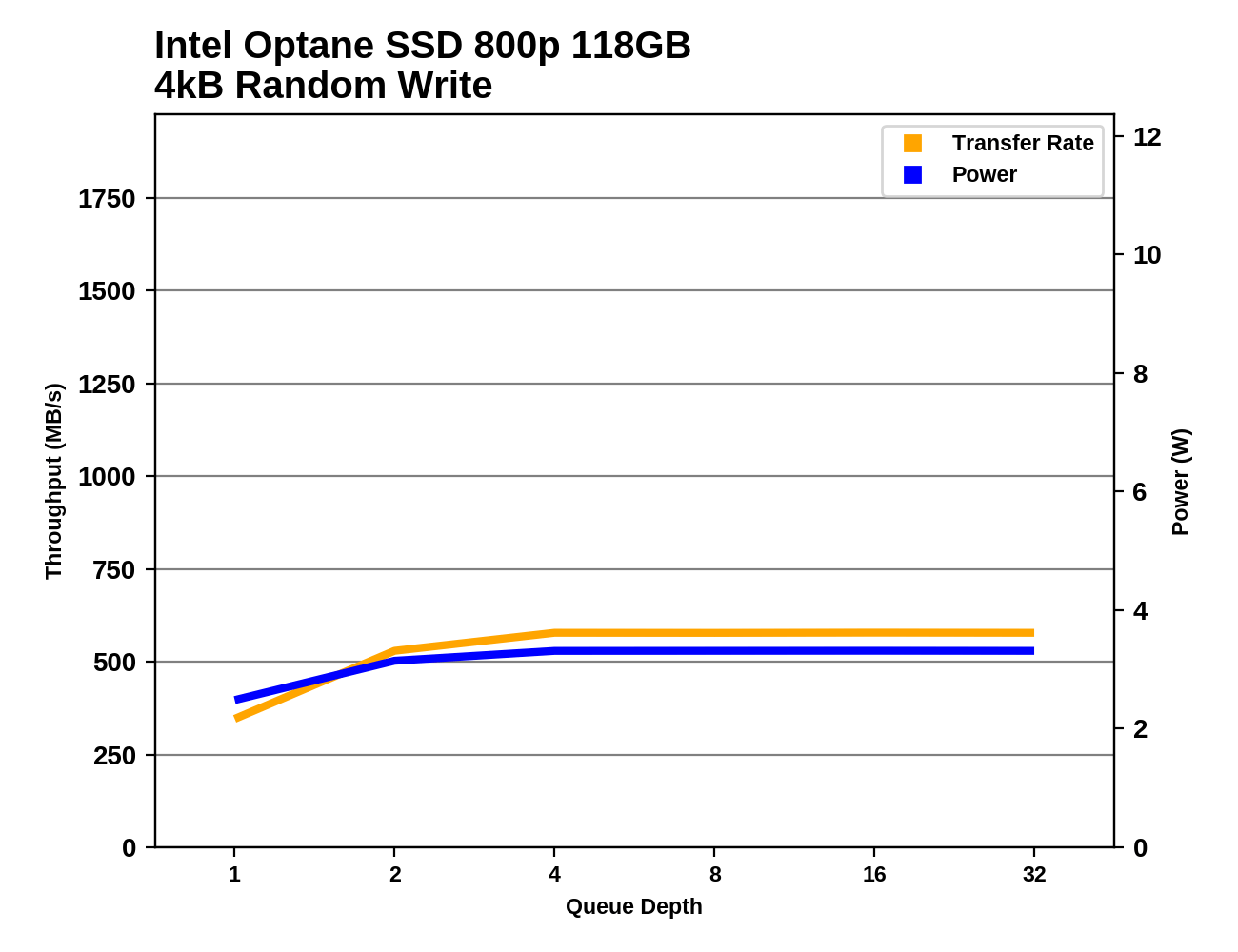

The Samsung 960 PRO and the Intel Optane SSD 900p show off at high queue depths thanks to the high channel counts of their controllers. The Optane SSD 800p doesn't have much room for performance to scale beyond QD2.

116 Comments

MrSpadge - Friday, March 9, 2018 - link

Did you ever have an SSD run out of write cycles? I've personally only witnessed one such case (old 60 GB drive from 2010, old controller, being almost full all the time), but numerous other SSD deaths (controller, Sandforce or whatever).

name99 - Friday, March 9, 2018 - link

I have an SSD that SMART claims is at 42%. I'm curious to see how this plays out over the next three years or so.

But yeah, I'd agree with your point. I've had two SSDs so far fail (many fewer than HDs, but of course I've owned many more HDs and for longer) and both those failures were inexplicable randomness (controller? RAM?) but they certainly didn't reflect the SSD running out of write cycles.

I do have some very old (heavily used) devices that are flash based (iPod nano 3rd gen) and they are "failing" in the expected SSD fashion --- getting slower and slower, and can be goosed with some speed for another year by giving them a bulk erase. Meaning that it does seem that SSD "wear-out" failure (when everything else is reliable) happens as claimed --- the device gets so slow that at some point you're better off just moving to a new one --- but it takes YEARS to get there, and you get plenty of warning, not unexpected medium failure.

MonkeyPaw - Monday, March 12, 2018 - link

The original Nexus 7 had this problem, I believe. Those things aged very poorly.

80-wattHamster - Monday, March 12, 2018 - link

Was that the issue? I'd read/heard that Lollipop introduced a change to the cache system that didn't play nicely with Tegra chips.

sharath.naik - Sunday, March 11, 2018 - link

The endurance listed here is barely better than MLC. It is nowhere close to even SLC.

Reflex - Thursday, March 8, 2018 - link

https://www.theregister.co.uk/2016/02/01/xpoint_ex...

I know ddriver can't resist continuing to use 'hypetane' but seriously looking at this article, Optane appears to be a win nearly across the board. In some cases quite significantly. And this is with a product that is constrained in a number of ways. Prices also are starting at a much better place than early SSD's did vs HDD's.

Really fantastic early results.

iter - Thursday, March 8, 2018 - link

You need to lay off whatever you are abusing.

Fantastic results? None of the people who can actually benefit from its few strong points are rushing to buy. And for everyone else intel is desperately flogging it at, it is a pointless waste of money.

Due to its failure to deliver on expectations and promises, it is doubtful intel will any time soon allocate the manufacturing capacity it would require to make it competitive to nand, especially given its awful density. At this time intel is merely trying to make up for the money they put into making it. Nobody denies the strong low queue depth reads, but that ain't enough to make it into a money maker. Especially not when a more performant alternative has been available since before intel announced xpoint.

Alexvrb - Thursday, March 8, 2018 - link

Most people ignore or gloss over the strong low QD results, actually. Which is ironic given that most of the people crapping all over them for having the "same" performance (read: bars in extreme benchmarks) would likely benefit from improved performance at low QD.

With that being said capacity and price are terrible. They'll never make any significant inroads against NAND until they can quadruple their current best capacity.

Reflex - Thursday, March 8, 2018 - link

Alex - I'm sure they are aware of that. I just remember how consumer NAND drives launched, the price/perf was far worse than this compared to HDD's, and those drives still lost in some types of performance (random read/write for instance) despite the high prices. For a new tech, being less than 3x while providing across the board better characteristics is pretty promising.

Calin - Friday, March 9, 2018 - link

SSDs never had a random R/W problem compared to magnetic disks, not even if you compared them by price to RAIDs and/or SCSI server drives. What problem they might have had at the beginning was in sequential read (and especially write) speed. Current sequential write speeds for hard drives are limited by the rpm of the drive, and they reach around 150MB/s for a 7200 rpm 1TB desktop drive. Meanwhile, the Samsung 480 EVO SSD at 120GB (a good second or third generation SSD) reaches some 170MB/s sequential write.

Where the magnetic rotational disk drives suffer a 100 times reduction in performance is random write, while the SSDs hardly care. This is due to the awful access time of hard drives (move the heads and wait for the rotation of the disks to bring the data below the read/write heads): that's 5-10 milliseconds of wait time for each new operation.