Marrying Vega and Zen: The AMD Ryzen 5 2400G Review

by Ian Cutress on February 12, 2018 9:00 AM EST

Benchmarking Performance: CPU Rendering Tests

Rendering tests are a long-time favorite of reviewers and benchmarkers, as the code used by rendering packages is usually highly optimized to squeeze every little bit of performance out. Sometimes rendering programs end up being heavily memory dependent as well - when you have that many threads flying about with a ton of data, having low latency memory can be key to everything. Here we take a few of the usual rendering packages under Windows 10, as well as a few new interesting benchmarks.

All of our benchmark results can also be found in our benchmark engine, Bench.

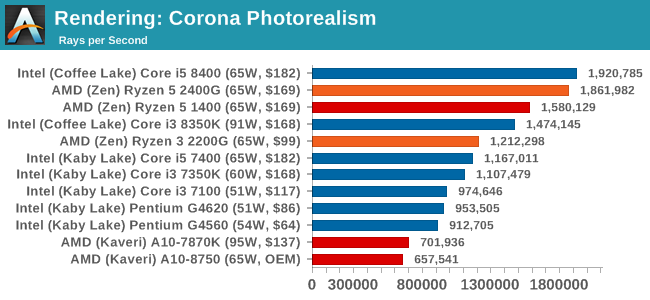

Corona 1.3: link

Corona is a standalone package designed to assist software like 3ds Max and Maya with photorealism via ray tracing. It's simple - shoot rays, get pixels. OK, it's more complicated than that, but the benchmark renders a fixed scene six times and offers results in terms of time and rays per second. The official benchmark tables list user submitted results in terms of time; however, I feel rays per second is a better metric (in general, scores where higher is better seem to be easier to explain anyway). Corona likes to pile on the threads, so the results end up being very staggered based on thread count.
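To illustrate why a rate can be easier to read than an elapsed time, here is a minimal sketch of the conversion for a fixed workload. The function name, the ray count, and the timings are all hypothetical, chosen only for the arithmetic; they are not Corona's internals or measured results.

```python
def rays_per_second(total_rays: float, render_seconds: float) -> float:
    """Turn a fixed amount of work and its elapsed time into a throughput rate."""
    if render_seconds <= 0:
        raise ValueError("render_seconds must be positive")
    return total_rays / render_seconds


# Hypothetical workload size and timings, not benchmark data.
TOTAL_RAYS = 400_000_000

fast = rays_per_second(TOTAL_RAYS, 100.0)  # the chip that finishes sooner
slow = rays_per_second(TOTAL_RAYS, 200.0)  # the chip that takes twice as long

# With a rate, the faster chip gets the bigger number.
print(f"fast: {fast:,.0f} rays/s, slow: {slow:,.0f} rays/s")
```

Halving the render time doubles the rate, so on a rays-per-second chart the longer bar belongs to the faster chip.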

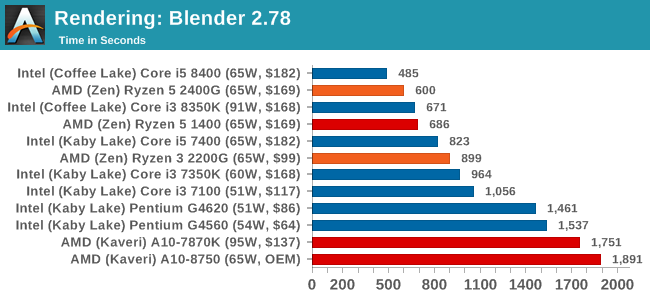

Blender 2.78: link

For a renderer that has been around for what seems like ages, Blender is still a highly popular tool. We managed to wrap up a standard workload into the February 5 nightly build of Blender and measure the time it takes to render the first frame of the scene. Because it is one of the bigger open source tools out there, both AMD and Intel work actively to help improve the codebase, for better or for worse on their own/each other's microarchitecture.

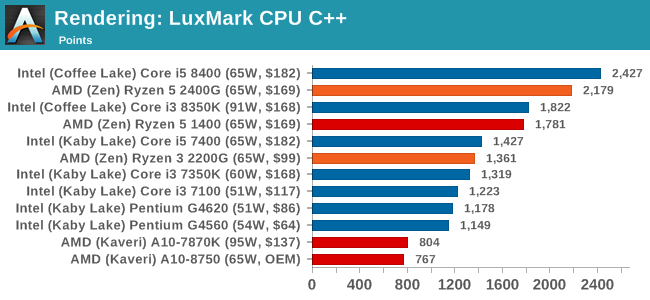

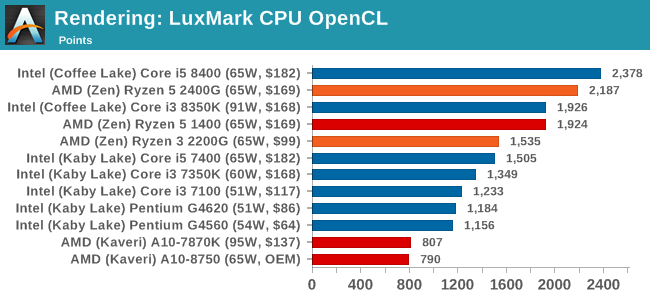

LuxMark v3.1: Link

As a synthetic, LuxMark might come across as somewhat arbitrary as a renderer, given that it's mainly used to test GPUs, but it does offer both an OpenCL and a standard C++ mode. In this instance, aside from seeing the comparison in each coding mode for cores and IPC, we also get to see the difference in performance moving from a C++ based code-stack to an OpenCL one with a CPU as the main host.

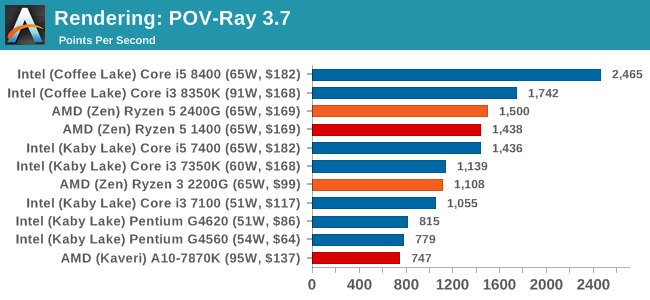

POV-Ray 3.7.1b4: link

A regular benchmark in most suites, POV-Ray is another ray tracer, one that has been around for many years. It just so happens that during the run up to AMD's Ryzen launch, the code base started to get active again, with developers making changes to the code and pushing out updates. Our version and benchmarking started just before that was happening, but given time we will see where the POV-Ray code ends up and adjust in due course.

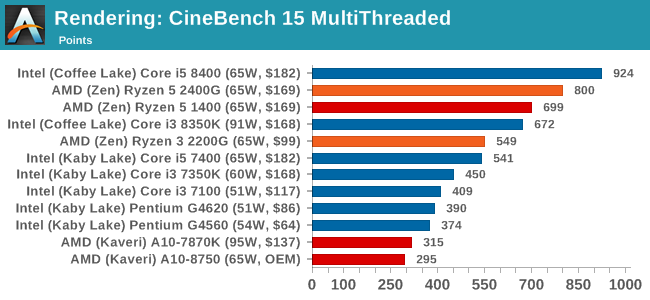

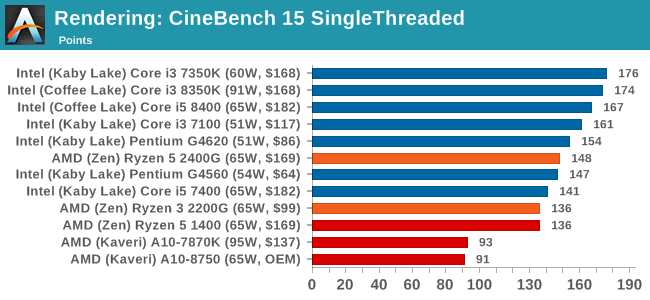

Cinebench R15: link

The latest version of Cinebench has also become one of those 'used everywhere' benchmarks, particularly as an indicator of single thread performance. High IPC and high frequency give performance in ST, whereas having good scaling and many cores is where the MT test wins out.
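One way to put a number on "good scaling" is to compare the MT score against the ST score. The sketch below is only an illustration: the function name and the scores are hypothetical, not results from this review.

```python
def mt_scaling(st_score: float, mt_score: float, threads: int) -> tuple[float, float]:
    """Return (speedup over single thread, per-thread efficiency)."""
    if st_score <= 0 or threads <= 0:
        raise ValueError("st_score and threads must be positive")
    speedup = mt_score / st_score
    efficiency = speedup / threads
    return speedup, efficiency


# Hypothetical scores for a chip running eight threads.
speedup, efficiency = mt_scaling(st_score=150.0, mt_score=600.0, threads=8)
print(f"MT speedup: {speedup:.1f}x, per-thread efficiency: {efficiency:.0%}")
```

A per-thread efficiency well below 100% is expected when several of those threads are simultaneous multithreading threads sharing a physical core.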

Conclusions on Rendering: It is clear from these graphs that most rendering tools require full cores, rather than multiple threads, to get the best performance. The exception is Cinebench.

177 Comments

AndrewJacksonZA - Monday, February 12, 2018 - link

Hi Ian. I'm still on page one but I'm so excited! Can a 4xx Polaris card be Crossfired with this APU?

prtskg - Tuesday, February 13, 2018 - link

No crossfire supported by these apus, according to AMD. You can check it out on AMD's product page.

dgingeri - Monday, February 12, 2018 - link

How many PCIe lanes are available on them? I didn't see that info anywhere in the article.

iter - Monday, February 12, 2018 - link

Only 8

dgingeri - Monday, February 12, 2018 - link

Well, not great, but it can still run a RAID controller off the CPU lanes and a single port of 10Gbe from the chipset, or run a dual port 10Gbe from the CPU and a lower end SATA HBA from PCIex4 from the chipset with software RAID. The 2200G could make a decent storage server with a decent B350 board. I could do more with 16 lanes, but 8 is still workable. It's far cheaper than running a Ryzen 1200 with a X370 board and a graphics card with the same amount of lanes available for IO use and a faster CPU.

Geranium - Monday, February 12, 2018 - link

8 PCIe Gen3 for gpu+4 Gen3 for SSD+4 Gen3 for Chipset.

andrewaggb - Monday, February 12, 2018 - link

What's with the gaming benchmarks... Is there a valid reason that no games were benchmarked at playable settings? I'm going to have to go to another site to find out if these can get 60ish fps on medium or low settings.... And I thought these were being pitched at esports... so some overwatch and dota numbers might have been appropriate.

AndrewJacksonZA - Monday, February 12, 2018 - link

"and can post 1920x1080 gaming results above 49 FPS in titles such as Battlefield One, Overwatch, Rocket League, and Skyrim, having 2x to 3x higher framerates than Intel’s integrated graphics. This is a claim we can confirm in this review."

"These games are a cross of mix of eSports and high-end titles, and to be honest, we have pushed the quality settings up higher than most people would expect for this level of integrated graphics: most benchmarks hit around 25-30 FPS average with the best IGP solutions, down to 1/3 this with the worst solutions. The best results show that integrated graphics are certainly capable with the right settings, but also shows that there is a long way between integrated graphics and a mid-range discrete graphics option."

I would love to see which settings BF1 would have 49FPS please. Is it with everything on low, medium?

Ian Cutress - Monday, February 12, 2018 - link

I've added some sentences to the IGP page while I'm on the road. We used our 1080 high/ultra CPU Gaming suite for two reasons.

Manch - Monday, February 12, 2018 - link

Which are?