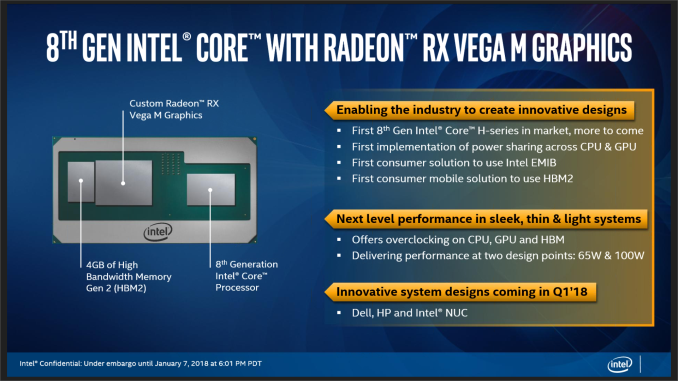

Intel Core with Radeon RX Vega M Graphics Launched: HP, Dell, and Intel NUC

by Ian Cutress on January 7, 2018 9:02 PM EST

Final Words

Does This Make Intel and AMD the Best of Frenemies?

It does seem odd, Intel and AMD working together in a supplier-buyer scenario. Previous interactions between the two have been adversarial at best, or have required calls to lawyers at the worst. So who benefits from this relationship?

AMD: A GPU That Someone Else Sells? Sure, How Many Do You Need?

Some users might point to AMD’s financials as a reason for this arrangement: in the event that Zen didn’t take off, this would have been a separate source of income for AMD. Ultimately AMD is looking healthier since Ryzen, and even if Intel did rock up with piles of money, it is unclear how much volume Intel would be requesting for a product of this scope.

Or some might state that this sort of product, if positioned correctly, would encroach into some of AMD’s markets, such as laptop APUs or laptop GPUs. My response to this is that it actually ends up a win for AMD: Intel is currently aiming at 65W/100W mobile devices, which is some way away from the Ryzen Mobile parts that should come into force during 2018. For every chip they sell to Intel, that’s a sale for them. It means that there are discrete-class graphics in a system that might have had an NVIDIA product in it instead. One potential avenue is that NVIDIA’s laptop GPU program is extensive: now with Intel at the helm driving the finished product rather than AMD, there is scope for AMD-based graphics to appear in many more devices than if they went alone. People trust Intel on this, and have done for years: if it is marketed as an Intel product, it’s a win for AMD.

Intel: What Does Intel Get Out Of This?

Intel’s internal graphics, known as ‘Gen’ graphics externally, has been third best behind NVIDIA and AMD for grunt. It had trouble competing against ARM’s Mali in the low power space, and the scaling of the design has not seemed to lend itself to large, 250W GPUs for desktops. If you have been following certain analysts that keep tabs on Intel’s graphics, you might have read about the woes and missed targets that have potentially happened behind closed doors every time there has been a node shrink. Even though Intel has competed with GT3/4 graphics with eDRAM in the past (known as the Crystalwell products), some of which performed well, they came at additional expense for the OEMs that used them.

So rather than scale Gen graphics out to something bigger, Intel worked with AMD to purchase Radeon Vega. It is unclear if Intel approached NVIDIA to try something similar, as NVIDIA is the bigger company, but AMD has a history of being required by one of Intel’s big OEM partners: Apple. AMD has also had a long term semi-custom silicon strategy in place, while NVIDIA does not advertise that part of their business as such.

What Intel gets is essentially a better version of their old Crystalwell products, albeit at a higher power consumption. The end product, Intel with Radeon RX Vega M Graphics, aims to offer an alternative to other solutions (namely Intel + NVIDIA MX150/GTX1050) but with reduced board space, allowing for thinner/lighter designs or designs with more battery. A cynic might suggest that either way, it was always going to be an Intel sale, so why bother going to the effort? One of the tracks of Intel’s notebook products in recent years is trying to convince users to upgrade more frequently: for the last couple of years, users who buy 2-in-1s were found to refresh their units quicker than clamshell devices. Intel is trying to do the same thing here with a slightly higher class of product. Whether the investment to create such a product is worth it will be borne out in sales numbers.

It's Not Completely Straightforward

One thing is clear though: the Intel spokespersons who gave us our briefing were trained very specifically to avoid mentioning AMD by name about this product line. Every time I had expected them to say ‘AMD Graphics’ in our pre-briefing, they all said ‘Radeon’. As far as the official line goes, the graphics chip was purchased from ‘Radeon’, not from AMD. I can certainly understand trying to stay on brand message, and avoiding the name from an x86 competitive standpoint, but this product fires a shot across the bow of NVIDIA, not AMD. Call a spade a spade.

Aside from the three devices that will be coming with the new processors, from HP, Dell, and the Intel NUC, one interesting side story came out of this. Intel has already had interest from a cloud gaming company for these new processors. In the same way that a massive GPU-based datacenter can offer many users cloud gaming services, these new chips are set to be in the datacenter for 1080p gaming at super high density, perhaps more so than current GPU solutions. An interesting thought.

Intel NUC Enthusiast 8: The Hades Canyon Platform

The HP and Dell units are set to be announced later this week during CES. For information about the Intel NUC, using the overclockable Core i7-8809G processor, Ganesh has the details in a separate news post.

66 Comments


haukionkannel - Monday, January 8, 2018 - link

This. Vega is very efficient at low clock rates!

Yojimbo - Sunday, January 7, 2018 - link

Where do they claim that it will beat the GTX 1050 in terms of power efficiency? They show some select benchmarks that imply a certain efficiency in those specific cases, but I didn't see that they mentioned general power efficiency or price at all.

This package from Intel does have HBM, which is more power efficient than GDDR5. That will help. But overall, my expectation is that Intel's new chip will be less efficient in graphics intensive tasks than a system with a latest generation discrete NVIDIA GPU. The dynamic tuning should help in cases where both CPU and GPU need to draw significant power, though.

We probably know how Vega performs. Assuming that the chips aren't TDP constrained, the more powerful of the two variants should probably perform somewhere between a 560 and 570 in games. The lesser variant should perform around a 560, less or more depending on how memory bandwidth plays into things. We'll have to see how power constraints factor into things though.

Another thing to keep in mind is that for most of its lifetime, this chip will probably be going up against NVIDIA's next generation of GPUs and not their current generation. Intel did benchmark it against a 950M, but I wouldn't put it past them to ignore price differences in a comparison they release. The new chips will probably be expensive enough that they will have to go up against the latest generation of their competitor's chips.

Kevin G - Monday, January 8, 2018 - link

This does leave room for Intel to produce a slimmer GT1 or even omit a GPU entirely for mobile when they know that it will be paired with a Radeon Vega on package. That'd permit Intel to decrease costs on their end, though this would be up to Intel to pass onward to OEMs.

nico_mach - Monday, January 8, 2018 - link

AMD wasn't good at efficiency mostly due to fabbing. That's easily fixable with a deep-pocketed and suddenly desperate partner like Intel.

artk2219 - Wednesday, January 10, 2018 - link

Vega is actually pretty efficient, just not when they try to chase high performance, then the power requirements jump exponentially in response to the higher clocks and voltage. Also, AMD has had the efficiency crown multiple times, just not recently. The Radeon 9700 pro, 9800 pro, 4850, 4870, 5850, 5870, 7790, 7950, and 7970 all say hello when compared to their Nvidia counterparts of the time.

jjj - Sunday, January 7, 2018 - link

Ask AMD for a die shot so we can count CUs lol

shabby - Sunday, January 7, 2018 - link

8th generation... kaby lake? Have i been sleeping under a rock?

evilpaul666 - Sunday, January 7, 2018 - link

Is there a difference between Skylake, Kaby Lake and Coffee Lake that I'm unaware of?

shabby - Sunday, January 7, 2018 - link

In mobile the only difference was the core count, it doubled when coffee lake was released, but this kaby lake has similar core counts for some reason.

extide - Sunday, January 7, 2018 - link

Yeah, for U/Y (and now G) series, 8th gen is 'Kaby Lake Refresh', not Coffee Lake.