HiSilicon Kirin 970 - Android SoC Power & Performance Overview

by Andrei Frumusanu on January 22, 2018 9:15 AM EST

Today I would say that there’s only two truly vertically integrated mobile OEMs who have full control over their silicon: Apple and Huawei – and of the two one could say Huawei is currently even more integrated due to in-house modem development. Huawei’s semiconductor division, HiSilicon, has over the last several years been the one company which seems to have managed what the others haven’t: break in into the high-end market with solutions that are competitive with the current leader in the business, Qualcomm.

I remember the Honor 6 with the newly branded (Previously not having any “halo” line-up name) Kirin 920 SoC as the first device with the company’s in-house SoC that we reviewed. These and the following generation the Kirin 930 suffered from immaturity with problems such as a very power hungry memory controller and very disappointing camera processing pipeline (ISP/DSP). The Kirin 950 was in my opinion a turning point for HiSilicon as the product truly impressed and improved the quality of the product, catching many eyes in the semiconductor industry, including myself in the resulting review of the Huawei Mate 8.

Over the last several years we’ve seen great amounts of consolidation in the mobile semiconductor industry. Companies such as Texas Instruments which were once key players no longer offer mobile SoC products in their catalogue. We’ve seen companies such as Nvidia try and repeatedly fail at carving out meaningful market-share. MediaTek has tried providing higher end SoCs with the Helio X line-up with rather little success to the point that the company has put on hold development in that segment to rather focus on higher margin parts in the P-series.

Meanwhile even Samsung LSI, while having a relatively good product with its flagship Exynos series, still has not managed to win over the trust of the conglomorate's own mobile division. Rather than using Exynos as an exclusive keystone component of the Galaxy series, Samsing has instead been dual-sourcing it along with Qualcomm’s Snapdragon SoCs. It’s therefore not hard to make the claim that producing competitive high-end SoCs and semiconductor components is a really hard business.

Last year’s Kirin 960 was a bit of a mixed bag: the SoC still delivered good improvements over the Kirin 950 however it was limited in terms of what it could achieve against competing flagship SoCs from Samsung and Qualcomm as they both had a process node advantage. Huawei's introduction of flagships with new generation of SoCs in the fourth quarter is more close to the release time-frame of Apple than the usual first quarter that we’ve come accustomed of Qualcomm and Samsung.

As such when pitting the Kirin versus Snapdragon and Exynos’s we’re looking at a product that’s more often than not late to the party in terms of introduction of new technologies such as process node and IP. The Kirin 970 fits this profile: as a 10nm Cortex-A73 generation-based SoC, it lagged behind Qualcomm and Samsung in terms of process node, yet being too early in its release to match up with ARM’s release schedule to be able to adopt DynamiQ and A75 and A55 based CPU cores for this cycle. That being said the Kirin 970 enjoys a few months with technical feature parity with the Snapdragon 835 and Exynos 8895 before we see new Snapdragon 845 and Exynos 9810 products later in the usual spring refresh cycle.

Nevertheless, the article today is a focus on the Kirin 970 and its improvements and also an opportunity to review the current state of SoCs powering Android devices.

| HiSilicon High-End Kirin SoC Lineup | |||

| SoC | Kirin 970 | Kirin 960 | Kirin 950/955 |

| CPU | 4x A73 @ 2.36 GHz 4x A53 @ 1.84 GHz |

4x A73 @ 2.36GHz 4x A53 @ 1.84GHz |

4x A72 @ 2.30/2.52GHz 4x A53 @ 1.81GHz |

| GPU | ARM Mali-G72MP12 746 MHz |

ARM Mali-G71MP8 1037MHz |

ARM Mali-T880MP4 900MHz |

| LPDDR4 Memory |

4x 16-bit CH LPDDR4 @ 1833 MHz 29.9GB/s |

4x 16-bit CH LPDDR4 @ 1866MHz 29.9GB/s |

2x 32-bit LPDDR4 @ 1333MHz 21.3GB/s |

| Interconnect | ARM CCI | ARM CCI-550 | ARM CCI-400 |

| Storage I/F | UFS 2.1 | UFS 2.1 | eMMC 5.0 |

| ISP/Camera | Dual 14-bit ISP | Dual 14-bit ISP (Improved) |

Dual 14-bit ISP 940MP/s |

| Encode/Decode | 2160p60 Decode 2160p30 Encode |

2160p30 HEVC & H.264 Decode & Encode 2160p60 HEVC Decode |

1080p H.264 Decode & Encode 2160p30 HEVC Decode |

| Integrated Modem | Kirin 970 Integrated LTE (Category 18/13) DL = 1200 Mbps 5x20MHz CA, 256-QAM UL = 150 Mbps 2x20MHz CA, 64-QAM |

Kirin 960 Integrated LTE (Category 12/13) DL = 600Mbps 4x20MHz CA, 64-QAM UL = 150Mbps 2x20MHz CA, 64-QAM |

Balong Integrated LTE (Category 6) DL = 300Mbps 2x20MHz CA, 64-QAM UL = 50Mbps 1x20MHz CA, 16-QAM |

| Sensor Hub | i7 | i6 | i5 |

| NPU | Yes | No | No |

| Mfc. Process | TSMC 10nm | TSMC 16nm FFC | TSMC 16nm FF+ |

The Kirin 970, isn't a major IP overhaul as it continues to use the same central processing unit IP from ARM that was used in the Kirin 960. The new SoC even doesn't improve the frequency of the CPU clusters as we still see the same 2.36GHz for the A73 cores and 1.84GHz for the A53 cores. When ARM originally launched the A73 we had seen optimistic targets of up to 2.8GHz on TSMC 10nm, but we seem to have largely missed that target, a sign of ever increasing difficulty to scale frequency in mobile SoCs as the diminishing returns from process node updates become worse and worse.

The Kirin 970 does bring a major overhaul and change in the GPU configuration as we see the first implementation of ARM’s Mali G72 in a 12-cluster configuration, a 50% increase in core count over the Kirin 960’s G71-MP8 setup. The new GPU is running at a much reduced frequency of 746MHz versus the 1033MHz of the Kirin 960. In Matt Humrick’s review of the Kirin 960 we saw some disastrous peak average power figures of the Mali G71 outright exploding the thermal envelope of the Mate 9, so hopefully the architectural improvements of the new G72 alongside a wider and lower clocked configuration in conjunction with the new process node will bring significant improvements over its predecessor.

The new modem in the Kirin 970 now implements 3GPP LTE Release 13 and supports downlink speeds of up to 1200Mbps thanks to up to 5x20MHz carrier aggregation with 256-QAM, making the new Kirin modem feature equivalent to Qualcomm’s X20 modem that’ll be integrated in the Snapdragon 845.

The big story surrounding the Kirin 970 was the inclusion of a dedicated neural processing unit. The NPU, as HiSilicon decided to name it, is part of a new type and generation of specialised dedicated acceleration blocks with the aim of offloading “inferencing” of convolutional neural net (CNNs). Many will have heard buzzwords such as artificial intelligence surrounding the topic, but the correct term is machine learning or deep learning. The hardware acceleration blocks with various names from various companies do not actually do any deep learning, but rather are there to improve execution (inferencing) of neural network models while the training of the models will still remain something that will be done either in the cloud or by other blocks in the SoC such as the GPU. It’s still the early days but we’ll have a proper look at the NPU in its dedicated section of the article.

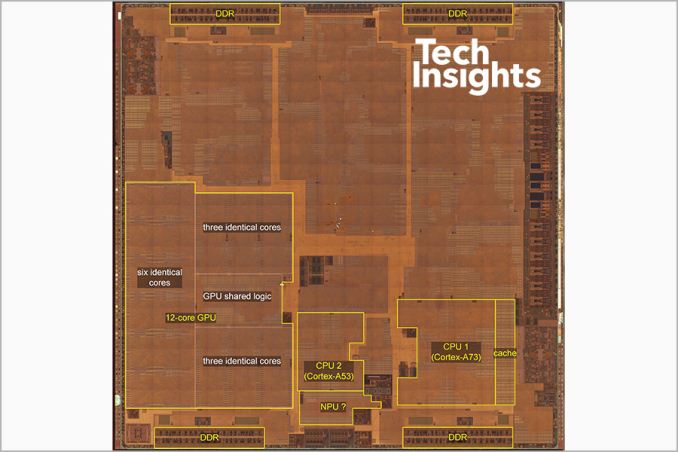

SoC die shot image & labels courtesy of TechInsights Mate 10 teardown

As aforementioned one of the bigger improvements of the Kirin 970 is the switch to a TSMC 10FF manufacturing node. While 10nm is supposed to be a long-lived node for Samsung's foundry – where indeed we’ll see two full generations of SoCs produced on 10LPE and 10LPP – TSMC is taking a different approach and sees its own 10FF process node a short-lived node and stepping-stone to the much anticipated 7FF node, which is to be introduced later in 2018. As such the only TSMC 10FF mobile products to date have been the low-volume MediaTek X30 and Apple 10X in summer and the high-volume Apple A11 and HiSilicon Kirin 970 in Q3-Q4, a 2-3 quarter after Samsung had entered high-volume production of the Snapdragon 835 and Exynos 8895.

HiSilicon’s expectations of the new process node are rather conservative improvement of only 20% in efficiency at the same performance point for the apples-to-apples CPU clusters, below ARM’s earlier predictions of 30%. This rather meagre improvement in power will be likely one of the reasons why HiSilicon decided not to increase the CPU clocks on the Kirin 970, instead focusing on bringing down power usage and lowering the TDP when compared to the Kirin 960.

The SoC does enjoy a healthy die size shrink from 117.72mm² down to 96.72mm² even though the new SoC has 50% more GPU cores as well as new IP blocks such as the NPU. Our colleagues at TechInsights have published a detailed per-block size comparison between the Kirin 960 and Kirin 970 and we see a 30-38% decrease in block size for apples-to-apples IP. The Cortex-A73 quad-core cluster now comes in at only 5.66mm², a metric to keep in mind and in stark contrast to Apple which is investing twice as much silicon area in its dual-core big CPU cluster.

116 Comments

View All Comments

StormyParis - Monday, January 22, 2018 - link

If the Modem IP is Huawei's one true in-house part, why didn't you at least test it alongside the CPU and GPU ? I'd assume in the real world, ti too has a large impact on batteyr and performance ?Ian Cutress - Monday, January 22, 2018 - link

The kit to properly test a modem power/attenuation to battery is around $50-100k. We did borrow one once, a few years ago, but it was only short-term loan. Not sure if/when we'll be able to do that testing again.juicytuna - Monday, January 22, 2018 - link

How does Mali have so many design wins? Why did Samsung switch from PowerVR to Mali? Cost savings? Politics? Because it clearly wasn't a descistion made on technical merit.lilmoe - Tuesday, January 23, 2018 - link

Because OEMs like Samsung are not stupid? And Mali is actually very power efficient and competitive?What are you basing your GPU decision on? Nothing in the articles provides evidence that Mali is less efficient than Adreno in UI acceleration or 60fps capped popular games (or even 60fps 1080p normalized T-Rex benchmark)...

Measuring the constant power draw of the GPU, which is supposed to be reached in vert short bursts, is absolutely meaningless.

lilmoe - Tuesday, January 23, 2018 - link

***Measuring the max (constant) power draw of the GPU, which is supposed to be reached in very short bursts during a workload, is absolutely meaningless.jospoortvliet - Saturday, January 27, 2018 - link

Your argument is half-way sensible for a CPU but not for a GPU.A GPU should not even HAVE a boost clock - there is no point in that for typical GPU workloads. Where a CPU is often active in bursts, a GPU has to sustain performance in games - normal UI work barely taxes it anyway.

So yes the max sustained performance and associated efficiency is ALL that matters. And MALI, at least in the implementations we have seen, is behind.

lilmoe - Sunday, January 28, 2018 - link

I think you're confusing fixed function processing with general purpose GPUs. Modern GPU clocks behave just like CPU cores, and yes, with bursts, just like NVidia's and AMD's. Not all scenes rendered in a game, for example, need the same GPU power, and not all games have the same GPU power needs.Yes, there is a certain performance envelope that most popular games target. That performance envelope/ target is definitely not SlingShot nor T-rex.

This is where Andrei's and your argument crumbles. You need to figure out that performance target and measure efficiency and power draw at that target. That's relatively easy to do; open up candy crush and asphalt 8 and measure in screen fps and power draw. That's how you measure efficiency on A SMARTPHONE SoC. Your problem is that you think people are using these SoCs like they would on a workstation. They don't. No one is going to render a 3dmax project on these phones, and there are no games that even saturate last year's flagship mobile gpu.

Not sure if you're not getting my simple and sensible point, or you're just being stubborn about it. Mobile SoC designed have argued for bursty gpu behavior for years. You guys need to get off your damn high horse and stop deluding yourself into thinking that you know better. What Apple or Qualcomm do isn't necessarily best, but it might be best for the gpu architecture THEY'RE using.

As for the CPU, you agree but Andrei insists on making the same mistake. You DON'T measure efficiency at max clocks. Again, max clocks are used in bursts and only for VERY short periods of time. You measure efficient by measuring the time it takes to complete a COMMON workload and the total power it consumes at that. Another hint, that common workload is NOT geekbench, and it sure as hell isn't SPEC.

lilmoe - Sunday, January 28, 2018 - link

The A75 is achieving higher performance mostly with higher clocks. The Exynos M3 is a wide core WITH higher clocks. Do you really believe these guys are idiots? You really think that's going to affect efficiency negatively? You think Android OEMs will make the same "mistake" Apple did and not provide adequate and sustainable power delivery?Laughable.

futrtrubl - Monday, January 22, 2018 - link

"The Kirin 970 in particular closes in on the efficiency of the Snapdragon 835, leapfrogging the Kirin 960 and Exynos SoCs."Except according to the chart right above it the 960 is still more efficient.

Andrei Frumusanu - Monday, January 22, 2018 - link

The efficiency axis is portrayed as energy (joules) per performance (test score). In this case the less energy used, the more efficient, meaning the shorter the bars, the better.