HiSilicon Kirin 970 - Android SoC Power & Performance Overview

by Andrei Frumusanu on January 22, 2018 9:15 AM EST

CPU Performance: SPEC2006

SPEC2006 has been a natural goal to aim for as a keystone analysis benchmark, as it’s a respected industry-standard benchmark that even silicon vendors use for architecture analysis and development. With SPEC2017 released last year, SPEC2006 is being officially retired on January 9th, a funny coincidence as we now finally start using it.

As Android SoCs improve in power efficiency and performance it’s now becoming more practical to use SPEC2006 on consumer smartphones. The main concerns of the past were memory usage for subtests such as MCF, but more importantly sheer test runtimes for battery powered devices. For a couple of weeks I’ve been busy porting SPEC2006 over to a custom Android application harness.

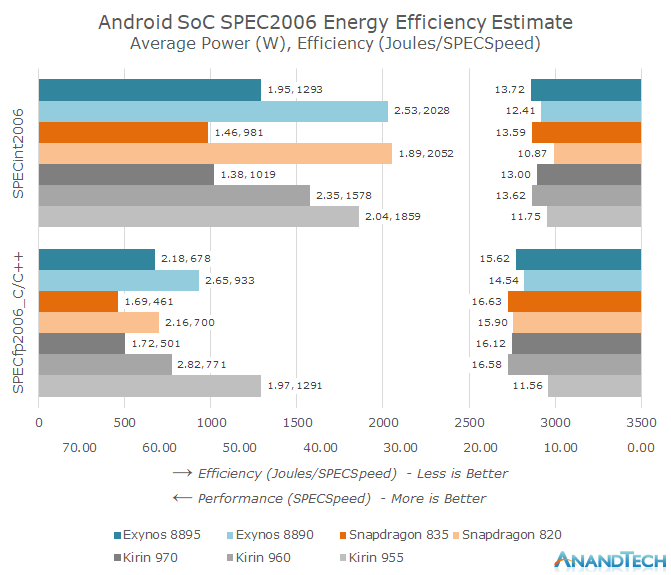

The results are quite remarkable as we see both the generational performance as well as efficiency improvements from the various Android SoC vendors. The Kirin 970 in particular closes in on the efficiency of the Snapdragon 835, leapfrogging the Kirin 960 and Exynos SoCs. We also see a non-improvement in absolute performance as the Kirin 970 showcases a slight performance degradation over the Kirin 960 – with all SoC vendors showing just meagre performance gains over the past generation.

Going Into The Details

Our new SPEC2006 harness is compiled using the official Android NDK. For this article the NDK version used is r16rc1, and Clang/LLVM was used as the compiler with just the -Ofast optimization flag (alongside applicable test portability flags). Clang was chosen over GCC because Google has deprecated GCC in the NDK toolchain and will be removing the compiler altogether in 2018, making it unlikely that we’ll revisit GCC results in the future. It should be noted that in my testing GCC 4.9 still produced faster code in some SPEC subtests when compared to Clang. Nevertheless, the choice of Clang should also facilitate better Androids-to-Apples comparisons in the future. While there are arguments that SPEC scores should be published with the best compiler flags for each architecture, I wanted a more apples-to-apples approach using identical binaries (which is also what we expect to see distributed among real applications). As such, for this article I’ve chosen to pass the -mcpu=cortex-a53 flag to the compiler, as it gave the best average overall score among all tested CPUs. The only exception was the Exynos M2, which profited from an additional 14% performance boost in perlbench when compiled with its corresponding CPU architecture target flag.
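
To make the identical-binaries approach concrete, here is a minimal sketch of how one compiler invocation could be assembled once and reused for every device. Only -Ofast and -mcpu=cortex-a53 come from the text above; the function name, the target triple with its API level, and the file names are assumptions made for illustration and are not the actual harness build script.

```python
def build_clang_args(source_files, output, cpu="cortex-a53", portability_flags=()):
    """Assemble one Clang command line that is shared by all tested devices.

    The target triple below is an assumption (64-bit Android, API level 21);
    the article does not state which one was used.
    """
    return [
        "clang",
        "--target=aarch64-linux-android21",  # assumed target, not from the article
        "-Ofast",                            # the only optimization flag used
        f"-mcpu={cpu}",                      # same CPU tuning flag for every SoC
        *portability_flags,                  # per-subtest portability defines, if any
        *source_files,
        "-o",
        output,
    ]


# Hypothetical usage: the same argument list is used regardless of the SoC under test.
args = build_clang_args(["subtest.c"], "subtest_bin")
print(" ".join(args))
```

Building once with a fixed flag set means any score difference between devices comes from the hardware and not from per-device compiler tuning, at the cost of leaving some performance on the table, as with the Exynos M2 in perlbench.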

As the following SPEC scores are not submitted to the SPEC website we have to disclose that they represent only estimated values and thus are not officially validated submissions.
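
For readers unfamiliar with how a SPEC CPU2006 score is formed: each subtest yields a ratio of a fixed reference runtime to the measured runtime, and the suite score is the geometric mean of those ratios. The sketch below illustrates that calculation. The subtest names and runtimes are hypothetical placeholders, not measurements from this article or official SPEC reference times.

```python
from math import prod


def spec_ratio(reference_seconds, measured_seconds):
    """Per-subtest ratio: reference runtime divided by measured runtime."""
    if measured_seconds <= 0:
        raise ValueError("measured runtime must be positive")
    return reference_seconds / measured_seconds


def geometric_mean(values):
    """Geometric mean, used to combine per-subtest ratios into one score."""
    values = list(values)
    if not values:
        raise ValueError("need at least one ratio")
    return prod(values) ** (1.0 / len(values))


# Hypothetical (reference, measured) runtimes in seconds; placeholder values only.
example_runs = {
    "subtest_a": (9000.0, 600.0),
    "subtest_b": (8000.0, 1000.0),
}

ratios = {name: spec_ratio(ref, meas) for name, (ref, meas) in example_runs.items()}
estimated_score = geometric_mean(ratios.values())
print(ratios, round(estimated_score, 2))
```

Because the geometric mean is used, a large gain in a single subtest moves the overall score less than it would with an arithmetic mean.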

Alongside the full CINT2006 suite we are also running the C/C++ subtests of CFP2006. Unfortunately 10 out of the 17 tests in the CFP2006 suite are written in Fortran: these can only be compiled with difficulty using GCC on Android, and the NDK's Clang lacks a Fortran front-end.

As an overview of the subtests we run, here are the application areas and descriptions as listed on the official SPEC website:

SPEC2006 C/C++ Benchmarks

| Suite | Benchmark | Application Area | Description |
|---|---|---|---|
| SPECint2006 (Complete Suite) | 400.perlbench | Programming Language | Derived from Perl V5.8.7. The workload includes SpamAssassin, MHonArc (an email indexer), and specdiff (SPEC's tool that checks benchmark outputs). |
| SPECint2006 (Complete Suite) | 401.bzip2 | Compression | Julian Seward's bzip2 version 1.0.3, modified to do most work in memory, rather than doing I/O. |
| SPECint2006 (Complete Suite) | 403.gcc | C Compiler | Based on gcc Version 3.2, generates code for Opteron. |
| SPECint2006 (Complete Suite) | 429.mcf | Combinatorial Optimization | Vehicle scheduling. Uses a network simplex algorithm (which is also used in commercial products) to schedule public transport. |
| SPECint2006 (Complete Suite) | 445.gobmk | Artificial Intelligence: Go | Plays the game of Go, a simply described but deeply complex game. |
| SPECint2006 (Complete Suite) | 456.hmmer | Search Gene Sequence | Protein sequence analysis using profile hidden Markov models (profile HMMs) |
| SPECint2006 (Complete Suite) | 458.sjeng | Artificial Intelligence: chess | A highly-ranked chess program that also plays several chess variants. |
| SPECint2006 (Complete Suite) | 462.libquantum | Physics / Quantum Computing | Simulates a quantum computer, running Shor's polynomial-time factorization algorithm. |
| SPECint2006 (Complete Suite) | 464.h264ref | Video Compression | A reference implementation of H.264/AVC, encodes a videostream using 2 parameter sets. The H.264/AVC standard is expected to replace MPEG2 |
| SPECint2006 (Complete Suite) | 471.omnetpp | Discrete Event Simulation | Uses the OMNet++ discrete event simulator to model a large Ethernet campus network. |
| SPECint2006 (Complete Suite) | 473.astar | Path-finding Algorithms | Pathfinding library for 2D maps, including the well known A* algorithm. |
| SPECint2006 (Complete Suite) | 483.xalancbmk | XML Processing | A modified version of Xalan-C++, which transforms XML documents to other document types. |
| SPECfp2006 (C/C++ Subtests) | 433.milc | Physics / Quantum Chromodynamics | A gauge field generating program for lattice gauge theory programs with dynamical quarks. |
| SPECfp2006 (C/C++ Subtests) | 444.namd | Biology / Molecular Dynamics | Simulates large biomolecular systems. The test case has 92,224 atoms of apolipoprotein A-I. |
| SPECfp2006 (C/C++ Subtests) | 447.dealII | Finite Element Analysis | deal.II is a C++ program library targeted at adaptive finite elements and error estimation. The testcase solves a Helmholtz-type equation with non-constant coefficients. |
| SPECfp2006 (C/C++ Subtests) | 450.soplex | Linear Programming, Optimization | Solves a linear program using a simplex algorithm and sparse linear algebra. Test cases include railroad planning and military airlift models. |
| SPECfp2006 (C/C++ Subtests) | 453.povray | Image Ray-tracing | Image rendering. The testcase is a 1280x1024 anti-aliased image of a landscape with some abstract objects with textures using a Perlin noise function. |
| SPECfp2006 (C/C++ Subtests) | 470.lbm | Fluid Dynamics | Implements the "Lattice-Boltzmann Method" to simulate incompressible fluids in 3D |
| SPECfp2006 (C/C++ Subtests) | 482.sphinx3 | Speech recognition | A widely-known speech recognition system from Carnegie Mellon University |

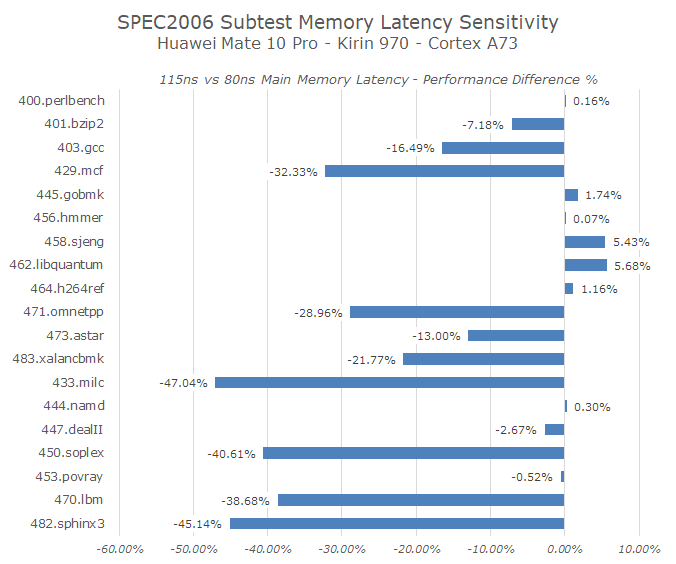

It’s important to note one extremely distinguishing aspect of SPEC CPU versus other CPU benchmarks such as GeekBench: it’s not just a CPU benchmark, but rather a system benchmark. While benchmarks such as GeekBench serve as a good quick view of basic workloads, the vastly greater workload and codebase size of SPEC CPU stresses the memory subsystem to a much greater degree. To demonstrate this we can see the individual subtest performance differences when solely limiting the memory controller frequency, in this case on the Mate 10 Pro with the Kirin 970.

An increase in main memory latency from just 80ns to 115ns (Random access within access window) can have dramatic effects on many of the more memory access sensitive tests in SPEC CPU. Meanwhile the same handicap essentially has no effect on the GeekBench 4 single-threaded scores and only marginal effect on some subtests of the multi-threaded scores.

In general the benchmarks can be grouped into three categories: memory-bound, balanced between memory and execution, and execution-bound. From the memory latency sensitivity chart it’s relatively easy to find out which benchmarks belong to which category based on the performance degradation. The worst memory-bound benchmarks include the infamous 429.mcf, but alongside it we also see 433.milc, 450.soplex, 470.lbm and 482.sphinx3. The least affected are 400.perlbench, 445.gobmk, 456.hmmer, 464.h264ref, 444.namd and 453.povray, with 458.sjeng and 462.libquantum even slightly increasing in performance, which points to very saturated execution units. The remaining benchmarks are more balanced and see a reduced impact on performance. Of course this is an oversimplification and the results will differ between architectures and platforms, but it gives us a solid hint in terms of separation between execution and memory-access bound tests.
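
The three-way grouping can be sketched as a simple threshold rule on the performance lost when memory latency is raised. The thresholds and the percentage values below are illustrative assumptions only. The article derives its grouping from the chart by eye and does not publish cut-off values, and the numbers here are not read from that chart.

```python
def classify_by_sensitivity(degradation_pct, memory_bound_at=15.0, execution_bound_at=3.0):
    """Group subtests by the performance lost (%) under higher memory latency.

    Both thresholds are hypothetical; a negative loss means the subtest got faster.
    """
    groups = {"memory-bound": [], "balanced": [], "execution-bound": []}
    for name, loss in sorted(degradation_pct.items()):
        if loss >= memory_bound_at:
            groups["memory-bound"].append(name)
        elif loss <= execution_bound_at:
            groups["execution-bound"].append(name)
        else:
            groups["balanced"].append(name)
    return groups


# Placeholder losses in percent, chosen only to exercise each branch.
example_losses = {"429.mcf": 25.0, "403.gcc": 8.0, "458.sjeng": -1.0}
print(classify_by_sensitivity(example_losses))
```

A fixed threshold is as much an oversimplification as the grouping itself, so the cut-offs would need to be revisited for each architecture and platform.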

As well as tracking performance (SPECspeed) I also included a power tracking mechanism that relies on the device’s fuel-gauge for current measurements. The values published here represent only the active power of the platform, meaning idle power is subtracted from total absolute load power during the workloads to compensate for platform components such as the display. Again I have to emphasize that the power and energy figures don't just represent the CPU, but the SoC system as a whole, including interconnects, memory controllers, DRAM, and PMIC overhead.
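
The active-power bookkeeping described above amounts to a subtraction followed by a multiplication with runtime. Here is a minimal sketch, assuming the fuel-gauge yields paired current and voltage samples. The sample format, function names and all numbers are hypothetical and are not taken from the actual harness.

```python
from statistics import fmean


def average_power_w(samples):
    """Mean power in watts from (current_amps, voltage_volts) samples."""
    return fmean(current * voltage for current, voltage in samples)


def active_power_w(load_power_w, idle_power_w):
    """Active power: total load power minus the idle platform power."""
    return load_power_w - idle_power_w


def energy_joules(power_w, runtime_s):
    """Energy used by a subtest: average active power times its runtime."""
    return power_w * runtime_s


# Placeholder fuel-gauge samples as (amps, volts); not measured data.
idle_samples = [(0.25, 4.0), (0.25, 4.0)]
load_samples = [(0.75, 4.0), (0.85, 4.0)]

idle_w = average_power_w(idle_samples)
load_w = average_power_w(load_samples)
active_w = active_power_w(load_w, idle_w)
energy_j = energy_joules(active_w, runtime_s=100.0)
print(idle_w, load_w, active_w, energy_j)
```

Subtracting idle power removes roughly constant consumers such as the display, but whatever remains still covers the whole platform under load and not the CPU cores alone.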

Alongside the current generation SoCs I also included a few predecessors to be able to track the progress that has happened over the last 2 years in the Android space and over CPU microarchitecture generations. Because the runtime of all benchmarks is in excess of 5 hours for the fastest devices we are actively cooling the phones with an external fan to ensure consistent DVFS frequencies across all of the subtests and that we don’t favour the early tests.

116 Comments


HStewart - Monday, January 22, 2018 - link

One thing I would not mind Windows for ARM - if had the following

1. Cheaper than current products - 300-400 range

2. No need for x86 emulation - not need on such product - it would be good for Microsoft Office, email and internet machine. But not PC apps

StormyParis - Monday, January 22, 2018 - link

But then why do you need Windows to do that? Android, iOS and Chrome already do it, with a lot more other apps.

PeachNCream - Monday, January 22, 2018 - link

It's too early in the Win10 on ARM product life cycle to call the entire thing a failure. I agree that it's possible we'll be calling it failed eventually, but the problems aren't solely limited to the CPU of choice. Right now, Win10 ARM platforms are priced too high (personal opinion) and _might_ be too slow doing the behind-the-scenes magic necessary to run x86 applications. Offering a lot more battery life, which Win10 on ARM does, isn't enough of a selling point to entirely offset the pricing and limitations. While I'd like to get 22 hours of battery life doing useful work with wireless active out of my laptops, it's more off mains time than I can realistically use in a day so I'm okay with a lower priced system with shorter life (~5 hours) since I use my phone for multi-day, super light computing tasks already. That doesn't mean everyone feels that way so let's wait and see before getting out the hammer and nails for that coffin.

jjj - Monday, January 22, 2018 - link

The CPU is the reason for the high price, SD835 comes at a high premium and LTE adds to it. That's why those machines are not competitive in price with Atom based machines.

Use a 25$ SoC and no LTE and Windows on ARM becomes viable with an even longer battery life.

PeachNCream - Monday, January 22, 2018 - link

I didn't realize the 835 accounted for so much of the BOM on those ARM laptops. Since Intel's tray pricing for their low end chips isn't exactly cheap (not factoring in OEM/volume discounts), it didn't strike me as a significant hurdle. I'd thought most of the price was due to low production volume and attempts to make the first generation's build quality attractive enough to have a ripple effect on subsequently cheaper models.

tuxRoller - Monday, January 22, 2018 - link

I'm not sure they do. A search indicated that in 2014 the average price of a Qualcomm solution for a platform was $24. The speculation was that the high-end socs were sold in the high $30s to low $40s.

https://www.google.com/amp/s/www.fool.com/amp/inve...

jjj - Monday, January 22, 2018 - link

It's likely more like 50-60$ for the hardware and 15$ for licensing for a 700$ laptop - although that includes only licenses to Qualcomm and they are not the only ones getting paid. Even a very optimistic estimate can't go lower than 70$ total and that's a large premium vs my suggestion of a 25$ SoC with no LTE.

An 8 cores A53 might go below 10$, something like Helio X20 was around 20$ at its time, one would assume that SD670 will be 25-35$, depending on how competitive Mediatek is with P70.

jjj - Monday, January 22, 2018 - link

Some estimates will go much higher though (look at LTE enabling components too, not just SoC for the S8). http://www.techinsights.com/about-techinsights/ove...

Don't think costs are quite that high but they are supposed to know better.

tuxRoller - Monday, January 22, 2018 - link

That's way higher than I've seen.

http://mms.businesswire.com/media/20170420006675/e...

Now, that's for the exynos 8895, but I imagine prices are similar for Snapdragon.

Regardless, these are all estimates. I'm not aware of anyone who actually knows the real prices of these (including licenses) who has come out and told us.

jjj - Monday, January 22, 2018 - link

On licensing you can take a look at the newest 2 pdfs here https://www.qualcomm.com/invention/licensing

Those are in line with the China agreement they have at 3.5% and 5% out of 65% of the retail value. There would be likely discounts for exclusivity and so on. So, assuming multimode, licensing would be 22.75$ for a 700$ laptop, before any discounts (if any) BUT that's only to Qualcomm and not others like Nokia, Huawei, Samsung, Ericsson and whoever else might try to milk this.

As for SoC, here's IHS for a SD835 phone https://technology.ihs.com/584911/google-pixel-xl-...