HiSilicon Kirin 970 - Android SoC Power & Performance Overview

by Andrei Frumusanu on January 22, 2018 9:15 AM EST

An Introduction to Neural Network Processing

AI is currently the big buzzword when talking about consumer electronics. While marketing departments all over are trying to embrace the term, when we’re talking about the current use of AI in computing terms we’re specifically talking about machine learning. More precisely, when talking about the latest generations of silicon IPs, we’re talking about the implementation of specialized hardware blocks which are optimized to run convolutional neural networks (CNNs).

While explaining how convolutional neural networks work in detail is far beyond the scope of this piece, they have been a research topic since the 1980s. The idea is to try to simulate the behaviour of the human brain’s neurons. The keyword here again is simulation; no, the various hardware implementations of neural network IPs do not mimic the structure of the human brain. While the field of neural networks has been around in academia for a long time, it’s only been in the last decade, with the introduction of software implementations that are able to run on GPUs, that things have literally accelerated to become a lot more interesting. Via breakthroughs over the last half-decade, we’ve seen researchers iterate and develop CNN models that improve in terms of accuracy and efficiency.

Looking under the hood, it turns out that CNNs map pretty well to highly threaded execution models. The work itself has minimal branching or other "complex" behavior that requires a general purpose processor (CPU), and instead can typically be broken up into discrete, semi-independent threads. Furthermore the required computational accuracy is not all that high – running fully developed networks can be done via low-precision integers in some cases – again simplifying the scope of the problem. As a result, CNN research & development hit its stride earlier this decade when GPUs began shipping with the necessary compute features and the overall performance to resolve complex CNN execution in a reasonable-by-human-standards timeframe.
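To make the point about parallelism concrete, here is a minimal sketch of the core CNN operation in plain Python. The function name `conv2d` and the toy image and kernel are made up for illustration and are not taken from any particular framework. Note that the inner multiply-accumulate loop contains no data-dependent branching, and that every output value is computed from its own input window only, so each one could in principle be handed to a separate thread.

```python
def conv2d(image, kernel):
    """Naive 'valid' 2D convolution (strictly a cross-correlation, as used in CNNs)."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    out = [[0] * out_w for _ in range(out_h)]
    for y in range(out_h):
        for x in range(out_w):
            # Each output value depends only on its own input window,
            # so all (y, x) positions are independent of one another.
            acc = 0
            for i in range(kh):
                for j in range(kw):
                    acc += image[y + i][x + j] * kernel[i][j]
            out[y][x] = acc
    return out


# Toy 3x4 input and 2x2 kernel, chosen only for illustration.
image = [
    [1, 2, 3, 0],
    [4, 5, 6, 0],
    [7, 8, 9, 0],
]
kernel = [
    [1, 0],
    [0, -1],
]

result = conv2d(image, kernel)
print(result)  # [[-4, -4, 3], [-4, -4, 6]]
```

A GPU or dedicated neural network block essentially runs the two outer loops in parallel rather than one position after another.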

Of course, while GPUs have been the best adapted to running them, GPUs are not the only kind of highly parallel processor out there. As the field evolves and companies look to commercialize neural networks in actual use-cases, we have seen a need for much higher performance as well as consideration for power efficiency. At this point we started seeing the move towards more specialized processing units whose architecture is built with machine learning in mind. Google was the first to announce such hardware with the TPU back in 2016. More specialized hardware loses some flexibility, but in turn it gains power and area (die space) efficiency by only including the hardware and features necessary for the task.

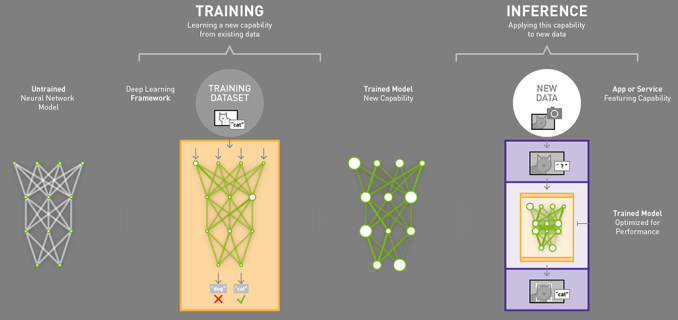

There are two key aspects to actually running NN workloads: first you have to have a trained model which contains the actual information that describes the data that the model is later meant to be run on. The training of models is rather processor intensive – not only is it a lot of work to begin with, but it has to be done with greater levels of precision than the execution of those models, which is to say that efficient neural network training requires more powerful and complex hardware than executing neural networks. Consequently, the idea is that the bulk of models will be trained by high performance hardware, such as server-class GPUs and specialized hardware such as Google’s TPUs on servers in the cloud.

The second aspect of NN is the execution of the models: taking the completed models, feeding them new data, and generating results based on what the model perceives. The execution of a neural network model with input data to get an output result is called inferencing. And not unlike the conceptual differences between training and inferencing, the compute requirements for inferencing are quite a bit different as well. The name of the game is still highly parallel compute, but it can be done with lower precision computations and the overall amount of performance required for timely execution is lower as well. This means that inference can be done on cheaper hardware in many more locations and scenarios.
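As a rough illustration of what "lower precision" means for inferencing, the sketch below quantizes a set of trained weights and an input vector to 8-bit integers, runs the multiply-accumulate in integer arithmetic, and scales the result back. This assumes a simple symmetric per-tensor scheme, which is only one of several approaches in use; the helper names `quantize` and `int8_dot` and the example values are hypothetical.

```python
def quantize(values, scale):
    """Symmetric linear quantization of floats to the int8 range [-127, 127]."""
    return [max(-127, min(127, round(v / scale))) for v in values]


def int8_dot(qa, qb):
    """Integer multiply-accumulate, the operation low-precision hardware speeds up."""
    return sum(a * b for a, b in zip(qa, qb))


# Example values chosen only for illustration.
weights = [0.5, -0.25, 0.75, 1.0]   # stand-in for trained parameters
inputs = [1.0, 2.0, -1.0, 0.5]      # stand-in for new data fed to the model

# One scale factor per tensor, so the largest magnitude maps to 127.
w_scale = max(abs(w) for w in weights) / 127
x_scale = max(abs(x) for x in inputs) / 127

qw = quantize(weights, w_scale)
qx = quantize(inputs, x_scale)

float_result = sum(w * x for w, x in zip(weights, inputs))
quant_result = int8_dot(qw, qx) * w_scale * x_scale  # rescale back to real units

print(float_result, quant_result)
```

The two results differ only by a small rounding error, which is why a fully trained network can often tolerate this kind of precision reduction while training generally cannot.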

Graphic source: Nvidia Blog

This in turn has caused the industry to move towards inferencing on edge devices (consumer devices) because it’s much more performant and power efficient. If you have your trained model on your device locally you can just use the processing power of the device to run the inference and avoid having to upload data to the cloud and have a server do it. This alleviates issues such as latency, bandwidth, and power consumption, and it also eliminates privacy concerns as the input data never leaves your device.

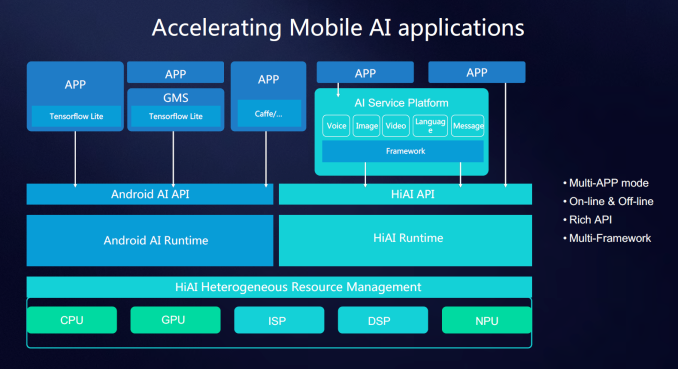

With the goal of running neural network inferencing locally on an edge device, we have the choice of running the implementation on various different processing blocks on devices such as a smartphone. CPUs, GPUs and even DSPs are all able to run inferencing tasks; however, there are vast efficiency differences between them. General purpose CPUs are the least suited for the task as they are not designed with massively parallelised execution in mind. GPUs and DSPs are much better choices but even then there’s much room for improvement. It is here where we see a new class of processing accelerator like the NPU on the Kirin 970.

As these IP blocks are still new, the industry hasn’t had time to agree on a common nomenclature. HiSilicon/Huawei have coined the term NPU/neural processing unit while Apple publicly uses NE/neural engine. Other IP providers such as Cadence/Tensilica just outright call their implementation a neural network DSP (Vision C5), Imagination Technologies (Series 2NX) uses the term NNA/neural network accelerator and CEVA’s NeuPro settled on the marketing friendly “AI processor”. For the sake of simplicity I’ll just continue to refer to them as neural network IPs.

In the case of the Kirin 970 the NPU is provided by a new Chinese IP provider called Cambricon. The Kirin 970 NPU however isn’t a straight off-the-shelf offering but rather a co-development between Cambricon and HiSilicon optimized to HiSilicon’s requirements. Huawei quotes 2 TeraOPS FP16 performance on the IP, however this metric is misleading as the performance figure quotes sparse equivalent peak data, meaning the 8-bit quantized throughput. At this point in time we should largely shy away from theoretical performance figures of the neural network IPs, as they don’t necessarily correlate to actual performance and there are less well understood architectural characteristics of the IPs that can play larger roles in the resulting end-performance.

The first hurdle to using a neural network on a hardware block other than the CPU is to make use of the proper APIs to access that block. The SoC and IP vendors all currently ship proprietary APIs and SDKs to enable application development for using hardware acceleration for neural networks. In the case of HiSilicon they offer the HiAI API which can manage the workloads between CPU, GPU and NPU. The API is currently not publicly available as it’s still under development, but developers who reach out to HiSilicon can get early access before the public release later in the year. Vendors such as Qualcomm make available the SNPE (Snapdragon Neural Processing Engine) SDK which does the equivalent task of enabling app developers to tap into resources of the GPU and DSP for neural network processing workloads. Other IP vendors of course have their own SDKs for their respective IPs.

However, vendor-specific APIs may end up being a temporary quirk of the present time; the goal in the future is to have a common universal API alongside the respective vendor’s IPs. Google has already been working on this and the NN API introduced in Android 8.1 is already actively shipping on Pixel 2 devices. One note that I’ve been made aware of is that currently the NN API only supports a subset of the features that are available to IPs like the NPU, so for developers to take full advantage of the hardware and extract maximum performance, Huawei still sees application developers targeting the various proprietary APIs while using the NN API as a fall-back method.
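The "proprietary first, common API as fall-back" arrangement described above could be structured along the following lines. This is purely a sketch of the dispatch pattern: the runner functions, the backend names and the `BackendUnavailable` exception are hypothetical stand-ins, and none of them correspond to actual HiAI, SNPE or Android NN API calls.

```python
class BackendUnavailable(Exception):
    """Raised by a (hypothetical) backend that cannot run on the current device."""


def run_with_fallback(backends, model, inputs):
    """Try each (name, runner) pair in order and return the first that succeeds."""
    for name, runner in backends:
        try:
            return name, runner(model, inputs)
        except BackendUnavailable:
            continue  # fall through to the next, more generic backend
    raise RuntimeError("no inference backend available")


# Hypothetical stand-ins for a vendor SDK, a common API and plain CPU execution.
def vendor_sdk_runner(model, inputs):
    # Pretend the vendor SDK is not present on this device.
    raise BackendUnavailable("vendor SDK not present")


def common_api_runner(model, inputs):
    return [model(x) for x in inputs]


def cpu_runner(model, inputs):
    return [model(x) for x in inputs]


backends = [
    ("vendor-sdk", vendor_sdk_runner),     # most capable, least portable
    ("common-nn-api", common_api_runner),  # portable subset of features
    ("cpu", cpu_runner),                   # always available, least efficient
]

# A trivial callable stands in for a real trained model.
used, outputs = run_with_fallback(backends, lambda x: 2 * x, [1, 2, 3])
print(used, outputs)  # common-nn-api [2, 4, 6]
```

Ordering the list from most specific to most generic means an application picks up the best acceleration a device offers without needing a separate code path per vendor.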

116 Comments

Ratman6161 - Wednesday, January 24, 2018 - link

Personally I think Samsung is in a great position...whether you consider them "truly vertically integrated" or not. One thing to remember is that most often, Samsung flagship devices come in two variants. It's mostly in the US where we get the Qualcomm variants while elsewhere tends to get Exynos. The dual source is a great arrangement because every once in a while Qualcomm is going to turn out something problematic like the Snapdragon 810. When that happens Samsung has the option to use its own which is what they did with the Galaxy S6/Note 5 generation which was Exynos only.

Another point is: what do you consider "truly vertically integrated"? The story cites Apple and Huawei but they don't actually manufacture their SOC's and neither does Qualcomm. I believe the Kirin SOC's are actually manufactured by TSMC while Apple and Qualcomm SOC's have at various times been actually manufactured in Samsung FABs. As far as I know, Samsung is the only company that even has the capability to design and also manufacture their own SOC. So in a way, you could say that my Samsung Note 5 is about the most vertically integrated phone there is, along with non-US versions of the S7 and S8 generations. In those cases you have a Samsung SOC manufactured in a Samsung FAB in a Samsung phone with a Samsung screen etc. Don't make the mistake of thinking the whole world is just like us...they aren't. Also many of the screens for other brands are also of Samsung manufacture so you have to keep in mind that there is a lot more to the device than the SOC.

fred666 - Monday, January 22, 2018 - link

Huawei only uses HiSilicon SoCs? Nothing from Qualcomm?

Andrei Frumusanu - Monday, January 22, 2018 - link

They've used Qualcomm chip-sets and still do use them in segments they can't fill with their own SoCs.

niva - Monday, January 22, 2018 - link

So they still use QC chips, but unlike them, Samsung isn't vertically integrated because they use QC chips.

Get out of here.

Dr. Swag - Monday, January 22, 2018 - link

His point is Huawei only uses non-HiSilicon chips in price segments that they do not have SoCs for. Samsung, however, does sometimes use QC silicon even if they have SoCs that can fill that segment (e.g. Samsung uses the Snapdragon 835s even though they have the 8895).

I'm not saying that I agree with Andrei's view, but there is a difference.

niva - Tuesday, January 23, 2018 - link

I completely disagree with the assessment that Samsung is somehow not "as vertically integrated" as Huawei. Samsung is not just vertically integrated, it produces components for many other key players in the market. They have reasons why they CHOOSE not to use their SOCs in specific markets and areas. Some of the rationale behind those choices may be questioned, but it's a choice. I too think that the world would be a better place if they actually put their own chip designs into their phones and directly competed against Qualcomm. That of course might be the end of Qualcomm and a whole lot of other companies... Samsung can easily turn into a monopoly that suffocates the entire market, so it's not just vertical, but horizontal integration. What Huawei has accomplished in short order is impressive, but isn't Huawei just another branch of the Chinese government at this point? Sure yeah, their country is more vertically integrated. Maybe that's the line to take to justify the statement...

levizx - Monday, February 26, 2018 - link

No, it's not INTEGRATED because it doesn't prefer its own over outsourcing. Samsung's Mobile department runs separately from its Semiconductor department, which acts as a contractor no different than Qualcomm.

As for Huawei being a branch of the Chinese government, it's as true as Google being part of the US government. Stop spruiking conspiracy theories. I know for a fact their employees almost fully own the company.

KarlKastor - Thursday, January 25, 2018 - link

Well, that's not true. Huawei chose the Snapdragon 625 in the Nova. Why not use their own Kirin 600 Series? It is the same market segment.

Samsung only opts for Snapdragon where they have no SoCs of their own: all regions with CDMA2000 networks.

In all other regions, Europe for example, they ship all smartphones from the J- and A-Series to the S-Series and Note with their Exynos SoCs.

yslee - Tuesday, January 30, 2018 - link

You keep on repeating that line, but where I am we have no CDMA2000 networks and still get Snapdragon Samsungs.

levizx - Monday, February 26, 2018 - link

That's also not true, Samsung uses Snapdragon where there's no CDMA2000 as well. Huawei used to use VIA's 55nm CBP8.2D over Snapdragon.

Mid-tier is not so indicative compared to higher end devices when it comes to, well, everything. They may even outsource the ENTIRE DESIGN to a third party, and it still proves nothing in particular. They might have chosen the S625 because of supply issues, which is completely reasonable. The same cannot be applied to Samsung, since there's no such thing as supply issues when it comes to Exynos and Snapdragon.