The NVIDIA Titan V Preview - Titanomachy: War of the Titans

by Ryan Smith & Nate Oh on December 20, 2017 11:30 AM EST

Gaming Performance

Sure, compute is useful. But be honest: you came here for the 4K gaming benchmarks, right?

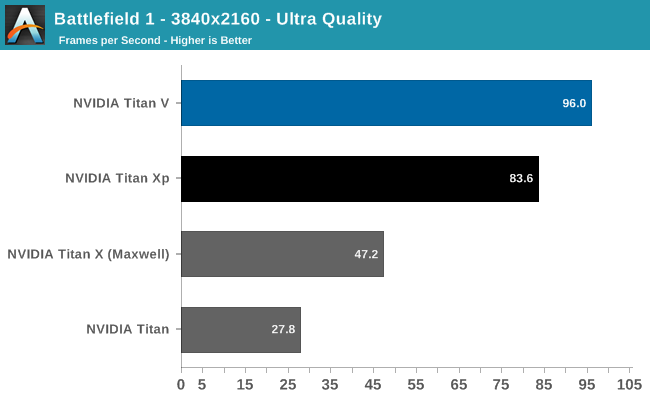

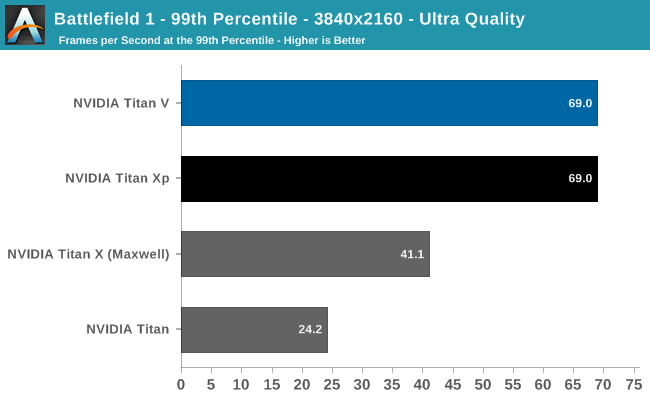

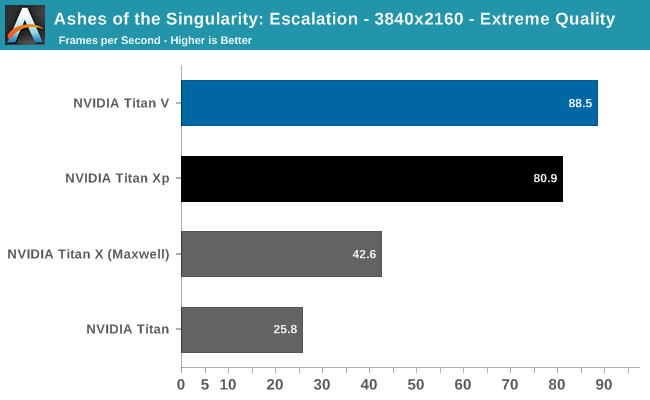

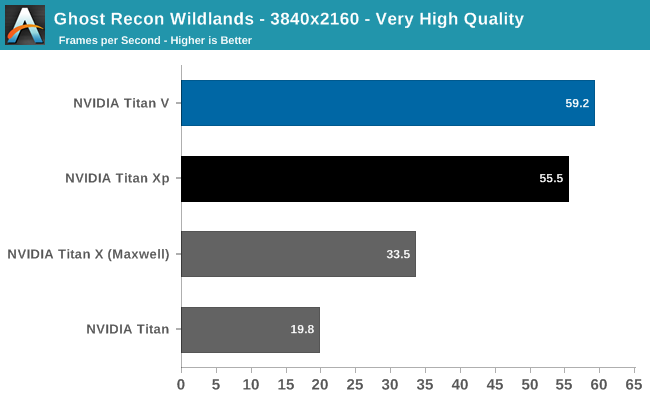

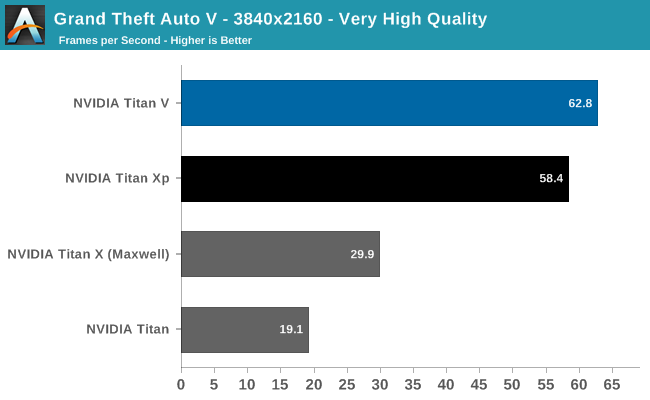

After just Battlefield 1 (DX11) and Ashes (DX12), we can already see that Titan V is not a monster gaming card, though it is still faster than Titan Xp. This is not unexpected: unlike previous Titan cards, Titan V's focus is quite far from gaming.

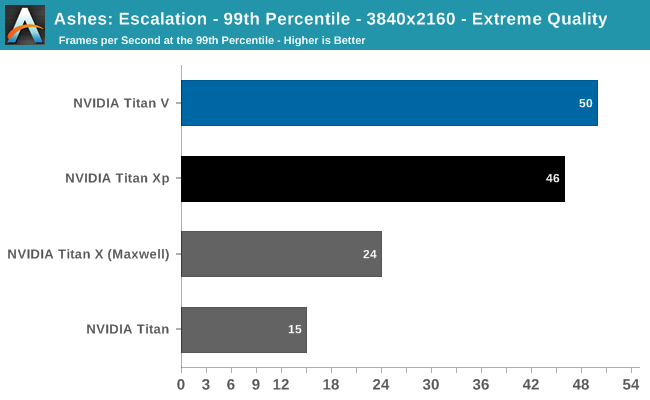

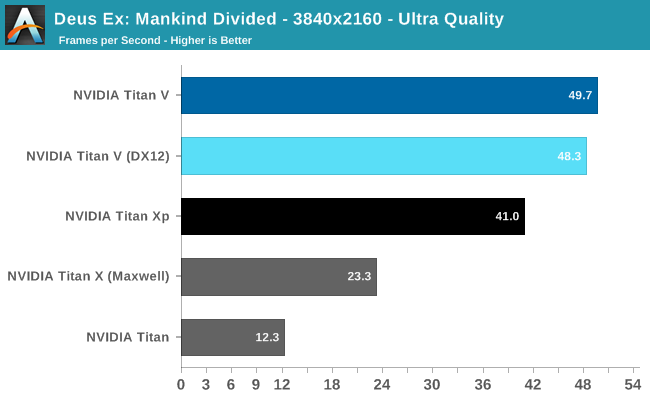

Despite being generally ahead of Titan Xp, it's clear Titan V is suffering from a lack of gaming optimization. And for that matter, the launch drivers definitely have bugs in them as far as gaming is concerned. Deus Ex showed small black box artifacts during the benchmark, Ghost Recon Wildlands experienced sporadic but persistent hitching, and Ashes occasionally suffered from fullscreen flickering.

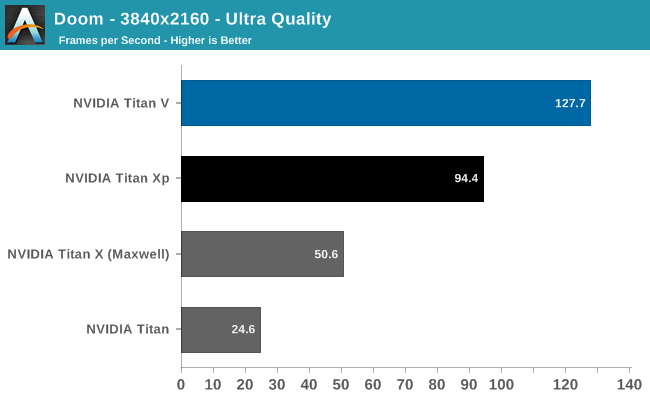

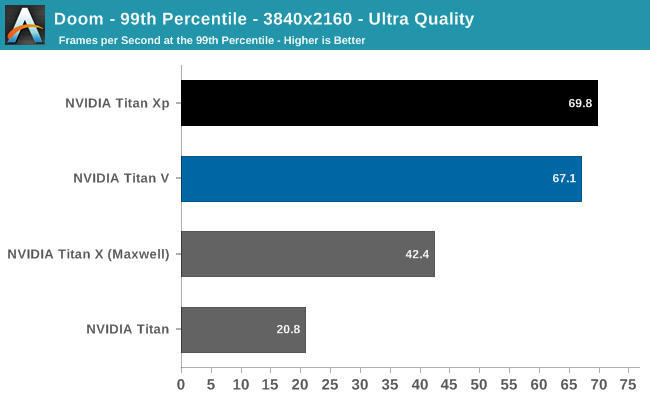

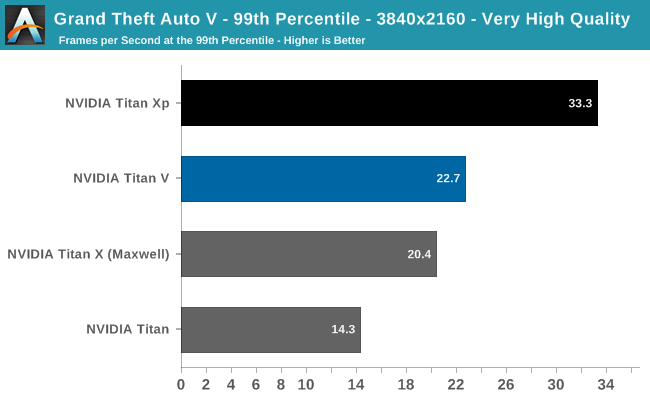

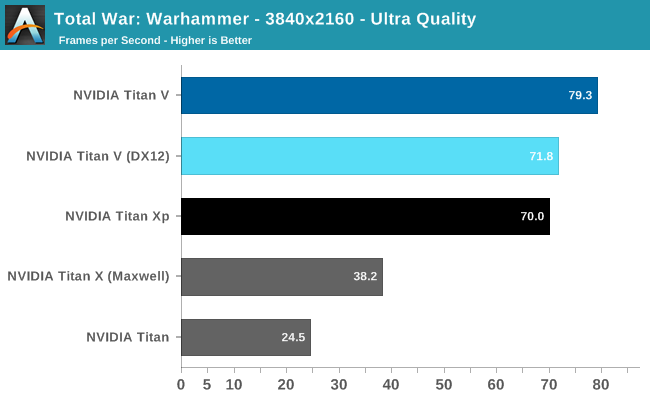

And despite the impressive 3-digit FPS in the Vulkan-powered DOOM, the card actually falls behind Titan Xp in 99th percentile framerates. With average framerates this high, even a 67fps 99th percentile can reduce perceived smoothness. Meanwhile, running Titan V under DX12 for Deus Ex and Total War: Warhammer resulted in lower performance. But with immature gaming drivers, it is too early to say whether these results are representative of low-level API performance on Volta itself.
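
To illustrate why a high average can hide a weak 99th percentile, here is a minimal sketch of how the two figures can be derived from a frame time log. The frame times are hypothetical, not our benchmark data, and the nearest-rank percentile used here is an assumption for illustration; benchmark tools may interpolate differently.

```python
def average_fps(frame_times_ms):
    """Average framerate: total frames divided by total elapsed seconds."""
    return 1000.0 * len(frame_times_ms) / sum(frame_times_ms)


def percentile_99_fps(frame_times_ms):
    """Framerate at the 99th percentile frame time (nearest-rank method).

    99% of frames rendered at least this fast; the slowest 1% did not.
    """
    ordered = sorted(frame_times_ms)
    n = len(ordered)
    rank = -(-99 * n // 100)  # integer ceil(0.99 * n), 1-based rank
    return 1000.0 / ordered[rank - 1]


# Hypothetical log: 98 frames at 8 ms plus 2 slow frames at 15 ms.
frame_times = [8.0] * 98 + [15.0] * 2

print(f"average:         {average_fps(frame_times):.1f} fps")        # roughly 123 fps
print(f"99th percentile: {percentile_99_fps(frame_times):.1f} fps")  # about 67 fps
```

In this made-up log, two slow frames out of a hundred barely move the average, yet they alone set the 99th percentile figure. That is why the two numbers can tell different stories about smoothness.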

Overall, the Titan V averages out to around 15% faster than the Titan Xp, excluding 99th percentiles, but with the aforementioned caveats. Titan V's high average FPS in DOOM and Deus Ex is somewhat marred by stagnant 99th percentiles and minor but noticeable artifacting, respectively.

So as a pure gaming card, our preview results indicate that this would not be the best gaming purchase at $3000. Typically, a $1800 premium for around 10-20% faster gaming over the Titan Xp wouldn't be enticing, but it seems there are always some who insist.
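
For a rough sense of scale, the arithmetic behind that premium can be sketched as follows. The $3000 price, $1800 premium, and 10-20% range come from the text above; the "dollars per percentage point" metric and the function name are illustrative assumptions, not a standard measure.

```python
TITAN_V_PRICE = 3000   # USD, from the text
PREMIUM = 1800         # USD over Titan Xp, from the text
TITAN_XP_PRICE = TITAN_V_PRICE - PREMIUM  # implied Titan Xp price


def premium_per_point(premium_usd, uplift_percent):
    """Extra dollars paid per percentage point of added gaming performance."""
    return premium_usd / uplift_percent


price_ratio = TITAN_V_PRICE / TITAN_XP_PRICE  # 2.5x the price

for uplift in (10, 15, 20):
    cost = premium_per_point(PREMIUM, uplift)
    print(f"{uplift}% faster: ${cost:.0f} per percentage point")
```

Under these figures, Titan V costs 2.5 times as much as Titan Xp, and each percentage point of extra gaming performance works out to between $90 and $180.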

111 Comments

mode_13h - Wednesday, December 27, 2017 - link

I don't know if you've heard of OpenCL, but there's no reason why a GPU needs to be programmed in a proprietary language.

It's true that OpenCL has some minor issues with performance portability, but the main problem is Nvidia's stubborn refusal to support anything past version 1.2.

Anyway, lots of businesses know about vendor lock-in and would rather avoid it, so it sounds like you have some growing up to do if you don't understand that.

CiccioB - Monday, January 1, 2018 - link

Grow up.

I repeat. None is wasting millions in using not certified, supported libraries. Let's avoid talking about entire frameworks.

If you think that researches with budgets of millions are nerds working in a garage with avoiding lock-in strategies as their first thought in the morning, well, grow up kid.

Nvidia provides the resources to allow them to exploit their expensive HW at the most of its potential reducing time and other associated costs. Also when upgrading the HW with a better one. That's what counts when investing millions for a job.

For you kid's home made AI joke, you can use whatever alpha library with zero support and certification. Others have already grown up.

mode_13h - Friday, January 5, 2018 - link

No kid here. I've shipped deep-learning based products to paying customers for a major corporation.

I've no doubt you're some sort of Nvidia shill. Employee? Maybe you bought a bunch of their stock? Certainly sounds like you've drunk their kool aid.

Your line of reasoning reminds me of how people used to say businesses would never adopt Linux. Now, it overwhelmingly dominates cloud, embedded, and underpins the Android OS running on most of the world's handsets. Not to mention it's what most "researchers with budgets of millions" use.

tuxRoller - Wednesday, December 20, 2017 - link

"The integer units have now graduated their own set of dedicates cores within the GPU design, meaning that they can be used alongside the FP32 cores much more freely."

Yay! Nvidia caught up to gcn 1.0!

Seriously, this goes to show how good the gcn arch was. It was probably too ambitious for its time as those old gpus have aged really well it took a long time for games to catch up.

CiccioB - Thursday, December 21, 2017 - link

"Nvidia caught up to gcn 1.0!"

Yeah! It is known to the entire universe that it is nvidia that trails AMD performances.

Luckly they managed to get this Volta out in time before the bankruptcy.

tuxRoller - Wednesday, December 27, 2017 - link

I'm speaking about architecture not performance.

CiccioB - Monday, January 1, 2018 - link

New bigger costier architectures with lower performance = fail

tuxRoller - Monday, January 1, 2018 - link

Ah, troll.

CiccioB - Wednesday, December 20, 2017 - link

Useless card

Vega = #poorvolta

StrangerGuy - Thursday, December 21, 2017 - link

AMD can pay me half their marketing budget and I will still do better than them...by doing exactly nothing. Their marketing is worse than being in a state of non-existence.