The NVIDIA Titan V Preview - Titanomachy: War of the Titans

by Ryan Smith & Nate Oh on December 20, 2017 11:30 AM EST

Gaming Performance

Sure, compute is useful. But be honest: you came here for the 4K gaming benchmarks, right?

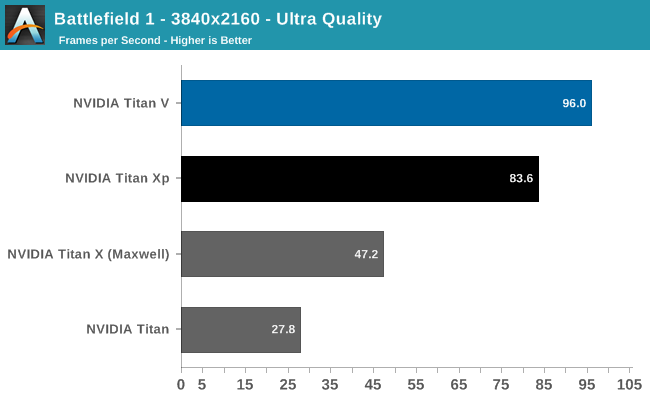

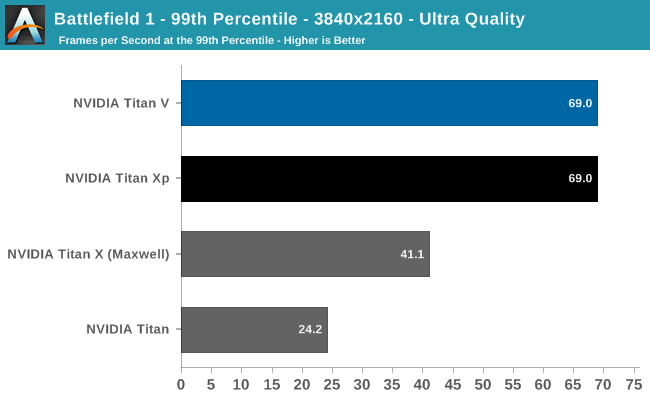

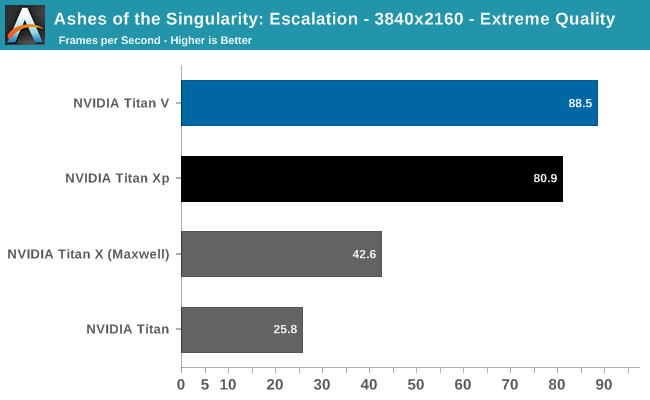

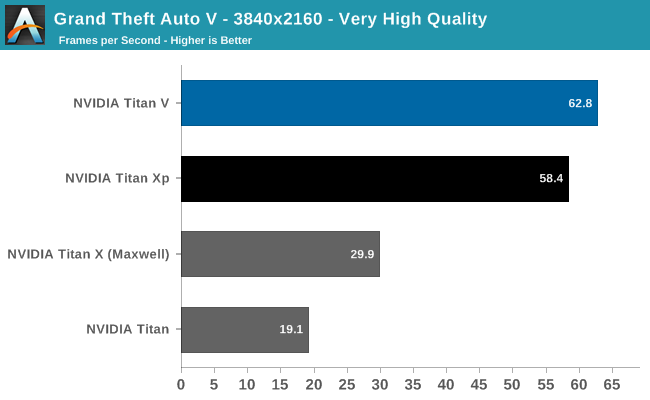

After just Battlefield 1 (DX11) and Ashes (DX12), we can already see that Titan V is not a monster gaming card, though it is still faster than Titan Xp. This is not unexpected, as Titan V's focus is much further from gaming than that of the previous Titan cards.

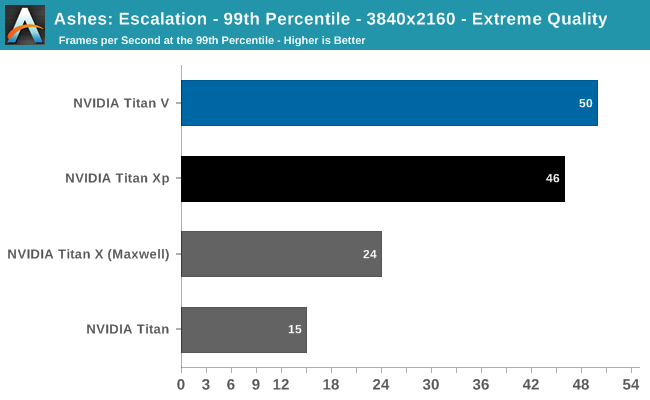

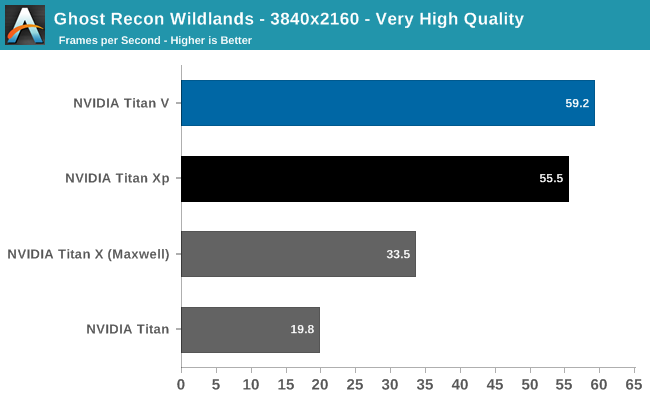

Despite being generally ahead of Titan Xp, it's clear Titan V is suffering from a lack of gaming optimization. And for that matter, the launch drivers definitely have bugs in them as far as gaming is concerned. Deus Ex showed small black box artifacts during the benchmark, Ghost Recon Wildlands experienced sporadic but persistent hitching, and Ashes occasionally suffered from fullscreen flickering.

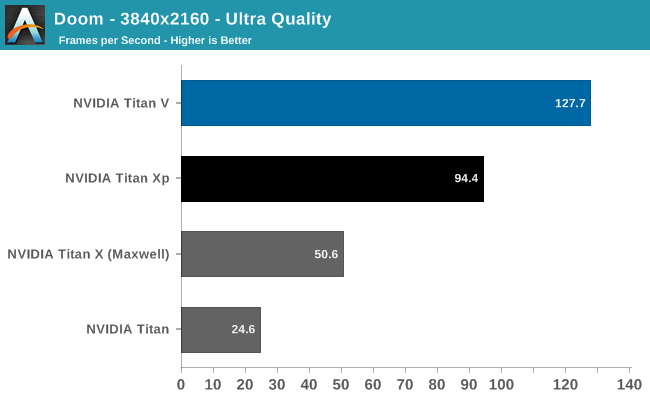

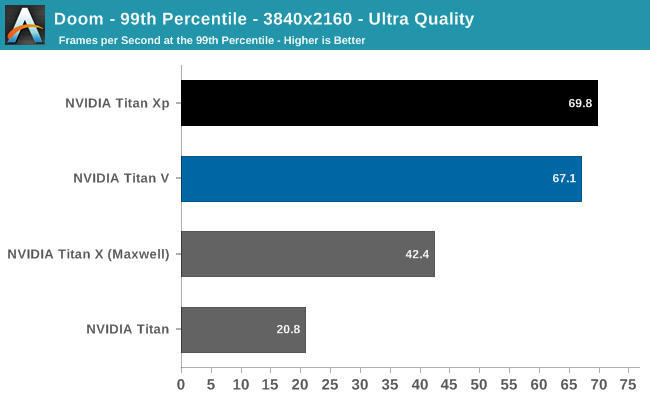

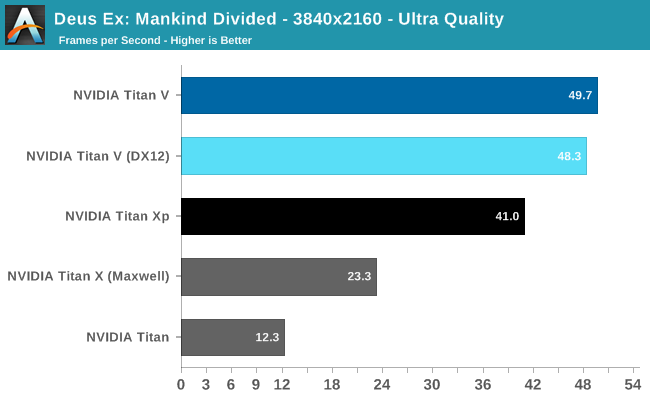

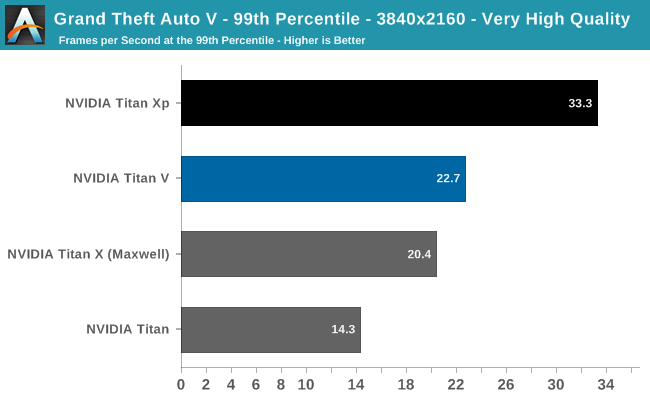

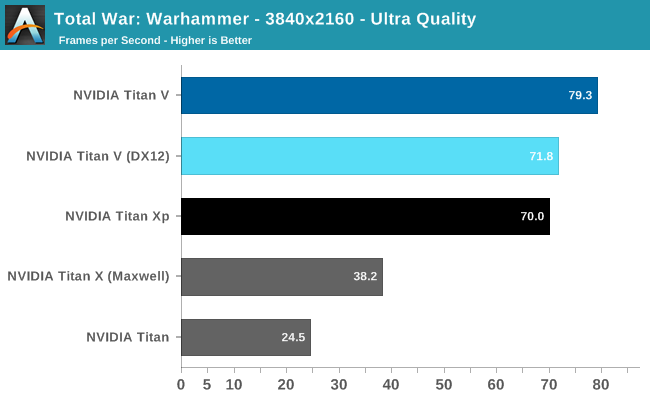

And despite the impressive 3-digit FPS in the Vulkan-powered DOOM, the card actually falls behind Titan Xp in 99th percentile framerates. With average framerates this high, even a 67fps 99th percentile can reduce perceived smoothness. Meanwhile, running Titan V under DX12 for Deus Ex and Total War: Warhammer resulted in lower performance. But with immature gaming drivers, it is too early to say whether these results are representative of low-level API performance on Volta itself.
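To make the gap between average and 99th percentile framerates concrete, here is a minimal sketch of how the two figures can be derived from a log of per-frame render times. This is an illustration, not the benchmark tooling used for this article: the function name `fps_summary`, the nearest-rank percentile method, and the sample frame times are all assumptions chosen for the example.

```python
def fps_summary(frame_times_ms):
    """Return (average FPS, 99th percentile FPS) for frame times in milliseconds.

    Hypothetical helper for illustration; uses the nearest-rank percentile.
    """
    if not frame_times_ms:
        raise ValueError("need at least one frame time")

    # Average FPS is frames rendered divided by total elapsed time.
    total_seconds = sum(frame_times_ms) / 1000.0
    avg_fps = len(frame_times_ms) / total_seconds

    # 99th percentile frame time: the time that 99% of frames meet or beat.
    ordered = sorted(frame_times_ms)
    rank = -(-99 * len(ordered) // 100)  # ceil(0.99 * n) using integer math
    p99_frame_time_ms = ordered[rank - 1]

    # Convert the slow-frame time back into a framerate.
    return avg_fps, 1000.0 / p99_frame_time_ms


# Made-up data: 980 fast frames at 8 ms plus 20 slow frames at 15 ms.
sample = [8.0] * 980 + [15.0] * 20
avg_fps, p99_fps = fps_summary(sample)
print(f"average: {avg_fps:.1f} fps, 99th percentile: {p99_fps:.1f} fps")
```

With this made-up data, only 2% of frames are slow, yet the average lands at roughly 123 fps while the 99th percentile sits at about 67 fps. That is how a card can post a 3-digit average and still feel less smooth than the number suggests.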

Overall, the Titan V averages out to around 15% faster than the Titan Xp, excluding 99th percentiles, but with the aforementioned caveats. Titan V's high average framerates in DOOM and Deus Ex are somewhat marred by stagnant 99th percentiles and minor but noticeable artifacting, respectively.

So as a pure gaming card, our preview results indicate that this would not be the best gaming purchase at $3000. Typically, a $1800 premium for around 10-20% faster gaming over the Titan Xp wouldn't be enticing, but it seems there are always some who insist.

111 Comments

maroon1 - Wednesday, December 20, 2017 - link

Correct me if I'm wrong, but Crysis Warhead running at 4K with 4xSSAA means it is rendering at 8K (4 times as many pixels as 4K) and then downscaling to 4K.

Ryan Smith - Wednesday, December 20, 2017 - link

Yes and no. Under the hood it's actually using a rotated grid, so it's a little more complex than just rendering it at a higher resolution. The resource requirements are very close to 8K rendering, but it avoids some of the quality drawbacks of scaling down an actual 8K image.

Frenetic Pony - Wednesday, December 20, 2017 - link

A hell of a lot of "It works great but only if you buy and program exclusively for Nvidia!" stuff here. Reminds me of Sony's penchant for exclusive lock-in stuff over a decade ago when they were dominant. Didn't work out for Sony then, and this is worse for customers as they'll need to spend money on both dev and hardware.

I'm sure some will be shortsighted enough to do so. But with Google straight up outbuying Nvidia for AI researchers (reportedly up to, or over, 10 million for just a 3 year contract) it's not a long term bet I'd make.

tuxRoller - Thursday, December 21, 2017 - link

I assume you've not heard of CUDA before?

NVIDIA has long been the only game in town when it comes to gpgpu HPC.

They're really a monopoly at this point, and researchers have no interest in making their jobs harder by moving to a new ecosystem.

mode_13h - Wednesday, December 27, 2017 - link

OpenCL is out there, and AMD has had some products that were more than competitive with Nvidia, in the past. I think Nvidia won HPC dominance by bribing lots of researchers with free/cheap hardware and funding CUDA support in popular software packages. It's only with Pascal that their hardware really surpassed AMD's.

tuxRoller - Sunday, December 31, 2017 - link

Ocl exists but cuda has MUCH higher mindshare. It's the de facto hpc framework used and taught in schools.

mode_13h - Sunday, December 31, 2017 - link

True that Cuda seems to dominate HPC. I think Nvidia did a good job of cultivating the market for it.

The trick for them now is that most deep learning users use frameworks which aren't tied to any Nvidia-specific APIs. I know they're pushing TensorRT, but it's certainly not dominant in the way Cuda dominates HPC.

tuxRoller - Monday, January 1, 2018 - link

The problem is that even the gpu accelerated nn frameworks are still largely built first using cuda. torch, caffe and tensorflow offer varying levels of ocl support (generally between some and none).

Why is this still a problem? Well, where are the ocl 2.1+ drivers? Even 2.0 is super patchy (mainly due to nvidia not officially supporting anything beyond 1.2). Add to this their most recent announcements about merging ocl into vulkan and you have yourself an explanation for why cuda continues to dominate.

My hope is that khronos announce vulkan 2.0, with ocl being subsumed, very soon. Doing that means vendors only have to maintain a single driver (with everything consuming spirv) and nvidia would, basically, be forced to offer opencl-next. Bottom-line: if they can bring the ocl functionality into vulkan without massively increasing the driver complexity, I'd expect far more interest from the community.

mode_13h - Friday, January 5, 2018 - link

Your mistake is focusing on OpenCL support as a proxy for AMD support. Their solution was actually developing MIOpen as a substitute for Nvidia's cuDNN. They have forks of all the popular frameworks to support it - hopefully they'll get merged in, once ROCm support exists in the mainline Linux kernel.

Of course, until AMD can answer the V100 on at least power-efficiency grounds, they're going to remain an also-ran, in the market for training. I think they're a bit more competitive for inferencing workloads, however.

CiccioB - Thursday, December 21, 2017 - link

What are you suggesting?

GPUs are very customized pieces of silicon, and you have to write code optimized for each individual architecture if you want to exploit them to the maximum.

If you think that people buy $10,000 cards to be put in $100,000 racks for a multi-$1,000,000 server just to use open source code that is not optimized, not supported and not guaranteed in order to make AMD fanboys happy, well, no, that's not how the industry works.

Grow up.