Spotted: 960 GB & 1.5 TB Intel Optane SSD 900P

by Anton Shilov on December 15, 2017 9:00 AM EST

Posted in: SSDs, Intel, Enterprise SSDs, 3D XPoint, Optane, Optane 900P

Intel’s Optane SSD 900P drives, which use 3D XPoint memory, have a performance edge over SSDs based on NAND flash and promise to surpass them in endurance. At the same time, the Optane SSD 900P lineup has been criticized for its relatively low capacities: only 280 GB and 480 GB models are currently available, which is not enough for hosting large virtual machines. Intel has now apparently disclosed that it has 960 GB and 1.5 TB models up its sleeve.

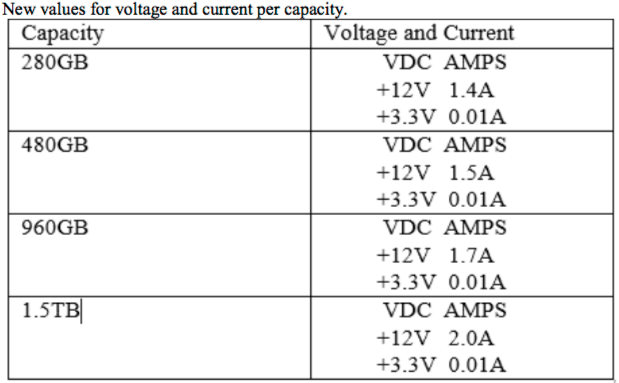

Intel on Thursday issued a product change notification informing customers about regulatory and other label changes for the Optane SSD 900P. Among other things, the document lists Intel Optane 900P 960 GB and 1.5 TB drives. The SSDs are mentioned in the context of their voltage and current ratings, which may indicate that these products already have finalized specs, at least when it comes to power consumption. However, Intel does not list part numbers for the higher-capacity 960 GB and 1.5 TB Optane drives, so it is unclear whether the SKUs are meant for general availability or for select customers only.

Intel intends to start shipping Optane SSD 900P products with the new labels on December 27, but it is unknown when we are going to see the 900P in larger capacities. Intel officially positions the Optane SSD 900P for workstations and high-end desktops, which is why two out of the three available models come in the HHHL form factor. A potential launch of the 900P 960 GB/1.5 TB models in the U.2 form factor could therefore indicate an expansion of 3D XPoint to servers that store massive amounts of data. In the meantime, Intel has already confirmed plans to expand the capacity of its Optane SSD DC P4800X for datacenters to 1.5 TB, so Optane capacity increases are clearly on the table for Intel.

We have reached out to Intel for comments and will update the news story once we get more information.

Related Reading:

- The Intel Optane SSD 900P 280GB Review

- Intel Optane SSD DC P4800X 750GB Hands-On Review

- Intel To Launch 3D XPoint DIMMs in 2H 2018

Source: Intel (via ServeTheHome)

17 Comments


FXi - Friday, December 15, 2017

I care very much. I'm quite pleased that Intel said "more capacities coming soon" and they didn't just speak the words and wait a year for bigger drives. We'll have to see how long it really takes of course, but this is positive information. As far as caring about this drive? It redefines both durability and speed on a scale that truly makes it "next gen". Of course some may not care about that. That's ok. But plenty of us know when "leap products" come and why they are important to an upgrade plan.

DanNeely - Friday, December 15, 2017

Well they've maxed out the 25W power budget allowed for an x4/8 card. Meaning we won't see a 2TB version unless they either reduce overall power consumption somewhere/how (presumably gen2 storage or controller chips), go all the way to x16, or add an external power connector to the card.

Strunf - Friday, December 15, 2017

X4 PCIE slots aren't that common, typically you have x1 and x16, not a big issue to put the card on a x16 slot; the external power supply isn't a problem either.

DanNeely - Friday, December 15, 2017

x4 isn't common on consumer boards, but is more common than x16 on server ones if the LGA-2011v3 x2 board I looked at on Newegg are representative. I eyeball it as about 2/3rds x4, 1/3 x16, with x1 being MIA. Only being able to install 2 or 3 cards instead of 6 would be problematic for the crapton of fast storage servers the biggest capacities of these are likely to go into.

mitr - Friday, December 15, 2017

Has anyone tried intel optane with older servers running E5V2?

rbarone69 - Wednesday, December 20, 2017

Anyone have any real world experience on how this drive works large Visual Studio projects? Compiling and loading etc... I'd buy one for each of my developers if I can reduce compile times down. Just cant seem to find real hard numbers on this.

PaulStoffregen - Thursday, August 2, 2018

Also very interested in compile time for large projects, on Linux with gcc.

Really curious to hear how Linux IO polling mode affects large compile jobs. Apparently these drives are so fast that it's a net win for the driver to just wait, rather than going to all the usual trouble of scheduling and later responding to an interrupt.

If anyone from AnandTech is reading, please consider publishing software compile benchmarks. There's a lot of "pro users" who develop software, where the cost of faster hardware to speed up compiling code is pretty easy to justify.