The Intel Optane SSD 900p 480GB Review: Diving Deeper Into 3D XPoint

by Billy Tallis on December 15, 2017 12:15 PM EST

AnandTech Storage Bench - The Destroyer

The Destroyer is an extremely long test replicating the access patterns of very IO-intensive desktop usage. A detailed breakdown can be found in this article. Like real-world usage, the drives do get the occasional break that allows for some background garbage collection and flushing caches, but those idle times are limited to 25ms so that it doesn't take all week to run the test. These AnandTech Storage Bench (ATSB) tests do not involve running the actual applications that generated the workloads, so the scores are relatively insensitive to changes in CPU performance and RAM from our new testbed, but the jump to a newer version of Windows and the newer storage drivers can have an impact.
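The idle-time cap described above can be illustrated with a minimal sketch. It assumes the recorded trace is represented as a list of idle gaps (in seconds) between consecutive I/O operations. The function name `cap_idle_gaps` and this representation are hypothetical and are not taken from the actual ATSB tooling.

```python
from typing import List

# Assumption: the 25ms cap from the text, expressed in seconds.
MAX_IDLE_S = 0.025


def cap_idle_gaps(gaps_s: List[float], max_idle_s: float = MAX_IDLE_S) -> List[float]:
    """Shorten every idle gap that exceeds the cap; leave shorter gaps untouched."""
    return [min(gap, max_idle_s) for gap in gaps_s]


def idle_time_saved(gaps_s: List[float], max_idle_s: float = MAX_IDLE_S) -> float:
    """Total idle time removed from the replay by applying the cap."""
    return sum(gaps_s) - sum(cap_idle_gaps(gaps_s, max_idle_s))
```

For example, a hypothetical trace with gaps of 1ms, 500ms, and 3s would replay with gaps of 1ms, 25ms, and 25ms, which is why the drive still gets short breaks for background work without the test taking days.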

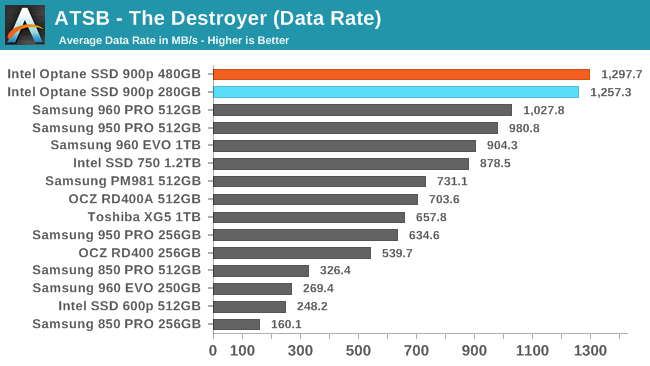

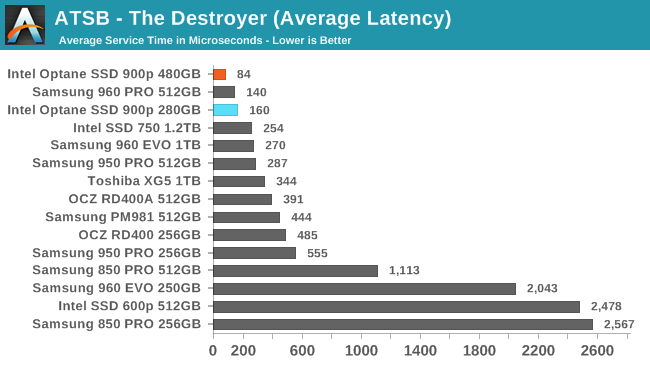

We quantify performance on this test by reporting the drive's average data throughput, the average latency of the I/O operations, and the total energy used by the drive over the course of the test.
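As a rough illustration of how such summary metrics can be derived from raw measurements, here is a minimal sketch. The record layout (`IoRecord`), the use of drive busy time as the denominator for the data rate, the nearest-rank percentile method, and trapezoidal integration of power samples are all assumptions made for this example, not a description of the actual ATSB scoring code.

```python
import math
from dataclasses import dataclass
from typing import Sequence, Tuple


@dataclass
class IoRecord:
    """One completed I/O operation (hypothetical layout)."""
    size_bytes: int    # payload size of the operation
    latency_us: float  # completion latency in microseconds


def average_data_rate_mb_s(records: Sequence[IoRecord], busy_seconds: float) -> float:
    """Total bytes transferred divided by the time the drive spent busy, in MB/s."""
    total_bytes = sum(r.size_bytes for r in records)
    return total_bytes / 1e6 / busy_seconds


def average_latency_us(records: Sequence[IoRecord]) -> float:
    """Arithmetic mean of per-operation latency."""
    return sum(r.latency_us for r in records) / len(records)


def percentile_latency_us(records: Sequence[IoRecord], pct: float) -> float:
    """Nearest-rank percentile: smallest latency with at least pct% of samples at or below it."""
    ordered = sorted(r.latency_us for r in records)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def total_energy_joules(power_samples: Sequence[Tuple[float, float]]) -> float:
    """Integrate (time_s, watts) samples with the trapezoidal rule."""
    energy = 0.0
    for (t0, p0), (t1, p1) in zip(power_samples, power_samples[1:]):
        energy += (t1 - t0) * (p0 + p1) / 2
    return energy
```

Note how a single slow operation barely moves the average data rate but dominates the 99th percentile latency, which is why the two are reported separately.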

The average data rate of the 480GB Optane SSD 900p on The Destroyer is a few percent higher than that of the 280GB model, further extending the lead over the fastest flash-based SSDs.

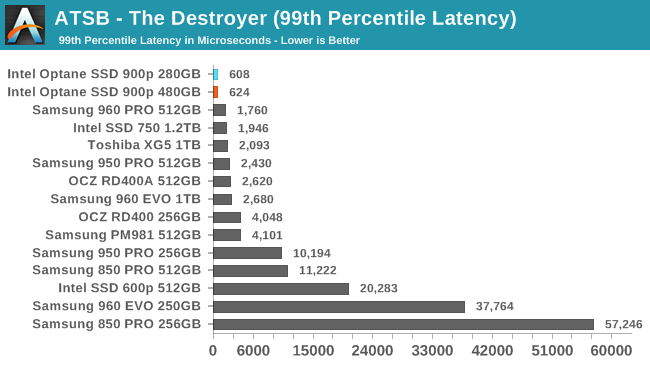

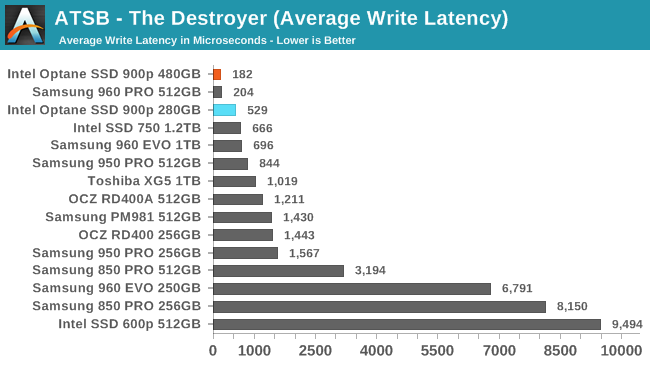

The 480GB Optane SSD 900p shows a substantial drop in average latency relative to the 280GB model, allowing it to score better than any flash-based SSD. For 99th percentile latency the 480GB model scores slightly worse than the 280GB, but both are still far ahead of any competing drive.

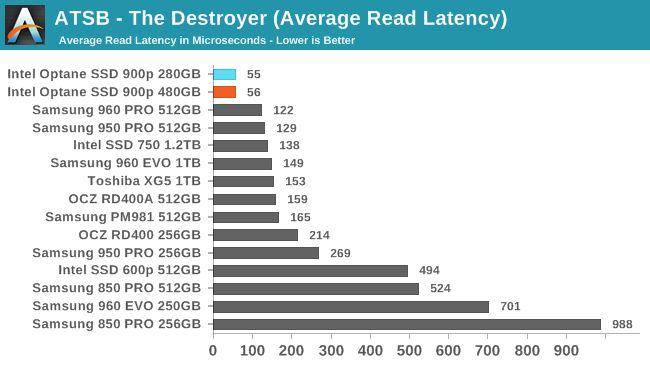

The two capacities of the Optane SSD 900p have essentially the same average read latency, which is less than half that of any flash-based SSD. For average write latency, the 480GB model sets a new record, while the 280GB performed worse than it did the first time around but is still faster than anything other than the Samsung 960 PRO.

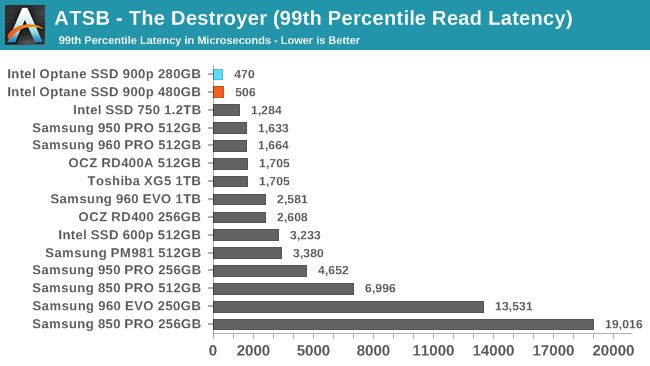

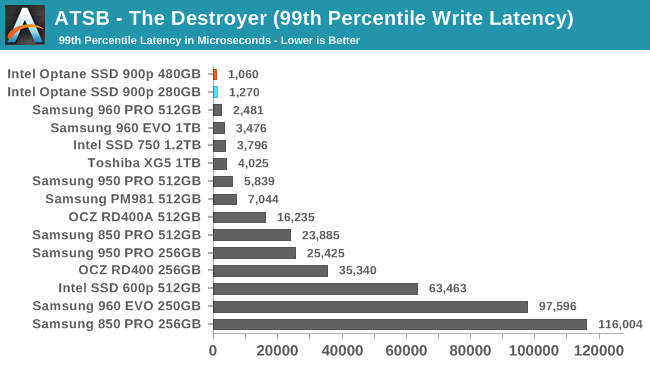

The 99th percentile read and write latency scores for the Optane SSD 900p are all substantially better than those of any flash-based SSD, even though the 280GB's results again show some variation between this test run and our original review. The 99th percentile read latency scores are particularly good, with the Optane SSDs around 0.5ms while the best flash-based SSDs are in the 1-2ms range.

69 Comments

Notmyusualid - Sunday, December 17, 2017 - link

So, when you are at gun point, in a corner, you finally concede defeat? I think you need professional help.

tuxRoller - Friday, December 15, 2017 - link

If you are staying with a single thread submission model, Windows may well have a decent sized advantage with both iocp and rio. Linux kernel aio is just such a crap shoot that it's really only useful if you run big databases and you set it up properly.

IntelUser2000 - Friday, December 15, 2017 - link

"Lower power consumption will require serious performance compromises. Don't hold your breath for a M.2 version of the 900p, or anything with performance close to the 900p. Future Optane products will require different controllers in order to offer significantly different performance characteristics"

Not necessarily. Optane Memory devices show the random performance is on par with the 900P. It's the sequential throughput that limits top-end performance.

While it's plausible the load power consumption might be impacted by performance, that's not always true for idle. The power consumption in idle can be cut significantly (to tens of mW) by using a new controller. It's reasonable to assume the 900P uses a controller derived from the 750, which is also power hungry.

p1esk - Friday, December 15, 2017 - link

Wait, I don't get it: the operation is much simpler than flash (no garbage collection, no caching, etc), so the controller should be simpler. Then why does it consume more power?

IntelUser2000 - Friday, December 15, 2017 - link

You are still confusing load power consumption with idle power consumption. What you said makes sense for load, when it's active. Not for idle. Optane Memory devices having 1/3rd the idle power demonstrates it's due to the controller. They likely wanted something with short TTM, so they chose whatever controller they had and retrofitted it.

rahvin - Friday, December 15, 2017 - link

Optane's very nature as a heat based phase change material is always going to result in higher power use than NAND because it's always going to take more energy to heat a material up than it would to create a magnetic or electric field.

tuxRoller - Saturday, December 16, 2017 - link

That same nature also means that it will require less energy per reset as the process node shrinks (roughly e~1/F). In general, PCM is much more amenable to process scaling than NAND.

CheapSushi - Friday, December 15, 2017 - link

Keep in mind a big part of the sequential throughput limit is the fact that the Optane M.2s are x2 PCIe lanes. This AIC is x4. Most NAND M.2 sticks are x4 as well.

twotwotwo - Friday, December 15, 2017 - link

I'm curious whether it's possible to get more IOPS doing random 512B reads, since that's the sector size this advertises. When the description of the memory tech itself came out, bit addressability--not having to read any minimum block size--was a selling point. But it may be that the controller isn't actually capable of reading any more 512B blocks/s than 4KB ones, even if the memory and the bus could handle it.

I don't think any additional IOPS you get from smaller reads would help most existing apps, but if you were, say, writing a database you wanted to run well on this stuff, it'd be interesting to know that small reads help.

tuxRoller - Friday, December 15, 2017 - link

Those latencies seem pretty high. Was this with Linux or Windows? The table on page one indicates both were used. Can you run a few of these tests against a loop-mounted RAM block device? I'm curious to see what the min, average, and standard deviation values of latency look like when the block layer is involved.