Dissecting Intel's EPYC Benchmarks: Performance Through the Lens of Competitive Analysis

by Johan De Gelas & Ian Cutress on November 28, 2017 9:00 AM EST

Posted in: CPUs, AMD, Intel, Xeon, Skylake-SP, Xeon Platinum, EPYC, EPYC 7601

Conclusion

First of all, Intel's benchmarks lend further support to what we already suspected: Intel's Scalable Xeon is better at serving databases. There are several reasons for this: better data locality (fewer NUMA nodes), better single-threaded performance, and a more "useable" cache. The claim that Intel offers much more predictable database performance seems very reasonable to us, as the EPYC platform is much younger and much more complex to tune because it is a "virtual 8 socket" system.

Secondly, it is true that the Intel Scalable Xeon is more versatile: over the past 5 years AMD's presence in the server market was negligible, while Intel has been steadily adding virtualization features (posted interrupts), I/O features and more (TSX for example). Many of these features are now supported by the hypervisors and OSes out there.

The EPYC platform has some catching up to do. Firmware updates and other software updates were necessary to run a hypervisor, and only relatively recent versions of the Linux kernel (February 2017 w/4.10+) have support for the EPYC processor. So even if we doubt that the 8160 can really deliver 37% better performance than the AMD EPYC in the real world, there is no denying that the Intel Xeon is a "safer bet" for VMware virtualization.

Nevertheless, it is interesting to see that Intel admits that there are quite a few use cases out there where AMD has an advantage. The AMD EPYC has a performance per dollar advantage in webserving and Java servers, for example.

Otherwise, there is some merit to the claim that AVX-512 allows Intel to offer excellent HPC performance without the use of a GPU in compute intensive applications. At the same time, if you are after the best performance on these very parallel workloads, a GPU almost always offers several times higher performance. AVX-512 also cannot save Intel in several bandwidth-intensive benchmarks, such as those in fluid dynamics.

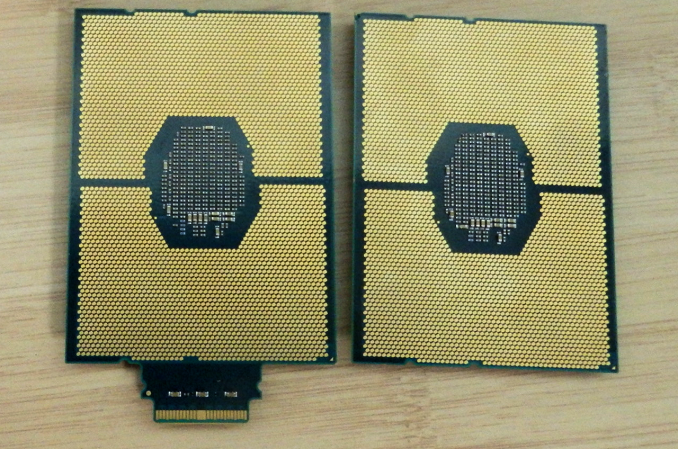

Intel Xeon-SP CPUs (Left: with Omni-Path)

One interesting element to the whole scenario is that at no point does Intel ever approach the performance per watt angle in these discussions. That leaves a big question unanswered on Intel's side. Perhaps we should invoke Hanlon's Razor at this point and call it a missed opportunity, rather than suggest that Intel does not want to speak about power. Our own results showed a win for AMD's EPYC here though, when comparing two 165W Xeon 8176 parts to two 180W EPYC 7601 parts. More testing on specific workloads is needed.
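For readers who want to run this kind of comparison on their own measurements, here is a minimal sketch of the arithmetic behind performance per watt and performance per dollar. The `ServerResult` class, the `relative_advantage` helper, and every number below are hypothetical placeholders, not results from our testing or from Intel's slides. Note that it divides by measured average power during the run, which is not the same thing as the rated TDP.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ServerResult:
    """One benchmark run on one system (all field values are hypothetical)."""
    name: str
    score: float        # benchmark score, higher is better
    avg_power_w: float  # average wall power during the run, in watts
    price_usd: float    # price of the configuration, in US dollars

    def perf_per_watt(self) -> float:
        if self.avg_power_w <= 0:
            raise ValueError("avg_power_w must be positive")
        return self.score / self.avg_power_w

    def perf_per_dollar(self) -> float:
        if self.price_usd <= 0:
            raise ValueError("price_usd must be positive")
        return self.score / self.price_usd


def relative_advantage(a: float, b: float) -> float:
    """Return how much larger a is than b as a fraction (0.25 means 25% higher)."""
    if b <= 0:
        raise ValueError("b must be positive")
    return a / b - 1.0


# Placeholder inputs only: system B is faster, but draws more power and costs more.
system_a = ServerResult("system A", score=100.0, avg_power_w=400.0, price_usd=8000.0)
system_b = ServerResult("system B", score=110.0, avg_power_w=500.0, price_usd=16000.0)

if __name__ == "__main__":
    for s in (system_a, system_b):
        print(f"{s.name}: {s.perf_per_watt():.4f} score/W, "
              f"{s.perf_per_dollar():.6f} score/$")
    edge = relative_advantage(system_a.perf_per_watt(), system_b.perf_per_watt())
    print(f"system A perf/W advantage: {edge:.1%}")
```

With these placeholder inputs the system with the lower raw score still comes out ahead on both ratios, which is why a comparison that reports only raw performance leaves the efficiency question open.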

In summary, Intel makes several good points, even when those points aren't always in their own favor. The company clearly has an interest in ensuring that the Xeon's performance leadership remains well-known in light of AMD's EPYC-fueled resurgence, and while there's nothing altruistic about Intel's benchmarking, they are working from a sound position. Still, in defending their position – and by extension their high margins – Intel does highlight the Xeon's biggest weakness versus the EPYC in this newly competitive market: the Skylake Xeon can offer excellent performance, but that performance comes with an equally heavy price tag.

105 Comments


sharath.naik - Tuesday, November 28, 2017 - link

Epyc single socket 32core/64 thread CPU is ~2000$. There is no Intel equivalent here, which is disappointing. As the single socket systems are only ~22 core max and no 205 watt parts.

IGTrading - Tuesday, November 28, 2017 - link

You're talking nonsense mate :)

I'd pay extra to have extra physical cores when I'm speccing a server holding VMs, but AMD gives us more cores for less money.

I also love AMD's RAID which works absolutely great and it's free while Intel's is annoyingly a paid-for solution.

Intel doesn't say one peep about Full Encrypted RAM, because they don't have it.

Intel doesn't say a peep about power consumption because their platform loses in every test.

Intel doesn't say a peep about EPYC 1.1 or EPYC Plus or whatever which will be a drop-in upgrade for the current platforms.

I was put in the shitty situation of speccing Xeon based machines because the per-core licenses were extremely expensive and the Xeon solution is offering us better performance, but other than this situation, we're doing everything to avoid working with Intel.

We still have servers that started out with dual Opterons and grew to Hexa-Core over the years.

That saved our clients a ton of money and their jaws dropped when we advised that they need to move back to Xeon if they want to upgrade (EPYC was still 2 years away then).

It may be fashionable as a young lad to root for the "cool winner" like Ferrari, Bugatti or Intel, but when you've worked multiple decades in the industry and had to swallow all the crap Intel was pulling, you start rooting for the little guy.

ddrіver - Tuesday, November 28, 2017 - link

Paying anywhere between $12K-$50+K more per machine just to have the Intel logo tends to add up. Ending up with up to 200W more per machine also incurs some extra costs.

If you said the cost fades when compared to licensing costs of many software solutions I would understand. But the metal itself... no, the extra cost for that Xeon is either stupidity or protection tax.

Geranium - Tuesday, November 28, 2017 - link

How much server software is really using AVX-512? Can you give us a list (excluding AI and machine learning apps, because those run better on GPU/dedicated hardware).

SaltyVincent - Wednesday, November 29, 2017 - link

I haven't come across it personally, but something else to add is the amount of heat these chips generate when running AVX-512 under load. Running any AVX benchmarks on Intel chips usually results in throttling.

deltaFx2 - Wednesday, November 29, 2017 - link

"The whole "pricetag" thing is not really an issue": No? Is that why the volume sales in the server market is the mid-section of the former Xeon E5? Wouldn't people be buying top end E7s (Platinum in today's lingo)? Of course pricetag matters, and matters even more when you're deploying tens of thousands of nodes.

Ro_Ja - Tuesday, November 28, 2017 - link

Head title needs a wee bit edit.

negusp - Tuesday, November 28, 2017 - link

Your comment needs a big bit edit.

Ryan Smith - Tuesday, November 28, 2017 - link

Head title? I'm not sure I follow.

IGTrading - Tuesday, November 28, 2017 - link

These TSX instructions have a lot in common with AMD's own proposed ASF instructions which were discussed 3 years before TSX.

Don't you think so?