Dissecting Intel's EPYC Benchmarks: Performance Through the Lens of Competitive Analysis

by Johan De Gelas & Ian Cutress on November 28, 2017 9:00 AM EST - Posted in

- CPUs

- AMD

- Intel

- Xeon

- Skylake-SP

- Xeon Platinum

- EPYC

- EPYC 7601

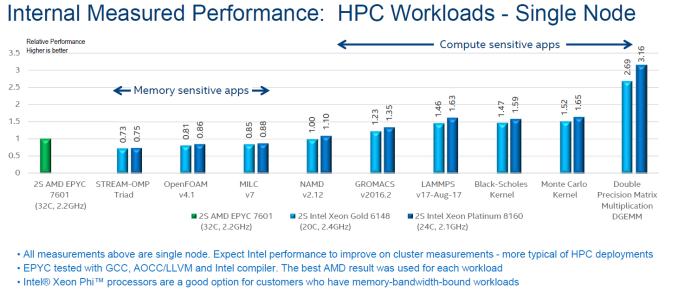

HPC Benchmarks

Discussing HPC benchmarks always feels like opening a can of worms to me. Each benchmark requires a thorough understanding of the software, and performance can be tuned massively by using the right compiler settings. To make matters worse, in many cases these workloads can run much faster on a GPU or MIC, making CPU benchmarking irrelevant in some situations.

NAMD (NAnoscale Molecular Dynamics) is a molecular dynamics application designed for high-performance simulation of large biomolecular systems. It is fairly limited by memory bandwidth: even with the advantage of an AVX-512 binary, the Xeon 8160 does not beat the AVX2-equipped AMD EPYC 7601.
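Why a wider vector unit does not rescue a bandwidth-limited code can be illustrated with the roofline model. This is our choice of explanatory model, not something taken from Intel's slides, and the sketch below is a minimal Python version of it. Both machines and all of their numbers are hypothetical, picked only to show the trade-off; they are not specifications of the Xeon 8160 or the EPYC 7601.

```python
# Minimal roofline-model sketch. All machine numbers below are hypothetical
# and chosen only to illustrate the trade-off; they are not measured values.


def attainable_gflops(peak_gflops: float, bandwidth_gbs: float,
                      flops_per_byte: float) -> float:
    """Return the roofline bound: the lower of the compute ceiling and
    the memory ceiling (bandwidth times arithmetic intensity)."""
    return min(peak_gflops, bandwidth_gbs * flops_per_byte)


# Hypothetical machine A: wide SIMD (high peak), less memory bandwidth.
WIDE_SIMD = {"peak_gflops": 2000.0, "bandwidth_gbs": 128.0}
# Hypothetical machine B: narrower SIMD (lower peak), more memory bandwidth.
MORE_BANDWIDTH = {"peak_gflops": 1000.0, "bandwidth_gbs": 170.0}

if __name__ == "__main__":
    for intensity in (0.5, 50.0):  # FLOPs per byte moved from DRAM
        a = attainable_gflops(flops_per_byte=intensity, **WIDE_SIMD)
        b = attainable_gflops(flops_per_byte=intensity, **MORE_BANDWIDTH)
        print(f"intensity {intensity:5.1f}: wide SIMD {a:7.1f}, "
              f"more bandwidth {b:7.1f}")
```

At 0.5 FLOPs per byte, both hypothetical machines sit far below their compute peaks, so the one with more bandwidth wins (85 vs. 64 GFLOPS) despite having half the peak. Only at a high arithmetic intensity such as 50 FLOPs per byte does the wide-SIMD machine pull ahead (2000 vs. 1000 GFLOPS).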

LAMMPS is a classical molecular dynamics code; its name is an acronym for Large-scale Atomic/Molecular Massively Parallel Simulator. GROMACS (GROningen MAchine for Chemical Simulations) primarily simulates biochemical molecules (bonded interactions). Intel compiled the AMD version with the Intel compiler and AVX2, while the Intel machines ran AVX-512 binaries.

For these three tests, the CPU benchmark results do not really matter: NAMD runs about 8 times faster on an NVIDIA P100, and LAMMPS and GROMACS run about 3 times faster on a GPU and also scale out across multiple GPUs.

Monte Carlo is a numerical method that uses statistical sampling to approximate solutions to quantitative problems. In finance, Monte Carlo algorithms are used to evaluate complex instruments, portfolios, and investments. This is a compute-bound, double-precision workload that does not run faster on a GPU than on Intel's AVX-512-capable Xeons. In fact, as far as we know, the best dual-socket Xeons are quite a bit faster than the P100-based Tesla. Some of these tests are also sensitive to FP latency.
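To make "statistical sampling" concrete, here is a minimal sketch of a textbook Monte Carlo pricer for a European call option, assuming the underlying follows geometric Brownian motion. It is not the code Intel benchmarked, and the function name and parameters are our own illustrative choices. Each path needs only a random draw, an exponential, and a payoff, all in double precision (Python floats are IEEE doubles), and every path is independent of the others.

```python
import math
import random


def monte_carlo_call_price(spot: float, strike: float, rate: float,
                           vol: float, maturity: float,
                           n_paths: int = 100_000, seed: int = 42) -> float:
    """Estimate a European call price by simulating terminal prices under
    geometric Brownian motion and discounting the average payoff."""
    rng = random.Random(seed)  # seeded so repeated runs give the same answer
    drift = (rate - 0.5 * vol * vol) * maturity
    diffusion = vol * math.sqrt(maturity)
    payoff_sum = 0.0
    for _ in range(n_paths):
        z = rng.gauss(0.0, 1.0)  # one standard normal draw per path
        terminal = spot * math.exp(drift + diffusion * z)
        payoff_sum += max(terminal - strike, 0.0)  # call payoff at maturity
    # Discount the mean payoff back to today at the risk-free rate.
    return math.exp(-rate * maturity) * payoff_sum / n_paths


if __name__ == "__main__":
    print(monte_carlo_call_price(100.0, 100.0, 0.05, 0.2, 1.0))
```

With these example inputs the estimate should land close to the closed-form value of roughly 10.45, and the sampling error shrinks only with the square root of the path count. That is why production runs use very large numbers of paths and become compute-bound.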

Black-Scholes is another popular mathematical model used in finance. As this benchmark is also double precision, the dual-socket Xeons should be quite competitive with GPUs.
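Unlike the Monte Carlo approach, the Black-Scholes model gives a closed-form price for European options. The minimal sketch below evaluates it in double precision using only the standard library. The function names are our own, and we assume that a throughput benchmark simply evaluates this formula over a large batch of options. Each evaluation costs a logarithm, an exponential, a square root, and two normal-CDF calls, which is the kind of math-heavy, double-precision work discussed above.

```python
import math


def norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution function via math.erf."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def _d1_d2(spot: float, strike: float, rate: float,
           vol: float, maturity: float) -> tuple[float, float]:
    """Compute the d1 and d2 terms of the Black-Scholes formula."""
    vol_sqrt_t = vol * math.sqrt(maturity)
    d1 = (math.log(spot / strike)
          + (rate + 0.5 * vol * vol) * maturity) / vol_sqrt_t
    return d1, d1 - vol_sqrt_t


def black_scholes_call(spot: float, strike: float, rate: float,
                       vol: float, maturity: float) -> float:
    """Closed-form price of a European call (no dividends)."""
    d1, d2 = _d1_d2(spot, strike, rate, vol, maturity)
    discounted_strike = strike * math.exp(-rate * maturity)
    return spot * norm_cdf(d1) - discounted_strike * norm_cdf(d2)


def black_scholes_put(spot: float, strike: float, rate: float,
                      vol: float, maturity: float) -> float:
    """Closed-form price of a European put (no dividends)."""
    d1, d2 = _d1_d2(spot, strike, rate, vol, maturity)
    discounted_strike = strike * math.exp(-rate * maturity)
    return discounted_strike * norm_cdf(-d2) - spot * norm_cdf(-d1)


if __name__ == "__main__":
    print(black_scholes_call(100.0, 100.0, 0.05, 0.2, 1.0))
    print(black_scholes_put(100.0, 100.0, 0.05, 0.2, 1.0))
```

For the same example inputs as a typical textbook case (spot and strike 100, 5% rate, 20% volatility, one year), the call comes out at about 10.45 and the put at about 5.57. The two prices satisfy put-call parity, which makes a convenient sanity check.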

So only the Monte Carlo and Black-Scholes results are really relevant, and they show that AVX-512 binaries give the Intel Xeons the edge in a limited number of HPC applications. In most HPC cases, it is probably better to buy a much more affordable CPU and add a GPU or even a MIC.

The Caveats

Intel drops three big caveats when reporting these numbers, as shown in the bullet points at the bottom of the slide.

The first is that these are single-node measurements: one 32-core EPYC vs. 20- and 24-core Intel processors. Both of these Intel CPUs, the Gold 6148 and the Platinum 8160, are priced in the same ballpark as the EPYC. This differs from the 8160/8180 numbers that Intel has provided throughout the rest of its benchmarking.

The second is the compiler situation: in each benchmark, Intel used the Intel compiler for Intel CPUs, but compiled the AMD code with GCC, LLVM, and the Intel compiler, choosing the best result. Because Intel is going for peak hardware performance, there is no obvious need for Intel to ensure compiler parity here. As always, compiler choice can have a substantial effect in the real-world HPC can of worms.

The third caveat is that Intel even admits that for some of these tests, it has other products better suited to these workloads because they offer faster memory. But as we pointed out for most of these tests, GPUs also work well here.

105 Comments

sharath.naik - Tuesday, November 28, 2017

EPYC single-socket 32-core/64-thread CPU is ~$2000. There is no Intel equivalent here, which is disappointing, as the single-socket systems are only ~22 cores max and there are no 205 watt parts.

IGTrading - Tuesday, November 28, 2017

You're talking nonsense, mate :)

I'd pay extra to have extra physical cores when I'm speccing a server holding VMs, but AMD gives us more cores for less money.

I also love AMD's RAID, which works absolutely great and is free, while Intel's is annoyingly a paid-for solution.

Intel doesn't say one peep about fully encrypted RAM, because they don't have it.

Intel doesn't say a peep about power consumption, because their platform loses in every test.

Intel doesn't say a peep about EPYC 1.1 or EPYC Plus or whatever which will be a drop-in upgrade for the current platforms.

I was put in the shitty situation of speccing Xeon-based machines because the per-core licenses were extremely expensive and the Xeon solution was offering us better performance, but other than this situation, we're doing everything to avoid working with Intel.

We still have servers that started out with dual Opterons and grew to Hexa-Core over the years.

That saved our clients a ton of money, and their jaws dropped when we advised that they needed to move back to Xeon if they wanted to upgrade (EPYC was still 2 years away then).

It may be fashionable as a young lad to root for the "cool winner" like Ferrari, Bugatti or Intel, but when you've worked multiple decades in the industry and had to swallow all the crap Intel was pulling, you start rooting for the little guy.

ddrіver - Tuesday, November 28, 2017

Paying anywhere between $12K and $50K+ more per machine just to have the Intel logo tends to add up. Ending up with up to 200W more per machine also incurs some extra costs. If you said the cost fades when compared to the licensing costs of many software solutions, I would understand. But the metal itself... no, the extra cost for that Xeon is either stupidity or protection tax.

Geranium - Tuesday, November 28, 2017

How many server applications really use AVX-512? Can you give us a list (excluding AI and machine learning apps, because those run better on GPUs/dedicated hardware)?

SaltyVincent - Wednesday, November 29, 2017

I haven't come across it personally, but something else to add is the amount of heat these chips generate when running AVX-512 under load. Running any AVX benchmarks on Intel chips usually results in throttling.

deltaFx2 - Wednesday, November 29, 2017

"The whole "pricetag" thing is not really an issue": No? Is that why volume sales in the server market are concentrated in the mid-section of the former Xeon E5 line? Wouldn't people be buying top-end E7s (Platinum in today's lingo)? Of course price matters, and it matters even more when you're deploying tens of thousands of nodes.

Ro_Ja - Tuesday, November 28, 2017

Head title needs a wee bit edit.

negusp - Tuesday, November 28, 2017

Your comment needs a big bit edit.

Ryan Smith - Tuesday, November 28, 2017

Head title? I'm not sure I follow.

IGTrading - Tuesday, November 28, 2017

These TSX instructions have a lot in common with AMD's own proposed ASF instructions, which were discussed 3 years before TSX. Don't you think so?