Intel to Develop Discrete GPUs, Hires Raja Koduri as Chief Architect & Senior VP

by Ryan Smith on November 8, 2017 5:15 PM EST

Posted in: GPUs, Intel, Raja Koduri

On Monday, Intel announced that it had penned a deal with AMD to have the latter provide a discrete GPU to be integrated onto a future Intel SoC. On Tuesday, AMD announced that their chief GPU architect, Raja Koduri, was leaving the company. Now today the saga continues, as Intel is announcing that they have hired Raja Koduri to serve as their own GPU chief architect. And Raja's task will not be a small one; with his hire, Intel will be developing their own high-end discrete GPUs.

Starting from the top and following yesterday’s formal resignation from AMD, Raja Koduri has jumped ship to Intel, where he will be serving as a Senior VP for the company, overseeing the new Core and Visual Computing group. As chief architect and general manager, Raja is being tasked by Intel with significantly expanding their GPU business, particularly as the company re-enters the discrete GPU field. Raja of course has a long history in the GPU space as a leader in GPU architecture, serving as the manager of AMD’s graphics business twice, and in between AMD stints serving as the director of graphics architecture on Apple’s GPU team.

Meanwhile, perhaps the only news that can outshine the fact that Raja Koduri is joining Intel is what he will be doing for Intel. As part of today’s revelation, Intel has announced that they are instituting a new top-to-bottom GPU strategy. At the bottom, the company wants to extend their existing iGPU market into new classes of edge devices, and while Intel doesn’t go into much more detail than this, the fact that they use the term “edge” strongly implies that we’re talking about IoT-class devices, where edge goes hand-in-hand with neural network inference. This is a field Intel already plays in to some extent with their Atom processors on the GPU side, and their Movidius neural compute engines on the dedicated silicon side.

However, what’s likely the most exciting part of this news for PC enthusiasts and the tech industry as a whole is that, in aiming at the top of the market, Intel will once again be going back into developing discrete GPUs. The company has tried this route twice before: once with the i740 in the late 90s, and again with the aborted Larrabee project in the late 2000s. However, even though these efforts never panned out quite like Intel had hoped, the company has continued to develop their GPU architecture and GPU-like devices, the latter embodied by the massively parallel, compute-focused Xeon Phi family.

Yet while Intel has GPU-like products for certain markets, the company doesn’t have a proper GPU solution once you get beyond their existing GT4-class iGPUs, which are, roughly speaking, on par with $150 or so discrete GPUs. Which is to say that Intel doesn’t have access to the midrange market or above with their iGPUs. With the hiring of Raja and Intel’s new direction, the company is going to be expanding into full discrete GPUs for what the company calls “a broad range of computing segments.”

Reading between the lines, it’s clear that Intel will be going after both the compute and graphics sub-markets for GPUs. The former of course is an area where Intel has been fighting NVIDIA for several years now with less success than they’d like to see, while the latter would be new territory for Intel. However it’s very notable that Intel is calling these “graphics solutions”, so it’s clear that this isn’t just another move by Intel to develop a compute-only processor à la the Xeon Phi.

Intel and NVIDIA are at best frenemies; the companies’ technologies complement each other well, but at the same time NVIDIA wants Intel’s high-margin server compute business, and Intel wants a piece of the action in the rapid boom in business that NVIDIA is seeing in the high performance computing and deep learning markets. NVIDIA has already begun weaning themselves off of Intel with technologies such as the NVLink interconnect, which allows faster and cache-coherent memory transfers between NVIDIA GPUs and the forthcoming IBM POWER9 CPU. Meanwhile developing their own high-end GPU would allow Intel to further chase developers currently in NVIDIA’s stable, while in the long run also potentially poaching customers from NVIDIA’s lucrative (and profitable) consumer and professional graphics businesses.

To that end, I’m going to be surprised if Intel doesn’t develop a true top-to-bottom product stack that contains midrange GPUs as well – something in the vein of Polaris 10 and GP106 – but for the moment the discrete GPU aspect of Intel’s announcement is focused on high-end GPUs. And, given what we typically see in PC GPU release cycles, even if Intel does develop a complete product stack, I wouldn’t be too surprised if Intel’s first released GPU was a high-end GPU, as it’s clear this is where Intel needs to start first to best combat NVIDIA.

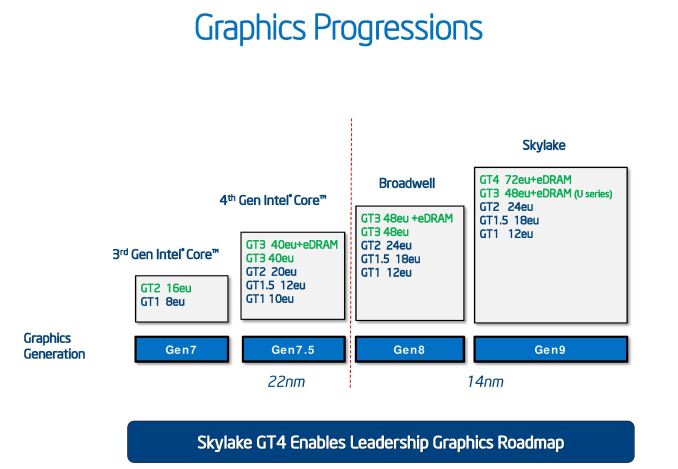

More broadly speaking, this is an interesting shift in direction for Intel, and one that arguably indicates that Intel’s iGPU-exclusive efforts in the GPU space were not the right move. For the longest time, Intel played very conservatively with its iGPUs, maxing out with the very much low-end GT2 configuration. More recently, starting with the Haswell generation in 2013, Intel introduced more powerful GT3 and GT4 configurations. However this was primarily done at the behest of a single customer – Apple – and even to this day, we see very little adoption of Intel’s higher performance graphics options by the other PC OEMs. The end result has been that Intel has spent the last decade making the kinds of CPUs that their cost-conscious customers want, with just a handful of high-performance versions.

I would happily argue that outside of Apple, most other PC OEMs don’t “get it” with respect to graphics, but at this juncture that’s beside the point. Between Monday’s strongly Apple-flavored Kaby Lake-G SoC announcement and now Intel’s vastly expanded GPU efforts, the company is, if only finally, becoming a major player in the high-performance GPU space.

Besides taking on NVIDIA though, this is going to put perpetual underdog AMD into a tough spot. AMD’s edge over Intel for the longest time has been their GPU technology. The Zen CPU core has thankfully reworked that balance in the last year, though AMD still hasn’t quite caught up to Intel here on peak performance. The concern here is that the mature PC market has strongly favored duopolies – AMD and Intel for CPUs, AMD and NVIDIA for GPUs – so Intel’s entrance into the discrete GPU space upsets the balance on the latter. And while AMD is without a doubt more experienced than Intel, Intel has the financial and fabrication resources to fight NVIDIA, something AMD has always lacked. Which isn’t to say that AMD is by any means doomed, but Intel’s growing GPU efforts and Raja’s move to Intel have definitely made AMD’s job harder.

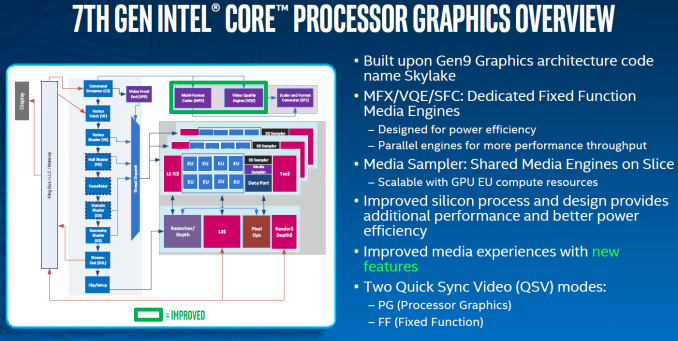

Meanwhile, on the technical side of matters, the big question going forward with Intel’s efforts is over which GPU architecture Intel will use to build their discrete GPUs. Despite their low performance targets, Intel’s Gen9.5 graphics is a very capable architecture in terms of features and capabilities. In fact, prior to the launch of AMD’s Vega architecture a couple months back, it was arguably the most advanced PC GPU architecture, supporting higher tier graphics features than even NVIDIA’s Pascal architecture. So in terms of features alone, Gen9.5 is already a very decent base to start from.

The catch is whether Gen9.5 and its successors can efficiently scale out to the levels needed for a high-performance GPU. Architectural scalability is in some respects the unsung hero of GPU architecture design, as while it’s kind of easy to design a small GPU architecture, it’s a lot harder to design an architecture that can scale up to multiple units in a 400mm²+ die size. Which isn’t to say that Gen9.5 can’t, only that we as the public have never seen anything bigger than the GT4 configuration, which is still a relatively small design by GPU standards.

Though perhaps the biggest wildcard here is Intel’s timetable. Nothing about Intel’s announcement says when the company wants to launch these high-end GPUs. If, for example, Intel wants to design a GPU from scratch under Raja, then this would be a 4+ year effort and we’d easily be talking about the first such GPU in 2022. On the other hand, if this has been an ongoing internal project that started well before Raja came on board, then Intel could be a lot closer. Given what kind of progress NVIDIA has made in just the last couple of years, I can only imagine that Intel wants to move quickly, and what this may boil down to is a tiered strategy where Intel takes both routes, if only to release a big Gen9.5(ish) GPU soon to buy time for a new architecture later.

In directing these tasks, Raja Koduri has in turn taken on a very big role at Intel. Until recently, Intel’s graphics lead was Tom Piazza, a Sr. Fellow and capable architect, but also an individual who was never all that public outside of Intel. By contrast, Raja will be a much more public individual thanks to the combination of Intel’s expanded GPU efforts, Raja’s SVP role, and the new Core and Visual Computing group that has been created just for him.

For what Intel is seeking to do, it’s clear why they picked Raja, given his experience inside and outside of AMD, and more specifically, with integrated graphics at both AMD and Apple. The flip side to that however is that while Apple’s graphics portfolio boomed under Raja during his time at the company, his most recent AMD stint didn’t go quite as well. AMD’s Vega GPU architecture has yet to live up to all of its promises, and while success and failure at this level is never the responsibility of a single individual, Intel will certainly be looking to have a better launch than Vega. Which, given the company’s immense resources, is definitely something they can do.

But at the end of the day, this is just the first step for Intel and for Raja. By hiring an experienced hand like Raja Koduri and by announcing that they are getting into high-end discrete GPUs, Intel is very clearly telegraphing their intent to become a major player in the GPU space. Given Intel’s position as a market leader it’s a logical move, and given their lack of recent discrete GPU experience it’s also an ambitious move. So while this move stands to turn the PC GPU market as we know it on its head, I’m looking forward to seeing just what a GPU-focused Intel can do over the coming years.

Source: Intel

200 Comments


Alexvrb - Wednesday, November 8, 2017

They gave Raja RTG like he wanted... and overall RTG has been lackluster in the discrete market under his leadership. Vega 56 is the only interesting release in recent history, mainly because of the disruptive pricing (currently starts around $400). Shrug. Here's hoping Navi is a bit more impressive.

Stuka87 - Thursday, November 9, 2017

Vega was started before Raja took over RTG though.

Alexvrb - Thursday, November 9, 2017

Raja Koduri has headed up graphics since they rehired him (yes, rehired) from Apple in April of 2013. 4.5 years isn't enough for you, hmm? RTG was a restructuring, yes, and they did that to please him a little over 2 years ago. But even prior to RTG, he was the graphics boss.

I still might buy a Vega 56 if the price is right, but they sure as heck aren't stealing Nvidia's performance or efficiency crowns, nor are they making much of a dent in marketshare.

FreckledTrout - Thursday, November 9, 2017

I would be curious how much of the cash RTG brought in when AMD's CPU's were getting trashed over the last 7 years went to R&D of Vega. I get this feeling AMD used the ATI/RTG's earnings to build Zen.

Alexvrb - Thursday, November 9, 2017

Have you SEEN their financials? Do you have any idea how much they paid for ATi? A lot of people still feel it was a mistake. If anything it diverted tons of money from their CPU division for years. Look at their situation now. Lots of debt, had to sell off assets - they sold their fabs. They have been scratching and clawing for every bit of cash they can get, just to stay alive. They are even selling graphics to Intel for a MCM setup, thus shoring up Intel's only major weakness.

Will it all pay off with their Zen-based efforts? Maybe, but you give FAR too much credit to their graphics division. Nvidia has been edging them out in almost every measure, including marketshare. The main reason I often use AMD graphics is pricing, and that's not always a given. I certainly wouldn't put Raja on a pedestal.

HStewart - Thursday, November 9, 2017

Of course Raja had inside information about AMD, including financials, and this could be the primary reason he left.

But I think people give way too much credit to Zen - in lots of ways it is a last-ditch effort to glue dies together just to increase core count.

But I think what most people don't realize is that even the internal Intel GPUs are good enough for the average customer's needs, i.e. for word processing and spreadsheets.

Alexvrb - Saturday, November 11, 2017

Zen is the architecture, not the die setup, and it's a LOT more impressive feat of engineering than Vega. Also "glued-together" dies is nothing new. Intel has done it and as a matter of fact does it today. It's not mandatory for the architecture, however it has some advantages in terms of yield and flexibility. Both Intel and AMD have also ramped up the scalability and power of their "glue", by the way.

As far as Intel's existing iGPUs being good enough... you're wrong. If you were right, Intel still wouldn't be taking GPUs seriously. This is 2017. GPU-accelerated workloads are growing, not shrinking. MR is the future. Intel is buying graphics chips from AMD, and working on their own to eventually replace those. So both their iGPUs, MCM GPUs, and discrete GPUs will all be upgraded (and NEED to be upgraded) in the coming years.

Alexvrb - Saturday, November 11, 2017

Also I don't really mean to trash Vega, it's just very iterative vs last gen GCN. It also runs into similar problems trying to scale up as the last big die GCN chips... it becomes a power hog. Vega should shine a lot brighter in a power-constrained environment.

Samus - Thursday, November 9, 2017

A lot of people called this yesterday, I just wouldn't believe it because... I mean don't we have non-compete agreements? Perhaps Intel's deal with AMD on Monday was part of the negotiations to get Raja?

Either way, this is awesome. nVidia needs real competition. Someone needs to put the brakes on their gravy train.

06GTOSC - Thursday, November 9, 2017

Non-compete agreements are practically impossible to enforce.