The NVIDIA GeForce GTX 1070 Ti Founders Edition Review: GP104 Comes in Threes

by Nate Oh on November 2, 2017 9:00 AM EST

Posted in: GPUs, GeForce, NVIDIA, Pascal, GTX 1070 Ti

Power, Temperature, & Noise

As always, we'll take a look at the interrelated metrics of power, temperature, and noise. Noise in particular can make even a decently performing card undesirable, a situation that usually goes hand in hand with high power consumption and heat output. As this is a new GPU, we will also quickly review the GeForce GTX 1070 Ti's stock voltages.

GeForce Video Card Voltages

| GTX 1070 Ti Boost | GTX 1070 Boost | GTX 1070 Ti Idle | GTX 1070 Idle |
|---|---|---|---|
| 1.062V | 1.062V | 0.65V | 0.625V |

With the exception of a slightly higher idle voltage, everything remains the same as on the GTX 1080 and 1070. The idle voltage actually matches that of the GTX 1080 Ti, but this isn't particularly significant, as idle voltage seems to vary from card to card. Compared to previous generations, these voltages are considerably lower because of the FinFET process used, something we went over in detail in our GTX 1080 and 1070 Founders Edition review. As we said then, the 16nm FinFET process requires these low voltages, unlike previous planar nodes, and this can be limiting in scenarios where a lot of power and voltage are needed, i.e. high clockspeeds and overclocking.

Fortunately for NVIDIA, GP104 has always been able to clock exceptionally high, at least in the case of the good chips. So at a slight cost in efficiency, in the form of a 180W TDP, the GTX 1070 Ti Founders Edition can clock just as high, and then a little more. I also suspect the maturity of TSMC's 16nm FinFET process is playing a part here, but the card's higher TDP and NVIDIA's clockspeed choices make it hard to validate that point.

GeForce Video Card Average Clockspeeds

| Game | GTX 1070 Ti | GTX 1070 |
|---|---|---|
| Max Boost Clock | 1898MHz | 1898MHz |
| Battlefield 1 | 1826MHz | 1797MHz |
| Ashes: Escalation | 1838MHz | 1796MHz |
| DOOM | 1856MHz | 1780MHz |
| Ghost Recon Wildlands | 1840MHz | 1807MHz |
| Dawn of War III | 1848MHz | 1807MHz |
| Deus Ex: Mankind Divided | 1860MHz | 1803MHz |
| Grand Theft Auto V | 1865MHz | 1839MHz |
| F1 2016 | 1840MHz | 1825MHz |
| Total War: Warhammer | 1832MHz | 1785MHz |

The end result is that the GTX 1070 Ti is, amusingly, able to reverse the GeForce Founders Edition trend of lower clocks on higher-performing models. As we reviewed them, the GTX 1080 Ti maintained lower clockspeeds than the GTX 1080, which in turn had lower average clockspeeds than the vapor-chamberless GTX 1070, which had lower clockspeeds than the GTX 1060. Typically, that was due to the extra hardware units, and thus extra power consumption and heat. But in the GTX 1070 Ti Founders Edition, despite the extra SMs and such, the vapor chamber and higher TDP work well in allowing the card to boost high. In fact, it boosts higher than the GTX 1070 FE across the board, though in relative terms its clockspeeds come out only about 2% higher on average. Relative to the 1683MHz boost specification, the GTX 1070 Ti on average clocks nearly 10% above that in these games, though that is something we've already seen with previous Pascal cards. And we can also start to see the line of thinking that leads to framing the GTX 1070 Ti as an "overclocking monster."
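As a rough check on those two percentages, the short Python sketch below recomputes them from the per-game figures in the clockspeed table. It assumes that "on average" means a plain arithmetic mean across the nine games, which the text does not state explicitly; the variable and function names are purely illustrative.

```python
# Average in-game clockspeeds in MHz, copied from the clockspeed table:
# game -> (GTX 1070 Ti FE, GTX 1070 FE). The "Max Boost Clock" row is
# left out because it is a ceiling, not an in-game average.
CLOCKS_MHZ = {
    "Battlefield 1": (1826, 1797),
    "Ashes: Escalation": (1838, 1796),
    "DOOM": (1856, 1780),
    "Ghost Recon Wildlands": (1840, 1807),
    "Dawn of War III": (1848, 1807),
    "Deus Ex: Mankind Divided": (1860, 1803),
    "Grand Theft Auto V": (1865, 1839),
    "F1 2016": (1840, 1825),
    "Total War: Warhammer": (1832, 1785),
}

# Boost specification for the GTX 1070 Ti, as quoted in the text.
GTX_1070_TI_BOOST_SPEC_MHZ = 1683


def mean(values):
    """Plain arithmetic mean (an assumption about how the average was taken)."""
    values = list(values)
    return sum(values) / len(values)


ti_avg = mean(ti for ti, _ in CLOCKS_MHZ.values())
gtx1070_avg = mean(base for _, base in CLOCKS_MHZ.values())

# Relative advantage of the GTX 1070 Ti FE over the GTX 1070 FE, in percent.
ti_vs_1070_pct = (ti_avg / gtx1070_avg - 1) * 100

# How far the GTX 1070 Ti FE runs above its boost specification, in percent.
ti_vs_spec_pct = (ti_avg / GTX_1070_TI_BOOST_SPEC_MHZ - 1) * 100

# True only if the Ti clocks higher in every single game.
ti_higher_everywhere = all(ti > base for ti, base in CLOCKS_MHZ.values())

print(f"GTX 1070 Ti FE mean clock: {ti_avg:.1f} MHz")
print(f"GTX 1070 FE mean clock:    {gtx1070_avg:.1f} MHz")
print(f"Ti vs. 1070:               +{ti_vs_1070_pct:.1f}%")
print(f"Ti vs. boost spec:         +{ti_vs_spec_pct:.1f}%")
print(f"Ti higher in every game:   {ti_higher_everywhere}")
```

Under that assumption, the means work out to roughly 1845MHz and 1804MHz, which lines up with the "about 2%" and "nearly 10%" figures above.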

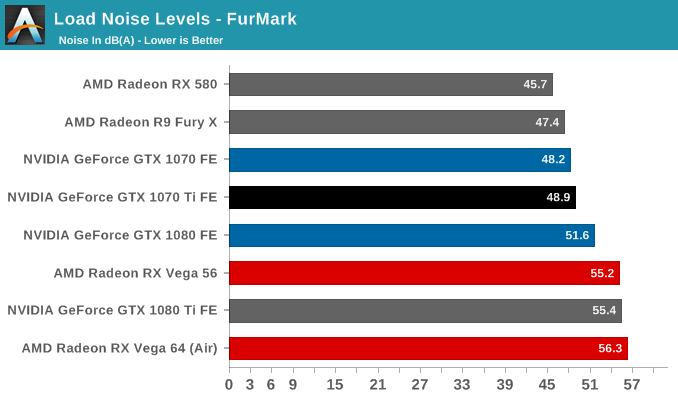

As for how those higher clockspeeds bear out in power, heat, and noise, there are no surprises with the GTX 1070 Ti Founders Edition. We've already seen several variations on this design in the aforementioned Founders Edition cards. Here, the blower and vapor chamber are more than adequate for what the card can put out, which in turn lessens the work (and noise) required of the fan.

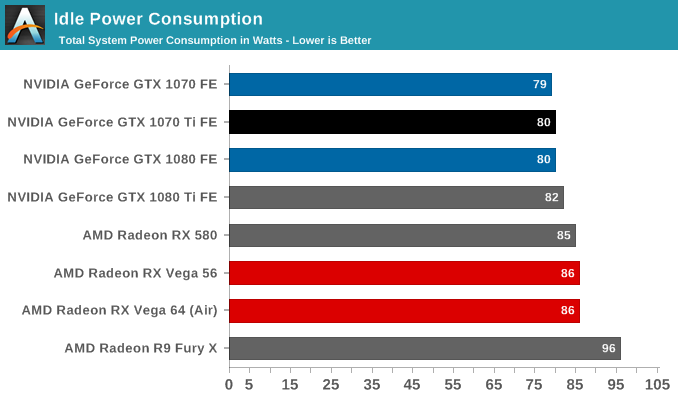

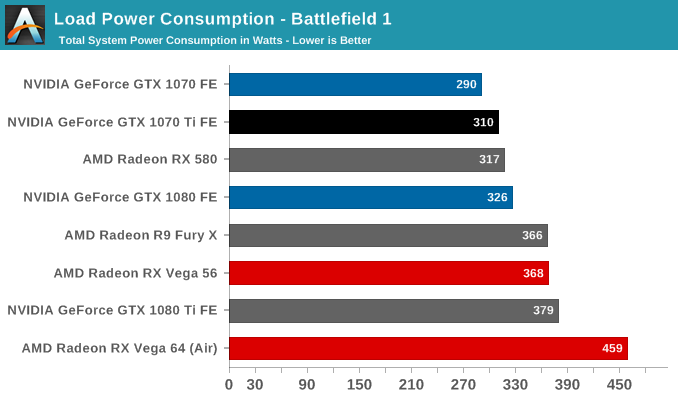

Given the higher 180W TDP, the card can also come closer to GTX 1080 levels of power consumption if need be, though because we measure at the wall, these figures are less precise than we would like. In any case, the GTX 1070 Ti has no real need to be a power-sipper at this performance range; that crown has already gone to the GTX 1070. On top of that, any drop in power efficiency will likely not be noticeable next to its RX Vega competition, even just RX Vega 56, whose Battlefield 1 power consumption at the wall is closer to the GTX 1080 Ti FE than to the GTX 1080, let alone the GTX 1070 Ti FE.

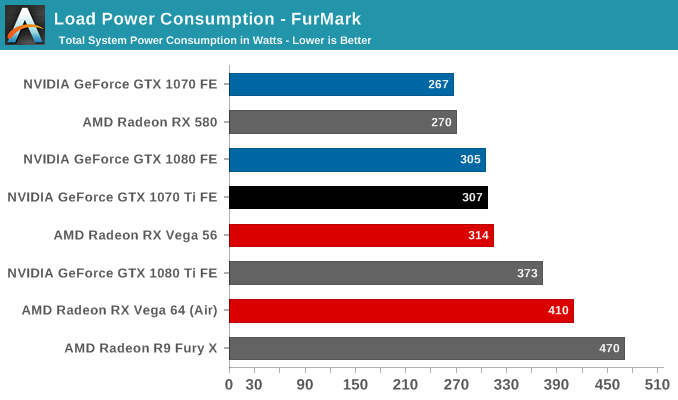

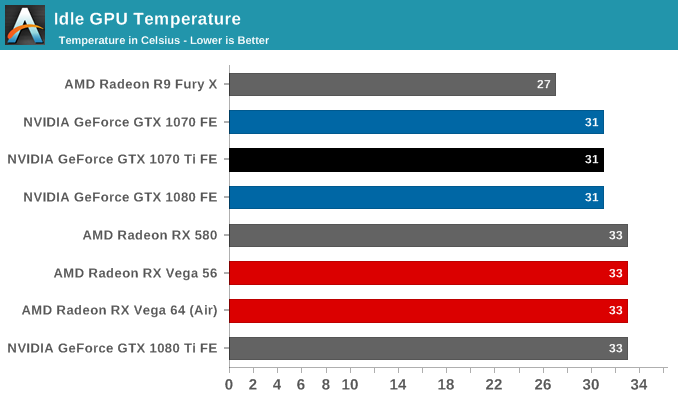

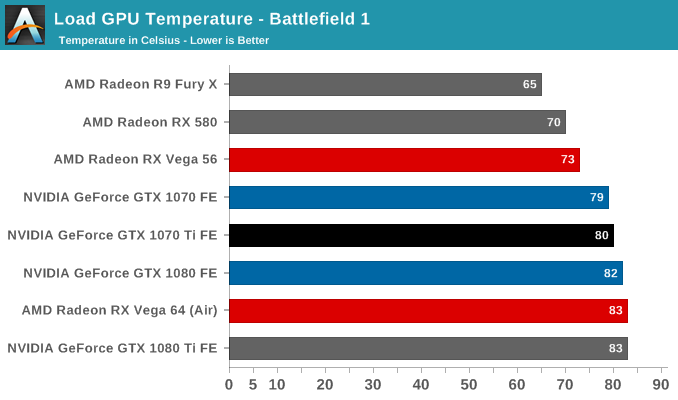

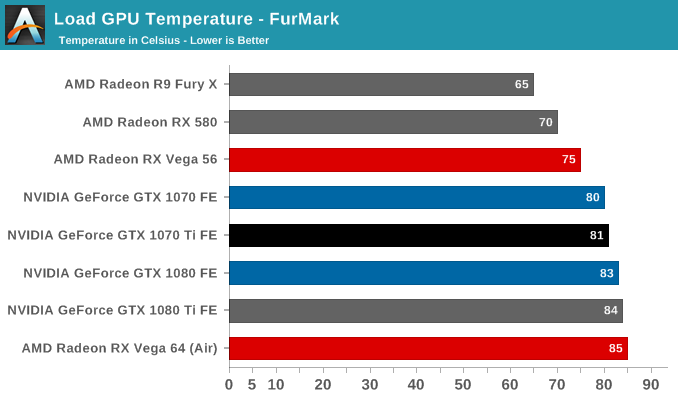

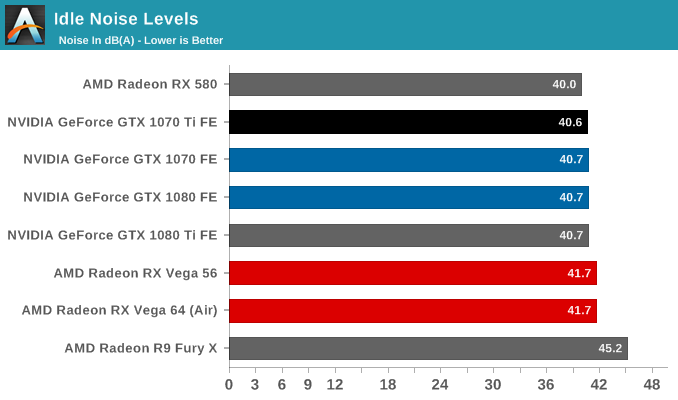

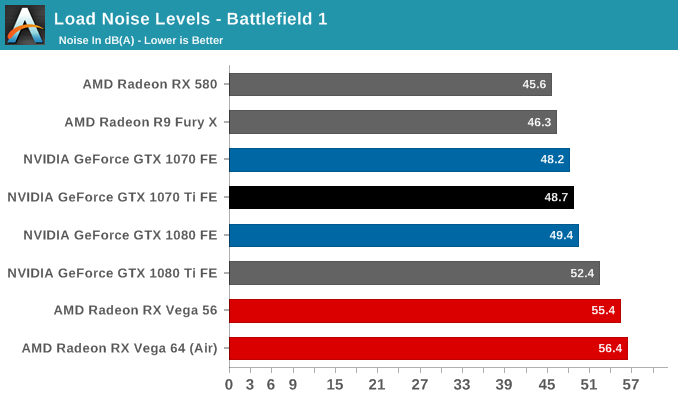

As far as heat and noise go, the GTX 1070 Ti FE has the same 83°C throttle point as the GTX 1080 and 1070 FEs and will approach it under load, though the GTX 1080's vapor chamber cooler keeps it a little cooler. The same holds for fanspeeds and noise, particularly in the eternal power virus that is FurMark. So for both temperature and noise, the GTX 1070 Ti FE sits right in between the GTX 1080 and 1070 FEs.

78 Comments


BrokenCrayons - Thursday, November 2, 2017

This review was a really good read. I also like that the game screenshots were dropped from it since they didn't exactly add much, but do eat a little of my data plan when I'm reading from a mobile device.

As for the 1070 Ti, agreed it's priced a bit too high. However, I think most of the current-gen GPUs are pushing the price envelope right now. Except maybe the 1030 of course, which has a reasonable MSRP and doesn't require a dual slot cooler. That's really the only graphics card outside of an iGPU I'd seriously consider if I were in the market at the moment, but then again I'm not playing a lot of games on a PC because I have a console and a phone for that sort of thing.

Communism - Thursday, November 2, 2017

Literally the same price as a 1070 non-Ti was a week ago.

Those cards sold so well that retailers are still gouging them to this day.

Communism - Thursday, November 2, 2017

And I should mention that the only reason that retail prices of 1070, Vega 56, and Vega 64 went down is due to the launch of 1070 Ti.

timecop1818 - Thursday, November 2, 2017

Still got that fuckin' DVI shit in 2017.

DanNeely - Thursday, November 2, 2017

Lack of a good way to run dual link DVI displays via HDMI/DP is probably keeping it around longer than originally intended. This includes both relatively old 2560x1600 displays that predate DP or HDMI 1.4 and thus could only do DL-DVI, and cheap 'Korean' 2560x1440 monitors from 2 or 3 years ago. The basic HDMI/DP-DVI adapters are single link and max out at 1920x1200. A few claim 2560x1600 by overclocking the data rate by 100% to stuff it down a single link worth of wires; this is mostly useless though since other than HDMI1.4 capable displays (which don't need this) virtually no DVI monitors can actually take a signal that fast. Active DP-DLDVI adapters can theoretically do it for $70-100, but they all came out buggy to one degree or another and sales were apparently too low to justify a new generation of hardware that fixed the issues.

Nate Oh - Saturday, November 4, 2017

This is actually precisely why I don't mind DVI too much, because I have and still use a 1-DVI-input-only Korean 1440p A- monitor from 3 years ago, overclocked to 96Hz. DVI probably needs to go away at some point soon, but maybe not too soon :)

ddferrari - Friday, November 3, 2017

So, that DVI port really ruins everything for ya? What are you, 14??

There are tons of overclockable 1440p Korean monitors out there that only have one input- DVI. Adding a DVI port doesn't increase cost, slow down performance, or increase heat levels- so what's your imaginary problem again?

Notmyusualid - Sunday, November 5, 2017

@ ddferrari

What are you - ddriver incarnate?

I'm older than 14, and I wish the DVI port wasn't there, as it is work to strip them off when I make my GPUs into single-slot water-cooled versions. Removing / modifying the bracket is one thing, but pulling out those DVI ports is another.

Silma - Thursday, November 2, 2017

How can you compare the Vega 56 to the GTX 1070 when it's 7 dB noisier and consumes up to 78 watts more?

sach1137 - Thursday, November 2, 2017

Because the MSRPs of both cards are the same. Vega 56 beats the 1070 in almost all games. Yes, it consumes more power and is noisier too. But for some people that doesn't matter; it gives 10-15% more performance than the 1070. When overclocked, you can extract more from Vega 56 too.